Ana Sabina Uban

Measuring cross-language intelligibility between Romance languages with computational tools

Feb 07, 2026

Abstract: We present an analysis of mutual intelligibility between related languages, applied to the Romance family. We introduce a novel computational metric that estimates intelligibility from lexical similarity, combining the surface and semantic similarity of related words. We use it to measure mutual intelligibility among the five main Romance languages (French, Italian, Portuguese, Spanish, and Romanian), comparing results obtained from the orthographic and phonetic forms of words, from different parallel corpora, and from different vector-space models of word meaning. The resulting intelligibility scores confirm intuitions about intelligibility asymmetry across languages and correlate significantly with the results of cloze tests in human experiments.

Generating Summaries for Scientific Paper Review

Sep 28, 2021

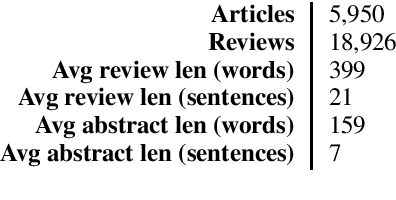

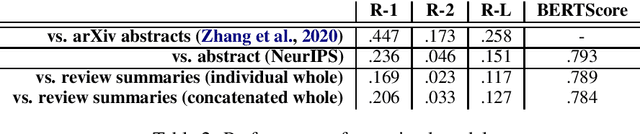

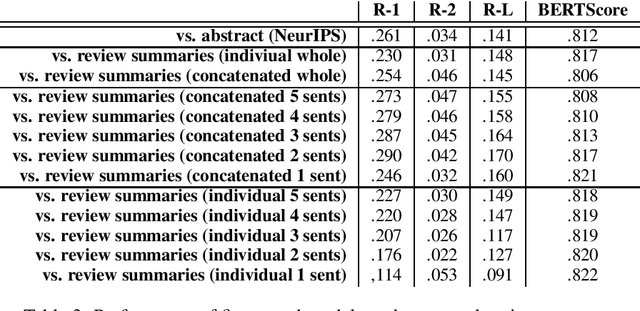

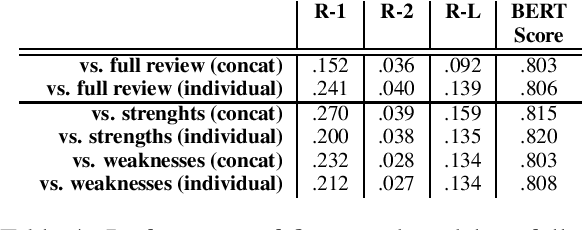

Abstract: The review process is essential to ensuring the quality of publications. The recent increase in submissions to top venues in machine learning and NLP has placed an excessive burden on reviewers, raising concerns that the overload may also degrade the quality of reviews. An automatic system that assists with the reviewing process could help alleviate the problem. In this paper, we explore automatic review summary generation for scientific papers. We posit that neural language models are promising candidates for this task. To test this hypothesis, we release a new dataset of scientific papers and their reviews, collected from papers published at the NeurIPS conference from 2013 to 2020. We evaluate state-of-the-art neural summarization models, present initial results on the feasibility of automatic review summary generation, and propose directions for future work.
