Ali Caner Türkmen

Detecting Anomalous Event Sequences with Temporal Point Processes

Jun 08, 2021

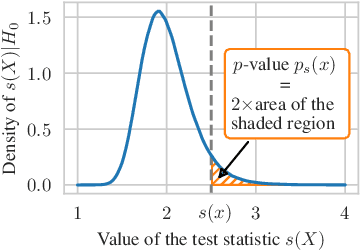

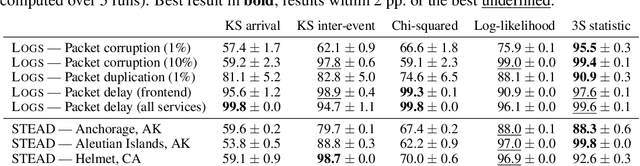

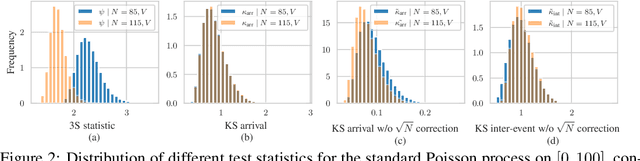

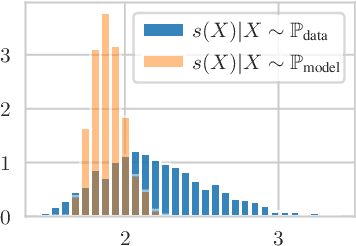

Abstract: Automatically detecting anomalies in event data can provide substantial value in domains such as healthcare, DevOps, and information security. In this paper, we frame the problem of detecting anomalous continuous-time event sequences as out-of-distribution (OoD) detection for temporal point processes (TPPs). First, we show how this problem can be approached using goodness-of-fit (GoF) tests. We then demonstrate the limitations of popular GoF statistics for TPPs and propose a new test that addresses these shortcomings. The proposed method can be combined with various TPP models, such as neural TPPs, and is easy to implement. In our experiments, we show that the proposed statistic excels both at traditional GoF testing and at detecting anomalies in simulated and real-world data.
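To make the GoF framing concrete, here is a minimal sketch of a classical baseline, not the statistic proposed in the paper. It assumes the in-distribution model is a homogeneous Poisson process on `[0, t_max]`, under which arrival times, given their count, are uniformly distributed, and it scores a sequence by the Kolmogorov-Smirnov distance to that uniform law. The function names, the rate, and the synthetic "bursty" sequence are all illustrative assumptions.

```python
import numpy as np


def ks_statistic_uniform(arrival_times, t_max):
    """KS distance between the empirical CDF of arrival times and Uniform(0, t_max)."""
    t = np.sort(np.asarray(arrival_times, dtype=float)) / t_max
    n = len(t)
    if n == 0:
        raise ValueError("need at least one event")
    # Largest gap just after each jump of the empirical CDF, and just before it.
    upper = np.arange(1, n + 1) / n - t
    lower = t - np.arange(0, n) / n
    return float(max(upper.max(), lower.max()))


def sample_poisson_process(rate, t_max, rng):
    """Sample a homogeneous Poisson process: Poisson count, then uniform arrival times."""
    n = rng.poisson(rate * t_max)
    return np.sort(rng.uniform(0.0, t_max, size=n))


rng = np.random.default_rng(0)
t_max = 100.0

# Sequence drawn from the assumed in-distribution model (rate 1 event per time unit).
in_dist = sample_poisson_process(1.0, t_max, rng)

# Hypothetical anomaly: the same expected number of events, all packed into the first quarter.
anomalous = np.sort(rng.uniform(0.0, t_max / 4, size=100))

ks_in = ks_statistic_uniform(in_dist, t_max)
ks_out = ks_statistic_uniform(anomalous, t_max)
print(f"KS (in-distribution): {ks_in:.3f}")
print(f"KS (anomalous):       {ks_out:.3f}")
```

A larger statistic indicates a worse fit, so thresholding it gives a simple anomaly detector. Because this score only looks at how events are spread over the interval, it says nothing about the event count itself, which is one reason such baselines can miss anomalies.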

Neural Temporal Point Processes: A Review

Apr 27, 2021

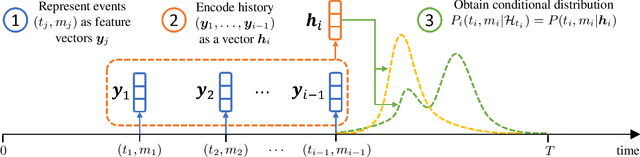

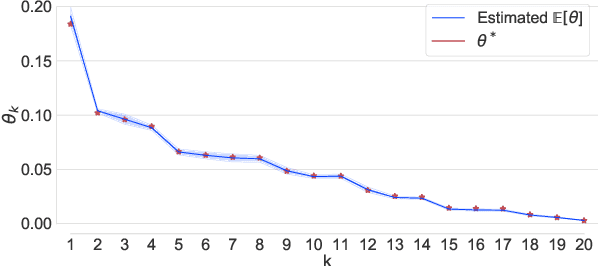

Abstract: Temporal point processes (TPPs) are probabilistic generative models for continuous-time event sequences. Neural TPPs combine fundamental ideas from the point process literature with deep learning approaches, thus enabling the construction of flexible and efficient models. The topic of neural TPPs has attracted significant attention in recent years, leading to the development of numerous new architectures and applications for this class of models. In this review paper we aim to consolidate the existing body of knowledge on neural TPPs. Specifically, we focus on important design choices and general principles for defining neural TPP models. Next, we provide an overview of application areas commonly considered in the literature. We conclude this survey with a list of open challenges and important directions for future work in the field of neural TPPs.

A Bayesian Choice Model for Eliminating Feedback Loops

Aug 21, 2019

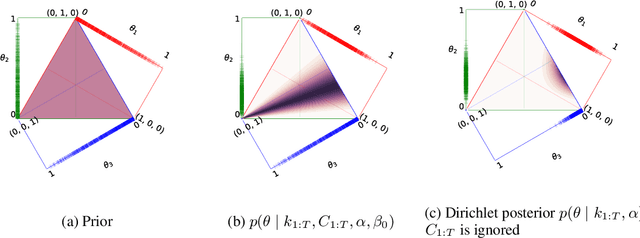

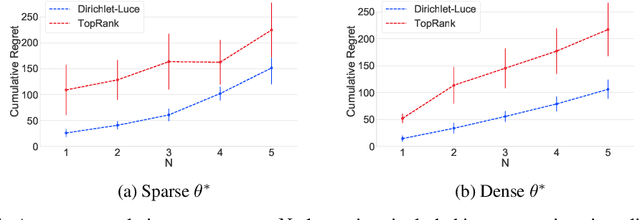

Abstract: Self-reinforcing feedback loops in personalization systems are typically caused by users choosing from a limited set of alternatives presented systematically based on previous choices. We propose a Bayesian choice model built on Luce axioms that explicitly accounts for users' limited exposure to alternatives. Our model is fair, in that it does not impose negative bias towards unpresented alternatives, and practical, in that preference estimates are accurately inferred upon observing a small number of interactions. It also allows efficient sampling, leading to a straightforward online presentation mechanism based on Thompson sampling. Our approach achieves low regret in learning to present upon exploration of only a small fraction of possible presentations. The proposed structure can be reused as a building block in interactive systems that are free of feedback loops, e.g., recommender systems.

GluonTS: Probabilistic Time Series Models in Python

Jun 14, 2019

Abstract: We introduce Gluon Time Series (GluonTS, available at https://gluon-ts.mxnet.io), a library for deep-learning-based time series modeling. GluonTS simplifies the development of and experimentation with time series models for common tasks such as forecasting or anomaly detection. It provides all necessary components and tools that scientists need for quickly building new models, for efficiently running and analyzing experiments and for evaluating model accuracy.

A Review of Nonnegative Matrix Factorization Methods for Clustering

Aug 28, 2015

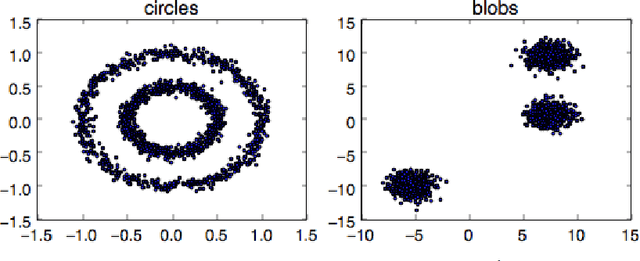

Abstract: Nonnegative Matrix Factorization (NMF) was first introduced as a low-rank matrix approximation technique, and has enjoyed a wide range of applications. Although NMF does not seem related to the clustering problem at first, it was shown that the two are closely linked. In this report, we provide a gentle introduction to clustering and NMF before reviewing the theoretical relationship between them. We then explore several NMF variants, namely Sparse NMF, Projective NMF, Nonnegative Spectral Clustering and Cluster-NMF, along with their clustering interpretations.
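The link between NMF and clustering can be illustrated with a small sketch. It uses plain NMF with the standard multiplicative updates for the Frobenius loss, not any of the variants named in the abstract, and reads a cluster label for each sample from the largest entry in its column of the coefficient matrix `H`. The synthetic data, the function name `nmf`, and all parameter values are assumptions chosen for illustration.

```python
import numpy as np


def nmf(X, rank, n_iter=500, seed=0, eps=1e-9):
    """Approximate X (features x samples) as W @ H with nonnegative W and H."""
    rng = np.random.default_rng(seed)
    n_features, n_samples = X.shape
    W = rng.random((n_features, rank)) + eps
    H = rng.random((rank, n_samples)) + eps
    for _ in range(n_iter):
        # Multiplicative updates keep both factors nonnegative.
        H *= (W.T @ X) / (W.T @ W @ H + eps)
        W *= (X @ H.T) / (W @ H @ H.T + eps)
    # Normalize columns of W so the rows of H are on a comparable scale.
    scale = W.sum(axis=0)
    W = W / scale
    H = H * scale[:, None]
    return W, H


rng = np.random.default_rng(1)
# Synthetic data: group A has its mass on features 0-1, group B on features 2-3.
A = np.vstack([rng.uniform(1, 2, (2, 10)), rng.uniform(0, 0.05, (2, 10))])
B = np.vstack([rng.uniform(0, 0.05, (2, 10)), rng.uniform(1, 2, (2, 10))])
X = np.hstack([A, B])

W, H = nmf(X, rank=2)

# Each sample is assigned to the component with the largest coefficient.
labels = H.argmax(axis=0)
print(labels)
```

Each column of `W` acts as a nonnegative cluster prototype, and `H` holds soft memberships, which is the interpretation that makes NMF usable as a clustering method.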
