BrnoCompSpeed

Papers and Code

Efficient Vision-based Vehicle Speed Estimation

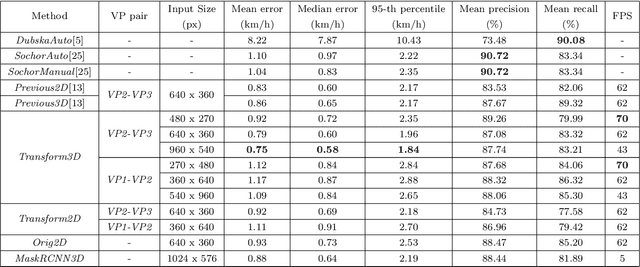

May 02, 2025

This paper presents a computationally efficient method for vehicle speed estimation from traffic camera footage. Building upon previous work that utilizes 3D bounding boxes derived from 2D detections and vanishing point geometry, we introduce several improvements to enhance real-time performance. We evaluate our method in several variants on the BrnoCompSpeed dataset in terms of vehicle detection and speed estimation accuracy. Our extensive evaluation across various hardware platforms, including edge devices, demonstrates significant gains in frames per second (FPS) compared to the prior state-of-the-art, while maintaining comparable or improved speed estimation accuracy. We analyze the trade-off between accuracy and computational cost, showing that smaller models utilizing post-training quantization offer the best balance for real-world deployment. Our best performing model beats previous state-of-the-art in terms of median vehicle speed estimation error (0.58 km/h vs. 0.60 km/h), detection precision (91.02% vs. 87.08%) and recall (91.14% vs. 83.32%) while also being 5.5 times faster.

Detection of 3D Bounding Boxes of Vehicles Using Perspective Transformation for Accurate Speed Measurement

Mar 29, 2020

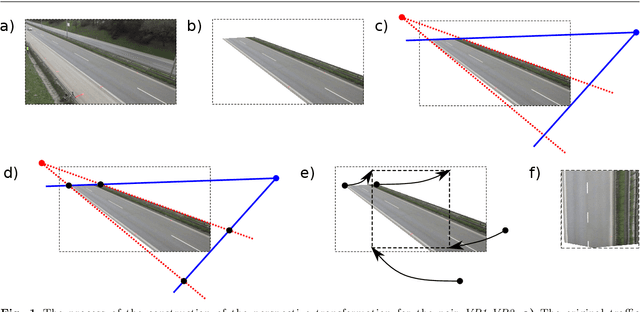

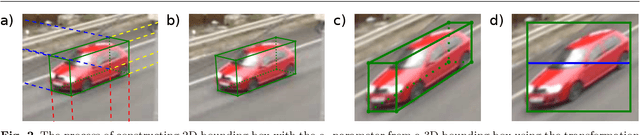

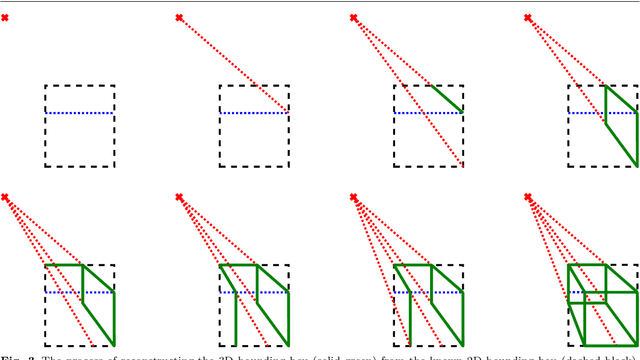

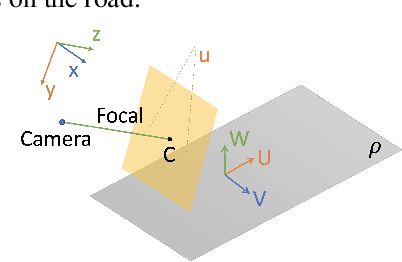

Detection and tracking of vehicles captured by traffic surveillance cameras is a key component of intelligent transportation systems. We present an improved version of our algorithm for detection of 3D bounding boxes of vehicles, their tracking and subsequent speed estimation. Our algorithm utilizes the known geometry of vanishing points in the surveilled scene to construct a perspective transformation. The transformation enables an intuitive simplification of the problem of detecting 3D bounding boxes to detection of 2D bounding boxes with one additional parameter using a standard 2D object detector. The main contribution of this paper is an improved construction of the perspective transformation, which is more robust and fully automatic, and an extended experimental evaluation of speed estimation. We test our algorithm on the speed estimation task of the BrnoCompSpeed dataset. We evaluate our approach with different configurations to gauge the relationship between accuracy and computational costs and the benefits of 3D bounding box detection over 2D detection. All of the tested configurations run in real-time and are fully automatic. Compared to other published state-of-the-art fully automatic results, our algorithm reduces the mean absolute speed measurement error by 32% (1.10 km/h to 0.75 km/h) and the absolute median error by 40% (0.97 km/h to 0.58 km/h).
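The last step named in the abstract above, turning a tracked vehicle into a speed, can be illustrated with a minimal sketch. This is not the authors' implementation. It assumes the track has already been projected onto the road plane in metres (which is what a calibrated camera provides), and the function name `track_speed_kmh`, the `step` parameter, and the choice of a median over the track are all assumptions made for this example.

```python
import math
from statistics import median


def track_speed_kmh(points_m, fps, step=5):
    """Estimate a vehicle's speed in km/h from one track.

    points_m: list of (x, y) road-plane positions in metres, one per frame
              (hypothetical input; assumes calibration was applied upstream).
    fps:      video frame rate in frames per second.
    step:     number of frames between the two positions being compared.
    """
    if fps <= 0 or step < 1:
        raise ValueError("fps must be positive and step must be at least 1")

    speeds = []
    for i in range(len(points_m) - step):
        (x0, y0), (x1, y1) = points_m[i], points_m[i + step]
        dist_m = math.hypot(x1 - x0, y1 - y0)  # distance travelled on the road plane
        dt_s = step / fps                      # elapsed time between the two frames
        speeds.append(dist_m / dt_s * 3.6)     # m/s -> km/h

    if not speeds:
        raise ValueError("track is too short for the chosen step")

    # The median damps outliers caused by jittery detections.
    return median(speeds)


# A vehicle advancing 1 m per frame at 25 FPS travels 25 m/s.
track = [(0.0, float(i)) for i in range(20)]
speed = track_speed_kmh(track, fps=25)
```

Comparing positions several frames apart, rather than consecutive frames, keeps small localisation errors from dominating the distance estimate; the value of `step` here is arbitrary.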

Traffic Danger Recognition With Surveillance Cameras Without Training Data

Nov 29, 2018

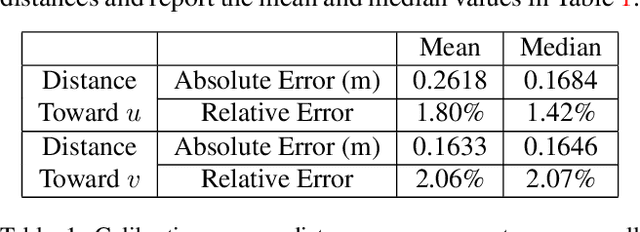

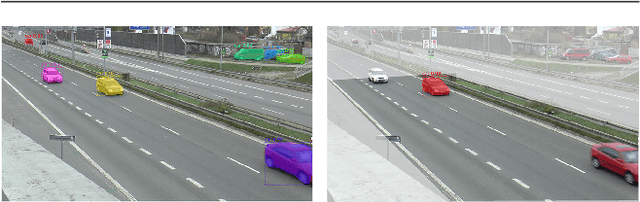

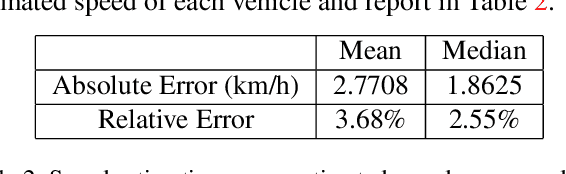

We propose a traffic danger recognition model that works with arbitrary traffic surveillance cameras to identify and predict car crashes. There are too many cameras to monitor manually. Therefore, we developed a model to predict and identify car crashes from surveillance cameras based on a 3D reconstruction of the road plane and prediction of trajectories. For normal traffic, it supports real-time proactive safety checks of speeds and distances between vehicles to provide insights about possible high-risk areas. We achieve good prediction and recognition of car crashes without using any labeled training data of crashes. Experiments on the BrnoCompSpeed dataset show that our model can accurately monitor the road, with mean errors of 1.80% for distance measurement, 2.77 km/h for speed measurement, 0.24 m for car position prediction, and 2.53 km/h for speed prediction.

Traffic Surveillance Camera Calibration by 3D Model Bounding Box Alignment for Accurate Vehicle Speed Measurement

Jun 01, 2017

In this paper, we focus on fully automatic traffic surveillance camera calibration, which we use for speed measurement of passing vehicles. We improve over a recent state-of-the-art camera calibration method for traffic surveillance based on two detected vanishing points. More importantly, we propose a novel automatic scene scale inference method. The method is based on matching bounding boxes of rendered 3D models of vehicles with detected bounding boxes in the image. The proposed method can be used from arbitrary viewpoints, since it has no constraints on camera placement. We evaluate our method on BrnoCompSpeed, a recent comprehensive dataset for speed measurement. Experiments show that our automatic camera calibration method by detection of two vanishing points reduces error by 50% (mean distance ratio error reduced from 0.18 to 0.09) compared to the previous state-of-the-art method. We also show that our scene scale inference method is more precise, outperforming both the state-of-the-art automatic calibration method for speed measurement (error reduction by 86%, from 7.98 km/h to 1.10 km/h) and manual calibration (error reduction by 19%, from 1.35 km/h to 1.10 km/h). We also present qualitative results of the proposed automatic camera calibration method on video sequences obtained from real surveillance cameras in various places and under different lighting conditions (night, dawn, day).
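The percentage reductions quoted in this abstract follow from the before and after errors by the usual relative-change formula. The short check below reproduces them; the helper name `relative_reduction_pct` is hypothetical, and the inputs are the figures stated in the abstract.

```python
def relative_reduction_pct(before, after):
    """Relative error reduction, in percent, when an error drops from `before` to `after`."""
    if before <= 0:
        raise ValueError("the baseline error must be positive")
    return (before - after) / before * 100.0


# (baseline error, new error) pairs as stated in the abstract.
reported = {
    "calibration, mean distance ratio error": (0.18, 0.09),
    "speed error vs. automatic calibration (km/h)": (7.98, 1.10),
    "speed error vs. manual calibration (km/h)": (1.35, 1.10),
}

reductions = {
    name: round(relative_reduction_pct(before, after))
    for name, (before, after) in reported.items()
}

for name, pct in reductions.items():
    print(f"{name}: {pct}% reduction")
```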
