Understanding and Comparing Deep Neural Networks for Age and Gender Classification

Paper and Code

Aug 25, 2017

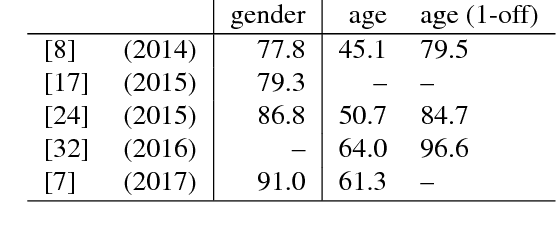

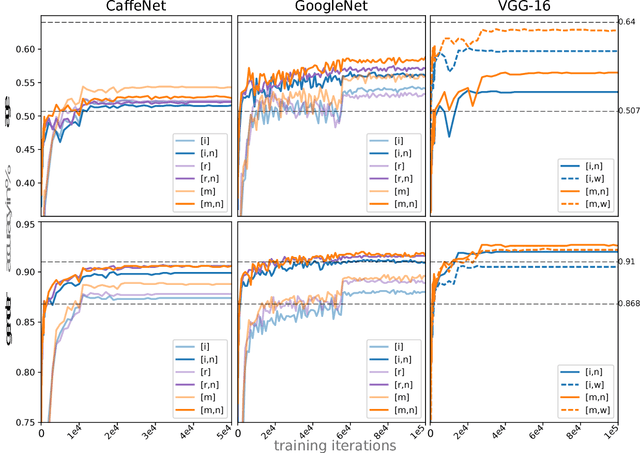

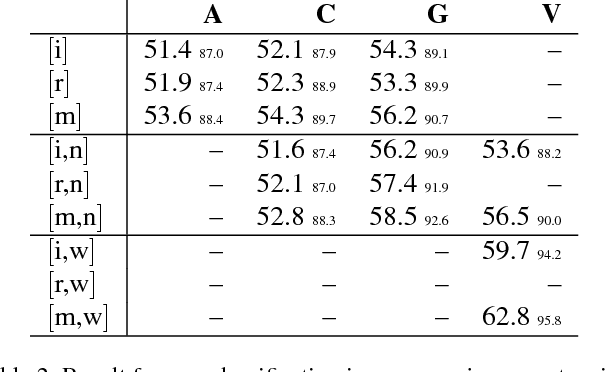

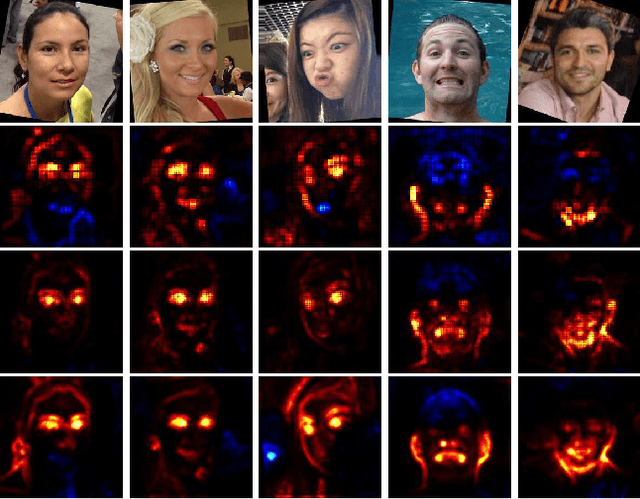

Recently, deep neural networks have demonstrated excellent performances in recognizing the age and gender on human face images. However, these models were applied in a black-box manner with no information provided about which facial features are actually used for prediction and how these features depend on image preprocessing, model initialization and architecture choice. We present a study investigating these different effects. In detail, our work compares four popular neural network architectures, studies the effect of pretraining, evaluates the robustness of the considered alignment preprocessings via cross-method test set swapping and intuitively visualizes the model's prediction strategies in given preprocessing conditions using the recent Layer-wise Relevance Propagation (LRP) algorithm. Our evaluations on the challenging Adience benchmark show that suitable parameter initialization leads to a holistic perception of the input, compensating artefactual data representations. With a combination of simple preprocessing steps, we reach state of the art performance in gender recognition.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge