Nonlinear Matrix Approximation with Radial Basis Function Components

Paper and Code

Jun 23, 2021

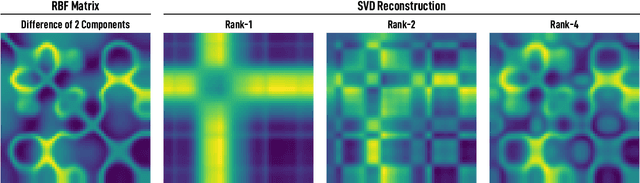

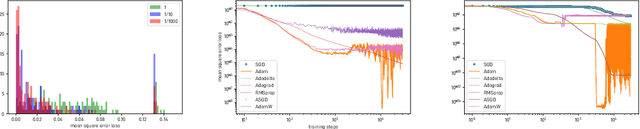

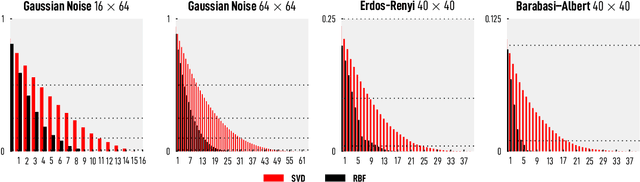

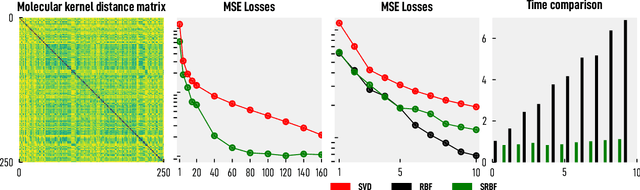

We introduce and investigate matrix approximation by decomposition into a sum of radial basis function (RBF) components. An RBF component is a generalization of the outer product between a pair of vectors, where an RBF function replaces the scalar multiplication between individual vector elements. Even though the RBF functions are positive definite, the summation across components is not restricted to convex combinations and allows us to compute the decomposition for any real matrix that is not necessarily symmetric or positive definite. We formulate the problem of seeking such a decomposition as an optimization problem with a nonlinear and non-convex loss function. Several modern versions of the gradient descent method, including their scalable stochastic counterparts, are used to solve this problem. We provide extensive empirical evidence of the effectiveness of the RBF decomposition and that of the gradient-based fitting algorithm. While being conceptually motivated by singular value decomposition (SVD), our proposed nonlinear counterpart outperforms SVD by drastically reducing the memory required to approximate a data matrix with the same L2 error for a wide range of matrix types. For example, it leads to 2 to 6 times memory save for Gaussian noise, graph adjacency matrices, and kernel matrices. Moreover, this proximity-based decomposition can offer additional interpretability in applications that involve, e.g., capturing the inner low-dimensional structure of the data, retaining graph connectivity structure, and preserving the acutance of images.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge