Adaptivity to Noise Parameters in Nonparametric Active Learning

Paper and Code

Mar 16, 2017

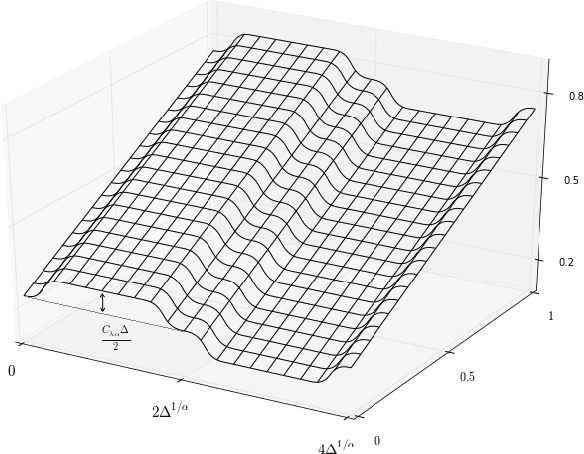

This work addresses various open questions in the theory of active learning for nonparametric classification. Our contributions are both statistical and algorithmic: -We establish new minimax-rates for active learning under common \textit{noise conditions}. These rates display interesting transitions -- due to the interaction between noise \textit{smoothness and margin} -- not present in the passive setting. Some such transitions were previously conjectured, but remained unconfirmed. -We present a generic algorithmic strategy for adaptivity to unknown noise smoothness and margin; our strategy achieves optimal rates in many general situations; furthermore, unlike in previous work, we avoid the need for \textit{adaptive confidence sets}, resulting in strictly milder distributional requirements.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge