Zhexiong Shang

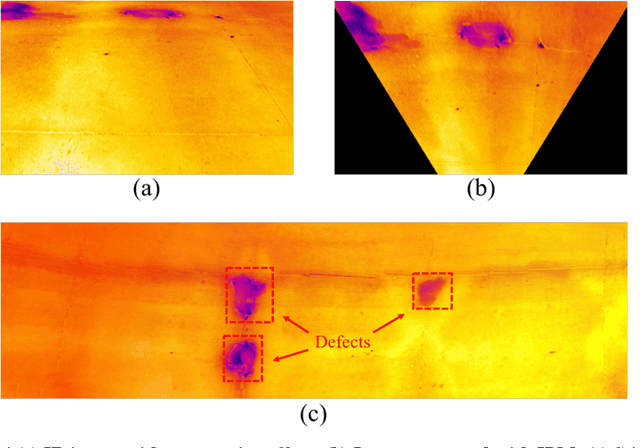

CNN-Based Deep Architecture for Reinforced Concrete Delamination Segmentation Through Thermography

Apr 11, 2019

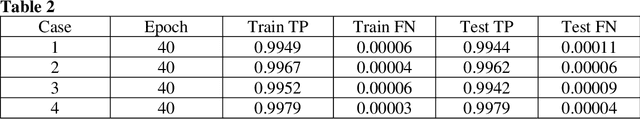

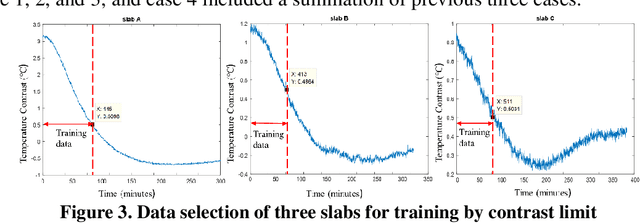

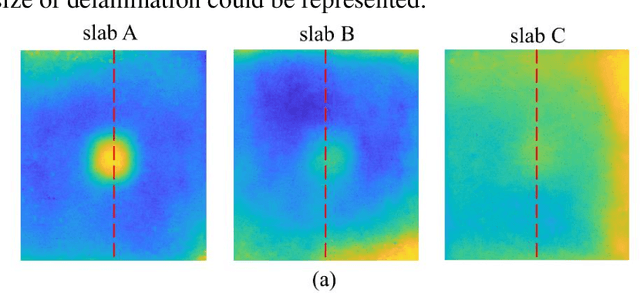

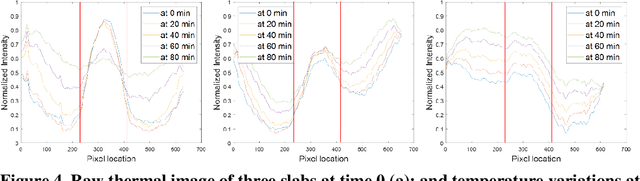

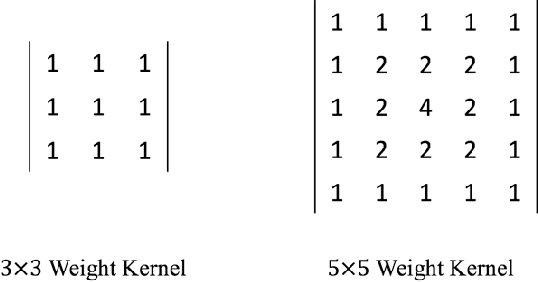

Abstract: Delamination assessment of the bridge deck plays a vital role in bridge health monitoring. Thermography, as one of the nondestructive technologies for delamination detection, has the advantage of efficient data acquisition. However, interpreting the data for accurate delamination shape profiling remains challenging. Due to environmental variation and the irregular size and depth of delaminations, conventional processing methods based on temperature contrast fall short in accurately segmenting delamination. Inspired by recent developments in deep learning architectures for image segmentation, a Convolutional Neural Network (CNN) based framework was investigated for its applicability to delamination segmentation under variations in temperature contrast and shape diffusion. The models were developed based on the Dense Convolutional Network (DenseNet) and trained on thermal images collected for mimicked delamination in concrete slabs at different depths under an experimental setup. The results suggested satisfactory performance in accurately profiling the delamination shapes.
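To make the "conventional processing methods based on temperature contrast" concrete, here is a minimal sketch of one such baseline: a global threshold on a thermal frame. It is not the paper's CNN method. The function name `contrast_threshold_mask`, the parameter `k`, and the synthetic temperatures are all assumptions made for illustration.

```python
from statistics import mean, pstdev


def contrast_threshold_mask(thermal, k=2.0):
    """Flag pixels warmer than the frame mean by more than k standard deviations.

    `thermal` is a 2D list of temperatures (hypothetical input format).
    Returns a 2D list of 0/1 labels with the same shape.
    """
    values = [t for row in thermal for t in row]
    cutoff = mean(values) + k * pstdev(values)
    return [[1 if t > cutoff else 0 for t in row] for row in thermal]


# Synthetic 4x4 frame: a warm 2x2 patch (about 22.5 C) on a 20 C background.
frame = [
    [20.0, 20.1, 20.0, 19.9],
    [20.1, 22.5, 22.4, 20.0],
    [20.0, 22.6, 22.5, 20.1],
    [19.9, 20.0, 20.1, 20.0],
]
mask = contrast_threshold_mask(frame, k=1.0)
for row in mask:
    print(row)
```

A single global cutoff like this depends on the overall temperature distribution of the frame, so ambient drift or the weaker contrast of a deeper defect can shift or hide the detected region, which is the limitation the abstract attributes to contrast-based methods.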

Indoor Testing and Simulation Platform for Close-distance Visual Inspection of Complex Structures using Micro Quadrotor UAV

Apr 10, 2019

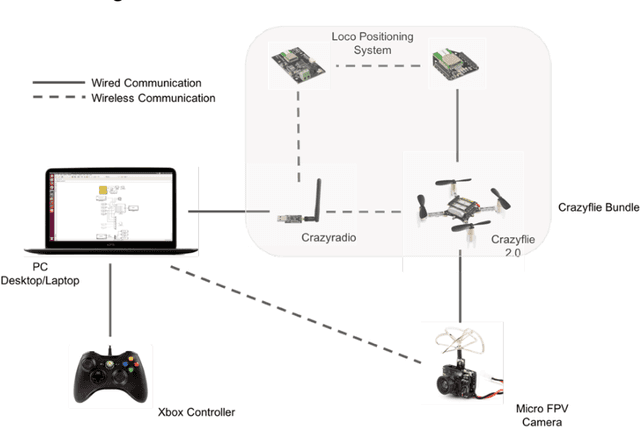

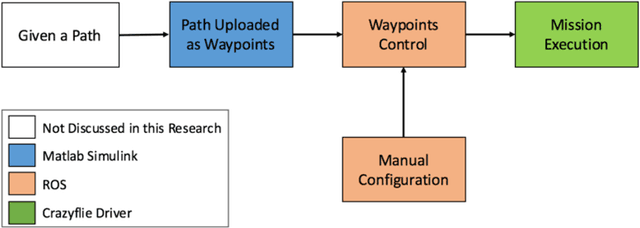

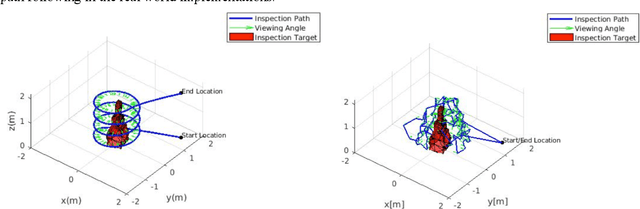

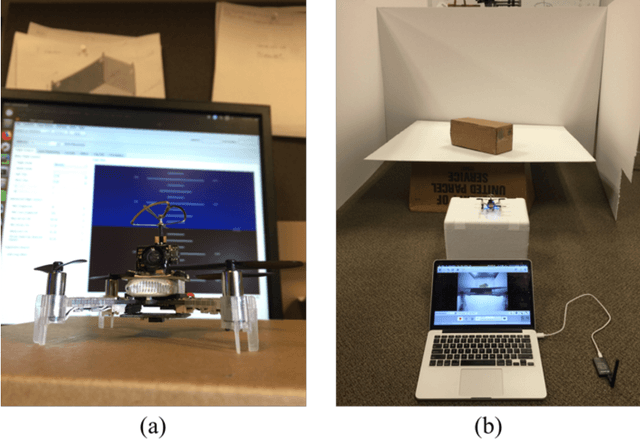

Abstract: In recent years, using drones, also known as unmanned aerial vehicles (UAVs), for close-distance visual inspection has become an active area in many disciplines. However, many challenges remain before autonomous inspection can be achieved, especially when inspecting complex structures. Complex civil structures, such as bridges, dams, and wind turbines, are large-scale and geometrically complicated. Inspecting them requires sophisticated path planning algorithms to achieve close-distance inspection and, at the same time, avoid collisions. In practice, directly deploying path planning results on such structures is error-prone, costly, and hazardous. In this paper, relying on a micro quadrotor UAV, the authors present an affordable experimental platform for testing drone-based path planning results. The platform allows users to conduct many path planning experiments at any time without worrying about expensive and time-consuming outdoor test flights. The platform is built on the Crazyflie bundle, which includes the Crazyflie 2.0 quadrotor, the Crazyradio, and the Loco Positioning System (LPS). With an onboard micro FPV camera, visual data can be streamed live to the host computer during flight. Manual configuration and waypoint control functions are explicitly designed into the platform to increase its flexibility and performance in path following and debugging. To evaluate the practicability of the proposed test platform, two existing drone-based path planning algorithms are tested. The results show that, even though a certain level of error exists, the quality of the visual data and the accuracy of path following are high enough to simulate most practical inspection applications.

Vision-model-based Real-time Localization of Unmanned Aerial Vehicle for Autonomous Structure Inspection under GPS-denied Environment

Apr 10, 2019

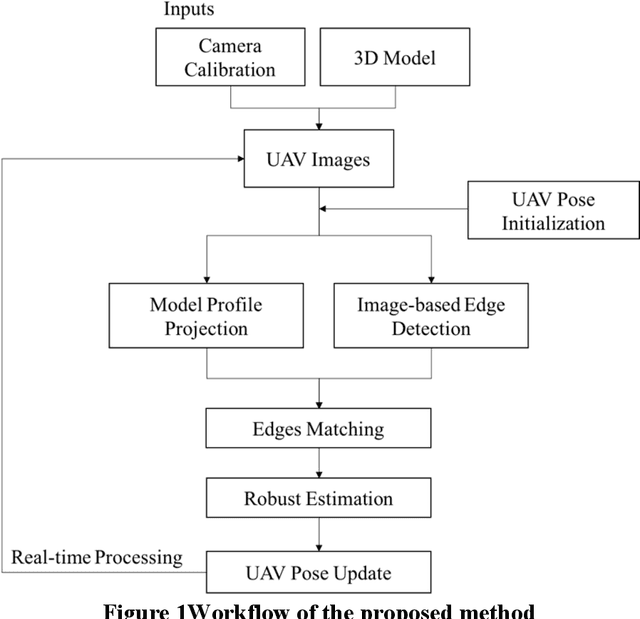

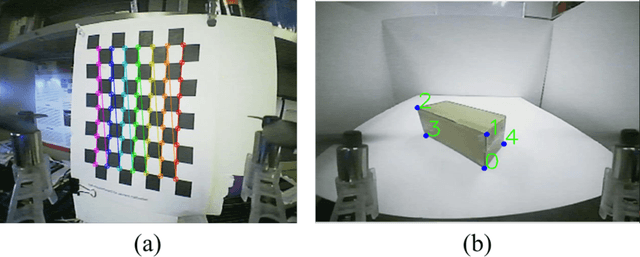

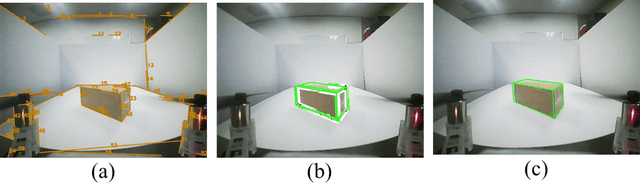

Abstract: UAVs have been widely used in visual inspections of buildings, bridges, and other structures. In outdoor autonomous or semi-autonomous flight missions, a strong GPS signal is vital for a UAV to locate its own position. However, a strong GPS signal is not always available, and it can degrade or be fully lost underneath large structures or close to power lines, which can cause serious control issues or even UAV crashes. Such limitations severely restrict the application of UAVs as a routine inspection tool in various domains. In this paper, a vision-model-based real-time self-positioning method is proposed to support autonomous aerial inspection without the need for GPS support. Compared to other localization methods that require additional onboard sensors, the proposed method uses a single camera to continuously estimate the in-flight poses of the UAV. Each step of the proposed method is discussed in detail, and its performance is tested through an indoor test case.

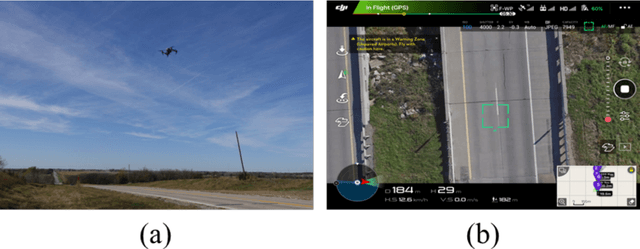

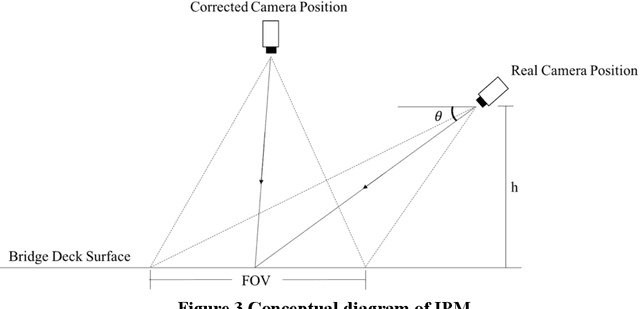

A Data Fusion Platform for Supporting Bridge Deck Condition Monitoring by Merging Aerial and Ground Inspection Imagery

Apr 10, 2019

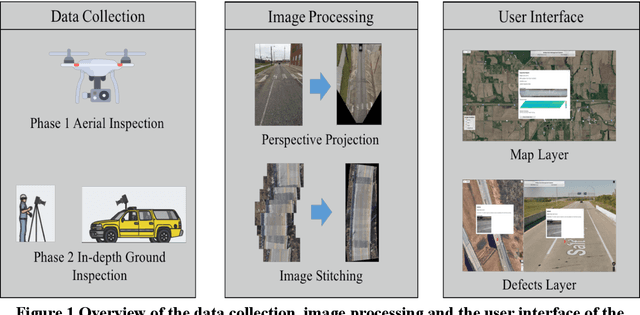

Abstract: UAVs have shown great efficiency in scanning bridge deck surfaces, either by taking a single shot or by stitching together several overlapping still images. If potential surface defects are identified in the aerial images, subsequent ground inspections can be scheduled. This two-phase inspection procedure has shown great potential for increasing field inspection productivity. Since aerial and ground inspection images are taken at different scales, a tool to properly fuse these multi-scale images is needed to improve current bridge deck condition monitoring practice. In response to this need, a data fusion platform is introduced in this study. Using the proposed platform, multi-scale images taken by different inspection devices can be fused through geo-referencing. As part of the platform, a web-based user interface is developed to organize and visualize those images with inspection notes in response to user queries. For illustration purposes, a case study involving multi-scale optical and infrared images from a UAV and a ground inspector, and its implementation using the proposed platform, is presented.
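As a rough illustration of fusing multi-scale images through geo-referencing, the sketch below maps a pixel in a coarse aerial image to the matching pixel in a fine ground image via a shared world frame. It is not the platform's implementation. The `GeoImage` class, its fields, and all numbers are hypothetical, and the sketch assumes north-up images with no rotation in a flat local metric frame.

```python
from dataclasses import dataclass


@dataclass
class GeoImage:
    """A north-up image placed in a local metric frame (hypothetical model)."""
    name: str
    origin_x: float  # world x of the top-left pixel, in metres
    origin_y: float  # world y of the top-left pixel, in metres
    gsd: float       # ground sample distance, metres per pixel
    width: int       # image width in pixels
    height: int      # image height in pixels

    def pixel_to_world(self, col, row):
        # Rows grow downward in the image while world y grows upward.
        return (self.origin_x + col * self.gsd, self.origin_y - row * self.gsd)

    def world_to_pixel(self, x, y):
        return ((x - self.origin_x) / self.gsd, (self.origin_y - y) / self.gsd)

    def contains(self, x, y):
        col, row = self.world_to_pixel(x, y)
        return 0 <= col < self.width and 0 <= row < self.height


# Coarse aerial image (2 cm/pixel) and fine ground image (1 mm/pixel).
aerial = GeoImage("aerial", 0.0, 30.0, 0.02, 1000, 1500)
ground = GeoImage("ground", 5.0, 12.0, 0.001, 2000, 2000)

# A suspect spot marked in the aerial image, looked up in the ground image.
x, y = aerial.pixel_to_world(300, 950)
if ground.contains(x, y):
    col, row = ground.world_to_pixel(x, y)
    print(f"world=({x:.3f}, {y:.3f}) ground pixel=({col:.1f}, {row:.1f})")
```

Because both images resolve to the same world coordinates, a query by location can return every image covering that point regardless of scale, which is the kind of lookup a geo-referenced interface needs.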

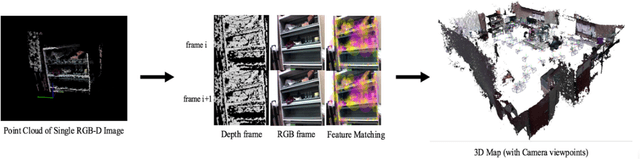

Real-time 3D Reconstruction on Construction Site using Visual SLAM and UAV

Dec 19, 2017

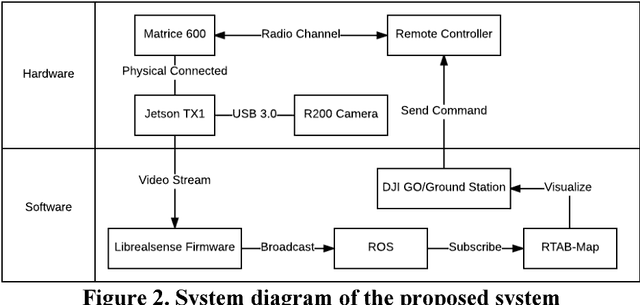

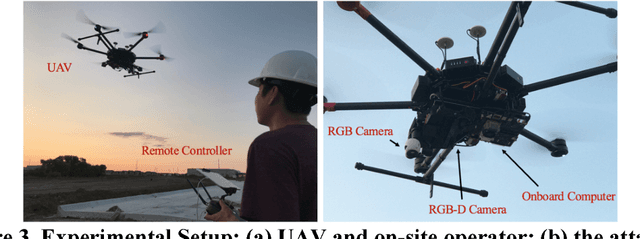

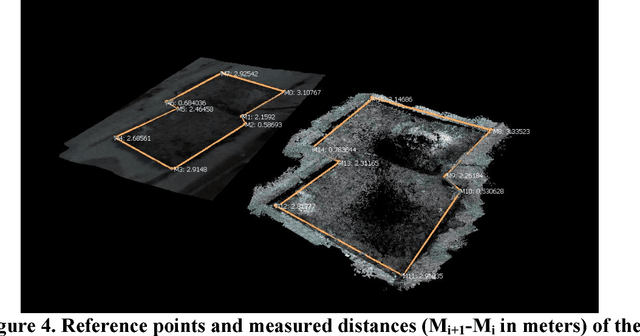

Abstract: 3D reconstruction can be used as a platform to monitor the performance of activities on construction sites, such as construction progress monitoring, structure inspection, and post-disaster rescue. Compared to other sensors, RGB imagery has the advantages of being low-cost, texture-rich, and easy to implement, and it has been used as the primary method for 3D reconstruction in the construction industry. However, image-based 3D reconstruction always requires extended time to acquire and/or process the image data, which limits its application to time-critical projects. Recent progress in Visual Simultaneous Localization and Mapping (SLAM) makes it possible to reconstruct a 3D map of a construction site in real time. When integrated with an Unmanned Aerial Vehicle (UAV), obstructed areas that are inaccessible to ground equipment can also be sensed. Despite these advantages of visual SLAM and UAVs, such techniques have not yet been fully investigated on construction sites. Therefore, the objective of this research is to present a pilot study of using visual SLAM and a UAV for real-time construction site reconstruction. The system architecture and the experimental setup are introduced, and the preliminary results and potential applications of visual SLAM and UAVs on construction sites are discussed.

Multi-point Vibration Measurement for Mode Identification of Bridge Structures using Video-based Motion Magnification

Dec 18, 2017

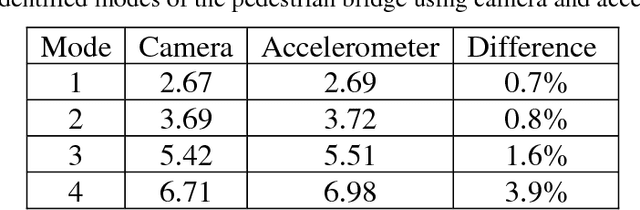

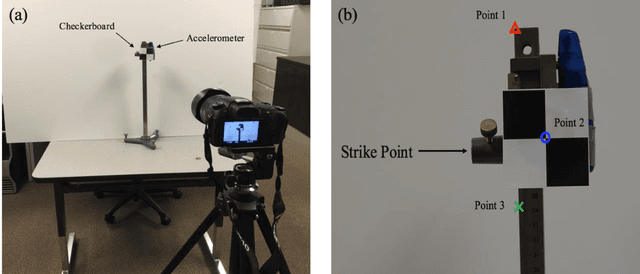

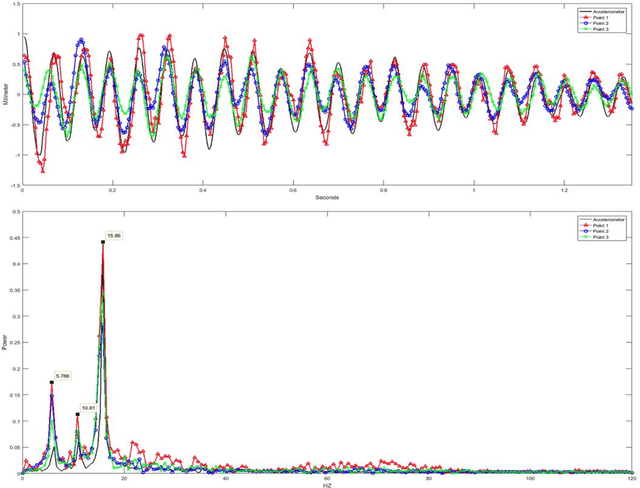

Abstract: Image-based vibration mode identification has gained increasing attention in the civil and construction communities. A recent video-based motion magnification method was developed to measure and visualize small structural motions. This new approach offers potential for low-cost vibration measurement and mode shape identification. Pilot studies using this approach on simple rigid-body structures have been reported, but its validity on complex outdoor structures has not been investigated. The objective is to investigate the capacity of the video-based motion magnification approach to measure the modal frequencies and visualize the mode shapes of complex steel bridges. A novel method that increases the performance of current motion magnification for efficient structural modal analysis is introduced. This method was tested in both indoor and outdoor environments for validation. The results of the investigation show that motion magnification can be an efficient tool for modal analysis of complex bridge structures. With the developed method, the modal frequencies of multiple structures are measured simultaneously and the mode shapes of each structure are visualized automatically.
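For readers unfamiliar with how a modal frequency is read off a measured motion signal, the sketch below picks the strongest spectral peak of a displacement time series using a plain discrete Fourier transform. This is a generic peak-picking step, not the paper's motion magnification method. The function name `dominant_frequency`, the 60 fps frame rate, and the synthetic signal are assumptions for illustration.

```python
import cmath
import math


def dominant_frequency(signal, sample_rate):
    """Return the frequency (Hz) of the largest non-DC DFT component."""
    n = len(signal)
    mean = sum(signal) / n
    centered = [s - mean for s in signal]  # remove the static offset
    best_k, best_mag = 0, 0.0
    for k in range(1, n // 2 + 1):  # positive frequencies up to Nyquist
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(centered)
        )
        if abs(coeff) > best_mag:
            best_k, best_mag = k, abs(coeff)
    return best_k * sample_rate / n


# Synthetic displacement of one tracked point: 2 s of video at 60 fps,
# with a strong 4.5 Hz component and a weaker 12 Hz component.
fps = 60.0
displacement = [
    math.sin(2 * math.pi * 4.5 * i / fps) + 0.3 * math.sin(2 * math.pi * 12.0 * i / fps)
    for i in range(120)
]
peak_hz = dominant_frequency(displacement, fps)
print(f"dominant frequency: {peak_hz:.2f} Hz")
```

The frequency resolution of this approach is the sample rate divided by the number of samples (0.5 Hz here), so longer recordings are needed to separate closely spaced modes.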
