Yudong Cao

Quantum Computing-Enhanced Algorithm Unveils Novel Inhibitors for KRAS

Feb 13, 2024

Abstract: The discovery of small molecules with therapeutic potential is a long-standing challenge in chemistry and biology. Researchers have increasingly leveraged novel computational techniques to streamline the drug development process to increase hit rates and reduce the costs associated with bringing a drug to market. To this end, we introduce a quantum-classical generative model that seamlessly integrates the computational power of quantum algorithms trained on a 16-qubit IBM quantum computer with the established reliability of classical methods for designing small molecules. Our hybrid generative model was applied to designing new KRAS inhibitors, a crucial target in cancer therapy. We synthesized 15 promising molecules during our investigation and subjected them to experimental testing to assess their ability to engage with the target. Notably, among these candidates, two molecules, ISM061-018-2 and ISM061-22, each featuring unique scaffolds, stood out by demonstrating effective engagement with KRAS. ISM061-018-2 was identified as a broad-spectrum KRAS inhibitor, exhibiting a binding affinity to KRAS-G12D at $1.4 \mu M$. Concurrently, ISM061-22 exhibited specific mutant selectivity, displaying heightened activity against KRAS G12R and Q61H mutants. To our knowledge, this work shows for the first time the use of a quantum-generative model to yield experimentally confirmed biological hits, showcasing the practical potential of quantum-assisted drug discovery to produce viable therapeutics. Moreover, our findings reveal that the efficacy of distribution learning correlates with the number of qubits utilized, underlining the scalability potential of quantum computing resources. Overall, we anticipate our results to be a stepping stone towards developing more advanced quantum generative models in drug discovery.

Research on Sparsity Measures for Rotating Machinery Health Monitoring

Apr 30, 2022

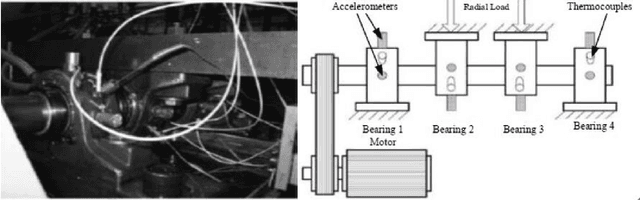

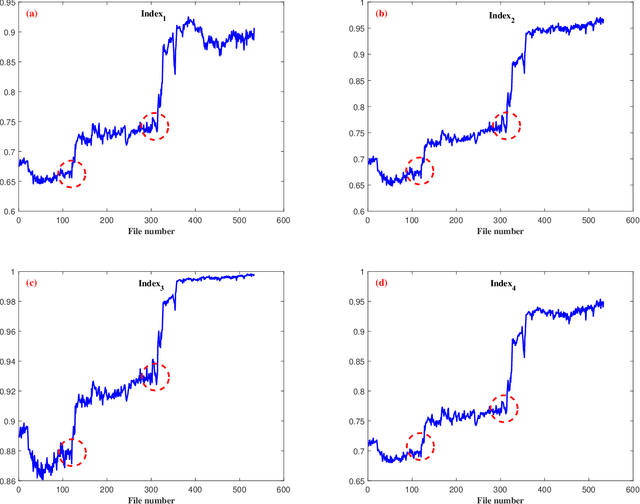

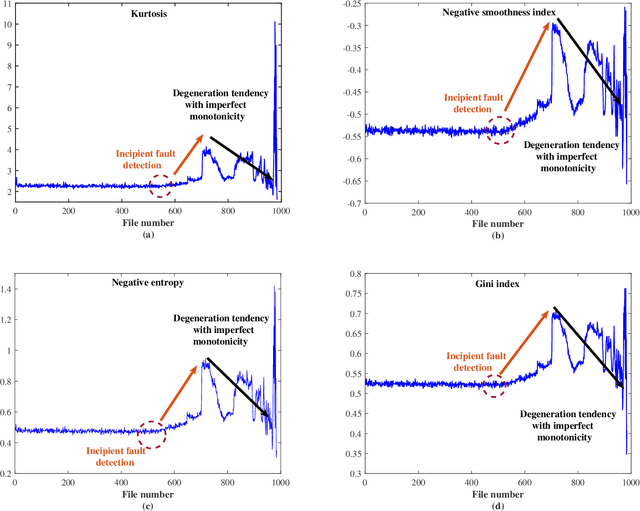

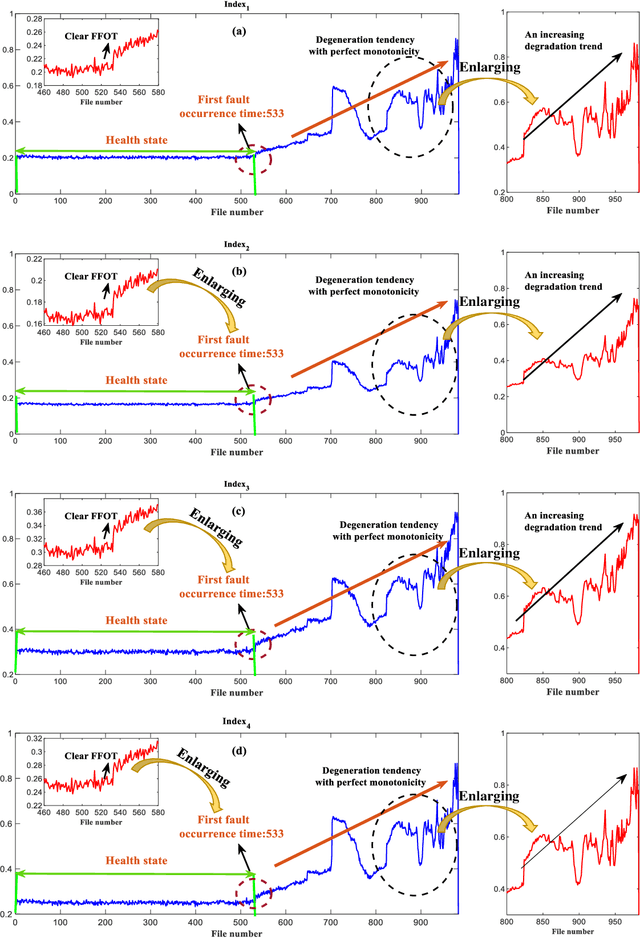

Abstract: Machine health management is one of the main research topics in prognostics and health management (PHM); it aims to monitor the health states of machines online and evaluate degradation stages from real-time sensor data. In recent years, classic sparsity measures such as kurtosis, Lp/Lq norm, pq-mean, smoothness index, negative entropy, and Gini index have been widely used to characterize the impulsivity of repetitive transients. However, the sparsity properties of smoothness index and negative entropy have not been fully analyzed since these two measures were proposed. The first contribution of this paper is an analysis of six properties of smoothness index and negative entropy. In addition, this paper conducts a thorough investigation of multivariate power mean functions (MPMFs) and finds that each of the existing classical sparsity measures can be reformulated as a ratio of MPMFs. Finally, a general paradigm of index design is proposed for expanding the family of sparsity measures, and several newly designed dimensionless health indexes are given as examples. Two different run-to-failure bearing datasets were used to analyze and validate the capabilities and advantages of the newly designed health indexes. Experimental results show that the newly designed health indexes perform well in terms of monotonic degradation description, first fault occurrence time determination, and degradation state assessment.
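As a rough illustration of the "ratio of power means" idea mentioned in the abstract, the sketch below writes two of the listed measures (kurtosis and smoothness index) in terms of a single power-mean helper. It is a minimal sketch under stated assumptions, not the paper's formulation: the function names are hypothetical, kurtosis is taken in its non-centered form, the smoothness index is taken as geometric mean over arithmetic mean of the magnitudes, and all samples are assumed to be nonzero so that the geometric mean is defined.

```python
import math


def power_mean(values, p):
    """Power mean of the magnitudes |x_i| with exponent p.

    p = 0 is treated as the limiting case, the geometric mean
    (assumes every sample is nonzero).
    """
    mags = [abs(v) for v in values]
    n = len(mags)
    if p == 0:
        return math.exp(sum(math.log(m) for m in mags) / n)
    return (sum(m ** p for m in mags) / n) ** (1.0 / p)


def kurtosis(values):
    """Non-centered kurtosis (an assumption): mean(x^4) / mean(x^2)^2."""
    n = len(values)
    m4 = sum(v ** 4 for v in values) / n
    m2 = sum(v ** 2 for v in values) / n
    return m4 / m2 ** 2


def kurtosis_from_power_means(values):
    """The same quantity written as a ratio of power means: (M_4 / M_2)^4."""
    return (power_mean(values, 4) / power_mean(values, 2)) ** 4


def smoothness_index(values):
    """Geometric mean over arithmetic mean of the magnitudes: M_0 / M_1."""
    return power_mean(values, 0) / power_mean(values, 1)


# Hypothetical toy signals: a flat one and one with a single large impulse.
flat = [1.0] * 8
impulsive = [0.1] * 7 + [5.0]

for name, signal in (("flat", flat), ("impulsive", impulsive)):
    print(name, kurtosis(signal), kurtosis_from_power_means(signal),
          smoothness_index(signal))
```

On these toy signals the two kurtosis expressions agree, kurtosis grows with impulsivity, and the smoothness index shrinks, which is the qualitative behavior a sparsity measure is expected to show.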

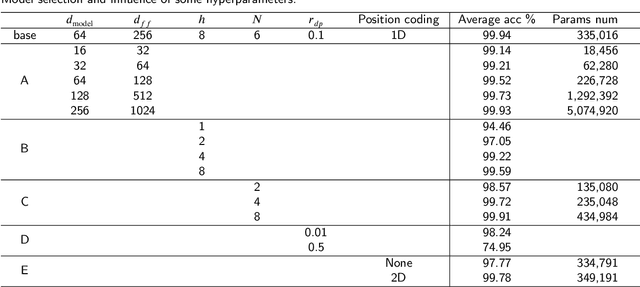

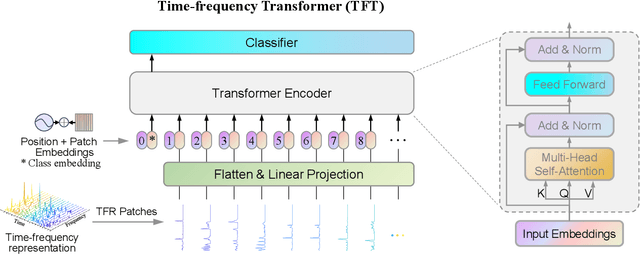

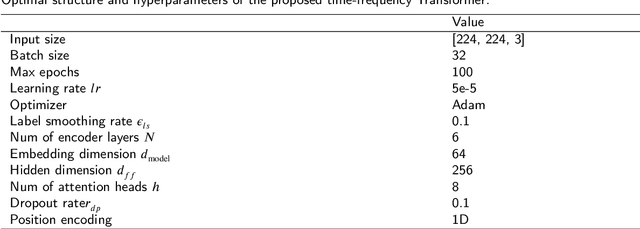

A novel Time-frequency Transformer and its Application in Fault Diagnosis of Rolling Bearings

Apr 19, 2021

Abstract: The scope of data-driven fault diagnosis models is greatly improved through deep learning (DL). However, the classical convolution and recurrent structure have their defects in computational efficiency and feature representation, while the latest Transformer architecture based on attention mechanism has not been applied in this field. To solve these problems, we propose a novel time-frequency Transformer (TFT) model inspired by the massive success of standard Transformer in sequence processing. Specifically, we design a new tokenizer and encoder module to extract effective abstractions from the time-frequency representation (TFR) of vibration signals. On this basis, a new end-to-end fault diagnosis framework based on time-frequency Transformer is presented in this paper. Through the case studies on bearing experimental datasets, we constructed the optimal Transformer structure and verified the performance of the diagnostic method. The superiority of the proposed method is demonstrated in comparison with the benchmark model and other state-of-the-art methods.

Quantum Neuron: an elementary building block for machine learning on quantum computers

Nov 30, 2017

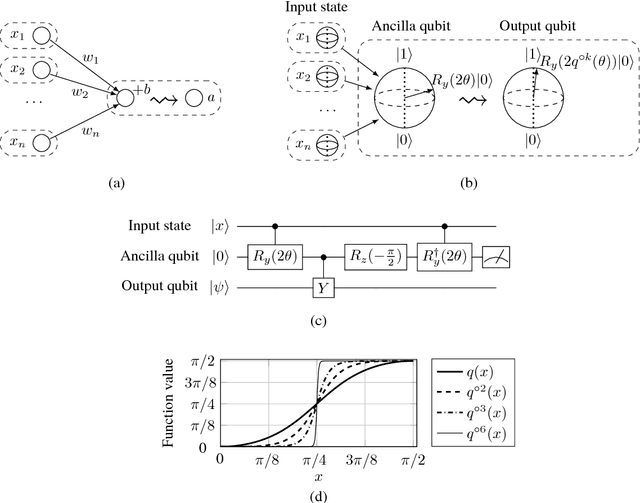

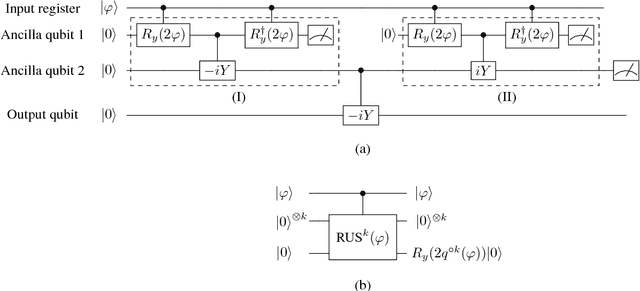

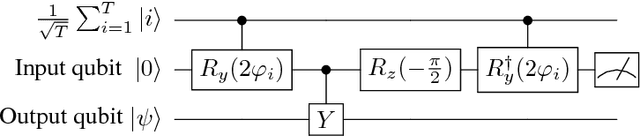

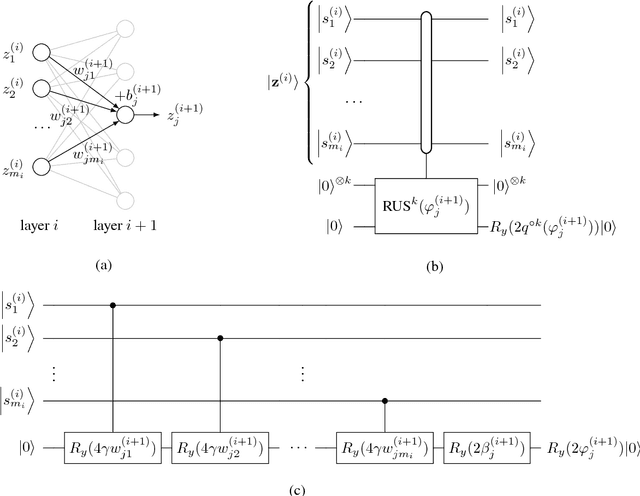

Abstract: Even the most sophisticated artificial neural networks are built by aggregating substantially identical units called neurons. A neuron receives multiple signals, internally combines them, and applies a non-linear function to the resulting weighted sum. Several attempts to generalize neurons to the quantum regime have been proposed, but all proposals collided with the difficulty of implementing non-linear activation functions, which are essential for classical neurons, due to the linear nature of quantum mechanics. Here we propose a solution to this roadblock in the form of a small quantum circuit that naturally simulates neurons with threshold activation. Our quantum circuit defines a building block, the "quantum neuron", that can reproduce a variety of classical neural network constructions while maintaining the ability to process superpositions of inputs and preserve quantum coherence and entanglement. In the construction of feedforward networks of quantum neurons, we provide numerical evidence that the network not only can learn a function when trained with superposition of inputs and the corresponding output, but that this training suffices to learn the function on all individual inputs separately. When arranged to mimic Hopfield networks, quantum neural networks exhibit properties of associative memory. Patterns are encoded using the simple Hebbian rule for the weights and we demonstrate attractor dynamics from corrupted inputs. Finally, the fact that our quantum model closely captures (traditional) neural network dynamics implies that the vast body of literature and results on neural networks becomes directly relevant in the context of quantum machine learning.
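For readers unfamiliar with the classical construction that the abstract refers to, the following is a minimal sketch of a purely classical Hopfield network: weights set by the Hebbian rule, and a threshold (sign) update that pulls a corrupted input back to a stored pattern. It does not implement the quantum circuit from the paper; the function names, the two stored patterns, and the choice of a synchronous update are assumptions made only for illustration.

```python
def hebbian_weights(patterns):
    """Hebbian rule: W[i][j] = sum over patterns of x_i * x_j, zero diagonal."""
    n = len(patterns[0])
    weights = [[0] * n for _ in range(n)]
    for pattern in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += pattern[i] * pattern[j]
    return weights


def threshold_update(weights, state):
    """One synchronous step: each unit takes the sign of its weighted input."""
    new_state = []
    for row in weights:
        field = sum(w * s for w, s in zip(row, state))
        new_state.append(1 if field >= 0 else -1)  # ties resolved to +1
    return new_state


# Two hypothetical, mutually orthogonal +/-1 patterns to store.
pattern_a = [1, 1, 1, 1, -1, -1, -1, -1]
pattern_b = [1, -1, 1, -1, 1, -1, 1, -1]
weights = hebbian_weights([pattern_a, pattern_b])

# Corrupt pattern_a by flipping its first bit, then let the network relax.
corrupted = [-1] + pattern_a[1:]
recovered = threshold_update(weights, corrupted)
print(recovered == pattern_a)
```

Each stored pattern is a fixed point of the update, and the single-bit corruption falls inside the basin of attraction of the pattern it came from, which is the attractor behavior that associative memory relies on.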
