Yuanzhi Cheng

Tired of Over-smoothing? Stress Graph Drawing Is All You Need!

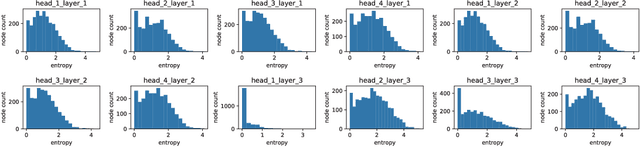

Nov 19, 2022

Abstract: In designing and applying graph neural networks, we often fall into optimization pitfalls, the most deceptive of which is the belief that a deep model can be built only by solving over-smoothing. The fundamental reason is that we do not understand how graph neural networks work. Stress graph drawing offers a unique viewpoint on message iteration in the graph; for example, it suggests that the root of the over-smoothing problem lies in the inability of graph models to maintain an ideal distance between nodes. We further elucidate the trigger conditions of over-smoothing and propose Stress Graph Neural Networks. By introducing the attractive and repulsive message passing from stress iteration, we show how to build a deep model without preventing over-smoothing, how to use repulsive information, and how to optimize the current message-passing scheme to approximate the full stress message propagation. By performing different tasks on 23 datasets, we verify the effectiveness of our attractive and repulsive models and the derived relationship between stress iteration and graph neural networks. We believe that stress graph drawing will be a popular resource for understanding and designing graph neural networks.
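To make the "ideal distance between nodes" idea concrete, here is a minimal sketch of the classical stress function from graph drawing, which penalizes the gap between embedded distances and graph-theoretic distances. It is an illustration only, not the paper's Stress Graph Neural Network: the weighting `w_ij = d_ij^-2`, the helper names, and the toy path graph are all assumptions, and the graph is assumed to be connected.

```python
import numpy as np

def shortest_path_distances(adj):
    """All-pairs hop distances (Floyd-Warshall) for an unweighted, connected graph."""
    n = adj.shape[0]
    dist = np.where(adj > 0, 1.0, np.inf)
    np.fill_diagonal(dist, 0.0)
    for k in range(n):
        # Relax every pair (i, j) through intermediate node k.
        dist = np.minimum(dist, dist[:, [k]] + dist[[k], :])
    return dist

def stress(positions, dist):
    """Sum over pairs i<j of w_ij * (||x_i - x_j|| - d_ij)^2, with w_ij = d_ij^-2."""
    i, j = np.triu_indices(dist.shape[0], k=1)
    embedded = np.linalg.norm(positions[i] - positions[j], axis=1)
    target = dist[i, j]
    return float(np.sum((embedded - target) ** 2 / target ** 2))

# Toy example (hypothetical): the path graph 0-1-2-3.
adj = np.zeros((4, 4))
for a, b in [(0, 1), (1, 2), (2, 3)]:
    adj[a, b] = adj[b, a] = 1.0
dist = shortest_path_distances(adj)

spread = np.array([[0.0], [1.0], [2.0], [3.0]])  # distances match the graph
collapsed = np.zeros((4, 1))                     # all nodes at one point

stress_spread = stress(spread, dist)
stress_collapsed = stress(collapsed, dist)
```

In this toy setting the layout that preserves graph distances has zero stress, while the collapsed layout, a stand-in for fully over-smoothed node representations, is penalized on every node pair.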

Understanding the Message Passing in Graph Neural Networks via Power Iteration

Jun 02, 2020

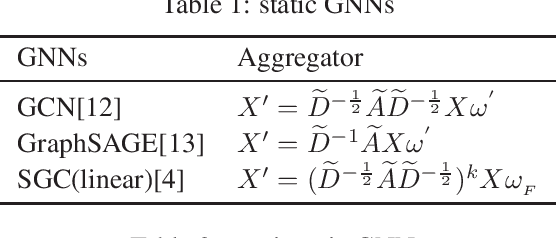

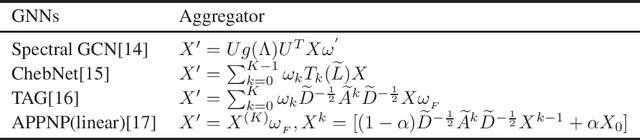

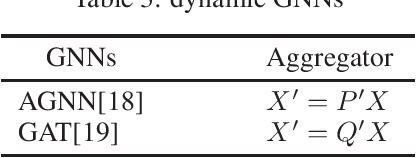

Abstract: The mechanism of message passing in graph neural networks (GNNs) remains poorly understood in the literature. No one, to our knowledge, has given another possible theoretical origin for GNNs apart from convolutional neural networks. Somewhat to our surprise, message passing can be best understood in terms of power iteration. By removing the activation functions and layer weights of GNNs, we propose power iteration clustering (SPIC) models, which are naturally interpretable and scalable. Experiments show that our models extend existing GNNs and enhance their capability of processing networks with random features. Moreover, we demonstrate redundancy in the design of some state-of-the-art GNNs and define a lower limit for model evaluation by randomly initializing the aggregator of message passing. All the findings in this paper push the boundaries of our understanding of neural networks.
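The link between message passing and power iteration can be sketched in a few lines. The example below is not the paper's SPIC model; it is a generic linear propagation, assuming the common symmetrically normalized adjacency with self-loops as the aggregator. The function names and the toy path graph are hypothetical.

```python
import numpy as np

def normalized_adjacency(adj):
    """Return D^-1/2 (A + I) D^-1/2, a common GNN aggregator (assumed here)."""
    a_hat = adj + np.eye(adj.shape[0])
    deg = a_hat.sum(axis=1)
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def propagate(adj, features, steps):
    """Message passing with no activations and no layer weights: x <- S x."""
    s = normalized_adjacency(adj)
    x = features
    for _ in range(steps):
        x = s @ x  # one step of (unnormalized) power iteration on S
    return x

# Toy example (hypothetical): the path graph 0-1-2-3 with random positive features.
adj = np.zeros((4, 4))
for a, b in [(0, 1), (1, 2), (2, 3)]:
    adj[a, b] = adj[b, a] = 1.0
rng = np.random.default_rng(0)
features = rng.random((4, 2))

deep = propagate(adj, features, steps=200)

# On a connected graph, the dominant eigenvector of S is proportional to
# sqrt(degree + 1), so each feature column should align with it.
dominant = np.sqrt(adj.sum(axis=1) + 1.0)
ratios = deep / dominant[:, None]
```

After many steps every feature column is proportional to the same dominant eigenvector, so each column of `ratios` is constant across nodes. This is the power-iteration view of why deep, purely linear propagation loses node-specific information.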
