Yakov Babichenko

Sequential Naive Learning

Jan 08, 2021

Abstract: We analyze boundedly rational updating from aggregate statistics in a model with binary actions and binary states. Agents each take an irreversible action in sequence after observing the unordered set of previous actions. Each agent first forms her prior based on the aggregate statistic, then combines her signal with the prior using Bayes' rule, and finally applies a decision rule that assigns a (mixed) action to each belief. If priors are formed according to a discretized DeGroot rule, then actions converge to the state in probability (i.e., *asymptotic learning*) in any informative information structure if and only if the decision rule satisfies probability matching. This result generalizes to unspecified information settings, where information structures differ across agents and each agent knows only the information structure generating her own signal. The main result also extends to the case of $n$ states and $n$ actions.
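
The three-step agent described above (aggregate-statistic prior, Bayesian signal update, then a probability-matching decision rule) can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not the paper's model: the signal is assumed binary and symmetric with accuracy `q`, and the prior is a hypothetical smoothed share of earlier agents who chose action 1 (a simple DeGroot-style average of observed actions; the paper's discretized DeGroot rule may differ). The names `bayes_posterior` and `simulate` are illustrative only.

```python
import random


def bayes_posterior(prior: float, q: float, signal: int) -> float:
    """P(state = 1 | signal) for a binary symmetric signal with accuracy q."""
    like1 = q if signal == 1 else 1 - q  # P(signal | state = 1)
    like0 = 1 - q if signal == 1 else q  # P(signal | state = 0)
    num = prior * like1
    return num / (num + (1 - prior) * like0)


def simulate(n: int, state: int, q: float, seed: int = 0) -> list[int]:
    """Run n sequential agents; return the list of actions they take."""
    rng = random.Random(seed)
    actions: list[int] = []
    ones = 0  # aggregate statistic: count of earlier agents who chose action 1
    for t in range(n):
        # Hypothetical prior: smoothed share of 1-actions, kept strictly inside (0, 1)
        prior = (ones + 1) / (t + 2)
        # Private signal equals the true state with probability q
        signal = state if rng.random() < q else 1 - state
        belief = bayes_posterior(prior, q, signal)
        # Probability matching: choose action 1 with probability equal to the belief
        action = 1 if rng.random() < belief else 0
        actions.append(action)
        ones += action
    return actions


if __name__ == "__main__":
    acts = simulate(5000, state=1, q=0.7, seed=1)
    late = acts[-1000:]
    print("share of late agents choosing the true state:", sum(late) / len(late))
```

Running it with different seeds and horizons gives a feel for how often late agents match the state under probability matching; swapping the decision rule for a deterministic threshold (choose 1 iff `belief > 0.5`) is an easy way to contrast the two behaviours the abstract compares.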

Learning of Optimal Forecast Aggregation in Partial Evidence Environments

Feb 20, 2018

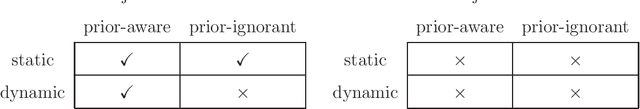

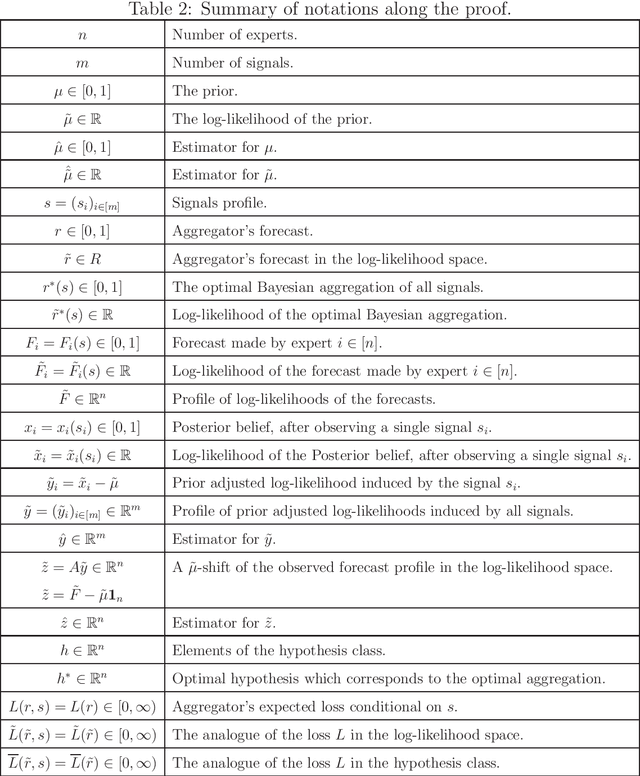

Abstract: We consider the forecast aggregation problem in repeated settings, where the forecasts concern a binary event. In each period, multiple experts provide forecasts about an event. The goal of the aggregator is to aggregate those forecasts into a subjectively accurate forecast. We assume that experts are Bayesian; namely, they share a common prior, each expert is exposed to some evidence, and each expert applies Bayes' rule to deduce his forecast. The aggregator is ignorant of the information structure (i.e., the distribution over evidence) according to which experts make their predictions, and observes only the experts' forecasts. At the end of each period, the actual state is realized. We focus on the question of whether the aggregator can learn to optimally aggregate the experts' forecasts, where the optimal aggregation is the Bayesian aggregation that takes into account all the information (evidence) in the system. We consider the class of partial evidence information structures, where each expert is exposed to a different subset of conditionally independent signals. Our main results are positive: we show that optimal aggregation can be learned in polynomial time in a quite wide range of instances of partial evidence environments. We provide a tight characterization of the instances where learning is possible and impossible.
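
To make "Bayesian aggregation that takes into account all the information" concrete, the sketch below covers only one special case and is an assumption rather than the paper's method: the common prior is known, and experts see disjoint subsets of conditionally independent signals. In that case each expert's evidence adds (expert log-odds minus prior log-odds) to the prior log-odds, so the optimal aggregate is a simple sum in log-odds space. It does not handle overlapping subsets or the learning problem the abstract studies, where the aggregator does not know the information structure. The helper names `logit` and `aggregate_disjoint` are hypothetical.

```python
import math


def logit(p: float) -> float:
    """Log-odds of a probability strictly between 0 and 1."""
    return math.log(p / (1 - p))


def aggregate_disjoint(prior: float, forecasts: list[float]) -> float:
    """Bayesian aggregate of experts who saw disjoint sets of conditionally
    independent signals, assuming the common prior is known."""
    base = logit(prior)
    # Each expert contributes only the log-likelihood ratio of his own evidence
    total = base + sum(logit(f) - base for f in forecasts)
    # Convert aggregate log-odds back to a probability
    return 1 / (1 + math.exp(-total))


if __name__ == "__main__":
    # Two experts at 0.8 with prior 0.5: odds 4 * 4 = 16, so 16/17
    print(aggregate_disjoint(0.5, [0.8, 0.8]))  # ≈ 0.941
```

Note that the aggregate is more extreme than either forecast: two independent pieces of evidence pointing the same way compound, which simple averaging of forecasts would miss.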