Wolfgang Fuhl

PupilNet: Convolutional Neural Networks for Robust Pupil Detection

Jan 19, 2016

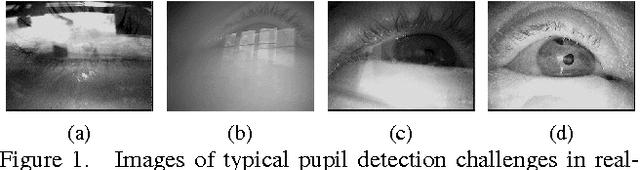

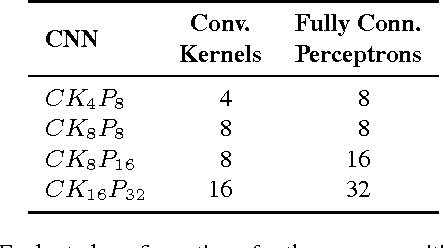

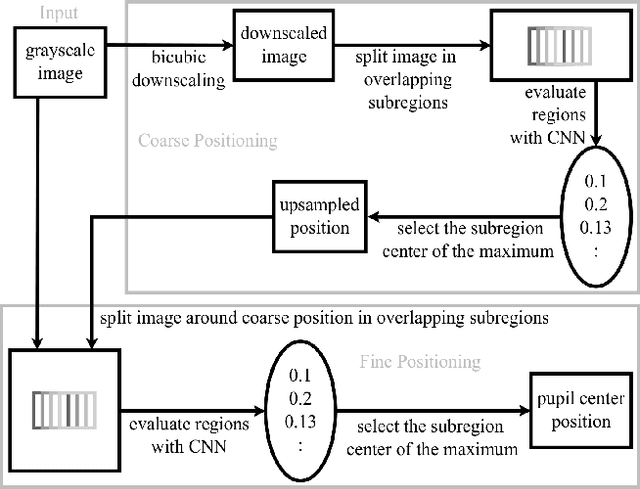

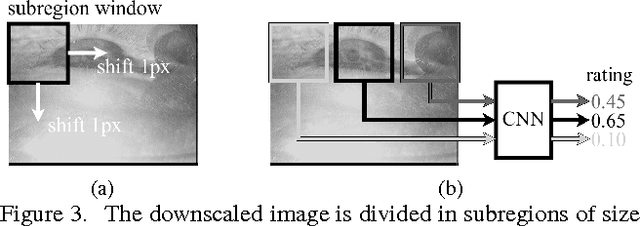

Abstract: Real-time, accurate, and robust pupil detection is an essential prerequisite for pervasive video-based eye-tracking. However, automated pupil detection in real-world scenarios has proven to be an intricate challenge due to fast illumination changes, pupil occlusion, non-centered and off-axis eye recording, and physiological eye characteristics. In this paper, we propose and evaluate a method based on a novel dual convolutional neural network pipeline. In its first stage, the pipeline performs coarse pupil position identification using a convolutional neural network and subregions from a downscaled input image to decrease computational costs. Using subregions derived from a small window around the initial pupil position estimate, the second pipeline stage employs another convolutional neural network to refine this position, increasing the pupil detection rate by up to 25% in comparison with the best-performing state-of-the-art algorithm. Annotated data sets can be made available upon request.
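The coarse-to-fine structure described in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the `darkness` scorer is a placeholder standing in for the two convolutional networks, and the function names, window sizes, downscaling factor, and refinement radius are all assumptions chosen for illustration.

```python
from typing import Callable, List, Sequence, Tuple

Image = List[List[float]]
Point = Tuple[int, int]  # (row, column)


def downscale(img: Image, factor: int) -> Image:
    """Average-pool the image by an integer factor."""
    h, w = len(img) // factor, len(img[0]) // factor
    return [
        [
            sum(img[y * factor + dy][x * factor + dx]
                for dy in range(factor) for dx in range(factor)) / factor ** 2
            for x in range(w)
        ]
        for y in range(h)
    ]


def window(img: Image, cy: int, cx: int, half: int) -> Image:
    """Extract a (2*half+1)-sized square subregion, replicating border pixels."""
    h, w = len(img), len(img[0])
    return [
        [img[min(max(y, 0), h - 1)][min(max(x, 0), w - 1)]
         for x in range(cx - half, cx + half + 1)]
        for y in range(cy - half, cy + half + 1)
    ]


def darkness(patch: Image) -> float:
    """Placeholder scorer (assumption): darker patches score higher.
    In the paper this role is played by a trained CNN."""
    n = len(patch) * len(patch[0])
    return -sum(map(sum, patch)) / n


def best_center(img: Image, candidates: Sequence[Point], half: int,
                score: Callable[[Image], float]) -> Point:
    """Return the candidate whose surrounding subregion scores highest."""
    return max(candidates, key=lambda c: score(window(img, c[0], c[1], half)))


def coarse_to_fine(img: Image, factor: int = 4, coarse_half: int = 1,
                   fine_half: int = 3, refine_radius: int = 4,
                   score: Callable[[Image], float] = darkness) -> Point:
    # Stage 1: score every subregion of the downscaled image.
    small = downscale(img, factor)
    coarse = [(y, x) for y in range(len(small)) for x in range(len(small[0]))]
    cy, cx = best_center(small, coarse, coarse_half, score)

    # Map the coarse estimate back to full-resolution coordinates.
    fy, fx = cy * factor + factor // 2, cx * factor + factor // 2

    # Stage 2: re-score only a small window around the coarse estimate.
    h, w = len(img), len(img[0])
    fine = [
        (y, x)
        for y in range(max(0, fy - refine_radius), min(h, fy + refine_radius + 1))
        for x in range(max(0, fx - refine_radius), min(w, fx + refine_radius + 1))
    ]
    return best_center(img, fine, fine_half, score)
```

The point of the design is that the expensive scorer runs on every position only in the downscaled image, and at full resolution only on the few positions near the coarse estimate.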

Bayesian Identification of Fixations, Saccades, and Smooth Pursuits

Nov 24, 2015

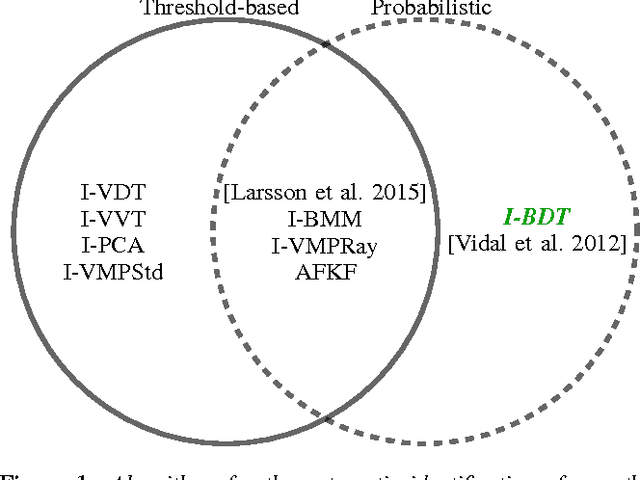

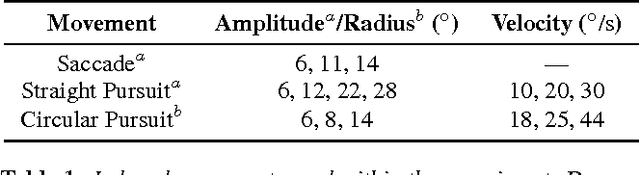

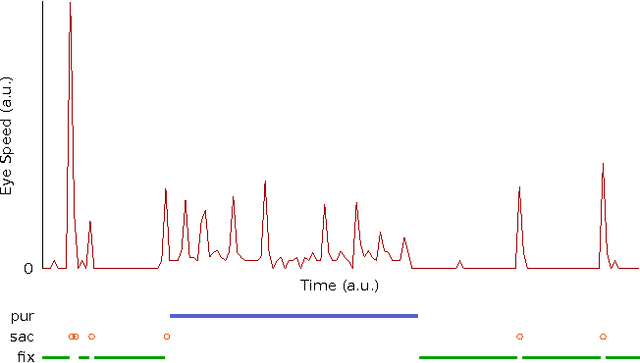

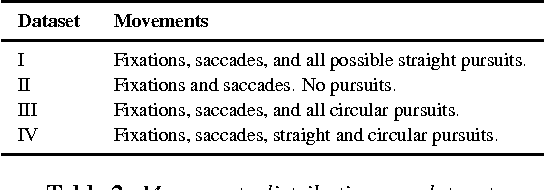

Abstract: Smooth pursuit eye movements provide meaningful insights and information on a subject's behavior and health and may, in particular situations, disturb the performance of typical fixation/saccade classification algorithms. Thus, an automatic and efficient algorithm to identify these eye movements is paramount for eye-tracking research involving dynamic stimuli. In this paper, we propose the Bayesian Decision Theory Identification (I-BDT) algorithm, a novel algorithm for ternary classification of eye movements that is able to reliably separate fixations, saccades, and smooth pursuits in an online fashion, even for low-resolution eye trackers. The proposed algorithm is evaluated on four datasets with distinct mixtures of eye movements, including fixations, saccades, as well as straight and circular smooth pursuits; data was collected with a sample rate of 30 Hz from six subjects, totaling 24 evaluation datasets. The algorithm exhibits high and consistent performance across all datasets and movements relative to a manual annotation by a domain expert (recall: \mu = 91.42%, \sigma = 9.52%; precision: \mu = 95.60%, \sigma = 5.29%; specificity: \mu = 95.41%, \sigma = 7.02%) and displays a significant improvement when compared to I-VDT, a state-of-the-art algorithm (recall: \mu = 87.67%, \sigma = 14.73%; precision: \mu = 89.57%, \sigma = 8.05%; specificity: \mu = 92.10%, \sigma = 11.21%). For algorithm implementation and annotated datasets, please contact the first author.
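For readers unfamiliar with the three reported metrics, the sketch below computes recall, precision, and specificity per class for a ternary labeling, treating each class one-vs-rest. The abstract does not state how the authors aggregated scores across classes or datasets, so the one-vs-rest treatment, the label strings, and the function name `one_vs_rest_metrics` are assumptions made for illustration only.

```python
from typing import Dict, Sequence

LABELS = ("fixation", "saccade", "pursuit")  # illustrative label names


def one_vs_rest_metrics(truth: Sequence[str], predicted: Sequence[str],
                        labels: Sequence[str] = LABELS) -> Dict[str, Dict[str, float]]:
    """Per-class recall, precision, and specificity against a reference annotation."""
    if len(truth) != len(predicted):
        raise ValueError("truth and predicted must have the same length")

    def ratio(num: int, den: int) -> float:
        # Undefined ratios (empty denominator) are reported as NaN.
        return num / den if den else float("nan")

    results: Dict[str, Dict[str, float]] = {}
    for label in labels:
        pairs = list(zip(truth, predicted))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        tn = sum(t != label and p != label for t, p in pairs)
        results[label] = {
            "recall": ratio(tp, tp + fn),       # share of true samples found
            "precision": ratio(tp, tp + fp),    # share of detections that are correct
            "specificity": ratio(tn, tn + fp),  # share of other samples correctly rejected
        }
    return results
```

Given one such result per dataset, the mean and standard deviation quoted in the abstract would correspond to summary statistics over those per-dataset values, under the aggregation assumption stated above.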

ElSe: Ellipse Selection for Robust Pupil Detection in Real-World Environments

Nov 23, 2015

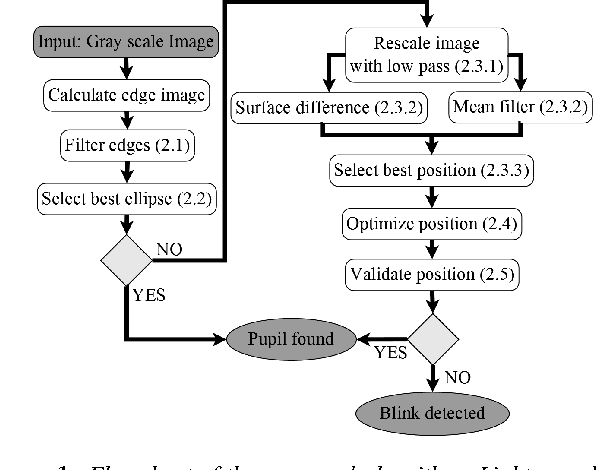

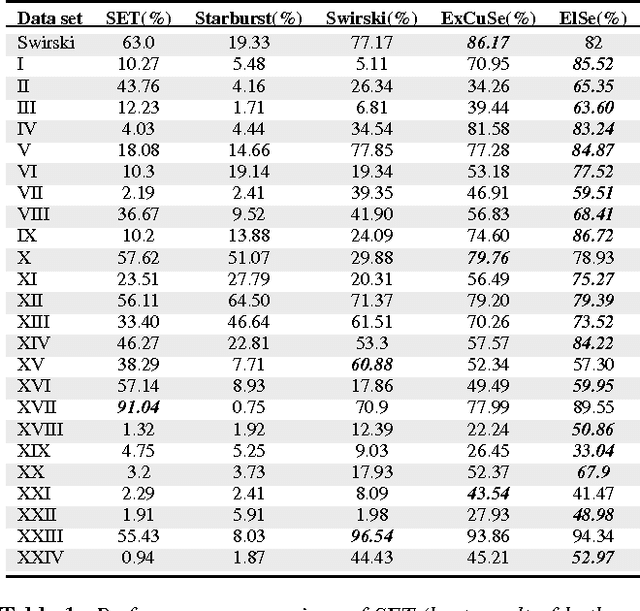

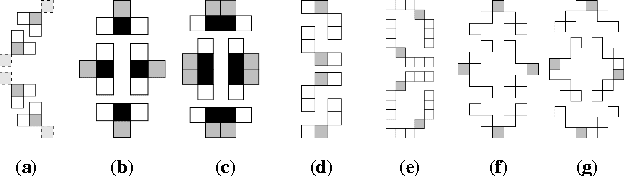

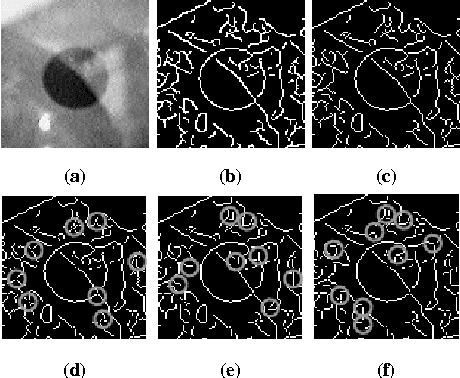

Abstract: Fast and robust pupil detection is an essential prerequisite for video-based eye-tracking in real-world settings. Although several algorithms for image-based pupil detection have been proposed, their applicability is mostly limited to laboratory conditions. In real-world scenarios, automated pupil detection has to face various challenges, such as illumination changes, reflections (on glasses), make-up, non-centered eye recording, and physiological eye characteristics. We propose ElSe, a novel algorithm based on ellipse evaluation of a filtered edge image. We aim at a robust, resource-saving approach that can be integrated in embedded architectures, e.g., driving. The proposed algorithm was evaluated against four state-of-the-art methods on over 93,000 hand-labeled images, of which 55,000 are new images contributed by this work. On average, the proposed method achieved a 14.53% improvement on the detection rate relative to the best state-of-the-art performer. Download: ftp://emmapupildata@messor.informatik.uni-tuebingen.de (password: eyedata).
