Thomas Kübler

Deep semantic gaze embedding and scanpath comparison for expertise classification during OPT viewing

Mar 31, 2020

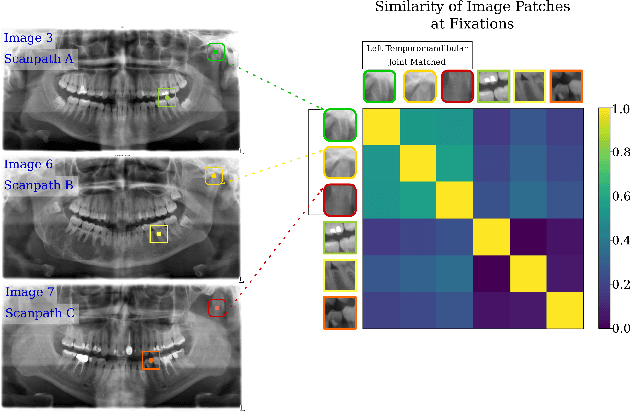

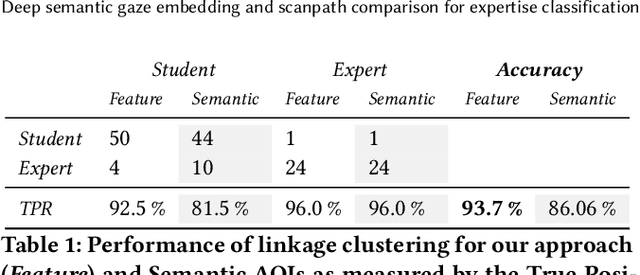

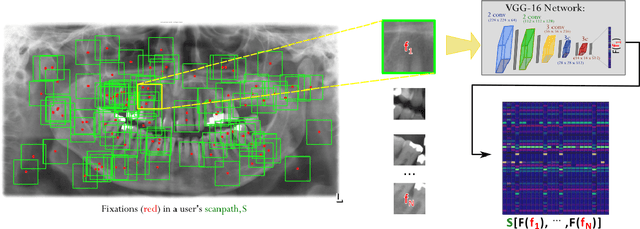

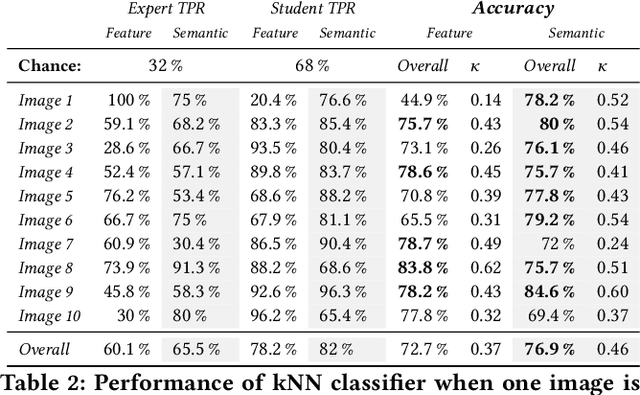

Abstract: Modeling eye movement indicative of expertise behavior is decisive in user evaluation. However, it is indisputable that task semantics affect gaze behavior. We present a novel approach to gaze scanpath comparison that incorporates convolutional neural networks (CNN) to process scene information at the fixation level. Image patches linked to respective fixations are used as input for a CNN, and the resulting feature vectors provide the temporal and spatial gaze information necessary for scanpath similarity comparison. We evaluated our proposed approach on gaze data from expert and novice dentists interpreting dental radiographs using a local alignment similarity score. Our approach was capable of distinguishing experts from novices with 93% accuracy while incorporating the image semantics. Moreover, our scanpath comparison using image patch features has the potential to incorporate task semantics from a variety of tasks.
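
The local alignment idea in the abstract above can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes each fixation has already been reduced to a feature vector (for example by a CNN), uses cosine similarity as the match score, and applies a Smith-Waterman style recurrence. The names `cosine_similarity` and `local_alignment_score` and the parameters `threshold` and `gap_penalty` are hypothetical choices made for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def local_alignment_score(path_a, path_b, threshold=0.5, gap_penalty=0.5):
    """Smith-Waterman style local alignment over two scanpaths.

    Each scanpath is a list of per-fixation feature vectors. A pair of
    fixations contributes (cosine similarity - threshold), so similar
    patches add to the score and dissimilar ones subtract from it.
    Both threshold and gap_penalty are assumed values, not from the paper.
    """
    rows, cols = len(path_a) + 1, len(path_b) + 1
    score = [[0.0] * cols for _ in range(rows)]
    best = 0.0
    for i in range(1, rows):
        for j in range(1, cols):
            match = cosine_similarity(path_a[i - 1], path_b[j - 1]) - threshold
            score[i][j] = max(
                0.0,                              # start a new local alignment
                score[i - 1][j - 1] + match,      # align fixation i with fixation j
                score[i - 1][j] - gap_penalty,    # skip a fixation in path_a
                score[i][j - 1] - gap_penalty,    # skip a fixation in path_b
            )
            best = max(best, score[i][j])
    return best


if __name__ == "__main__":
    expert = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    novice = [[0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [1.0, 0.0, 0.0]]
    print(local_alignment_score(expert, expert))
    print(local_alignment_score(expert, novice))
```

A pairwise score like this could feed any standard classifier (for instance nearest neighbor over the similarity matrix); which classifier the authors used is not stated in the abstract.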

Validation loss for landmark detection

Jan 30, 2019

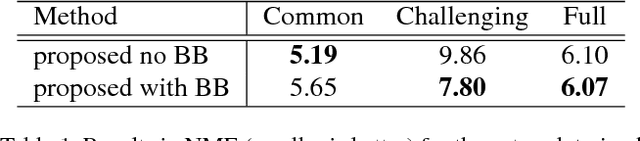

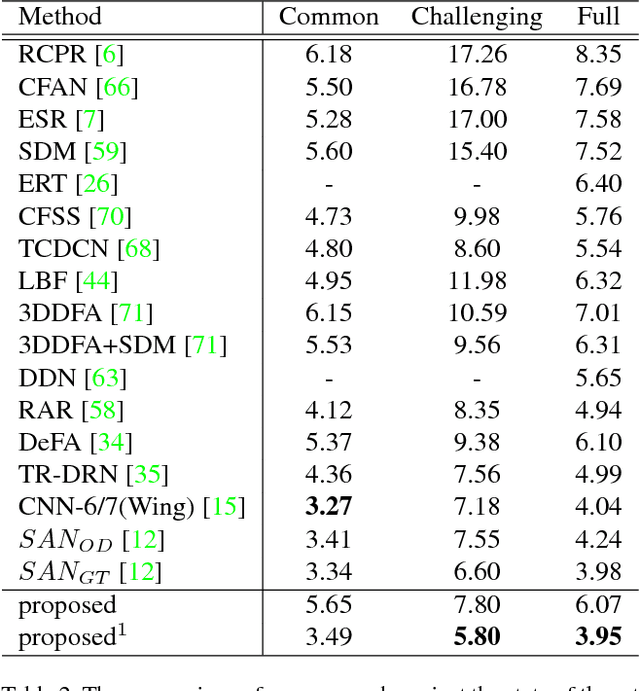

Abstract: We present a new loss function for the validation of image landmarks detected via Convolutional Neural Networks (CNNs). The network learns to estimate how accurate its landmark estimation is. This loss function is applicable to all regression-based location estimations and allows exclusion of unreliable landmarks from further processing. In addition, we formulate a novel batch balancing approach which weights the importance of samples based on their produced loss. This is done by computing a probability distribution mapping on an interval from which samples can be selected using a uniform random selection scheme. We conducted several experiments on the 300W facial landmark data. In the first experiment, the influence of our batch balancing approach is evaluated by comparing it against uniform sampling. Afterwards, we compare two networks with the state of the art and demonstrate the usage and practical importance of our landmark validation signal. The effectiveness of our validation signal is further confirmed by a correlation analysis over all landmarks. Finally, we show a study on head pose estimation of truck drivers on German highways and compare our network to a commercial multi-camera system.
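
One plausible reading of the batch balancing step described above is inverse-transform sampling: each sample owns a sub-interval of [0, 1) whose width is proportional to its last loss, and a uniform random draw selects the sample whose sub-interval it falls into. The sketch below illustrates only that reading. The function names `build_intervals` and `sample_batch`, and the fallback to uniform sampling when all losses are zero, are assumptions and are not taken from the paper.

```python
import bisect
import itertools
import random


def build_intervals(losses):
    """Map per-sample losses to cumulative interval ends on [0, 1].

    Sample k owns the interval [ends[k-1], ends[k]); its width is
    proportional to its loss. If every loss is zero, fall back to
    equal-width intervals (an assumed convention).
    """
    total = sum(losses)
    n = len(losses)
    if total <= 0:
        return [(i + 1) / n for i in range(n)]
    return list(itertools.accumulate(loss / total for loss in losses))


def sample_batch(interval_ends, batch_size, rng):
    """Draw sample indices using uniform random numbers on [0, 1)."""
    last = len(interval_ends) - 1
    indices = []
    for _ in range(batch_size):
        u = rng.random()
        # First interval whose end lies strictly above u; the clamp guards
        # against floating-point rounding in the final cumulative sum.
        indices.append(min(bisect.bisect_right(interval_ends, u), last))
    return indices


if __name__ == "__main__":
    losses = [0.0, 1.0, 3.0]  # hypothetical per-sample losses
    ends = build_intervals(losses)
    batch = sample_batch(ends, 8, random.Random(0))
    print(ends, batch)
```

With this scheme, high-loss samples are drawn more often and zero-loss samples are never drawn; whether the original method keeps a minimum probability for low-loss samples is not stated in the abstract.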

Bayesian Identification of Fixations, Saccades, and Smooth Pursuits

Nov 24, 2015

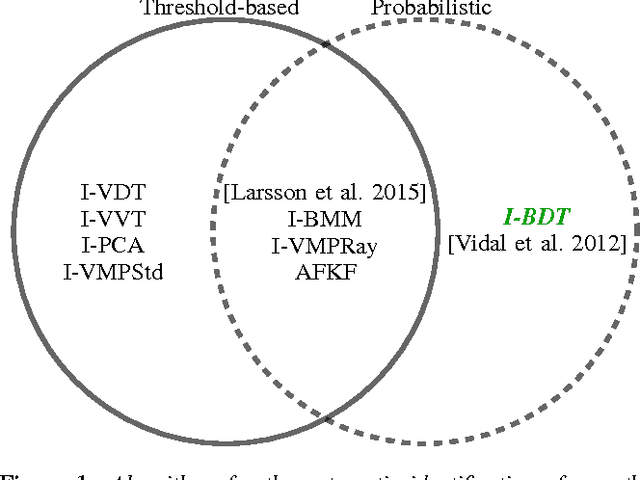

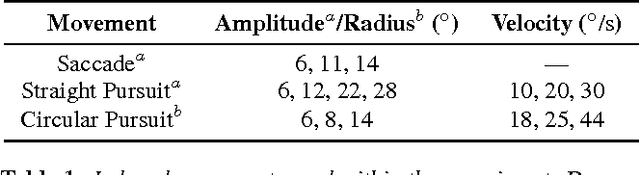

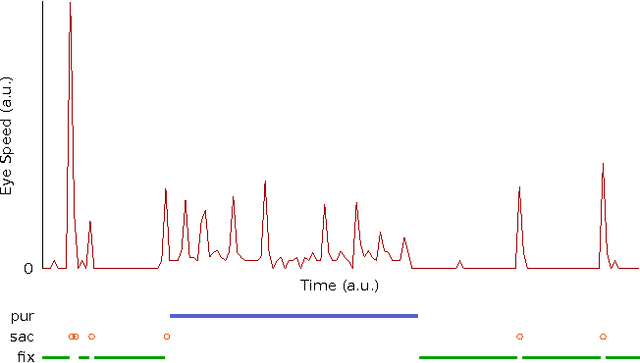

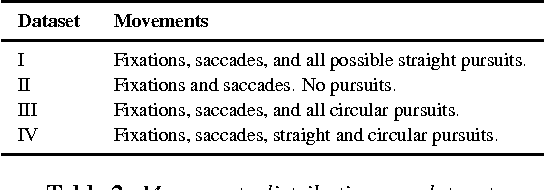

Abstract: Smooth pursuit eye movements provide meaningful insights and information on a subject's behavior and health and may, in particular situations, disturb the performance of typical fixation/saccade classification algorithms. Thus, an automatic and efficient algorithm to identify these eye movements is paramount for eye-tracking research involving dynamic stimuli. In this paper, we propose the Bayesian Decision Theory Identification (I-BDT) algorithm, a novel algorithm for ternary classification of eye movements that is able to reliably separate fixations, saccades, and smooth pursuits in an online fashion, even for low-resolution eye trackers. The proposed algorithm is evaluated on four datasets with distinct mixtures of eye movements, including fixations, saccades, as well as straight and circular smooth pursuits; data was collected with a sample rate of 30 Hz from six subjects, totaling 24 evaluation datasets. The algorithm exhibits high and consistent performance across all datasets and movements relative to a manual annotation by a domain expert (recall: μ = 91.42%, σ = 9.52%; precision: μ = 95.60%, σ = 5.29%; specificity: μ = 95.41%, σ = 7.02%) and displays a significant improvement when compared to I-VDT, a state-of-the-art algorithm (recall: μ = 87.67%, σ = 14.73%; precision: μ = 89.57%, σ = 8.05%; specificity: μ = 92.10%, σ = 11.21%). For algorithm implementation and annotated datasets, please contact the first author.
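
For readers unfamiliar with the reported metrics, the sketch below shows how recall, precision, and specificity follow from confusion counts for one eye-movement class, and how a mean and standard deviation across datasets could be formed. The counts in the example are made up, the function names are hypothetical, and the use of the population standard deviation is an assumption; the abstract does not say which estimator the authors used.

```python
from statistics import mean, pstdev


def binary_metrics(tp, fp, tn, fn):
    """Recall, precision, and specificity from one-vs-rest confusion counts."""
    return {
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "specificity": tn / (tn + fp) if (tn + fp) else 0.0,
    }


def summarize(per_dataset):
    """Mean and population standard deviation of each metric across datasets."""
    summary = {}
    for name in ("recall", "precision", "specificity"):
        values = [metrics[name] for metrics in per_dataset]
        summary[name] = (mean(values), pstdev(values))
    return summary


if __name__ == "__main__":
    # Made-up counts for two datasets, for illustration only.
    results = [
        binary_metrics(tp=8, fp=2, tn=18, fn=2),
        binary_metrics(tp=10, fp=0, tn=20, fn=0),
    ]
    for name, (mu, sigma) in summarize(results).items():
        print(f"{name}: mu = {mu:.2%}, sigma = {sigma:.2%}")
```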
