Wang Dai

Inter-Speaker Relative Cues for Two-Stage Text-Guided Target Speech Extraction

Mar 01, 2026

Abstract:This paper investigates the use of relative cues for text-based target speech extraction (TSE). We first provide a theoretical justification for relative cues from the perspectives of human perception and label quantization, showing that relative cues preserve fine-grained distinctions often lost in absolute categorical representations. Building on this analysis, we propose a two-stage TSE framework, in which a speech separation model generates candidate sources, followed by a text-guided classifier that selects the target speaker based on embedding similarity. Using this framework, we train two separate classification models to evaluate the advantages of relative cues over independent cues in terms of both classification accuracy and TSE performance. Experimental results demonstrate that (i) relative cues achieve higher overall classification accuracy and improved TSE performance compared with independent cues, (ii) the two-stage framework substantially outperforms single-stage text-conditioned extraction methods on both signal-level and objective perceptual metrics, and (iii) certain relative cues (language, gender, loudness, distance, temporal order, speaking duration, random cue and all cue) can surpass the performance of an audio-based TSE system. Further analysis reveals notable differences in discriminative power across cue types, providing insights into the effectiveness of different relative cues for TSE.
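The second stage described in this abstract (choosing one of the separated candidates by embedding similarity) can be sketched roughly as follows. This is only an illustration under stated assumptions: the abstract does not say which similarity measure is used, so cosine similarity is assumed here, and the embedding vectors and the `select_target` helper are hypothetical placeholders rather than the authors' models.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors (assumed measure)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def select_target(candidate_embeddings, text_embedding):
    """Return the index of the candidate closest to the text cue, plus all scores.

    candidate_embeddings: one vector per separated source (stage-one output,
    embedded by some audio encoder that is not modeled here).
    text_embedding: vector for the text cue (hypothetical text encoder output).
    """
    scores = [cosine_similarity(e, text_embedding) for e in candidate_embeddings]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best, scores


# Toy vectors standing in for real embeddings of two separated sources.
candidates = [[0.9, 0.1, 0.0], [0.1, 0.8, 0.3]]
text_cue = [0.0, 1.0, 0.2]
index, scores = select_target(candidates, text_cue)
print(index, [round(s, 3) for s in scores])
```

In this toy case the second candidate is selected because its vector points in nearly the same direction as the cue vector; in a real system the quality of the choice depends entirely on the encoders that produce the embeddings.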

Reference Channel Selection by Multi-Channel Masking for End-to-End Multi-Channel Speech Enhancement

Jun 05, 2024

Abstract:In end-to-end multi-channel speech enhancement, the traditional approach of designating one microphone signal as the reference for processing may not always yield optimal results. This limitation is particularly pronounced in scenarios with large distributed microphone arrays with varying speaker-to-microphone distances, or with compact, highly directional microphone arrays where speaker or microphone positions change over time. Current mask-based methods often fix the reference channel during training, which makes it impossible to adaptively select the reference channel for optimal performance. To address this problem, we introduce an adaptive approach for selecting the optimal reference channel. Our method leverages a multi-channel masking-based scheme, where multiple masked signals are combined to generate a single-channel output signal. This enhanced signal is then used for loss calculation, while the reference clean speech is adjusted based on the highest scale-invariant signal-to-distortion ratio (SI-SDR). The experimental results on the Spear challenge simulated dataset D4 demonstrate the superiority of our proposed method over the conventional approach of using a fixed reference channel with single-channel masking.
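The reference-selection idea in this abstract can be illustrated with a small sketch: compute SI-SDR between the enhanced output and the clean speech at each microphone, then keep the channel with the highest score as the training reference. The SI-SDR formula below is the standard definition; the `pick_reference` helper, the toy signals, and the `eps` stabilizer are assumptions made for illustration and are not taken from the paper.

```python
import math


def si_sdr(estimate, reference, eps=1e-8):
    """Scale-invariant signal-to-distortion ratio in dB (standard definition)."""
    dot = sum(e * r for e, r in zip(estimate, reference))
    ref_energy = sum(r * r for r in reference)
    alpha = dot / (ref_energy + eps)           # optimal scaling of the reference
    target = [alpha * r for r in reference]    # part of the estimate explained by the reference
    noise = [e - t for e, t in zip(estimate, target)]
    target_energy = sum(t * t for t in target)
    noise_energy = sum(n * n for n in noise)
    return 10.0 * math.log10((target_energy + eps) / (noise_energy + eps))


def pick_reference(estimate, clean_channels):
    """Return the index of the clean channel with the highest SI-SDR, plus all scores."""
    scores = [si_sdr(estimate, c) for c in clean_channels]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best, scores


# Toy data: the same sine observed at two microphones with a 3-sample delay.
n = 64
ch0 = [math.sin(2 * math.pi * 4 * t / n) for t in range(n)]
ch1 = [math.sin(2 * math.pi * 4 * (t - 3) / n) for t in range(n)]
# Hypothetical enhanced output: a scaled copy of channel 1 plus a small disturbance.
estimate = [0.5 * x + 0.01 * (-1) ** t for t, x in enumerate(ch1)]

best, scores = pick_reference(estimate, [ch0, ch1])
print(best, [round(s, 1) for s in scores])
```

Because SI-SDR ignores overall gain, the scaled estimate still matches channel 1 far better than channel 0, so channel 1 would be used as the clean target for the loss in this toy case.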

Multi-Channel Masking with Learnable Filterbank for Sound Source Separation

Mar 14, 2023

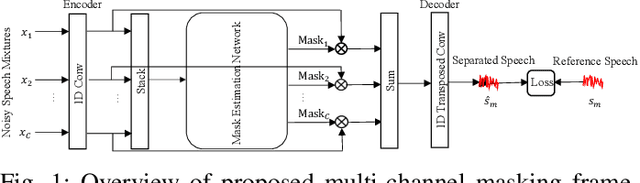

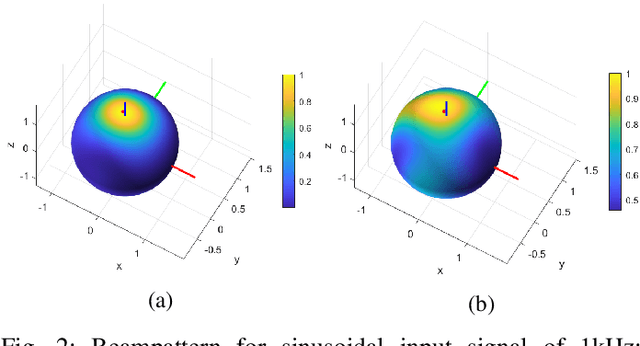

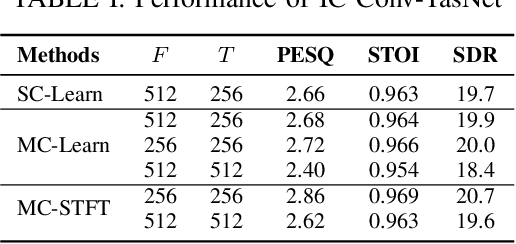

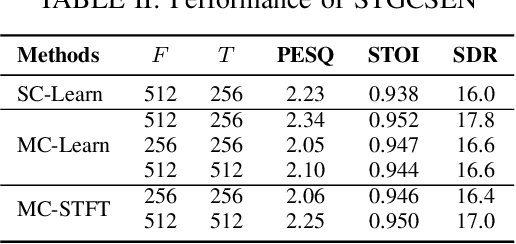

Abstract:This work proposes a learnable filterbank based on a multi-channel masking framework for multi-channel source separation. The learnable filterbank is a 1D Conv layer, which transforms the raw waveform into a 2D representation. In contrast to the conventional single-channel masking method, we estimate a mask for each individual microphone channel. The estimated masks are then applied to the transformed waveform representation, as in the traditional filter-and-sum beamforming operation. Specifically, each mask is multiplied with the corresponding channel's 2D representation, and the masked outputs of all channels are then summed. Finally, a 1D transposed Conv layer is used to convert the summed masked signal back into the waveform domain. The experimental results show that our method outperforms single-channel masking with a learnable filterbank and can outperform multi-channel complex masking with the STFT complex spectrum in the STGCSEN model if the learnable filterbank is transformed to a higher feature dimension. The spatial response analysis also verifies that multi-channel masking in the learnable filterbank domain has spatial selectivity.
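The encode, mask, sum, and decode pipeline described in this abstract can be sketched in plain Python as below. This is a minimal illustration, not the authors' model: random numbers stand in for the learned filters and for the masks that a network would estimate, the encoder filters are assumed to be shared across microphones, and the sizes (4 filters, kernel 8, stride 4) and function names are hypothetical.

```python
import random


def encode(wave, filters, stride):
    """Strided 1D convolution: waveform -> features[num_filters][num_frames]."""
    kernel = len(filters[0])
    num_frames = (len(wave) - kernel) // stride + 1
    return [[sum(f[k] * wave[t * stride + k] for k in range(kernel))
             for t in range(num_frames)] for f in filters]


def mask_and_sum(channel_features, channel_masks):
    """Multiply each channel's features by its own mask, then sum over channels."""
    num_filters = len(channel_features[0])
    num_frames = len(channel_features[0][0])
    summed = [[0.0] * num_frames for _ in range(num_filters)]
    for feats, mask in zip(channel_features, channel_masks):
        for i in range(num_filters):
            for t in range(num_frames):
                summed[i][t] += mask[i][t] * feats[i][t]
    return summed


def decode(features, filters, stride):
    """Transposed 1D convolution (overlap-add): features -> waveform."""
    kernel = len(filters[0])
    num_frames = len(features[0])
    out = [0.0] * ((num_frames - 1) * stride + kernel)
    for f, row in zip(filters, features):
        for t, value in enumerate(row):
            for k in range(kernel):
                out[t * stride + k] += value * f[k]
    return out


rng = random.Random(0)
NUM_MICS, NUM_SAMPLES, NUM_FILTERS, KERNEL, STRIDE = 2, 32, 4, 8, 4

# Random stand-ins for learned parameters and for the microphone signals.
enc_filters = [[rng.uniform(-1, 1) for _ in range(KERNEL)] for _ in range(NUM_FILTERS)]
dec_filters = [[rng.uniform(-1, 1) for _ in range(KERNEL)] for _ in range(NUM_FILTERS)]
mics = [[rng.uniform(-1, 1) for _ in range(NUM_SAMPLES)] for _ in range(NUM_MICS)]

feats = [encode(m, enc_filters, STRIDE) for m in mics]
num_frames = len(feats[0][0])
# One mask per microphone channel; a real system would predict these with a network.
masks = [[[rng.random() for _ in range(num_frames)] for _ in range(NUM_FILTERS)]
         for _ in range(NUM_MICS)]

summed = mask_and_sum(feats, masks)
output = decode(summed, dec_filters, STRIDE)
print(len(summed), len(summed[0]), len(output))
```

The per-channel weighting followed by a sum is what gives the structure its resemblance to filter-and-sum beamforming: each microphone contributes through its own mask before the channels are combined into a single representation.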
