Volker H. Schulz

Riemannian Optimization for Variance Estimation in Linear Mixed Models

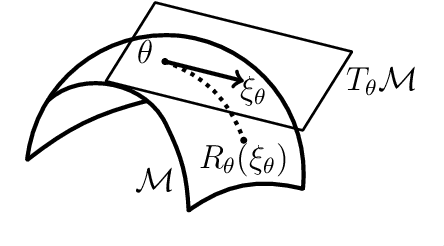

Dec 18, 2022Abstract:Variance parameter estimation in linear mixed models is a challenge for many classical nonlinear optimization algorithms due to the positive-definiteness constraint of the random effects covariance matrix. We take a completely novel view on parameter estimation in linear mixed models by exploiting the intrinsic geometry of the parameter space. We formulate the problem of residual maximum likelihood estimation as an optimization problem on a Riemannian manifold. Based on the introduced formulation, we give geometric higher-order information on the problem via the Riemannian gradient and the Riemannian Hessian. Based on that, we test our approach with Riemannian optimization algorithms numerically. Our approach yields a higher quality of the variance parameter estimates compared to existing approaches.

A Riemannian Newton Trust-Region Method for Fitting Gaussian Mixture Models

Apr 30, 2021

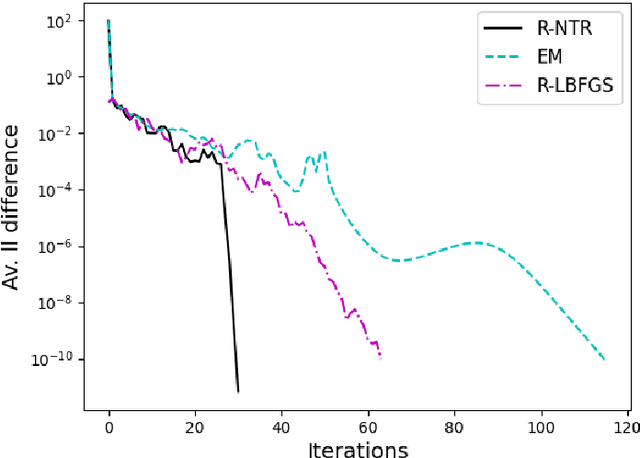

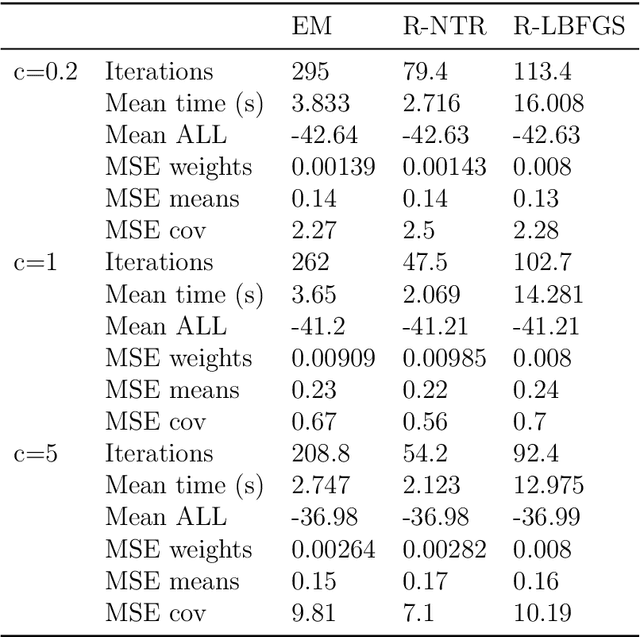

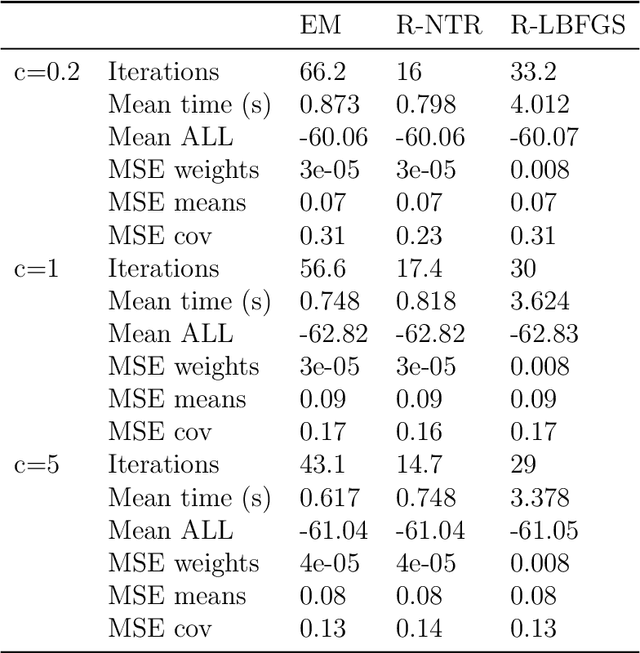

Abstract:Gaussian Mixture Models are a powerful tool in Data Science and Statistics that are mainly used for clustering and density approximation. The task of estimating the model parameters is in practice often solved by the Expectation Maximization (EM) algorithm which has its benefits in its simplicity and low per-iteration costs. However, the EM converges slowly if there is a large share of hidden information or overlapping clusters. Recent advances in Manifold Optimization for Gaussian Mixture Models have gained increasing interest. We introduce a formula for the Riemannian Hessian for Gaussian Mixture Models. On top, we propose a new Riemannian Newton Trust-Region method which outperforms current approaches both in terms of runtime and number of iterations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge