Vivek Muralidharan

Assessing The Potential Of Mid-Sized Language Models For Clinical QA

Apr 24, 2024

Abstract: Large language models, such as GPT-4 and Med-PaLM, have shown impressive performance on clinical tasks; however, they require access to substantial compute, are closed-source, and cannot be deployed on device. Mid-sized models such as BioGPT-large, BioMedLM, LLaMA 2, and Mistral 7B avoid these drawbacks, but their capacity for clinical tasks has been understudied. To help assess their potential for clinical use and help researchers decide which model to use, we compare their performance on two clinical question-answering (QA) tasks: MedQA and consumer query answering. We find that Mistral 7B performs best, winning on all benchmarks and outperforming models trained specifically for the biomedical domain. Although Mistral 7B's MedQA score of 63.0% approaches that of the original Med-PaLM, and it can often produce plausible responses to consumer health queries, room for improvement remains. This study provides the first head-to-head assessment of open-source mid-sized models on clinical tasks.

DRIFT: Deep Reinforcement Learning for Intelligent Floating Platforms Trajectories

Oct 06, 2023

Abstract: This investigation introduces a novel suite based on deep reinforcement learning (DRL) for controlling floating platforms in both simulated and real-world environments. Floating platforms serve as versatile test-beds for emulating microgravity environments on Earth. Our approach addresses system and environmental uncertainties in controlling such platforms by training policies capable of precise maneuvers under dynamic and unpredictable conditions. Leveraging state-of-the-art DRL techniques, our suite achieves robustness, adaptability, and good transferability from simulation to reality. The framework offers fast training times, large-scale testing capabilities, rich visualization options, and ROS bindings for integration with real-world robotic systems. Beyond policy development, the suite provides a comprehensive platform for researchers and is openly available at https://github.com/elharirymatteo/RANS/tree/ICRA24.

Evaluation of Position and Velocity Based Forward Dynamics Compliance Control (FDCC) for Robotic Interactions in Position Controlled Robots

Oct 24, 2022

Abstract: In robotic manipulation, end-effector compliance is an essential precondition for performing contact-rich tasks such as machining, assembly, and human-robot interaction. Most robotic arms are stiff, position-controlled systems at the hardware level, so compliance has to be added through control. Compliance in such systems has recently been achieved using forward dynamics compliance control (FDCC), which, owing to its virtual forward dynamics model, can be implemented on both position- and velocity-controlled robots. This paper evaluates the choice of control interface (and hence the control domain), which, although often considered trivial, matters because the two interfaces behave differently. In some cases, the choice is restricted by the available hardware interface. However, given the option, the velocity-based control interface is the better candidate for compliance control because it yields smoother compliant behaviour and reduces both interaction forces and work done. To support these claims, FDCC is evaluated on the UR10e six-DOF manipulator in velocity and position control modes. The evaluation is based on force-control benchmarking metrics using 3D-printed artefacts. Real experiments favour velocity control over position control.
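The idea of obtaining compliance from a simulated model can be illustrated on a single Cartesian axis. The sketch below is a deliberate simplification and not FDCC itself. FDCC simulates the forward dynamics of a virtual model of the whole manipulator. Here, a hypothetical `VirtualAxis` is only a mass-spring-damper driven by the measured external force. Each control cycle yields both a position command and a velocity command, which is where the choice of interface evaluated in the paper enters. The parameter values and the integrator are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class VirtualAxis:
    """Hypothetical 1-DOF virtual model: mass (kg), stiffness (N/m), damping (N*s/m).

    A simplified stand-in for a virtual forward dynamics model; it is not
    the FDCC implementation evaluated in the paper.
    """
    mass: float
    stiffness: float
    damping: float
    pos: float = 0.0  # virtual position (m)
    vel: float = 0.0  # virtual velocity (m/s)

    def step(self, target: float, f_ext: float, dt: float) -> tuple[float, float]:
        """Advance the virtual model by one control cycle (semi-implicit Euler).

        target: desired position (m); f_ext: measured external force (N).
        Returns (position command, velocity command): a position-controlled
        robot would be sent the first value, a velocity-controlled robot the second.
        """
        f_spring = self.stiffness * (target - self.pos)  # pulls toward the target
        f_damp = -self.damping * self.vel                # dissipates energy
        acc = (f_spring + f_damp + f_ext) / self.mass
        self.vel += acc * dt        # update velocity first ...
        self.pos += self.vel * dt   # ... then position with the new velocity
        return self.pos, self.vel
```

For example, with a constant 10 N push, a stiffness of 500 N/m, and a 2 ms cycle, the virtual axis settles at a 0.02 m offset from the target (f_ext / stiffness), and the velocity command decays to zero.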

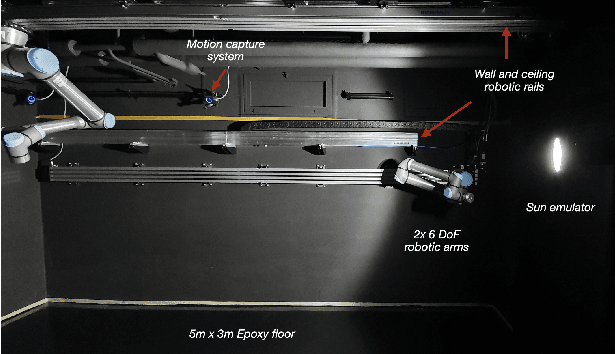

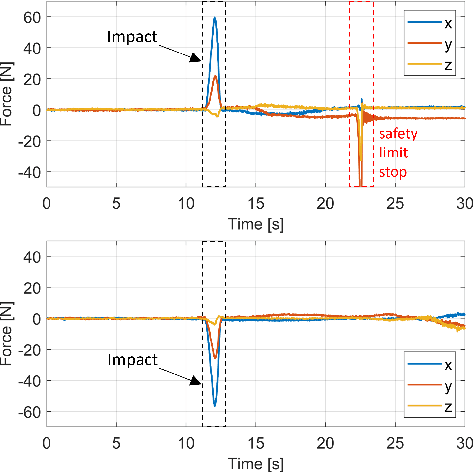

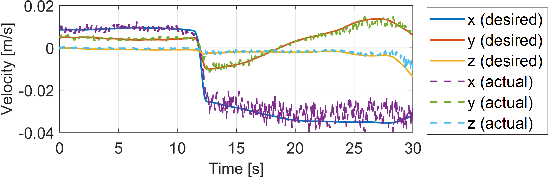

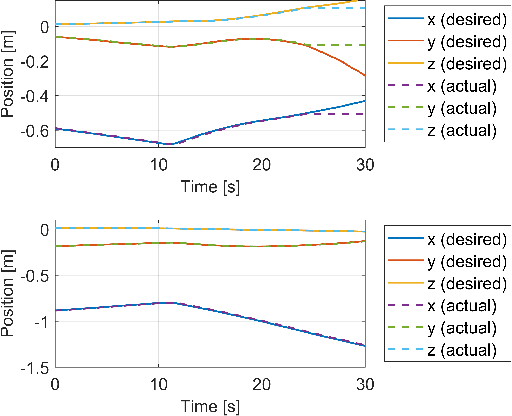

Emulating On-Orbit Interactions Using Forward Dynamics Based Cartesian Motion

Sep 30, 2022

Abstract: The paper presents a novel Hardware-in-the-Loop (HIL) framework for emulating on-orbit interactions with on-ground robotic manipulators. It combines a Virtual Forward Dynamics Model (VFDM) for Cartesian motion control of robotic manipulators with an Orbital Dynamics Simulator (ODS) based on the Clohessy-Wiltshire (CW) model. VFDM-based inverse kinematics (IK) solvers are known to have better motion tracking, path accuracy, and solver convergence than traditional IK solvers; therefore, the VFDM provides stable Cartesian motion for manipulator-based HIL on-orbit emulation. The framework is tested on a ROS-based robotics testbed to emulate two scenarios: free-floating satellite motion and free-floating interaction (collision). Mock-ups of two satellites are mounted on the robots' end-effectors, and the forces acting on them are measured by a built-in F/T sensor on each robotic arm. During the tests, the relative motion of the mock-ups is expressed with respect to a moving observer rotating at a fixed angular velocity in a circular orbit, rather than in the inertial frame. The ODS incorporates the measured force and torque values on the fly and delivers the corresponding satellite motions to the virtual forward dynamics model as online trajectories. The results are comparable to those of other free-floating HIL emulators, and fidelity between the simulated motion and the robot-mounted mock-up motion is confirmed.
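The Clohessy-Wiltshire model describes motion relative to an observer in a circular orbit. The sketch below shows the kind of propagation an orbital dynamics simulator of this type could perform. It rests on several assumptions that are not taken from the paper. It uses the common Hill-frame convention, with x radial, y along-track, and z cross-track. The body is a point mass, with forces already expressed in that frame and torques/attitude ignored. The mean motion is n = sqrt(mu / r^3). The function names and the choice of an RK4 integrator are illustrative.

```python
import math

MU_EARTH = 3.986004418e14  # Earth's gravitational parameter (m^3/s^2)


def mean_motion(radius_m):
    """Angular rate (rad/s) of the reference circular orbit of radius radius_m."""
    return math.sqrt(MU_EARTH / radius_m**3)


def cw_rhs(state, n, force=(0.0, 0.0, 0.0), mass=1.0):
    """Clohessy-Wiltshire equations in the Hill frame (x radial, y along-track, z cross-track).

    state = (x, y, z, vx, vy, vz); force is an external force in newtons,
    e.g. a measured contact force (assumed already rotated into the Hill frame).
    """
    x, y, z, vx, vy, vz = state
    fx, fy, fz = force
    ax = 3.0 * n**2 * x + 2.0 * n * vy + fx / mass
    ay = -2.0 * n * vx + fy / mass
    az = -(n**2) * z + fz / mass
    return (vx, vy, vz, ax, ay, az)


def rk4_step(state, dt, n, force=(0.0, 0.0, 0.0), mass=1.0):
    """One classical Runge-Kutta step, holding the force constant over dt."""
    def shifted(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = cw_rhs(state, n, force, mass)
    k2 = cw_rhs(shifted(state, k1, dt / 2), n, force, mass)
    k3 = cw_rhs(shifted(state, k2, dt / 2), n, force, mass)
    k4 = cw_rhs(shifted(state, k3, dt), n, force, mass)
    return tuple(s + dt / 6.0 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))


def propagate(state, n, t_end, dt, force_fn=None, mass=1.0):
    """Integrate from t = 0 to t_end; force_fn(t, state) may supply forces on the fly."""
    t = 0.0
    for _ in range(int(round(t_end / dt))):
        force = force_fn(t, state) if force_fn else (0.0, 0.0, 0.0)
        state = rk4_step(state, dt, n, force, mass)
        t += dt
    return state
```

With no applied force, choosing an initial along-track velocity of vy = -2 n x0 gives a closed relative ellipse. The body returns to its starting state after one orbital period 2*pi/n, which is a convenient sanity check for such a propagator.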
