Vildan Atalay Aydin

Volumetric Super-Resolution of Multispectral Data

May 14, 2017

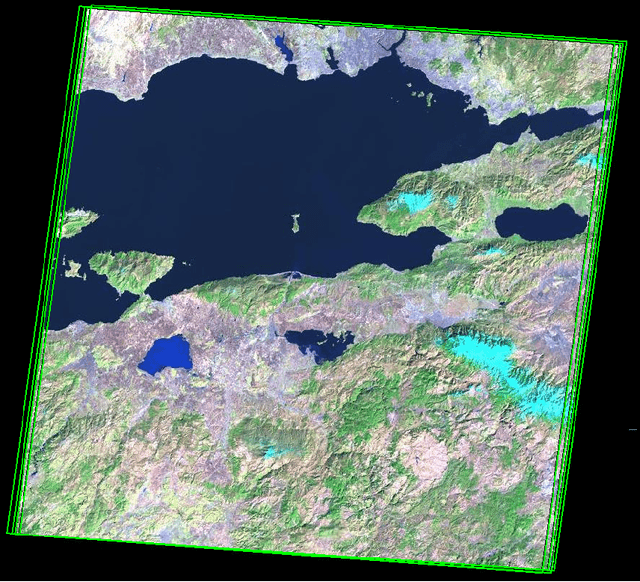

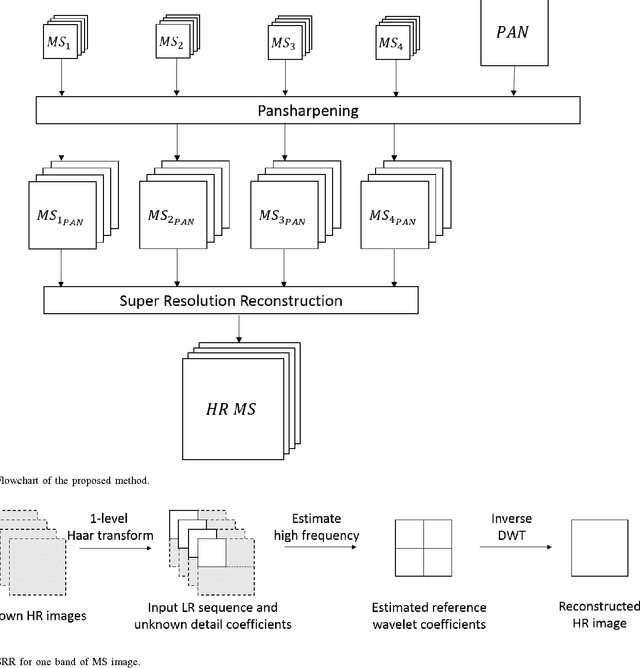

Abstract: Most multispectral remote sensors (e.g. QuickBird, IKONOS, and Landsat 7 ETM+) separately provide low-spatial/high-spectral resolution multispectral (MS) images and high-spatial/low-spectral resolution panchromatic (PAN) images. To reconstruct a high-spatial/high-spectral resolution multispectral image volume, there are two options. The first is to fuse the information in the MS and PAN images (i.e. pansharpening). The second is to apply super-resolution reconstruction (SRR) only to MS images captured on different dates. Existing methods do not exploit the temporal information of MS images and the high spatial resolution of PAN images together to improve the resolution. In this paper, we propose a multiframe SRR algorithm that uses pansharpened MS images. It takes advantage of both the temporal and the spatial information available in multispectral imagery in order to exceed the spatial resolution of the given PAN images. We first apply pansharpening to a set of multispectral images and their corresponding PAN images captured on different dates. Then, we use the pansharpened multispectral images as input to the proposed wavelet-based multiframe SRR method to yield full volumetric SRR. The proposed SRR method is obtained by deriving the subband relations between multitemporal MS volumes. We demonstrate the results on Landsat 7 ETM+ images by comparing our method with conventional techniques.
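The abstract does not name the pansharpening method used in the first step. The block below is therefore only an illustration of what "pansharpening" means. It is a minimal sketch of a generic Brovey-style fusion, a common textbook technique swapped in here as an assumption. Each MS band is upsampled to the PAN grid by nearest-neighbour replication and then scaled by the ratio of PAN to the mean intensity of the MS bands. The function names `upsample_nearest` and `brovey_pansharpen` are hypothetical. The later wavelet-based multiframe SRR step is not shown.

```python
def upsample_nearest(band, factor):
    """Nearest-neighbour upsampling of a 2-D list by an integer factor."""
    rows, cols = len(band) * factor, len(band[0]) * factor
    return [[band[r // factor][c // factor] for c in range(cols)]
            for r in range(rows)]


def brovey_pansharpen(ms_bands, pan, eps=1e-12):
    """Brovey-style fusion (illustrative only, not the paper's stated method).

    Each MS band is upsampled to the PAN grid and multiplied by PAN / I,
    where I is the per-pixel mean of the upsampled MS bands.
    """
    factor = len(pan) // len(ms_bands[0])
    up = [upsample_nearest(b, factor) for b in ms_bands]
    rows, cols = len(pan), len(pan[0])
    fused = [[[0.0] * cols for _ in range(rows)] for _ in up]
    for r in range(rows):
        for c in range(cols):
            intensity = sum(b[r][c] for b in up) / len(up)
            gain = pan[r][c] / (intensity + eps)  # eps guards against division by zero
            for k, b in enumerate(up):
                fused[k][r][c] = b[r][c] * gain
    return fused


# Two 1x1 MS bands fused with a 2x2 PAN image (resolution ratio 2).
ms = [[[2.0]], [[4.0]]]
pan = [[3.0, 6.0], [0.0, 3.0]]
sharp = brovey_pansharpen(ms, pan)
```

In a multitemporal setting, this step would be repeated for the MS/PAN pair of each acquisition date. The resulting pansharpened volumes would then form the input frames for the SRR stage.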

Motion-Compensated Temporal Filtering for Critically-Sampled Wavelet-Encoded Images

May 13, 2017

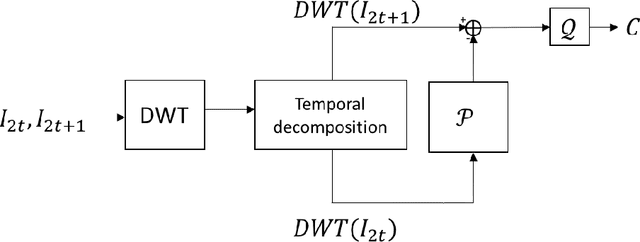

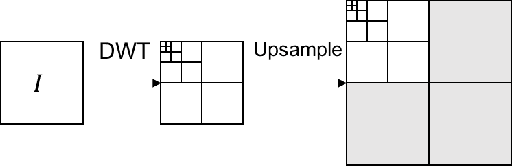

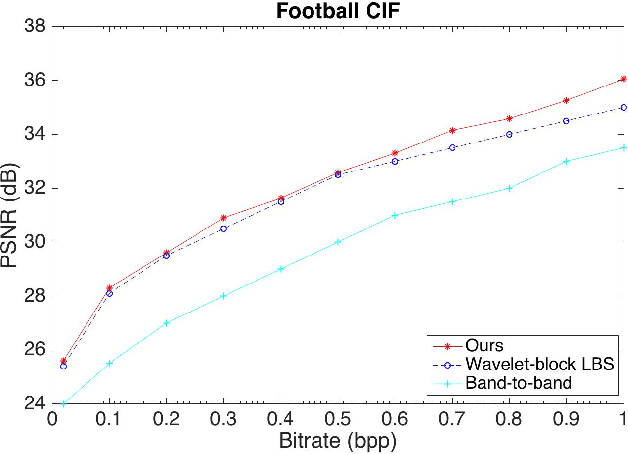

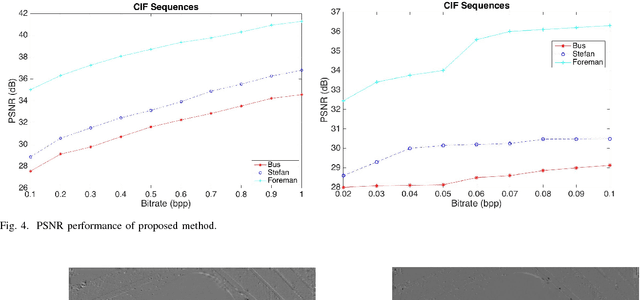

Abstract: We propose a novel motion estimation/compensation (ME/MC) method for wavelet-based (in-band) motion-compensated temporal filtering (MCTF), with application to low-bitrate video coding. Conventional in-band MCTF algorithms require redundancy to overcome the shift-variance problem of critically sampled (complete) discrete wavelet transforms (DWT). In contrast, we perform the ME/MC steps directly on DWT coefficients, avoiding the need for shift invariance. We derive the exact relationships between the DWT subbands of input image sequences. This allows us to omit upsampling, the inverse DWT (IDWT), and the calculation of redundant DWT coefficients, while achieving arbitrary subpixel accuracy without interpolation and high video quality even at very low bitrates. Experimental results demonstrate the accuracy of the proposed method, confirming that our ME/MC model effectively improves video coding quality.
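To make "relationships between DWT subbands" concrete, here is a minimal 1-D sketch. It is not the paper's derivation. It assumes a one-level orthonormal Haar DWT, a known motion of exactly one sample at full resolution (half a coefficient at subband resolution), and zero fill at the left edge. Under these assumptions, the subbands of the shifted signal follow exactly from the subbands of the original, with no IDWT, upsampling, or redundant transform. The motion-estimation step is not shown. The helper names are hypothetical.

```python
import math

SQRT2 = math.sqrt(2.0)


def haar_dwt(x):
    """One-level orthonormal Haar DWT of an even-length 1-D sequence."""
    n = len(x) // 2
    a = [(x[2 * k] + x[2 * k + 1]) / SQRT2 for k in range(n)]
    d = [(x[2 * k] - x[2 * k + 1]) / SQRT2 for k in range(n)]
    return a, d


def inband_shift_by_one(a, d):
    """Subbands of y[n] = x[n - 1], computed directly from the subbands of x.

    a_y[k] = (a[k] + d[k] + a[k-1] - d[k-1]) / 2
    d_y[k] = (a[k-1] - d[k-1] - a[k] - d[k]) / 2
    with a[-1] = d[-1] = 0 (zero fill at the left edge).
    """
    a_y, d_y = [], []
    prev_a, prev_d = 0.0, 0.0
    for ak, dk in zip(a, d):
        a_y.append((ak + dk + prev_a - prev_d) / 2)
        d_y.append((prev_a - prev_d - ak - dk) / 2)
        prev_a, prev_d = ak, dk
    return a_y, d_y


def temporal_highpass(cur, compensated_prev):
    """Haar temporal high-pass between a subband and its compensated predecessor."""
    return [(c - p) / SQRT2 for c, p in zip(cur, compensated_prev)]


# Frame 1 is frame 0 moved right by one sample (half a coefficient).
frame0 = [3.0, 1.0, 4.0, 1.0, 5.0, 9.0, 2.0, 6.0]
frame1 = [0.0] + frame0[:-1]
a0, d0 = haar_dwt(frame0)
a1, d1 = haar_dwt(frame1)
mc_a, mc_d = inband_shift_by_one(a0, d0)
residual = temporal_highpass(a1, mc_a)  # vanishes when the motion model is exact
```

When the compensation is exact, the temporal high-pass band carries no energy. This is the property that MCTF exploits for coding efficiency. Arbitrary (non-half-sample) subpixel motion, which the abstract claims, would need more general relations than this single case.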

Super-Resolution of Wavelet-Encoded Images

May 03, 2017

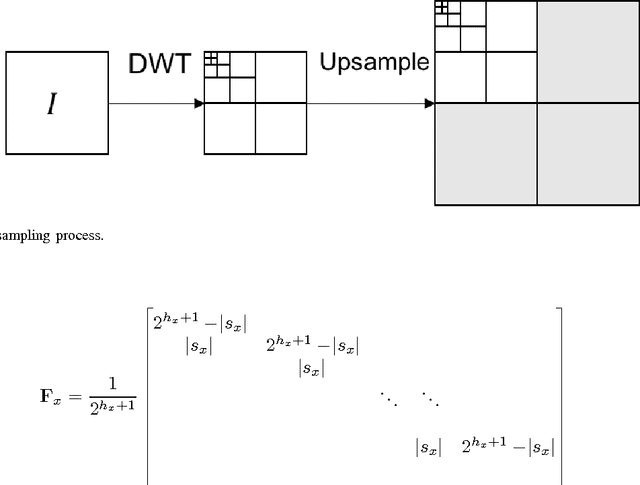

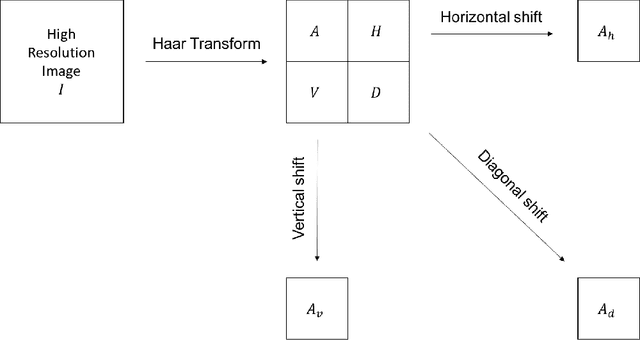

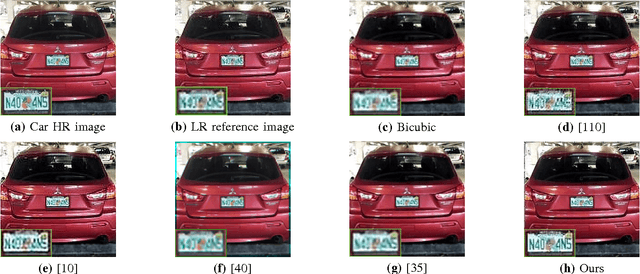

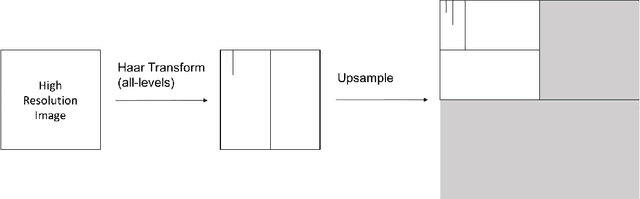

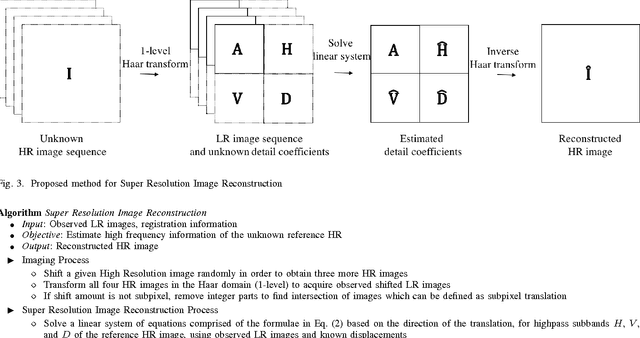

Abstract: Multiview super-resolution image reconstruction (SRIR) is often cast as a resampling problem. Non-redundant data from multiple low-resolution (LR) images are merged on a finer high-resolution (HR) grid, while the effect of the camera point spread function (PSF) is inverted. One major problem with multiview methods is that resampling from nonuniform samples (provided by the LR images) and inverting the PSF are highly nonlinear and ill-posed problems. Nonlinearity and ill-posedness are typically overcome by linearization and regularization, often through an iterative optimization process. These essentially trade off the very information (i.e. high frequencies) that we want to recover. We propose a novel point of view for multiview SRIR. Existing multiview methods reconstruct the entire spectrum of the HR image from the multiple given LR images. Instead, we derive explicit expressions that show how the high-frequency spectra of the unknown HR image are related to the spectra of the LR images. Therefore, by taking any of the LR images as the reference to represent the low-frequency spectra of the HR image, one can reconstruct the super-resolution image by focusing only on the reconstruction of the high-frequency spectra. This is very much like single-image methods, which extrapolate the spectrum of one image. The difference is that we rely on information provided by all other views rather than on prior constraints as in single-image methods (which may not be an accurate source of information). This is made possible by deriving and applying explicit closed-form expressions. These expressions define how the local high-frequency information that we aim to recover for the reference HR image is related to the local low-frequency information in the sequence of views. Results and comparisons with recently published state-of-the-art methods show the superiority of the proposed solution.
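The idea of keeping one LR view as the low-frequency part and recovering only the high-frequency part from another view can be illustrated with a toy 1-D sketch. It is not the authors' closed-form expressions. It assumes the following:

- each LR view is the approximation subband of a one-level orthonormal Haar DWT of the HR signal;
- the second view is shifted by exactly one HR sample, with zero fill;
- there is no noise.

Under these assumptions, the Haar shift relation can be rearranged to give the missing detail subband, and an inverse Haar step rebuilds the HR signal. The function names are hypothetical.

```python
import math

SQRT2 = math.sqrt(2.0)


def haar_approx(x):
    """Approximation (low-pass) subband of a one-level orthonormal Haar DWT."""
    return [(x[2 * k] + x[2 * k + 1]) / SQRT2 for k in range(len(x) // 2)]


def recover_detail(a_ref, a_shifted):
    """Detail subband of the HR signal from two LR views (toy, noise-free).

    Rearranges a_y[k] = (a[k] + d[k] + a[k-1] - d[k-1]) / 2 into
    d[k] = 2*a_y[k] - a[k] - a[k-1] + d[k-1], with a[-1] = d[-1] = 0.
    """
    d, prev_a, prev_d = [], 0.0, 0.0
    for ak, ayk in zip(a_ref, a_shifted):
        dk = 2 * ayk - ak - prev_a + prev_d
        d.append(dk)
        prev_a, prev_d = ak, dk
    return d


def inverse_haar(a, d):
    """Inverse one-level orthonormal Haar DWT."""
    x = []
    for ak, dk in zip(a, d):
        x.extend([(ak + dk) / SQRT2, (ak - dk) / SQRT2])
    return x


hr = [3.0, 1.0, 4.0, 1.0, 5.0, 9.0, 2.0, 6.0]
lr_ref = haar_approx(hr)                  # reference view: low frequencies
lr_view = haar_approx([0.0] + hr[:-1])    # second view, shifted by one HR sample
sr = inverse_haar(lr_ref, recover_detail(lr_ref, lr_view))
```

Because each recovered detail coefficient depends on the previous one, errors propagate along the signal. This toy recursion is therefore not robust to noise. It only shows that the high-frequency band is determined by the other view rather than by a prior.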

Sub-Pixel Registration of Wavelet-Encoded Images

May 01, 2017

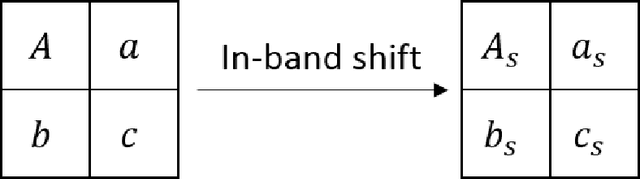

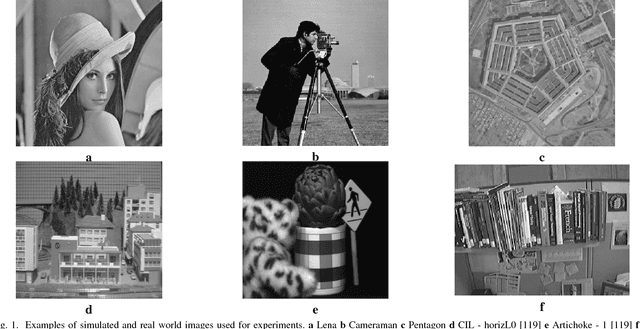

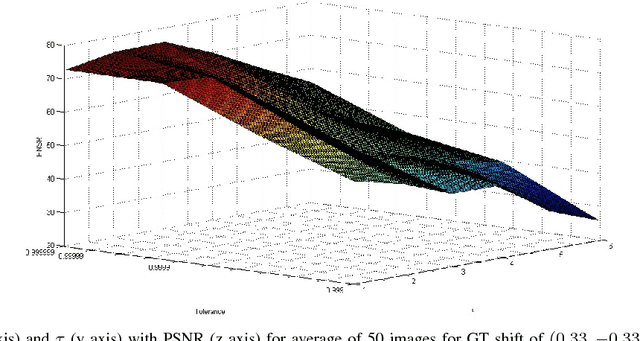

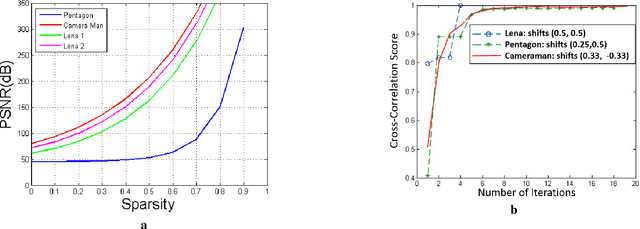

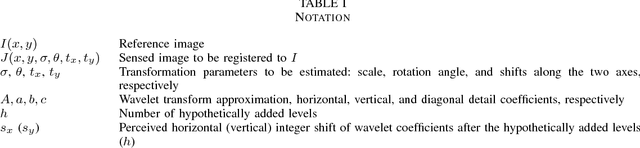

Abstract: Sub-pixel registration is a crucial step for applications such as super-resolution in remote sensing, motion compensation in magnetic resonance imaging, and non-destructive testing in manufacturing, to name a few. Recently, these technologies have been trending towards wavelet-encoded imaging and sparse/compressive sensing. The former plays a crucial role in reducing imaging artifacts, while the latter significantly increases the acquisition speed. In view of these emerging needs of wavelet-encoded imaging applications, we propose a sub-pixel registration method that can achieve direct wavelet-domain registration from a sparse set of coefficients. We make the following contributions: (i) we devise a method of decoupling scale, rotation, and translation parameters in the Haar wavelet domain; (ii) we derive explicit mathematical expressions that define in-band sub-pixel registration in terms of wavelet coefficients; (iii) using the derived expressions, we propose an approach to achieve in-band sub-pixel registration, avoiding back-and-forth transformations; and (iv) our solution remains highly accurate even when only a sparse set of coefficients is used, owing to the localization of signals in a sparse set of wavelet coefficients. We demonstrate the accuracy of our method and show that it outperforms the state of the art on simulated and real data, even when the data are sparse.
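As a rough illustration of in-band sub-pixel translation estimation, consider the minimal 1-D sketch below. It is not the paper's method and covers translation only, not scale or rotation. It assumes the following:

- a one-level orthonormal Haar DWT;
- a sub-sample shift s in [0, 1], modelled by linear interpolation: y[n] = (1 - s) x[n] + s x[n - 1], with zero fill.

Because the DWT is linear, the approximation subband of y equals (1 - s) times that of x plus s times that of x shifted by one sample. The shifted approximation subband can be computed in-band from the subbands of x. A least-squares fit then gives s without any inverse transform. All function names are hypothetical.

```python
import math

SQRT2 = math.sqrt(2.0)


def haar_dwt(x):
    """One-level orthonormal Haar DWT of an even-length 1-D sequence."""
    n = len(x) // 2
    a = [(x[2 * k] + x[2 * k + 1]) / SQRT2 for k in range(n)]
    d = [(x[2 * k] - x[2 * k + 1]) / SQRT2 for k in range(n)]
    return a, d


def approx_shifted_by_one(a, d):
    """In-band approximation subband of x[n - 1]: (a[k] + d[k] + a[k-1] - d[k-1]) / 2."""
    out, prev_a, prev_d = [], 0.0, 0.0
    for ak, dk in zip(a, d):
        out.append((ak + dk + prev_a - prev_d) / 2)
        prev_a, prev_d = ak, dk
    return out


def estimate_subpixel_shift(a_ref, d_ref, a_moved):
    """Least-squares s in a_moved = (1 - s) * a_ref + s * a_shift1 (linear-interpolation model)."""
    a1 = approx_shifted_by_one(a_ref, d_ref)
    num = sum((m - r) * (p - r) for r, p, m in zip(a_ref, a1, a_moved))
    den = sum((p - r) ** 2 for r, p in zip(a_ref, a1))
    return num / den  # den > 0 for any signal that changes under a one-sample shift


x = [3.0, 1.0, 4.0, 1.0, 5.0, 9.0, 2.0, 6.0]
s_true = 0.3
# Moved signal: linear interpolation between x[n] and x[n - 1] (zero fill).
y = [(1 - s_true) * x[n] + s_true * (x[n - 1] if n > 0 else 0.0)
     for n in range(len(x))]
a_x, d_x = haar_dwt(x)
a_y, _ = haar_dwt(y)
s_hat = estimate_subpixel_shift(a_x, d_x, a_y)  # recovers s_true up to rounding
```

Note that only the approximation subband of the moved signal is used here. Real images would need a 2-D transform, a model that also handles scale and rotation, and robustness to noise.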
