Vesa Hirvisalo

Positioning Fog Computing for Smart Manufacturing

May 22, 2022

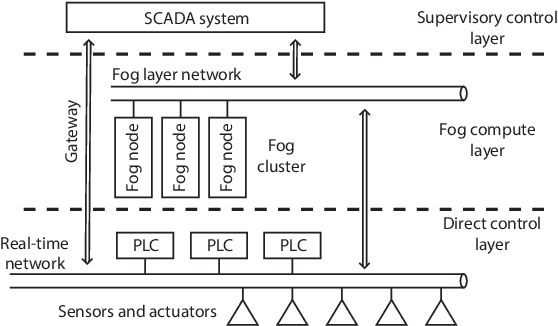

Abstract: We study machine learning systems for real-time industrial quality control. In many factory systems, production processes must be continuously controlled in order to maintain product quality. Especially challenging are the systems that must balance in real time between stringent resource consumption constraints and the risk of defective end products. There is a need for automated quality control systems, as human control is tedious and error-prone. We see machine learning as a viable choice for developing automated quality control systems, but integrating such systems with existing factory automation remains a challenge. In this paper, we propose introducing a new fog computing layer into the standard hierarchy of automation control to meet the needs of machine-learning-driven quality control.

A machine learning environment for evaluating autonomous driving software

Mar 07, 2020

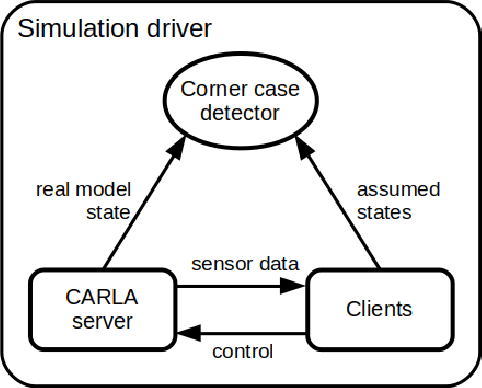

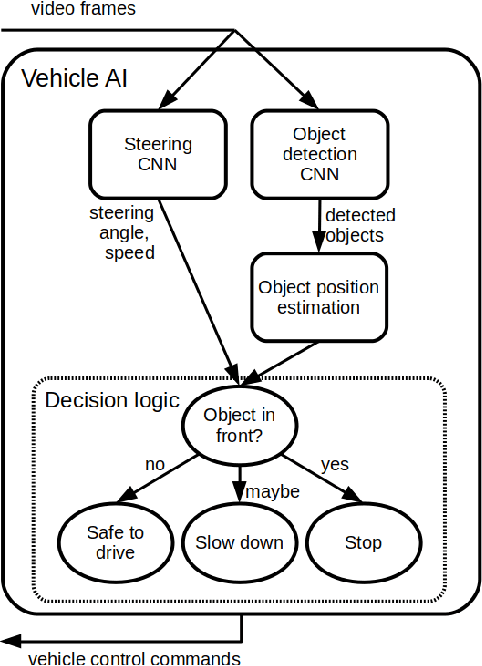

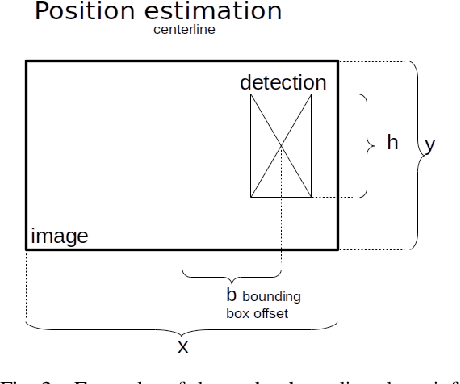

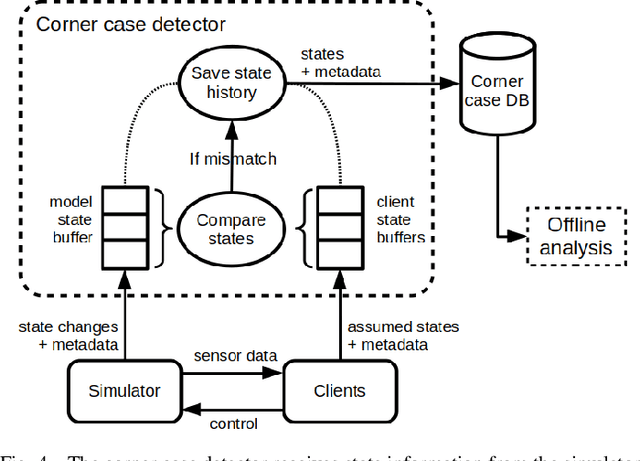

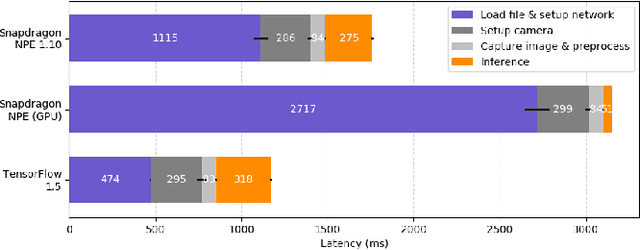

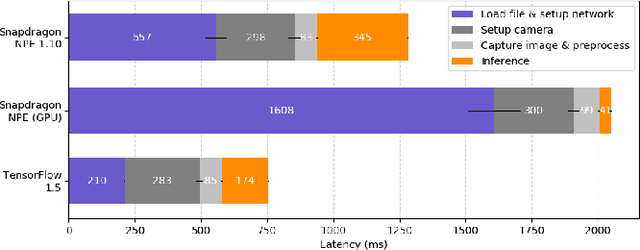

Abstract: Autonomous vehicles need safe development and testing environments. Many traffic scenarios cannot be tested in the real world. We see hybrid photorealistic simulation as a viable tool for developing AI (artificial intelligence) software for autonomous driving. We present a machine learning environment for detecting autonomous vehicle corner case behavior. Our environment is based on connecting the CARLA simulation software to the TensorFlow machine learning framework and custom AI client software. The AI client software receives data from a simulated world via virtual sensors and transforms the data into information using machine learning models. The AI clients control vehicles in the simulated world. Our environment compares the state assumed by the vehicle AIs against the ground truth state derived from the simulation model. Our system can search for corner cases where the vehicle AI is unable to correctly understand the situation. In our paper, we present the overall hybrid simulator architecture and compare different configurations. We present performance measurements from real setups and outline the main parameters affecting the hybrid simulator performance.

* 8 pages, 13 figures
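
The comparison between the state assumed by a vehicle AI and the simulator's ground truth can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the `ObjectState` type, the `find_corner_cases` function, the per-frame dictionaries, and the 2-metre error threshold are hypothetical and do not come from the paper, and no CARLA or TensorFlow calls are used.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class ObjectState:
    """Hypothetical 2D position of one object in world coordinates (metres)."""
    x: float
    y: float


def find_corner_cases(perceived, ground_truth, max_error_m=2.0):
    """Flag frames where the AI's assumed state disagrees with ground truth.

    perceived, ground_truth: lists (one entry per frame) of dicts mapping an
    object id to its ObjectState. The threshold is an illustrative assumption.
    Returns a list of (frame_index, object_id, reason) tuples.
    """
    cases = []
    for frame, (seen, truth) in enumerate(zip(perceived, ground_truth)):
        for obj_id, true_state in truth.items():
            seen_state = seen.get(obj_id)
            if seen_state is None:
                # The object exists in the simulation but the AI did not report it.
                cases.append((frame, obj_id, "missed"))
                continue
            error = hypot(seen_state.x - true_state.x, seen_state.y - true_state.y)
            if error > max_error_m:
                # The AI reported the object, but too far from its true position.
                cases.append((frame, obj_id, "mislocated"))
    return cases


truth = [
    {"car": ObjectState(0.0, 0.0)},
    {"car": ObjectState(1.0, 0.0), "pedestrian": ObjectState(5.0, 5.0)},
]
seen = [
    {"car": ObjectState(0.5, 0.0)},
    {"car": ObjectState(4.0, 0.0)},
]
corner_cases = find_corner_cases(seen, truth)
```

In this toy run, the first frame is within tolerance, while the second frame yields two flagged cases: a mislocated car and a missed pedestrian.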

Latency and Throughput Characterization of Convolutional Neural Networks for Mobile Computer Vision

Mar 26, 2018

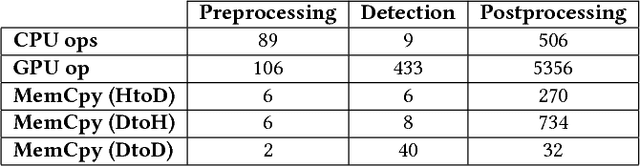

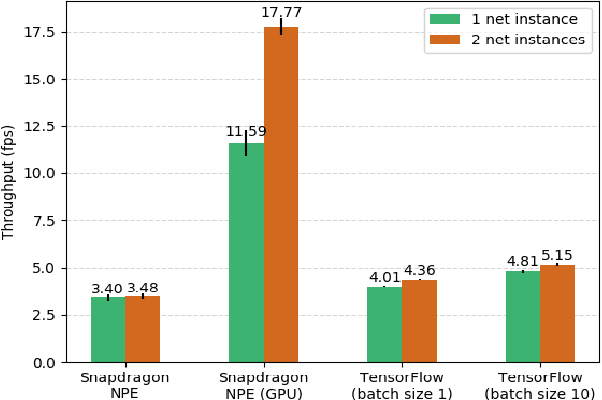

Abstract: We study performance characteristics of convolutional neural networks (CNNs) for mobile computer vision systems. CNNs have proven to be a powerful and efficient approach to implementing such systems. However, the system performance depends largely on the utilization of hardware accelerators, which are able to speed up the execution of the underlying mathematical operations tremendously through massive parallelism. Our contribution is a performance characterization of multiple CNN-based models for object recognition and detection with several different hardware platforms and software frameworks, using both local (on-device) and remote (network-side server) computation. The measurements are conducted using real workloads and real processing platforms. On the platform side, we concentrate especially on TensorFlow and TensorRT. Our measurements include embedded processors found on mobile devices and high-performance processors that can be used on the network side of mobile systems. We show that there exist significant latency-throughput trade-offs, but the behavior is very complex. We demonstrate and discuss several factors that affect the performance and yield this complex behavior.
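
One common source of a latency-throughput trade-off is batching, which a toy model can illustrate. The sketch below assumes a fixed per-batch overhead plus a constant per-image cost; the function names and the 8 ms and 2 ms figures are hypothetical values chosen for illustration, not measurements from the paper, and real accelerators behave in a far more complex way, as the abstract notes.

```python
def batch_latency_ms(batch_size, overhead_ms=8.0, per_item_ms=2.0):
    """Time to process one batch under an assumed linear cost model."""
    return overhead_ms + per_item_ms * batch_size


def throughput_per_s(batch_size, overhead_ms=8.0, per_item_ms=2.0):
    """Images processed per second when batches run back to back."""
    return 1000.0 * batch_size / batch_latency_ms(batch_size, overhead_ms, per_item_ms)


# Larger batches amortize the fixed overhead (higher throughput),
# but every image waits for the whole batch (higher latency).
for batch_size in (1, 8, 32):
    print(batch_size, batch_latency_ms(batch_size), round(throughput_per_s(batch_size), 1))
```

Under these assumed numbers, moving from a batch of 1 to a batch of 8 raises latency from 10 ms to 24 ms while throughput grows from 100 to roughly 333 images per second.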
