Valentin Mercier

Toulouse INP, IRIT, EPE UT

Toward an Operational GNN-Based Multimesh Surrogate for Fast Flood Forecasting

Apr 03, 2026

Abstract: Operational flood forecasting still relies on high-fidelity two-dimensional hydraulic solvers, but their runtime can be prohibitive for rapid decision support on large urban floodplains. In parallel, AI-based surrogate models have shown strong potential in several areas of computational physics for accelerating otherwise expensive high-fidelity simulations. We address this issue on the lower Têt River (France), starting from a production-grade Telemac2D model defined on a high-resolution unstructured finite-element mesh with more than $4\times 10^5$ nodes. From this setup, we build a learning-ready database of synthetic but operationally grounded flood events covering several representative hydrograph families and peak discharges. On top of this database, we develop a graph-neural surrogate based on projected meshes and multimesh connectivity. The projected-mesh strategy keeps training tractable while preserving high-fidelity supervision from the original Telemac simulations, and the multimesh construction enlarges the effective spatial receptive field without increasing network depth. We further study the effect of an explicit discharge feature $Q(t)$ and of pushforward training for long autoregressive rollouts. The experiments show that conditioning on $Q(t)$ is essential in this boundary-driven setting, that multimesh connectivity brings additional gains once the model is properly conditioned, and that pushforward further improves rollout stability. Among the tested configurations, the combination of $Q(t)$, multimesh connectivity, and pushforward provides the best overall results. These gains are observed both on hydraulic variables over the surrogate mesh and on inundation maps interpolated onto a common $25\,\mathrm{m}$ regular grid and compared against the original high-resolution Telemac solution. 
On the studied case, the learned surrogate produces 6-hour predictions in about $0.4\,\mathrm{s}$ on a single NVIDIA A100 GPU, compared with about $180\,\mathrm{min}$ on 56 CPU cores for the reference simulation. These results support graph-based surrogates as practical complements to industrial hydraulic solvers for operational flood mapping.
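
The claim that multimesh connectivity enlarges the receptive field without adding depth can be illustrated on a toy graph. The sketch below is not the paper's construction: it assumes a 1D chain of 17 nodes, and the helper names `chain_edges` and `hops` are hypothetical. It merges edges from progressively coarser "meshes" over the same node set and counts how many message-passing steps (graph hops) separate two nodes.

```python
from collections import deque


def chain_edges(n_nodes, stride):
    """Undirected edges (i, i + stride) on a 1D chain; a stand-in for one mesh level."""
    return {(i, i + stride) for i in range(0, n_nodes - stride, stride)}


def hops(n_nodes, edges, source, target):
    """Breadth-first search: minimum number of edges between source and target."""
    adjacency = {i: set() for i in range(n_nodes)}
    for a, b in edges:
        adjacency[a].add(b)
        adjacency[b].add(a)
    distance = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        if node == target:
            return distance[node]
        for neighbour in adjacency[node]:
            if neighbour not in distance:
                distance[neighbour] = distance[node] + 1
                queue.append(neighbour)
    return None  # target not reachable


n = 17  # toy size, chosen so strides 1, 4 and 16 all fit (assumption)
fine = chain_edges(n, 1)
# Multimesh: union of the fine edges with edges from two coarser levels.
multimesh = fine | chain_edges(n, 4) | chain_edges(n, 16)

fine_hops = hops(n, fine, 0, n - 1)
multi_hops = hops(n, multimesh, 0, n - 1)
print(f"fine mesh only: {fine_hops} hops, multimesh: {multi_hops} hops")
```

On the fine chain alone, information needs as many message-passing layers as there are edges between the two end nodes; with the coarse edges added, the same pair is directly connected, so a network of fixed depth sees much farther.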

Two-level deep domain decomposition method

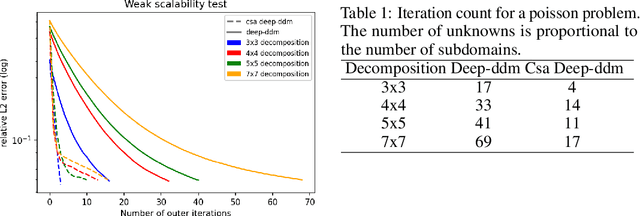

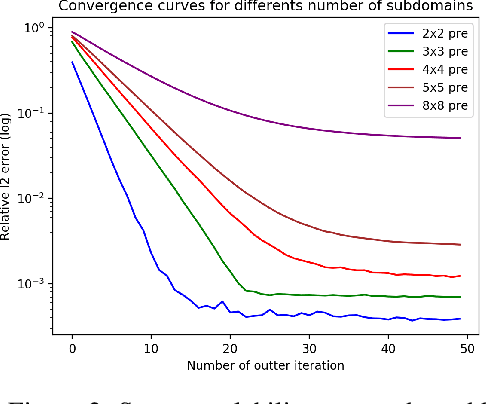

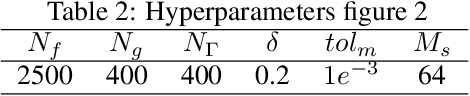

Aug 22, 2024

Abstract: This study presents a two-level Deep Domain Decomposition Method (Deep-DDM) augmented with a coarse-level network for solving boundary value problems using physics-informed neural networks (PINNs). The addition of the coarse-level network improves scalability and convergence rates compared to the single-level method. Tested on a Poisson equation with Dirichlet boundary conditions, the two-level Deep-DDM demonstrates superior performance, maintaining efficient convergence regardless of the number of subdomains. This advance provides a more scalable and effective approach to solving complex partial differential equations with machine learning.
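
For context, a generic PINN objective for a Poisson problem with Dirichlet data can be written as follows. This is the standard textbook form, not necessarily the exact loss used in the paper; the sign convention $-\Delta u = f$, the boundary data $g$, the collocation points $x_i$, $x_j$, and the weight $\lambda$ are all assumptions introduced here for illustration.

$$
\mathcal{L}(\theta) = \frac{1}{N_r}\sum_{i=1}^{N_r}\bigl|-\Delta u_\theta(x_i) - f(x_i)\bigr|^2 + \frac{\lambda}{N_b}\sum_{j=1}^{N_b}\bigl|u_\theta(x_j) - g(x_j)\bigr|^2, \qquad x_i \in \Omega,\; x_j \in \partial\Omega .
$$

In a domain decomposition setting, one such loss would typically be minimized per subdomain, with the boundary term on interior interfaces fed by the neighbouring subdomains' current solutions.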

A Block-Coordinate Approach of Multi-level Optimization with an Application to Physics-Informed Neural Networks

May 25, 2023

Abstract: Multi-level methods are widely used for the solution of large-scale problems, because of their computational advantages and their exploitation of the complementarity between the sub-problems involved. After a re-interpretation of multi-level methods from a block-coordinate point of view, we propose a multi-level algorithm for the solution of nonlinear optimization problems and analyze its evaluation complexity. We apply it to the solution of partial differential equations using physics-informed neural networks (PINNs) and show on a few test problems that the approach results in better solutions and significant computational savings.

A coarse space acceleration of deep-DDM

Dec 07, 2021

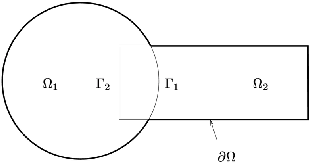

Abstract: The use of deep learning methods for solving PDEs is a rapidly expanding field. In particular, physics-informed neural networks (PINNs), which sample the physical domain and use a loss function that penalizes violation of the partial differential equation, have shown great potential. Yet, to address the large-scale problems encountered in real applications and to compete with existing numerical methods for PDEs, it is important to design parallel algorithms with good scalability properties. In the vein of traditional domain decomposition methods (DDM), we consider the recently proposed deep-ddm approach. We present an extension of this method that relies on a coarse space correction, similar to what is done in traditional DDM solvers. Our investigations show that the coarse correction alleviates the deterioration of the solver's convergence as the number of subdomains increases, thanks to an instantaneous exchange of information between subdomains at each iteration. Experimental results demonstrate that our approach induces a remarkable acceleration of the original deep-ddm method, at a reduced additional computational cost.
