Udi Apsel

Lifted Message Passing for the Generalized Belief Propagation

Oct 05, 2016

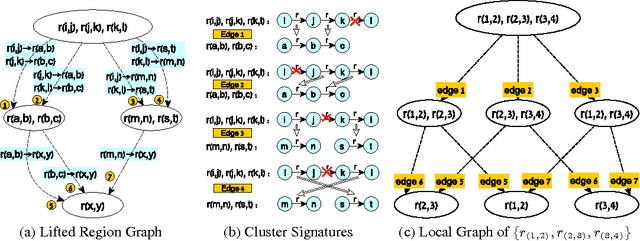

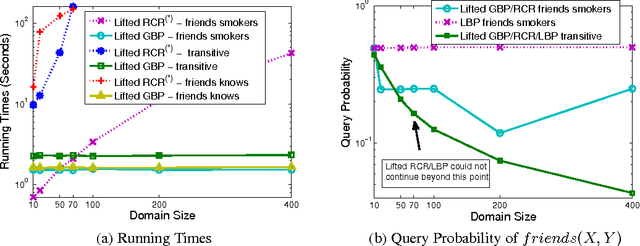

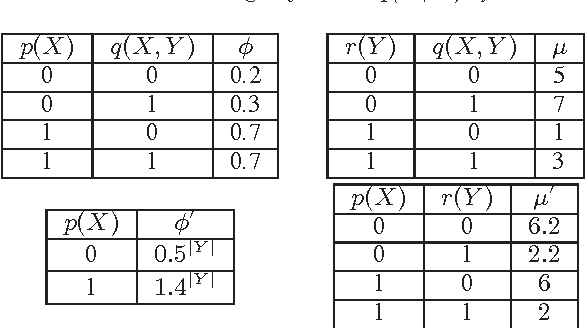

Abstract: We introduce the lifted Generalized Belief Propagation (GBP) message passing algorithm for computing sum-product queries in Probabilistic Relational Models (e.g., Markov logic networks). The algorithm forms a compact region graph and establishes a modified version of message passing that mimics the behavior of GBP in the corresponding ground model. The compact graph is obtained by exploiting a graphical representation of clusters, which reduces cluster symmetry detection to isomorphism tests on small local graphs. The framework is thus capable of handling complex models while remaining independent of domain size.
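
To make the idea of grouping clusters by isomorphism tests concrete, here is a minimal Python sketch. It assumes a hypothetical encoding in which each cluster is a small graph with labeled nodes (e.g., predicate names) and an undirected edge set; this is not the paper's actual cluster representation, and the brute-force permutation search is only reasonable because the local graphs are small.

```python
from itertools import permutations

# Hypothetical cluster encoding (an assumption, not the paper's format):
# (node_labels, edges) where node_labels is a tuple of strings and edges is a
# frozenset of 2-element frozensets of node indices.

def isomorphic(g, h):
    """Brute-force labeled-graph isomorphism test; fine only for small graphs."""
    labels_g, edges_g = g
    labels_h, edges_h = h
    # Cheap invariants first: node count, edge count, label multiset.
    if len(labels_g) != len(labels_h) or len(edges_g) != len(edges_h):
        return False
    if sorted(labels_g) != sorted(labels_h):
        return False
    n = len(labels_g)
    for perm in permutations(range(n)):
        # perm[i] is the node of h that node i of g maps to; labels must agree.
        if any(labels_g[i] != labels_h[perm[i]] for i in range(n)):
            continue
        mapped = frozenset(frozenset(perm[i] for i in e) for e in edges_g)
        if mapped == edges_h:
            return True
    return False

def group_symmetric(clusters):
    """Partition named clusters into classes of mutually isomorphic graphs."""
    reps, classes = [], []
    for name, g in clusters.items():
        for rep, members in zip(reps, classes):
            if isomorphic(g, rep):
                members.append(name)
                break
        else:
            # No existing class matched: this cluster starts a new one.
            reps.append(g)
            classes.append([name])
    return classes

def edges(*pairs):
    return frozenset(frozenset(p) for p in pairs)

# Toy clusters with hypothetical predicate labels.
c1 = (("Smokes", "Friends", "Smokes"), edges((0, 1), (1, 2)))
c2 = (("Friends", "Smokes", "Smokes"), edges((0, 1), (0, 2)))
c3 = (("Smokes", "Friends", "Smokes"), edges((0, 1)))
print(group_symmetric({"c1": c1, "c2": c2, "c3": c3}))  # prints [['c1', 'c2'], ['c3']]
```

Only one representative per class would need to carry messages in a compact graph; in practice a dedicated graph library's isomorphism routine would replace the brute-force search.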

Exploiting Uniform Assignments in First-Order MPE

Oct 16, 2012

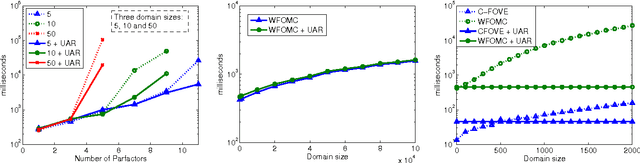

Abstract: The MPE (Most Probable Explanation) query plays an important role in probabilistic inference. MPE solution algorithms for probabilistic relational models essentially adapt existing belief assessment methods, replacing summation with maximization. But the rich structure and symmetries captured by relational models, together with the properties of the maximization operator, offer an opportunity for additional simplification with potentially significant computational ramifications. Specifically, these models often have groups of variables that define symmetric distributions over some population of formulas, and the maximizing choice for different elements of such a group is the same. If we can recognize this ahead of time, we can significantly reduce the size of the model by eliminating a potentially large portion of its random variables. This paper defines the notion of uniformly assigned and partially uniformly assigned sets of variables, shows how these sets can be recognized efficiently, and shows how the model can be greatly simplified, with little computational effort, once they are recognized. We demonstrate the effectiveness of these ideas empirically on a number of models.
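
A minimal Python sketch of why uniform assignments pay off, assuming a toy exchangeable model whose scoring function and weights `w` and `v` are hypothetical rather than taken from the paper. It checks by brute force that the ground MPE coincides with the better of just two uniform assignments; recognizing such sets without this enumeration is what the paper addresses, and the sketch does not attempt it.

```python
from itertools import product

# Hypothetical symmetric (exchangeable) model over n boolean variables:
# log-score = w * (#true) + v * (#pairs that agree). With v >= 0 the
# maximizer is uniform, which is the property this sketch relies on.

def score(x, w, v):
    n = len(x)
    agree = sum(1 for i in range(n) for j in range(i + 1, n) if x[i] == x[j])
    return w * sum(x) + v * agree

def brute_force_mpe(n, w, v):
    """Ground MPE: search all 2**n assignments."""
    return max(product((0, 1), repeat=n), key=lambda x: score(x, w, v))

def uniform_mpe(n, w, v):
    """Reduced MPE: once the set is known to be uniformly assigned,
    only the two uniform assignments need to be compared."""
    return max([(0,) * n, (1,) * n], key=lambda x: score(x, w, v))

print(brute_force_mpe(8, 0.5, 0.3) == uniform_mpe(8, 0.5, 0.3))  # True
```

The reduction turns a search over 2**n assignments into a comparison of two, but it is only valid once uniformity has been established for the model at hand.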

Extended Lifted Inference with Joint Formulas

Feb 14, 2012

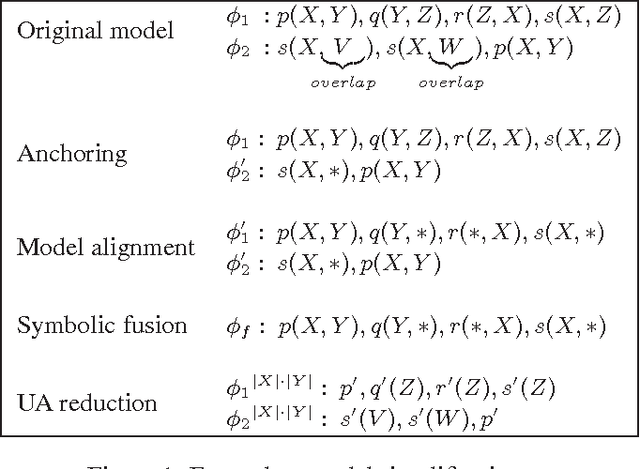

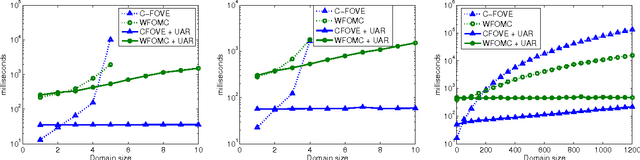

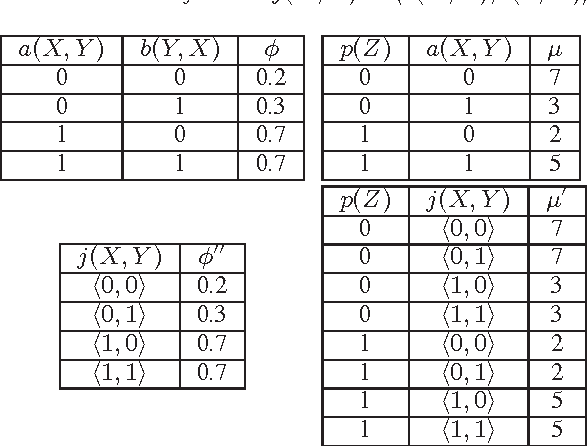

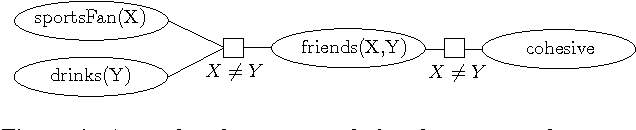

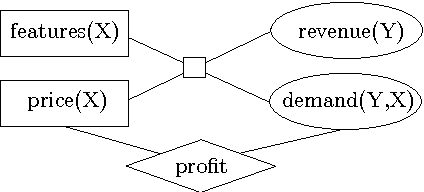

Abstract: The First-Order Variable Elimination (FOVE) algorithm allows exact inference to be applied directly to probabilistic relational models, and has proven vastly superior to applying standard inference methods to a grounded propositional model. Still, FOVE operators can only be applied under restricted conditions, often forcing one to resort to propositional inference. This paper aims to extend the applicability of FOVE by providing two new model conversion operators: the first and primary one is joint formula conversion, and the second is just-different counting conversion. These new operations allow efficient inference methods to be applied directly to relational models where no existing efficient method could previously be applied. In addition, aided by these capabilities, we show how to adapt FOVE to provide exact solutions to Maximum Expected Utility (MEU) queries over relational models for decision-making under uncertainty. Experimental evaluations show that our algorithms provide significant speedups over the alternatives.
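
The abstract does not detail joint formula conversion or just-different counting conversion, so the following Python sketch illustrates only the basic counting idea that FOVE-style counting operators build on (counting formulas, as introduced in C-FOVE). It assumes a toy model over n exchangeable boolean atoms with hypothetical weights `w` (per true atom) and `v` (per pair of true atoms). Summing over the count k with multiplicity C(n, k) replaces 2**n ground terms with n + 1 terms.

```python
from itertools import product
from math import comb, exp

def ground_partition(n, w, v):
    """Propositional inference: enumerate all 2**n ground assignments."""
    total = 0.0
    for x in product((0, 1), repeat=n):
        pairs_true = sum(x[i] * x[j] for i in range(n) for j in range(i + 1, n))
        total += exp(w * sum(x) + v * pairs_true)
    return total

def counted_partition(n, w, v):
    """Lifted counting: the weight depends only on k = #true atoms,
    and comb(n, k) ground assignments share each value of k."""
    return sum(comb(n, k) * exp(w * k + v * comb(k, 2)) for k in range(n + 1))

print(ground_partition(10, 0.2, -0.1), counted_partition(10, 0.2, -0.1))
```

Both functions compute the same partition function up to floating-point rounding; only the counted version scales to large domains, which is the motivation for applying FOVE operators instead of grounding.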