Tyler Smith

Application and Validation of Geospatial Foundation Model Data for the Prediction of Health Facility Programmatic Outputs -- A Case Study in Malawi

Oct 29, 2025

Abstract: The reliability of routine health data in low- and middle-income countries (LMICs) is often constrained by reporting delays and incomplete coverage, necessitating the exploration of novel data sources and analytics. Geospatial Foundation Models (GeoFMs) offer a promising avenue by synthesizing diverse spatial, temporal, and behavioral data into mathematical embeddings that can be efficiently used for downstream prediction tasks. This study evaluated the predictive performance of three GeoFM embedding sources, Google Population Dynamics Foundation Model (PDFM), Google AlphaEarth (derived from satellite imagery), and mobile phone call detail records (CDR), for modeling 15 routine health programmatic outputs in Malawi, and compared their utility to traditional geospatial interpolation methods. We used XGBoost models on data from 552 health catchment areas (January 2021-May 2023), assessing performance with R² under an 80/20 training and test data split, with 5-fold cross-validation applied within the training set. While predictive performance was mixed, the embedding-based approaches improved upon baseline geostatistical methods for 13 of 15 (87%) indicators tested. A Multi-GeoFM model integrating all three embedding sources produced the most robust predictions, achieving average 5-fold cross-validated R² values for indicators such as population density (0.63), new HIV cases (0.57), and child vaccinations (0.47), and test set R² of 0.64, 0.68, and 0.55, respectively. Prediction was poor for targets with low primary data availability, such as TB and malnutrition cases. These results demonstrate that GeoFM embeddings provide a modest predictive improvement for select health and demographic outcomes in an LMIC context. We conclude that the integration of multiple GeoFM sources is an efficient and valuable tool for supplementing and strengthening constrained routine health information systems.
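The evaluation protocol named in the abstract (an 80/20 train/test split, 5-fold cross-validation inside the training set, R² as the metric) can be sketched as follows. This is only an illustration under stated assumptions: the data are synthetic, the embedding width of 16 is hypothetical, and a closed-form ridge regression stands in for XGBoost so that the example needs nothing beyond NumPy. Only the count of 552 catchment areas comes from the abstract.

```python
import numpy as np

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)
    return 1.0 - ss_res / ss_tot

def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression (a stand-in for XGBoost, not the study's model)."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc = X - x_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ (y - y_mean))
    return w, y_mean - x_mean @ w

def predict(model, X):
    w, b = model
    return X @ w + b

rng = np.random.default_rng(0)
n_areas, n_dims = 552, 16          # 552 from the abstract; 16 is a hypothetical width
X = rng.standard_normal((n_areas, n_dims))                          # synthetic embeddings
y = X @ rng.standard_normal(n_dims) + rng.standard_normal(n_areas)  # synthetic target

# 80/20 split: hold out one fifth of the areas as the test set.
perm = rng.permutation(n_areas)
n_test = n_areas // 5
test_idx, train_idx = perm[:n_test], perm[n_test:]

# 5-fold cross-validation on the training portion only.
folds = np.array_split(train_idx, 5)
cv_scores = []
for k in range(5):
    val_idx = folds[k]
    fit_idx = np.concatenate([folds[j] for j in range(5) if j != k])
    model = fit_ridge(X[fit_idx], y[fit_idx])
    cv_scores.append(r2_score(y[val_idx], predict(model, X[val_idx])))

# Refit on all training data and score once on the held-out test set.
final_model = fit_ridge(X[train_idx], y[train_idx])
test_r2 = r2_score(y[test_idx], predict(final_model, X[test_idx]))
print("mean CV R2:", np.mean(cv_scores), "test R2:", test_r2)
```

Reporting both the mean cross-validated score and the single test-set score, as the abstract does, keeps the test set untouched during model fitting and selection.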

Compressive Sensing with Low Precision Data Representation: Theory and Applications

Jun 06, 2018

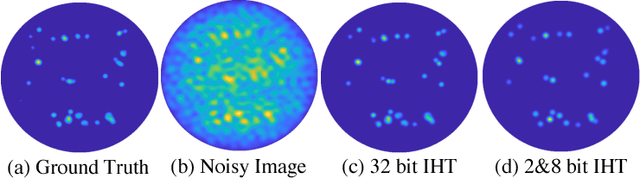

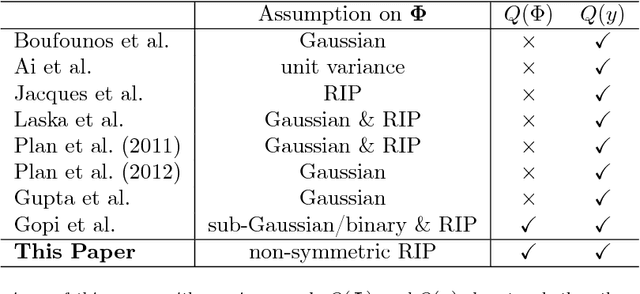

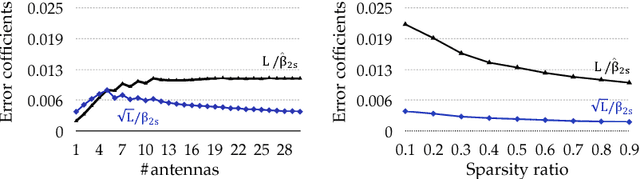

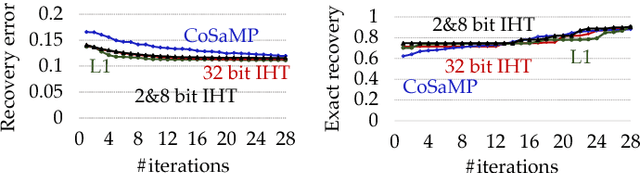

Abstract: Modern scientific instruments produce vast amounts of data, which can overwhelm the processing ability of computer systems. Lossy compression of data is an intriguing solution, but comes with its own dangers, such as potential signal loss, and the need for careful parameter optimization. In this work, we focus on a setting where this problem is especially acute, compressive sensing frameworks for radio astronomy, and ask: Can the precision of the data representation be lowered for all inputs, with both recovery guarantees and practical performance? Our first contribution is a theoretical analysis of the Iterative Hard Thresholding (IHT) algorithm when all input data, that is, the measurement matrix and the observation, are quantized aggressively to as little as 2 bits per value. Under reasonable constraints, we show that there exists a variant of low precision IHT that can still provide recovery guarantees. The second contribution is an analysis of our general quantized framework tailored to radio astronomy, showing that its conditions are satisfied in this case. We evaluate our approach using CPU and FPGA implementations, and show that it can achieve up to a 9.19x speedup with negligible loss of recovery quality, on real telescope data.
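To make the setting concrete, the sketch below runs standard Iterative Hard Thresholding on a synthetic sparse recovery problem, once with full-precision inputs and once with the measurement matrix and observation quantized to 2 bits per value. It is an illustration only and not the low precision IHT variant analyzed in the paper: the deterministic uniform quantizer, the step size, the iteration count, and the problem dimensions are all assumptions chosen for the example, and no recovery guarantee is implied for the quantized run.

```python
import numpy as np

def quantize(x, bits=2):
    """Uniform quantizer (assumed scheme): round to 2**bits evenly spaced
    levels spanning [-max|x|, +max|x|]."""
    scale = np.max(np.abs(x))
    if scale == 0:
        return x.copy()
    levels = 2 ** bits - 1
    # Map [-scale, scale] to [0, levels], round to the nearest level, map back.
    q = np.round((x / scale + 1.0) / 2.0 * levels)
    return (q / levels * 2.0 - 1.0) * scale

def hard_threshold(x, s):
    """Keep the s largest-magnitude entries of x (s >= 1) and zero the rest."""
    out = np.zeros_like(x)
    idx = np.argsort(np.abs(x))[-s:]
    out[idx] = x[idx]
    return out

def iht(A, y, s, n_iter=200, step=None):
    """Standard IHT: a gradient step on ||y - Ax||^2 followed by hard thresholding."""
    if step is None:
        step = 1.0 / np.linalg.norm(A, 2) ** 2  # inverse squared spectral norm
    x = np.zeros(A.shape[1])
    for _ in range(n_iter):
        x = hard_threshold(x + step * A.T @ (y - A @ x), s)
    return x

# Synthetic problem (hypothetical sizes): m measurements of an s-sparse signal.
rng = np.random.default_rng(0)
m, n, s = 128, 256, 5
A = rng.standard_normal((m, n)) / np.sqrt(m)
x_true = np.zeros(n)
x_true[rng.choice(n, size=s, replace=False)] = rng.choice([-1.0, 1.0], size=s)
y = A @ x_true

x_full = iht(A, y, s)                                    # full-precision inputs
x_low = iht(quantize(A, bits=2), quantize(y, bits=2), s)  # 2-bit inputs

def rel_err(x):
    return np.linalg.norm(x - x_true) / np.linalg.norm(x_true)

print("relative error, full precision:", rel_err(x_full))
print("relative error, 2-bit inputs:  ", rel_err(x_low))
```

Comparing the two printed errors gives a rough sense of how much naive quantization alone costs on a toy problem, which is the gap that the paper's analysis and tailored variant aim to control.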
