Timo Wilm

Industry Insights from Comparing Deep Learning and GBDT Models for E-Commerce Learning-to-Rank

Jul 28, 2025

Abstract:In e-commerce recommender and search systems, tree-based models, such as LambdaMART, have set a strong baseline for Learning-to-Rank (LTR) tasks. Despite their effectiveness and widespread adoption in industry, the debate continues whether deep neural networks (DNNs) can outperform traditional tree-based models in this domain. To contribute to this discussion, we systematically benchmark DNNs against our production-grade LambdaMART model. We evaluate multiple DNN architectures and loss functions on a proprietary dataset from OTTO and validate our findings through an 8-week online A/B test. The results show that a simple DNN architecture outperforms a strong tree-based baseline in terms of total clicks and revenue, while achieving parity in total units sold.

Pareto Front Approximation for Multi-Objective Session-Based Recommender Systems

Jul 23, 2024

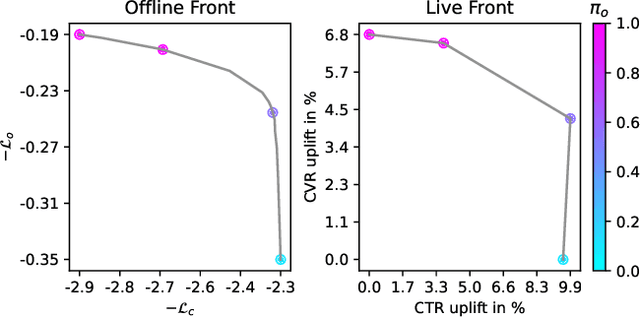

Abstract:This work introduces MultiTRON, an approach that adapts Pareto front approximation techniques to multi-objective session-based recommender systems using a transformer neural network. Our approach optimizes trade-offs between key metrics such as click-through and conversion rates by training on sampled preference vectors. A significant advantage is that after training, a single model can access the entire Pareto front, allowing it to be tailored to meet the specific requirements of different stakeholders by adjusting an additional input vector that weights the objectives. We validate the model's performance through extensive offline and online evaluation. For broader application and research, the source code is made available at https://github.com/otto-de/MultiTRON . The results confirm the model's ability to manage multiple recommendation objectives effectively, offering a flexible tool for diverse business needs.

Scaling Session-Based Transformer Recommendations using Optimized Negative Sampling and Loss Functions

Jul 27, 2023Abstract:This work introduces TRON, a scalable session-based Transformer Recommender using Optimized Negative-sampling. Motivated by the scalability and performance limitations of prevailing models such as SASRec and GRU4Rec+, TRON integrates top-k negative sampling and listwise loss functions to enhance its recommendation accuracy. Evaluations on relevant large-scale e-commerce datasets show that TRON improves upon the recommendation quality of current methods while maintaining training speeds similar to SASRec. A live A/B test yielded an 18.14% increase in click-through rate over SASRec, highlighting the potential of TRON in practical settings. For further research, we provide access to our source code at https://github.com/otto-de/TRON and an anonymized dataset at https://github.com/otto-de/recsys-dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge