Svyatoslav Voloshynovskiy

TRANSIT your events into a new mass: Fast background interpolation for weakly-supervised anomaly searches

Mar 06, 2025Abstract:We introduce a new model for conditional and continuous data morphing called TRansport Adversarial Network for Smooth InTerpolation (TRANSIT). We apply it to create a background data template for weakly-supervised searches at the LHC. The method smoothly transforms sideband events to match signal region mass distributions. We demonstrate the performance of TRANSIT using the LHC Olympics R\&D dataset. The model captures non-linear mass correlations of features and produces a template that offers a competitive anomaly sensitivity compared to state-of-the-art transport-based template generators. Moreover, the computational training time required for TRANSIT is an order of magnitude lower than that of competing deep learning methods. This makes it ideal for analyses that iterate over many signal regions and signal models. Unlike generative models, which must learn a full probability density distribution, i.e., the correlations between all the variables, the proposed transport model only has to learn a smooth conditional shift of the distribution. This allows for a simpler, more efficient residual architecture, enabling mass uncorrelated features to pass the network unchanged while the mass correlated features are adjusted accordingly. Furthermore, we show that the latent space of the model provides a set of mass decorrelated features useful for anomaly detection without background sculpting.

Magnetogram-to-Magnetogram: Generative Forecasting of Solar Evolution

Jul 16, 2024

Abstract:Investigating the solar magnetic field is crucial to understand the physical processes in the solar interior as well as their effects on the interplanetary environment. We introduce a novel method to predict the evolution of the solar line-of-sight (LoS) magnetogram using image-to-image translation with Denoising Diffusion Probabilistic Models (DDPMs). Our approach combines "computer science metrics" for image quality and "physics metrics" for physical accuracy to evaluate model performance. The results indicate that DDPMs are effective in maintaining the structural integrity, the dynamic range of solar magnetic fields, the magnetic flux and other physical features such as the size of the active regions, surpassing traditional persistence models, also in flaring situation. We aim to use deep learning not only for visualisation but as an integrative and interactive tool for telescopes, enhancing our understanding of unexpected physical events like solar flares. Future studies will aim to integrate more diverse solar data to refine the accuracy and applicability of our generative model.

ScatSimCLR: self-supervised contrastive learning with pretext task regularization for small-scale datasets

Aug 31, 2021

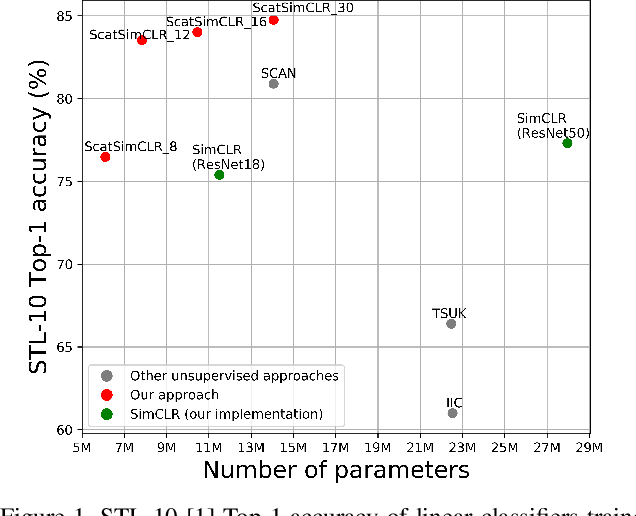

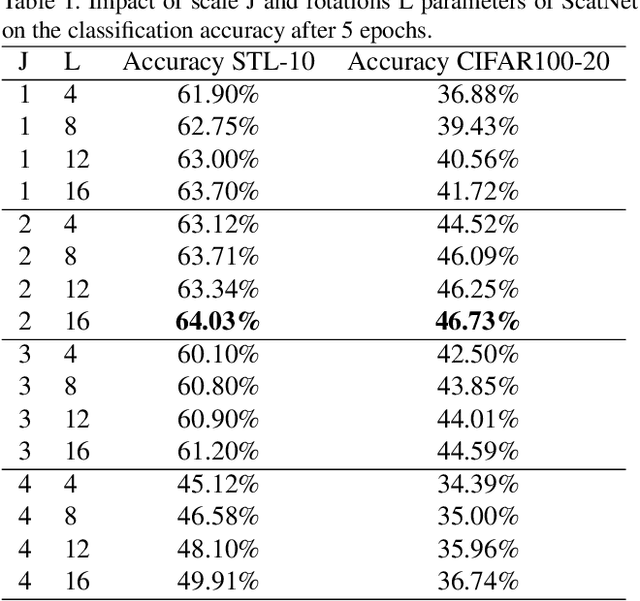

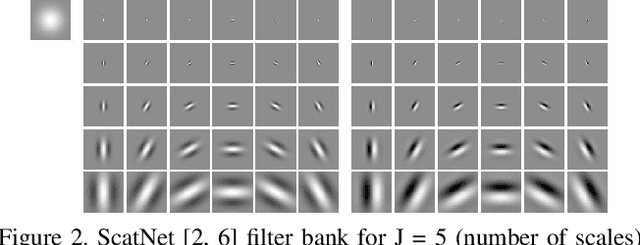

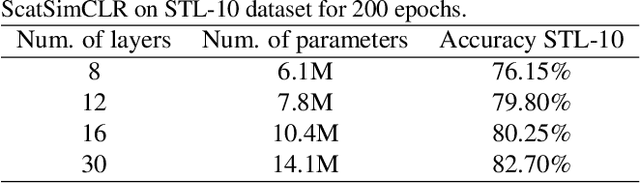

Abstract:In this paper, we consider a problem of self-supervised learning for small-scale datasets based on contrastive loss between multiple views of the data, which demonstrates the state-of-the-art performance in classification task. Despite the reported results, such factors as the complexity of training requiring complex architectures, the needed number of views produced by data augmentation, and their impact on the classification accuracy are understudied problems. To establish the role of these factors, we consider an architecture of contrastive loss system such as SimCLR, where baseline model is replaced by geometrically invariant "hand-crafted" network ScatNet with small trainable adapter network and argue that the number of parameters of the whole system and the number of views can be considerably reduced while practically preserving the same classification accuracy. In addition, we investigate the impact of regularization strategies using pretext task learning based on an estimation of parameters of augmentation transform such as rotation and jigsaw permutation for both traditional baseline models and ScatNet based models. Finally, we demonstrate that the proposed architecture with pretext task learning regularization achieves the state-of-the-art classification performance with a smaller number of trainable parameters and with reduced number of views.

Sparse Multi-layer Image Approximation: Facial Image Compression

Jun 12, 2015

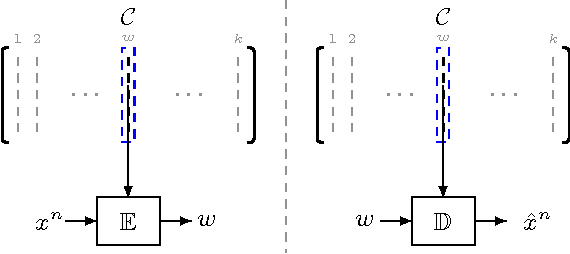

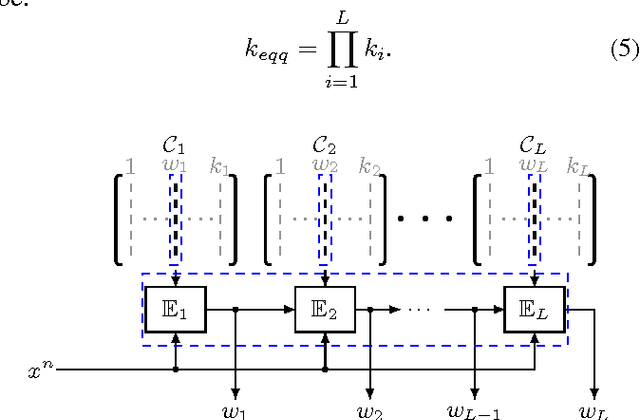

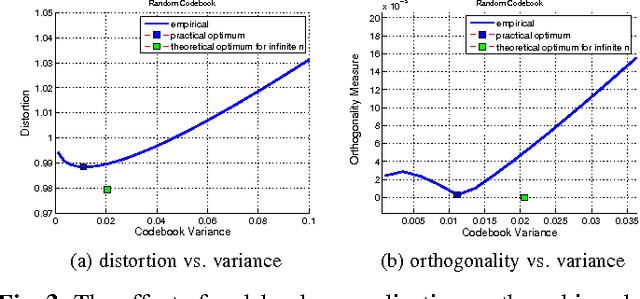

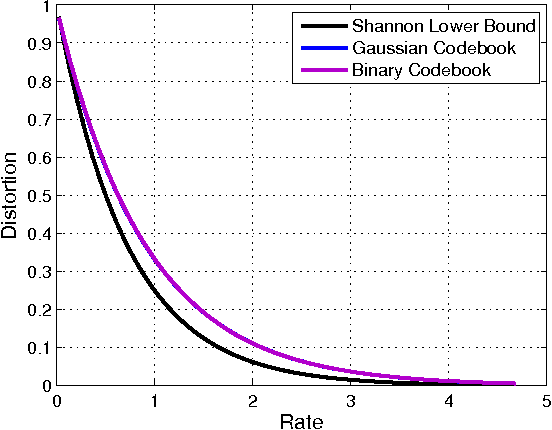

Abstract:We propose a scheme for multi-layer representation of images. The problem is first treated from an information-theoretic viewpoint where we analyze the behavior of different sources of information under a multi-layer data compression framework and compare it with a single-stage (shallow) structure. We then consider the image data as the source of information and link the proposed representation scheme to the problem of multi-layer dictionary learning for visual data. For the current work we focus on the problem of image compression for a special class of images where we report a considerable performance boost in terms of PSNR at high compression ratios in comparison with the JPEG2000 codec.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge