Sudhanya Chatterjee

Physics-Guided Dual-Domain Plug-and-Play ADMM for Low-Dose CT Reconstruction

Jan 02, 2026

Abstract: Ultra-low-dose CT (ULDCT) imaging can greatly reduce patient radiation exposure, but the resulting scans suffer from severe structured and random noise that degrades image quality. To address this challenge, we propose a novel Plug-and-Play model-based iterative reconstruction (PnP-MBIR) framework that integrates a deep convolutional denoiser trained with a two-stage self-supervised Noise-to-Noise (N2N) scheme. The method alternates between enforcing sinogram-domain data fidelity and applying the learned image-domain denoiser within an ADMM optimization loop, suppressing artifacts while preserving anatomical structure. The two-stage protocol first trains the denoiser in a fully self-supervised manner on noisy data and then fine-tunes it on high-dose data, making it robust in the ultra-low-dose regime. Our method enables high-quality reconstructions at approximately 70 to 80% lower dose while maintaining diagnostic fidelity comparable to standard full-dose scans. Quantitative evaluations using Gray-Level Co-occurrence Matrix (GLCM) texture features (contrast, homogeneity, entropy, and correlation) confirm that the proposed method yields better texture consistency and detail preservation than standalone deep learning and supervised PnP baselines. Qualitative and quantitative results on both simulated and clinical datasets demonstrate that our framework effectively reduces streaks and structured artifacts while preserving subtle tissue contrast, making it a promising tool for ULDCT reconstruction.
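The alternation between a data-fidelity step and a denoising step can be illustrated with a generic scaled-form PnP-ADMM loop. This is a minimal NumPy sketch under stated assumptions, not the authors' implementation. The system matrix `A` is a random toy projector rather than a CT forward model, the data term is unweighted least squares, and `smoothing_denoiser` is a hypothetical stand-in for the N2N-trained CNN (a fixed [1/4, 1/2, 1/4] filter). The names `pnp_admm`, `rho`, and `n_iter` are likewise illustrative.

```python
import numpy as np


def smoothing_denoiser(z: np.ndarray) -> np.ndarray:
    """Hypothetical stand-in for the learned CNN denoiser: a fixed [1/4, 1/2, 1/4] filter.

    With zero padding ("same" mode) this is a symmetric linear operator whose
    eigenvalues lie in (0, 1).
    """
    return np.convolve(z, np.array([0.25, 0.5, 0.25]), mode="same")


def pnp_admm(A, y, denoiser, rho=1.0, n_iter=200):
    """Scaled-form Plug-and-Play ADMM for min_x 0.5*||A x - y||^2 + prior(x).

    A : toy (m, n) projection matrix mapping an image to a "sinogram" (assumption)
    y : measured, noisy sinogram of length m
    """
    n = A.shape[1]
    x = np.zeros(n)
    v = np.zeros(n)  # denoised (prior) variable
    u = np.zeros(n)  # scaled dual variable
    # The x-update solves (A^T A + rho I) x = A^T y + rho (v - u); the matrix never changes.
    lhs = A.T @ A + rho * np.eye(n)
    Aty = A.T @ y
    for _ in range(n_iter):
        # Data-fidelity step (sinogram domain):
        # argmin_x 0.5*||A x - y||^2 + (rho/2)*||x - (v - u)||^2
        x = np.linalg.solve(lhs, Aty + rho * (v - u))
        # Prior step (image domain): the denoiser replaces the prior's proximal operator
        v = denoiser(x + u)
        # Dual update accumulates the disagreement between x and v
        u = u + x - v
    return v


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    A = rng.standard_normal((128, 32)) / np.sqrt(128)
    x_true = np.zeros(32)
    x_true[10:22] = 1.0  # piecewise-constant toy "image"
    y = A @ x_true + 0.01 * rng.standard_normal(128)
    x_hat = pnp_admm(A, y, smoothing_denoiser)
    print("reconstruction error:", np.linalg.norm(x_hat - x_true))
```

Because this particular filter is symmetric with eigenvalues in (0, 1), it is the proximal operator of a convex quadratic prior, so the loop reduces to ordinary convex ADMM and converges. A learned CNN denoiser carries no such guarantee in general.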

Automated Motion Artifact Check for MRI (AutoMAC-MRI): An Interpretable Framework for Motion Artifact Detection and Severity Assessment

Dec 17, 2025

Abstract: Motion artifacts degrade MRI image quality and increase patient recalls. Existing automated quality assessment methods are largely limited to binary decisions and provide little interpretability. We introduce AutoMAC-MRI, an explainable framework for grading motion artifacts across heterogeneous MR contrasts and orientations. The approach uses supervised contrastive learning to learn a discriminative representation of motion severity. Within this feature space, we compute grade-specific affinity scores that quantify an image's proximity to each motion grade, making grade assignments transparent and interpretable. We evaluate AutoMAC-MRI on more than 5,000 expert-annotated brain MRI slices spanning multiple contrasts and views. Comparing the affinity scores with expert labels shows that they align well with expert judgment, supporting their use as an interpretable measure of motion severity. By coupling accurate grade detection with per-grade affinity scoring, AutoMAC-MRI enables inline MRI quality control, with the potential to reduce unnecessary rescans and improve workflow efficiency.
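The abstract does not state how the grade-specific affinity scores are computed. One plausible reading, sketched here as an assumption rather than the AutoMAC-MRI method, is to compute the cosine similarity between an image embedding and a per-grade prototype (the normalized mean embedding of that grade), then convert the similarities into a distribution with a temperature-scaled softmax. The embeddings are toy 3-D vectors standing in for the output of the contrastively trained encoder. The names `grade_prototypes`, `affinity_scores`, and `temperature` are hypothetical.

```python
import numpy as np


def l2_normalize(z, eps=1e-12):
    """Scale each row to unit length so dot products become cosine similarities."""
    return z / (np.linalg.norm(z, axis=-1, keepdims=True) + eps)


def grade_prototypes(embeddings, grades, n_grades):
    """Hypothetical prototype per grade: normalized mean of that grade's normalized embeddings."""
    z = l2_normalize(embeddings)
    protos = np.stack([z[grades == g].mean(axis=0) for g in range(n_grades)])
    return l2_normalize(protos)


def affinity_scores(queries, prototypes, temperature=0.1):
    """Softmax over cosine similarities; row i holds query i's affinity to each grade."""
    logits = (l2_normalize(queries) @ prototypes.T) / temperature
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(logits)
    return weights / weights.sum(axis=1, keepdims=True)


if __name__ == "__main__":
    # Toy labelled embeddings for grades 0, 1, 2 (real ones would come from the encoder)
    emb = np.array([[1.0, 0.0, 0.0], [0.9, 0.1, 0.0],
                    [0.0, 1.0, 0.0], [0.1, 0.9, 0.0],
                    [0.0, 0.0, 1.0], [0.0, 0.1, 0.9]])
    grades = np.array([0, 0, 1, 1, 2, 2])
    protos = grade_prototypes(emb, grades, n_grades=3)
    queries = np.array([[0.8, 0.2, 0.0], [0.0, 0.1, 1.0]])
    scores = affinity_scores(queries, protos)
    print(np.round(scores, 3))
    print("predicted grades:", scores.argmax(axis=1))
```

Under this reading, the affinity vector is itself the explanation: it shows how strongly an image resembles every grade, not just the winning one.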

Test Time Optimized Generalized AI-based Medical Image Registration Method

Dec 16, 2025

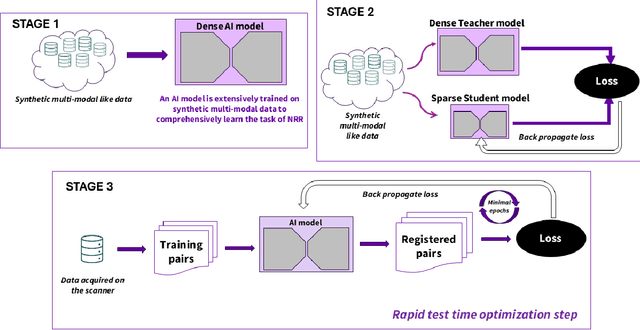

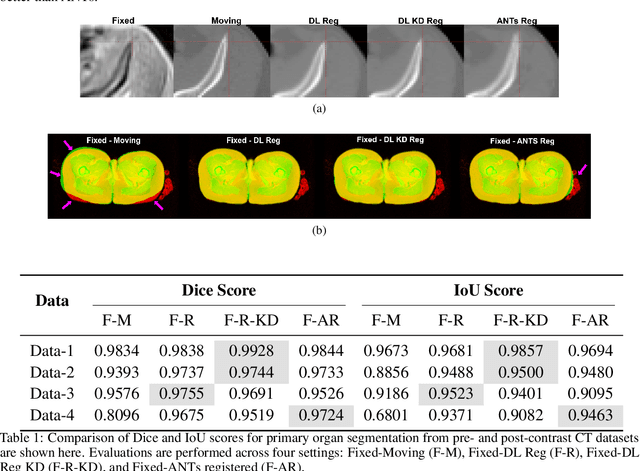

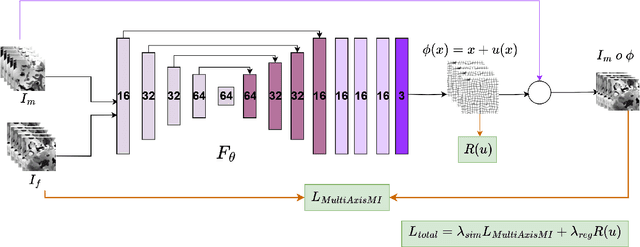

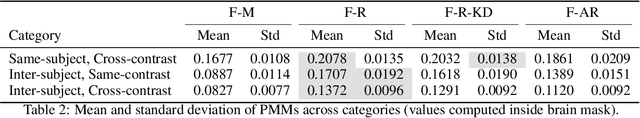

Abstract: Medical image registration is critical for aligning anatomical structures across imaging modalities such as computed tomography (CT), magnetic resonance imaging (MRI), and ultrasound. Among existing techniques, non-rigid registration (NRR) is particularly challenging because it must capture complex anatomical deformations caused by physiological processes such as respiration, as well as contrast-induced signal variations. Traditional NRR methods, while theoretically robust, often require extensive parameter tuning and incur high computational costs, limiting their use in real-time clinical workflows. Recent deep learning (DL)-based approaches have shown promise; however, their dependence on task-specific retraining restricts scalability and adaptability in practice. These limitations underscore the need for efficient, generalizable registration frameworks capable of handling heterogeneous imaging contexts. In this work, we introduce a novel AI-driven framework for 3D non-rigid registration that generalizes across multiple imaging modalities and anatomical regions. Unlike conventional methods that rely on application-specific models, our approach requires no anatomy- or modality-specific customization, enabling streamlined integration into diverse clinical environments.
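In general, test-time optimization means refining a deformation for each individual image pair at inference time. A minimal 1D illustration of that idea follows. It is a hedged toy, not the authors' 3D framework. It assumes a dense displacement field `u` that is refined by plain gradient descent on a sum-of-squared-differences term plus a first-difference smoothness penalty weighted by `lam`. The names `register_tto` and `objective`, and the values of `lam`, `lr`, and `n_iter`, are hypothetical choices for this sketch.

```python
import numpy as np


def warp(moving, x, u):
    """Backward warping: sample the moving signal at x + u(x) with linear interpolation."""
    return np.interp(x + u, x, moving)


def objective(fixed, moving, x, u, lam):
    """Sum of squared differences plus a first-difference smoothness penalty on u."""
    residual = warp(moving, x, u) - fixed
    return np.sum(residual ** 2) + lam * np.sum(np.diff(u) ** 2)


def register_tto(fixed, moving, x, lam=1.0, lr=1e-3, n_iter=2000):
    """Optimize a dense displacement field u for this single pair by gradient descent."""
    u = np.zeros_like(x)
    # Sampled derivative of the moving signal, used as an approximation of moving'(.)
    d_moving = np.gradient(moving, x)
    for _ in range(n_iter):
        residual = warp(moving, x, u) - fixed
        # Data term: d/du_i of residual_i^2 = 2 * residual_i * moving'(x_i + u_i)
        grad = 2.0 * residual * np.interp(x + u, x, d_moving)
        # Smoothness term: d/du of lam * sum((u[i+1] - u[i])^2)
        du = np.diff(u)
        grad[:-1] -= 2.0 * lam * du
        grad[1:] += 2.0 * lam * du
        u -= lr * grad
    return u


if __name__ == "__main__":
    x = np.linspace(0.0, 1.0, 201)
    sigma = 0.08
    fixed = np.exp(-((x - 0.5) ** 2) / (2 * sigma ** 2))
    moving = np.exp(-((x - 0.56) ** 2) / (2 * sigma ** 2))  # same bump, shifted by 0.06
    u = register_tto(fixed, moving, x)
    print("objective before:", objective(fixed, moving, x, np.zeros_like(x), 1.0))
    print("objective after: ", objective(fixed, moving, x, u, 1.0))
    print("displacement at x = 0.5:", u[100])
```

In the toy usage, a Gaussian bump shifted by 0.06 is pulled back onto the fixed bump, so `u` becomes positive near the center. A volumetric version would replace the 1D interpolation and finite differences with their 3D counterparts.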
