Stefan Woltran

TU Wien, Austria

Clique-Width and Directed Width Measures for Answer-Set Programming

Dec 30, 2016

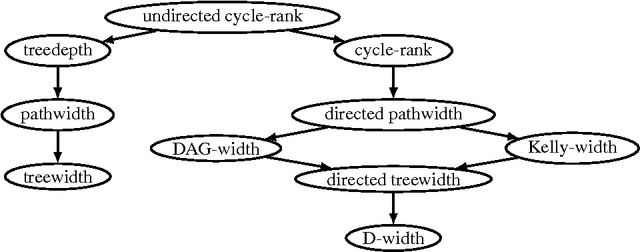

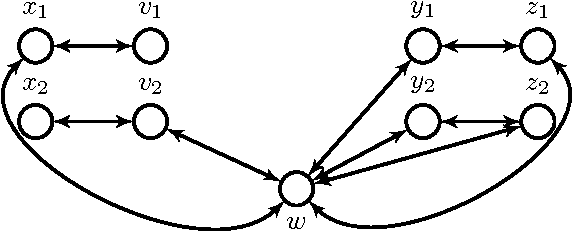

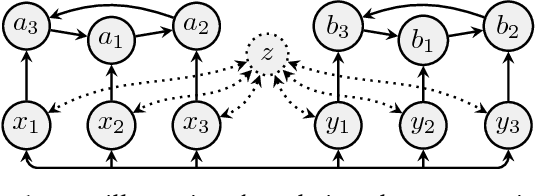

Abstract: Disjunctive Answer Set Programming (ASP) is a powerful declarative programming paradigm whose main decision problems are located on the second level of the polynomial hierarchy. Identifying tractable fragments and developing efficient algorithms for such fragments are thus important objectives in order to complement the sophisticated ASP systems available to date. Hard problems can become tractable if some problem parameter is bounded by a fixed constant; such problems are then called fixed-parameter tractable (FPT). While several FPT results for ASP exist, parameters that relate to directed or signed graphs representing the program at hand have been neglected so far. In this paper, we first give some negative observations showing that directed width measures on the dependency graph of a program do not lead to FPT results. We then consider the graph parameter of signed clique-width and present a novel dynamic programming algorithm that is FPT w.r.t. this parameter. Clique-width is more general than the well-known treewidth, and, to the best of our knowledge, ours is the first FPT algorithm for bounded clique-width for reasoning problems beyond SAT.

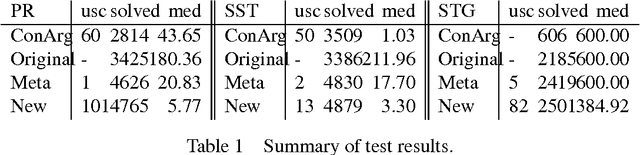

Counting Answer Sets via Dynamic Programming

Dec 22, 2016

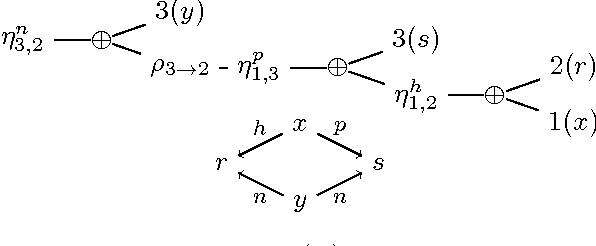

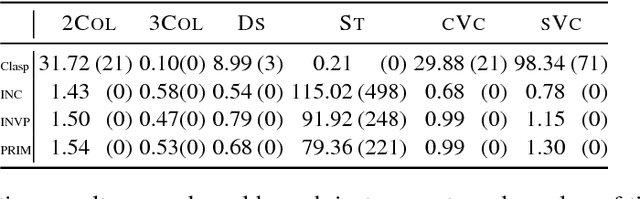

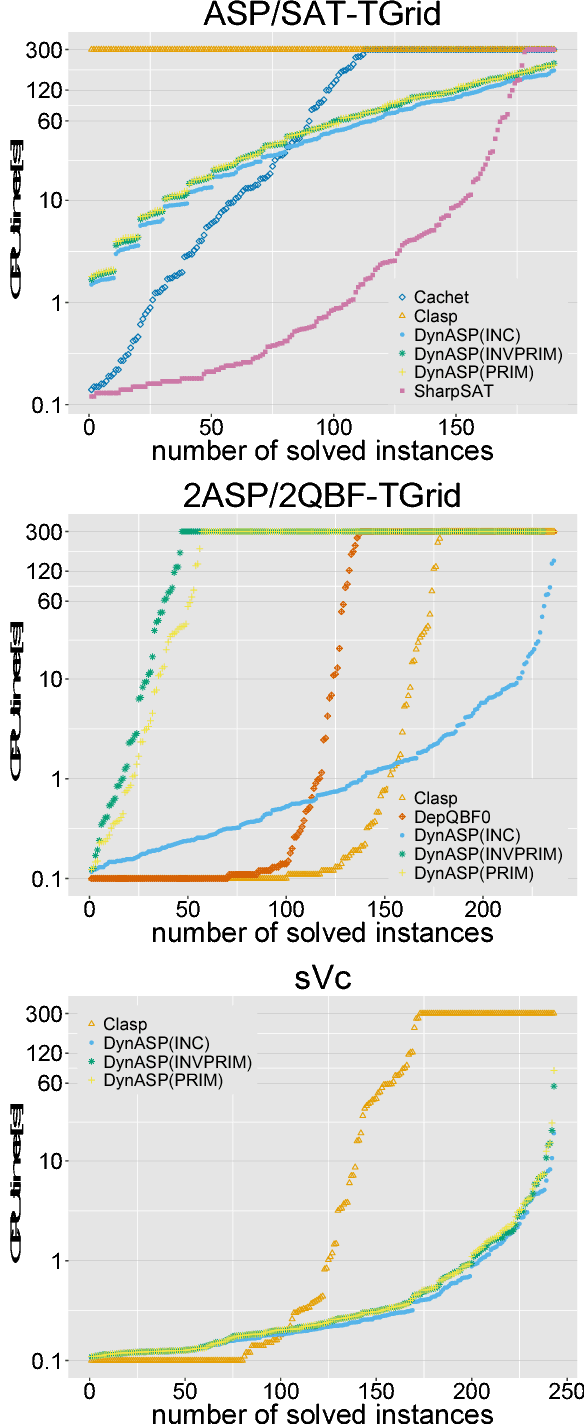

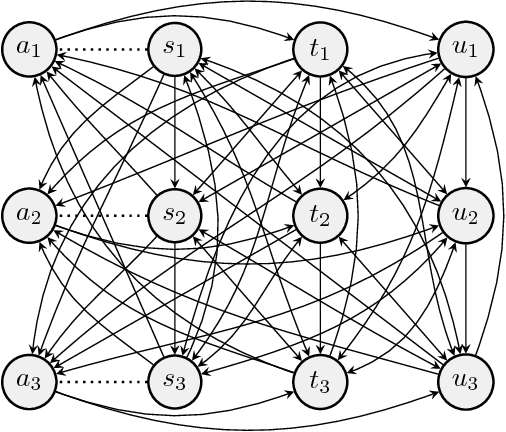

Abstract: While the solution counting problem for propositional satisfiability (#SAT) has received renewed attention in recent years, this research trend has not affected other AI solving paradigms like answer set programming (ASP). Although ASP solvers are designed to enumerate all solutions, and counting can therefore be easily done, the involved materialization of all solutions is a clear bottleneck for the counting problem of ASP (#ASP). In this paper we propose dynamic programming-based #ASP algorithms that exploit the structure of the underlying (ground) ASP program. Experimental results for a prototype implementation show promise when compared to existing solvers.
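To make the #ASP problem concrete, the following is a minimal sketch of the enumerate-and-count baseline that the abstract describes as a bottleneck. It is not the dynamic programming algorithm of the paper. It assumes a ground normal program (no disjunction), and the rule representation `(head, positive_body, negative_body)` and all function names are illustrative choices made here.

```python
from itertools import combinations


def least_model(definite_rules):
    """Least model of a negation-free program, computed by fixpoint iteration."""
    model = set()
    changed = True
    while changed:
        changed = False
        for head, pos in definite_rules:
            if head not in model and pos <= model:
                model.add(head)
                changed = True
    return model


def is_answer_set(rules, candidate):
    """Gelfond-Lifschitz check: candidate must equal the least model of its reduct."""
    # Drop every rule whose negative body intersects the candidate,
    # and strip the negative body from the remaining rules.
    reduct = [(h, pos) for h, pos, neg in rules if not (neg & candidate)]
    return least_model(reduct) == candidate


def count_answer_sets(rules):
    """Count answer sets by testing every subset of atoms (exponential)."""
    atoms = sorted({h for h, _, _ in rules} | {a for _, p, n in rules for a in p | n})
    return sum(
        is_answer_set(rules, set(subset))
        for k in range(len(atoms) + 1)
        for subset in combinations(atoms, k)
    )


# Hypothetical example program:
#   a :- not b.    b :- not a.    c :- a.
program = [
    ("a", frozenset(), frozenset({"b"})),
    ("b", frozenset(), frozenset({"a"})),
    ("c", frozenset({"a"}), frozenset()),
]
print(count_answer_sets(program))  # 2, namely {a, c} and {b}
```

Every candidate set of atoms is materialized and checked here, which is exactly the cost a structure-exploiting counter aims to avoid.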

lpopt: A Rule Optimization Tool for Answer Set Programming

Aug 23, 2016

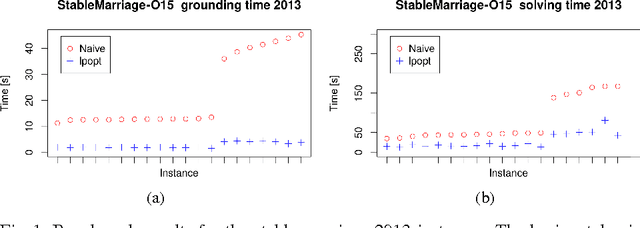

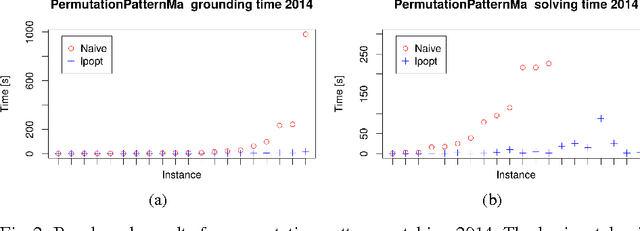

Abstract: State-of-the-art answer set programming (ASP) solvers rely on a program called a grounder to convert non-ground programs containing variables into variable-free, propositional programs. The size of this grounding depends heavily on the size of the non-ground rules, and thus, reducing the size of such rules is a promising approach to improve solving performance. To this end, in this paper we announce lpopt, a tool that decomposes large logic programming rules into smaller rules that are easier to handle for current solvers. The tool is specifically tailored to handle the standard syntax of the ASP language (ASP-Core) and makes it easier for users to write efficient and intuitive ASP programs, which would otherwise often require significant hand-tuning by expert ASP engineers. It is based on an idea proposed by Morak and Woltran (2012) that we extend significantly in order to handle the full ASP syntax, including complex constructs like aggregates, weak constraints, and arithmetic expressions. We present the algorithm, the theoretical foundations on how to treat these constructs, as well as an experimental evaluation showing the viability of our approach.

The Power of Non-Ground Rules in Answer Set Programming

Aug 05, 2016

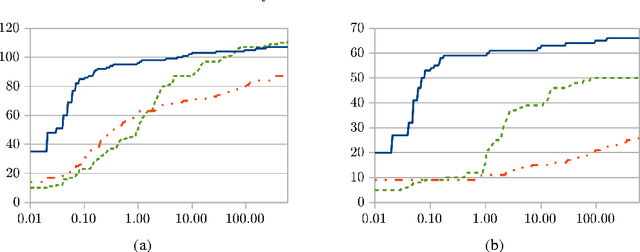

Abstract: Answer set programming (ASP) is a well-established logic programming language that offers an intuitive, declarative syntax for problem solving. In its traditional application, a fixed ASP program for a given problem is designed and the actual instance of the problem is fed into the program as a set of facts. This approach typically results in programs with comparably short and simple rules. However, as is known from complexity analysis, such an approach limits the expressive power of ASP; in fact, an entire NP-check can be encoded into a single large rule body of bounded arity that performs both a guess and a check within the same rule. Here, we propose a novel paradigm for encoding hard problems in ASP by making explicit use of large rules which depend on the actual instance of the problem. We illustrate how this new encoding paradigm can be used, providing examples of problems from the first, second, and even third level of the polynomial hierarchy. As state-of-the-art solvers are tuned towards short rules, rule decomposition is a key technique in the practical realization of our approach. We also provide some preliminary benchmarks which indicate that giving up the convenient way of specifying a fixed program can lead to a significant speed-up. This paper is under consideration for acceptance into TPLP.

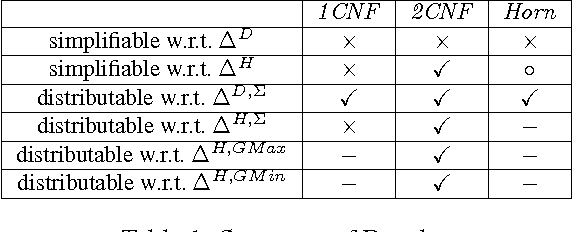

Distributing Knowledge into Simple Bases

Mar 31, 2016

Abstract: Understanding the behavior of belief change operators for fragments of classical logic has received increasing interest over the last years. Results in this direction are mainly concerned with adapting representation theorems. However, fragment-driven belief change also leads to novel research questions. In this paper we propose the concept of belief distribution, which can be understood as the reverse task of merging. More specifically, we are interested in the following question: given an arbitrary knowledge base $K$ and some merging operator $\Delta$, can we find a profile $E$ and a constraint $\mu$, both from a given fragment of classical logic, such that $\Delta_\mu(E)$ yields a result equivalent to $K$? In other words, we are interested in seeing if $K$ can be distributed into knowledge bases of simpler structure, such that the task of merging allows for a reconstruction of the original knowledge. Our initial results show that merging based on drastic distance allows for an easy distribution of knowledge, while the power of distribution for operators based on Hamming distance relies heavily on the fragment of choice.

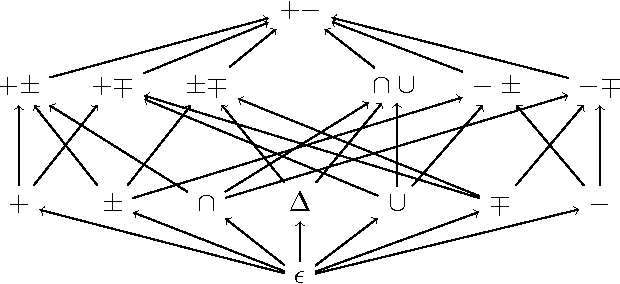

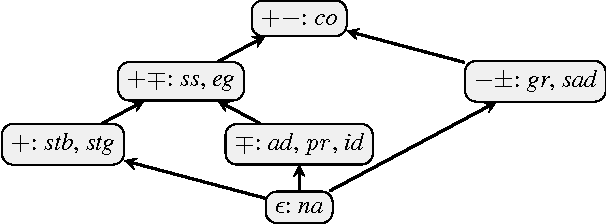

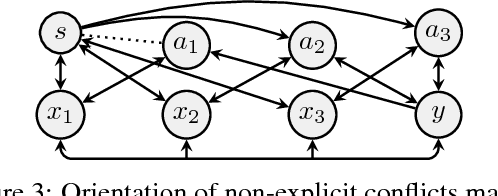

Verifiability of Argumentation Semantics

Mar 31, 2016

Abstract: Dung's abstract argumentation theory is a widely used formalism to model conflicting information and to draw conclusions in such situations. Hereby, the knowledge is represented by so-called argumentation frameworks (AFs) and the reasoning is done via semantics extracting acceptable sets. All reasonable semantics are based on the notion of conflict-freeness, which means that arguments are only jointly acceptable when they are not linked within the AF. In this paper, we study the question of which information on top of conflict-free sets is needed to compute extensions of a semantics at hand. We introduce a hierarchy of so-called verification classes specifying the required amount of information. We show that well-known standard semantics are exactly verifiable through a certain such class. Our framework also gives a means to study semantics lying in between known semantics, thus contributing to a more abstract understanding of the different features argumentation semantics offer.
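As an illustration of the notions in this abstract, the sketch below enumerates the conflict-free sets of a small AF and then filters them down to stable extensions. In this sketch, the only information used on top of conflict-freeness is which arguments each candidate set attacks. The function names and the example framework are hypothetical and are not taken from the paper.

```python
from itertools import combinations


def conflict_free_sets(arguments, attacks):
    """All sets of arguments with no attack between any two members."""
    args = sorted(arguments)
    result = []
    for k in range(len(args) + 1):
        for subset in combinations(args, k):
            s = frozenset(subset)
            if not any((a, b) in attacks for a in s for b in s):
                result.append(s)
    return result


def stable_extensions(arguments, attacks):
    """Conflict-free sets that attack every argument outside the set."""
    return [
        s
        for s in conflict_free_sets(arguments, attacks)
        if all(any((a, b) in attacks for a in s) for b in arguments - s)
    ]


# Hypothetical AF: a and b attack each other, and b attacks c.
arguments = {"a", "b", "c"}
attacks = {("a", "b"), ("b", "a"), ("b", "c")}

print(stable_extensions(arguments, attacks))
```

For this framework the conflict-free sets are the empty set, `{a}`, `{b}`, `{c}`, and `{a, c}`, of which `{b}` and `{a, c}` are stable.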

Improved Answer-Set Programming Encodings for Abstract Argumentation

Oct 20, 2015

Abstract: The design of efficient solutions for abstract argumentation problems is a crucial step towards advanced argumentation systems. One of the most prominent approaches in the literature is to use Answer-Set Programming (ASP) for this endeavor. In this paper, we present new encodings for three prominent argumentation semantics using the concept of conditional literals in disjunctions as provided by the ASP-system clingo. Our new encodings are not only more succinct than previous versions, but also outperform them on standard benchmarks.

* To appear in Theory and Practice of Logic Programming (TPLP), Proceedings of ICLP 2015

Dual-normal Logic Programs - the Forgotten Class

Jul 20, 2015

Abstract: Disjunctive Answer Set Programming is a powerful declarative programming paradigm with complexity beyond NP. Identifying classes of programs for which the consistency problem is in NP is of interest from the theoretical standpoint and can potentially lead to improvements in the design of answer set programming solvers. One such class consists of dual-normal programs, where the number of positive body atoms in proper rules is at most one. Unlike other classes of programs, dual-normal programs have received little attention so far. In this paper we study this class. We relate dual-normal programs to propositional theories and to normal programs by presenting several inter-translations. With the translation from dual-normal to normal programs at hand, we introduce the novel class of body-cycle free programs, which are in many respects dual to head-cycle free programs. We establish the expressive power of dual-normal programs in terms of SE- and UE-models, and compare them to normal programs. We also discuss the complexity of deciding whether dual-normal programs are strongly and uniformly equivalent.
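The syntactic condition stated in this abstract can be sketched as a simple check over ground rules. The sketch assumes that "proper rules" means rules with a non-empty head (that is, constraints are exempt); the abstract does not spell this out, so treat it as an assumption. The triple representation `(head, positive_body, negative_body)` and the function names are illustrative only.

```python
def is_dual_normal(rules):
    """At most one positive body atom in every rule with a non-empty head.

    Assumption: a "proper" rule is one whose head is non-empty.
    """
    return all(len(pos) <= 1 for head, pos, neg in rules if head)


def is_normal(rules):
    """At most one head atom in every rule (the familiar normal class)."""
    return all(len(head) <= 1 for head, pos, neg in rules)


# a | b :- c, not d.   One positive body atom, two head atoms.
disjunctive_rule = ({"a", "b"}, {"c"}, {"d"})
# a :- b, c.           Two positive body atoms, one head atom.
horn_rule = ({"a"}, {"b", "c"}, set())
# :- a, b.             A constraint: empty head, so not a proper rule here.
constraint = (set(), {"a", "b"}, set())

print(is_dual_normal([disjunctive_rule, constraint]))  # True
print(is_normal([disjunctive_rule]))                   # False
print(is_dual_normal([horn_rule]))                     # False
print(is_normal([horn_rule]))                          # True
```

The two checks mirror each other: normal programs restrict the head to one atom, while dual-normal programs restrict the positive body.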

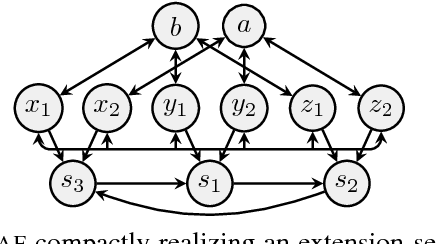

Compact Argumentation Frameworks

Apr 30, 2014

Abstract: Abstract argumentation frameworks (AFs) are one of the most studied formalisms in AI. In this work, we introduce a certain subclass of AFs which we call compact. Given an extension-based semantics, the corresponding compact AFs are characterized by the feature that each argument of the AF occurs in at least one extension. This not only guarantees a certain notion of fairness; compact AFs are thus also minimal in the sense that no argument can be removed without changing the outcome. We address the following questions in the paper: (1) How are the classes of compact AFs related for different semantics? (2) Under which circumstances can AFs be transformed into equivalent compact ones? (3) Finally, we show that compact AFs are indeed a non-trivial subclass, since the verification problem remains coNP-hard for certain semantics.
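The defining property of compact AFs can be sketched as a coverage check: every argument must occur in at least one extension. The example below uses naive semantics (maximal conflict-free sets) purely to keep the code short; that choice, the function names, and the example frameworks are assumptions made for illustration.

```python
from itertools import combinations


def naive_extensions(arguments, attacks):
    """Maximal (w.r.t. set inclusion) conflict-free sets of the AF."""
    args = sorted(arguments)
    conflict_free = [
        frozenset(s)
        for k in range(len(args) + 1)
        for s in combinations(args, k)
        if not any((a, b) in attacks for a in s for b in s)
    ]
    # Keep only sets that are not a proper subset of another conflict-free set.
    return [s for s in conflict_free if not any(s < t for t in conflict_free)]


def is_compact(arguments, extensions):
    """True if every argument occurs in at least one of the given extensions."""
    return set().union(*extensions) == set(arguments)


# Hypothetical AF: a attacks b, b attacks c.
arguments = {"a", "b", "c"}
attacks = {("a", "b"), ("b", "c")}
print(is_compact(arguments, naive_extensions(arguments, attacks)))  # True

# Adding a self-attack on c removes c from every conflict-free set.
attacks_with_loop = attacks | {("c", "c")}
print(is_compact(arguments, naive_extensions(arguments, attacks_with_loop)))  # False
```

The `is_compact` check itself is independent of the semantics; only the function that produces the extensions would change.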

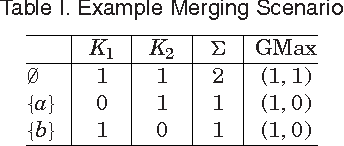

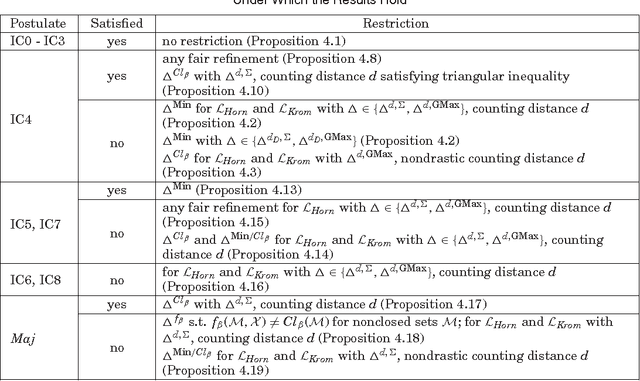

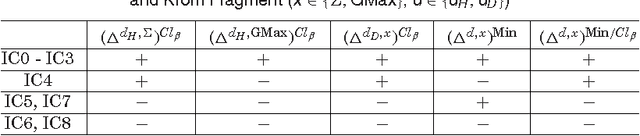

Belief merging within fragments of propositional logic

Apr 25, 2014

Abstract: Recently, belief change within the framework of fragments of propositional logic has gained increasing attention. Previous works focused on belief contraction and belief revision on the Horn fragment. However, the problem of belief merging within fragments of propositional logic has been neglected so far. This paper presents a general approach to define new merging operators derived from existing ones such that the result of merging remains in the fragment under consideration. Our approach is not limited to the case of the Horn fragment but is applicable to any fragment of propositional logic characterized by a closure property on the sets of models of its formulae. We study the logical properties of the proposed operators in terms of satisfaction of merging postulates, considering in particular distance-based merging operators for Horn and Krom fragments.
