Stefan Klus

Data-driven model reduction of agent-based systems using the Koopman generator

Dec 14, 2020

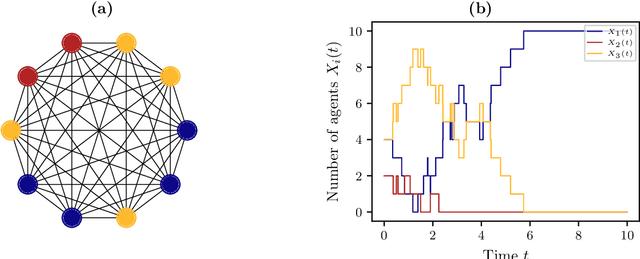

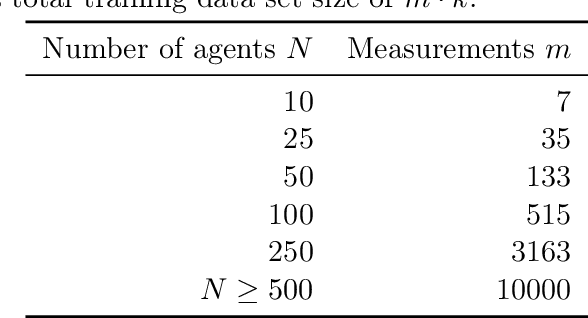

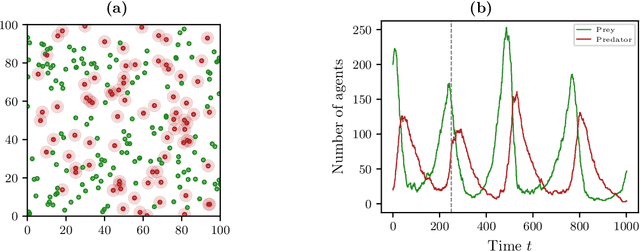

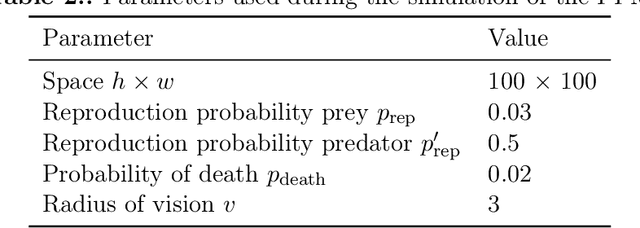

Abstract: The dynamical behavior of social systems can be described by agent-based models. Although single agents follow easily explainable rules, complex time-evolving patterns emerge due to their interaction. The simulation and analysis of such agent-based models, however, is often prohibitively time-consuming if the number of agents is large. In this paper, we show how Koopman operator theory can be used to derive reduced models of agent-based systems using only simulation or real-world data. Our goal is to learn coarse-grained models and to represent the reduced dynamics by ordinary or stochastic differential equations. The new variables are, for instance, aggregated state variables of the agent-based model, modeling the collective behavior of larger groups or the entire population. Using benchmark problems with known coarse-grained models, we demonstrate that the obtained reduced systems are in good agreement with the analytical results, provided that the number of agents is sufficiently large.
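
To make "represent the reduced dynamics by a stochastic differential equation" concrete, here is a much simpler estimator than the Koopman-generator approach of the paper, offered only as an illustrative stand-in. It assumes that a scalar aggregated variable `c` (for instance, the fraction of agents in one state) has been recorded at a fixed time step `dt`, and fits a polynomial drift and squared diffusion by finite-difference (Kramers-Moyal) regression. The helper name `fit_reduced_sde`, the polynomial ansatz, and the synthetic Ornstein-Uhlenbeck data standing in for agent-based output are all assumptions.

```python
import numpy as np

def fit_reduced_sde(c, dt, degree=1):
    """Fit dc = b(c) dt + sigma(c) dW from a uniformly sampled scalar series c.

    Hypothetical helper: b(c) and sigma(c)^2 are both modeled as polynomials of
    the given degree and estimated by least squares on finite differences
    (first and second Kramers-Moyal coefficients).
    """
    dc = np.diff(c)
    V = np.vander(c[:-1], degree + 1, increasing=True)  # columns 1, c, ..., c^degree
    drift, *_ = np.linalg.lstsq(V, dc / dt, rcond=None)
    diff2, *_ = np.linalg.lstsq(V, dc**2 / dt, rcond=None)
    return drift, diff2

# Synthetic stand-in for aggregated agent-based data: an Ornstein-Uhlenbeck
# process dc = -alpha c dt + sigma dW, simulated with Euler-Maruyama.
rng = np.random.default_rng(1)
dt, n, alpha, sigma = 0.01, 200_000, 1.0, np.sqrt(0.5)
noise = rng.normal(0.0, np.sqrt(dt), n)
c = np.empty(n + 1)
c[0] = 0.0
for k in range(n):
    c[k + 1] = c[k] - alpha * c[k] * dt + sigma * noise[k]

drift, diff2 = fit_reduced_sde(c, dt)
print("drift coefficients:", drift)               # should be close to [0, -alpha]
print("squared diffusion coefficients:", diff2)   # should be close to [sigma^2, 0]
```

The regression assumes the sampling interval is short compared with the time scale of the aggregated dynamics; for coarser data, a generator-based or maximum-likelihood estimator is the safer choice.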

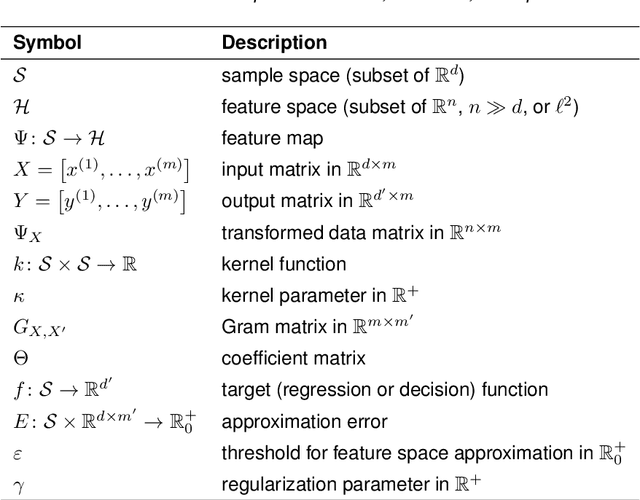

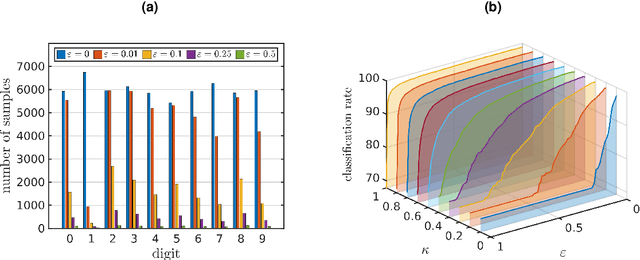

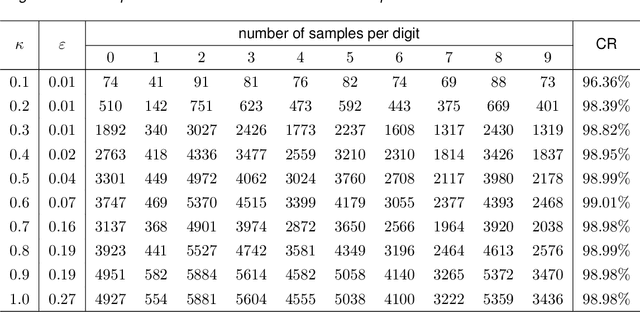

Feature space approximation for kernel-based supervised learning

Nov 25, 2020

Abstract: We propose a method for the approximation of high- or even infinite-dimensional feature vectors, which play an important role in supervised learning. The goal is to reduce the size of the training data, resulting in lower storage consumption and computational complexity. Furthermore, the method can be regarded as a regularization technique, which improves the generalizability of learned target functions. We demonstrate significant improvements in comparison to the computation of data-driven predictions involving the full training data set. The method is applied to classification and regression problems from different application areas such as image recognition, system identification, and oceanographic time series analysis.
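
One standard way to shrink a kernel training set, used here only as a stand-in and not necessarily the selection rule of the paper, is greedy pivoted Cholesky: repeatedly add the sample whose feature vector φ(x) is worst approximated by the span of the feature vectors already chosen, until every residual falls below a tolerance. The Gaussian kernel, the bandwidth `sigma`, the tolerance `tol`, and the helper names are assumptions.

```python
import numpy as np

def gaussian_kernel(X, Y, sigma):
    """Gaussian kernel matrix k(x, y) = exp(-|x - y|^2 / (2 sigma^2)) for rows of X and Y."""
    d2 = (X**2).sum(1)[:, None] + (Y**2).sum(1)[None, :] - 2.0 * X @ Y.T
    return np.exp(-np.maximum(d2, 0.0) / (2.0 * sigma**2))

def greedy_feature_subset(X, sigma=1.0, tol=1e-3):
    """Pivoted Cholesky selection of representative samples (hypothetical helper).

    residual[i] is the squared RKHS distance from phi(x_i) to the span of the
    feature vectors of the selected samples; k(x, x) = 1 for the Gaussian kernel.
    """
    n = X.shape[0]
    residual = np.ones(n)
    L = np.zeros((n, 0))          # partial Cholesky factor, K approx L @ L.T
    selected = []
    while residual.max() > tol and len(selected) < n:
        j = int(np.argmax(residual))
        selected.append(j)
        k_j = gaussian_kernel(X, X[j:j + 1], sigma)[:, 0]
        col = (k_j - L @ L[j]) / np.sqrt(residual[j])
        L = np.column_stack([L, col])
        residual = np.maximum(residual - col**2, 0.0)
    return selected, residual

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 2))
selected, residual = greedy_feature_subset(X, sigma=1.0, tol=1e-3)
print(f"kept {len(selected)} of {X.shape[0]} samples, max residual {residual.max():.1e}")
```

Predictions can then be expressed through the kernel evaluations at the selected samples only, which is where the savings in storage and computation come from.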

GraphKKE: Graph Kernel Koopman Embedding for Human Microbiome Analysis

Sep 07, 2020

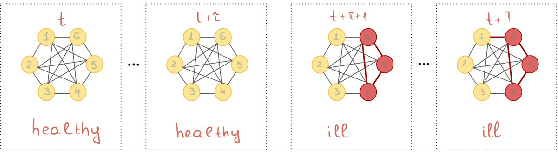

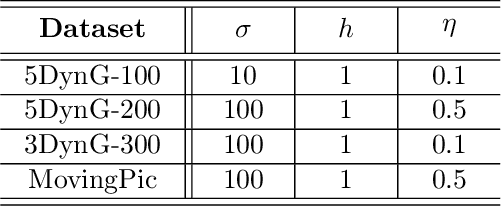

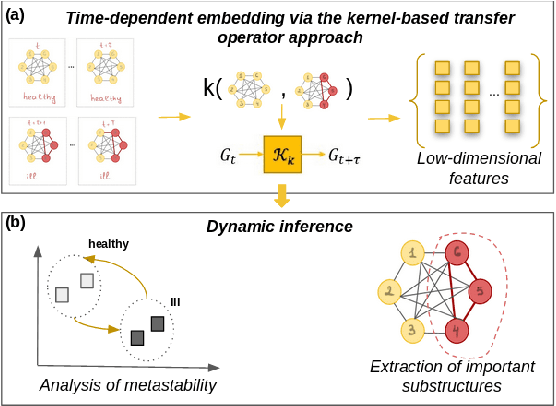

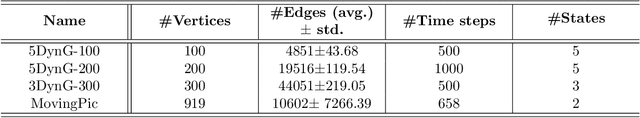

Abstract: More and more diseases have been found to be strongly correlated with disturbances in the microbiome constitution, e.g., obesity, diabetes, or some cancer types. Thanks to modern high-throughput omics technologies, it has become possible to directly analyze the human microbiome and its influence on health status. Microbial communities are monitored over long periods of time and the associations between their members are explored. These relationships can be described by a time-evolving graph. In order to understand the responses of microbial community members to a distinct range of perturbations, such as antibiotic exposure or disease, as well as their general dynamical properties, the time-evolving graph of the human microbial communities has to be analyzed. This is especially challenging due to dozens of complex interactions among microbes and metastable dynamics. The key to solving this problem is the representation of the time-evolving graphs as fixed-length feature vectors that preserve the original dynamics. We propose a method for learning the embedding of the time-evolving graph that is based on the spectral analysis of transfer operators and graph kernels. We demonstrate that our method can capture temporary changes in the time-evolving graph on both synthetic and real-world data. Our experiments demonstrate the efficacy of the method. Furthermore, we show that our method can be applied to human microbiome data to study dynamic processes.

Kernel-based approximation of the Koopman generator and Schrödinger operator

Jun 25, 2020

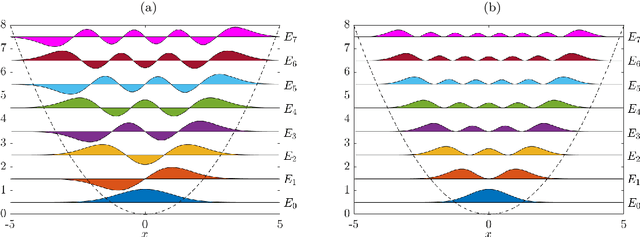

Abstract: Many dimensionality and model reduction techniques rely on estimating dominant eigenfunctions of associated dynamical operators from data. Important examples include the Koopman operator and its generator, but also the Schrödinger operator. We propose a kernel-based method for the approximation of differential operators in reproducing kernel Hilbert spaces and show how eigenfunctions can be estimated by solving auxiliary matrix eigenvalue problems. The resulting algorithms are applied to molecular dynamics and quantum chemistry examples. Furthermore, we exploit that, under certain conditions, the Schrödinger operator can be transformed into a Kolmogorov backward operator corresponding to a drift-diffusion process and vice versa. This allows us to apply methods developed for the analysis of high-dimensional stochastic differential equations to quantum mechanical systems.

Kernel autocovariance operators of stationary processes: Estimation and convergence

Apr 02, 2020

Abstract: We consider autocovariance operators of a stationary stochastic process on a Polish space that is embedded into a reproducing kernel Hilbert space. We investigate how empirical estimates of these operators converge along realizations of the process under various conditions. In particular, we examine ergodic and strongly mixing processes and prove several asymptotic results as well as finite sample error bounds with a detailed analysis for the Gaussian kernel. We provide applications of our theory in terms of consistency results for kernel PCA with dependent data and the conditional mean embedding of transition probabilities. Finally, we use our approach to examine the nonparametric estimation of Markov transition operators and highlight how our theory can give a consistency analysis for a large family of spectral analysis methods including kernel-based dynamic mode decomposition.
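
The empirical autocovariance operator C_τ = (1/n) Σ_t φ(x_t) ⊗ φ(x_{t+τ}) never has to be formed explicitly: quantities such as its Hilbert-Schmidt norm, and for τ = 0 the spectrum used by kernel PCA, follow from Gram matrices alone. The sketch below assumes a Gaussian kernel, uncentered operators, and a synthetic AR(1) series as the process; the helper names are hypothetical.

```python
import numpy as np

def gaussian_gram(a, b, sigma=1.0):
    """Gram matrix k(a_i, b_j) = exp(-(a_i - b_j)^2 / (2 sigma^2)) for scalar samples."""
    return np.exp(-(a[:, None] - b[None, :]) ** 2 / (2.0 * sigma**2))

def autocov_hs_norm_sq(x, lag, sigma=1.0):
    """Squared Hilbert-Schmidt norm of the uncentered empirical autocovariance
    C_lag = 1/n sum_t outer(phi(x_t), phi(x_{t+lag})), with n = len(x) - lag.

    Uses <outer(phi(a), phi(b)), outer(phi(a'), phi(b'))>_HS = k(a, a') k(b, b').
    """
    a, b = x[: len(x) - lag], x[lag:]
    n = len(a)
    return np.sum(gaussian_gram(a, a, sigma) * gaussian_gram(b, b, sigma)) / n**2

# Synthetic AR(1) series x_{t+1} = 0.9 x_t + noise.
rng = np.random.default_rng(0)
x = np.zeros(400)
for t in range(len(x) - 1):
    x[t + 1] = 0.9 * x[t] + 0.3 * rng.normal()

# Lag 0: the covariance operator shares its nonzero eigenvalues with G / n (kernel PCA).
eigs = np.linalg.eigvalsh(gaussian_gram(x, x) / len(x))
hs0 = autocov_hs_norm_sq(x, lag=0)
hs5 = autocov_hs_norm_sq(x, lag=5)
print("leading eigenvalues:", eigs[-3:][::-1])
print("squared HS norms (lag 0, lag 5):", hs0, hs5)
```

For lag 0 the squared Hilbert-Schmidt norm must equal the sum of the squared eigenvalues of G / n, which is a convenient sanity check for an implementation.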

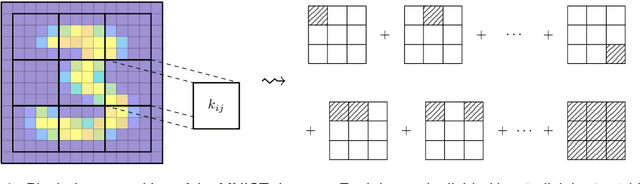

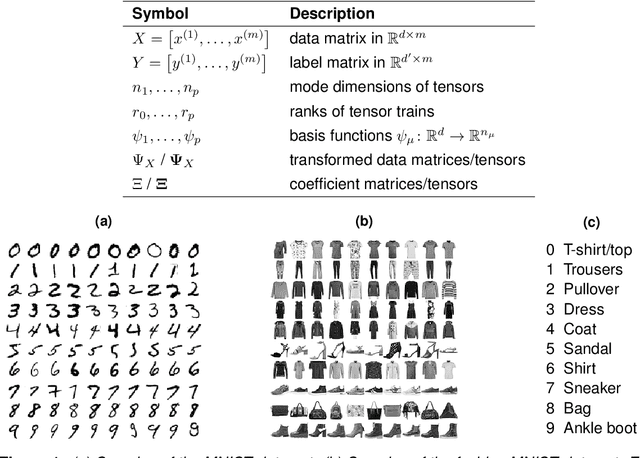

Tensor-based algorithms for image classification

Oct 04, 2019

Abstract: The interest in machine learning with tensor networks has been growing rapidly in recent years. The goal is to exploit tensor-structured basis functions in order to generate exponentially large feature spaces, which are then used for supervised learning. We propose two different tensor approaches for quantum-inspired machine learning. One is a kernel-based reformulation of the previously introduced MANDy, the other an alternating ridge regression in the tensor-train format. We apply both methods to the MNIST and Fashion-MNIST data sets and compare the results with state-of-the-art neural network-based classifiers.

Data-driven approximation of the Koopman generator: Model reduction, system identification, and control

Sep 23, 2019

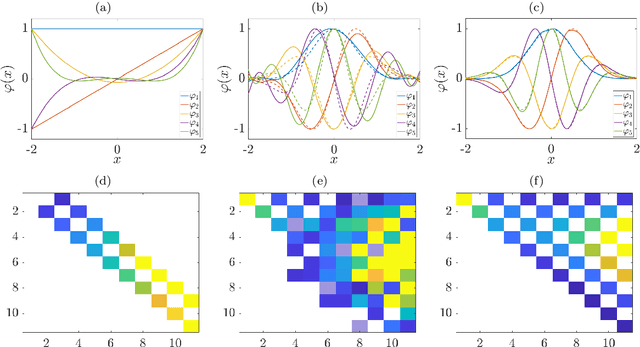

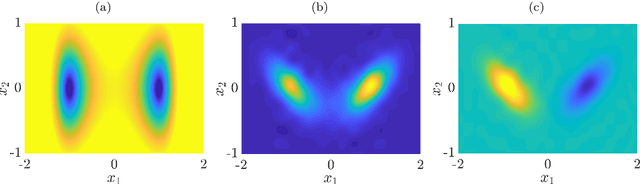

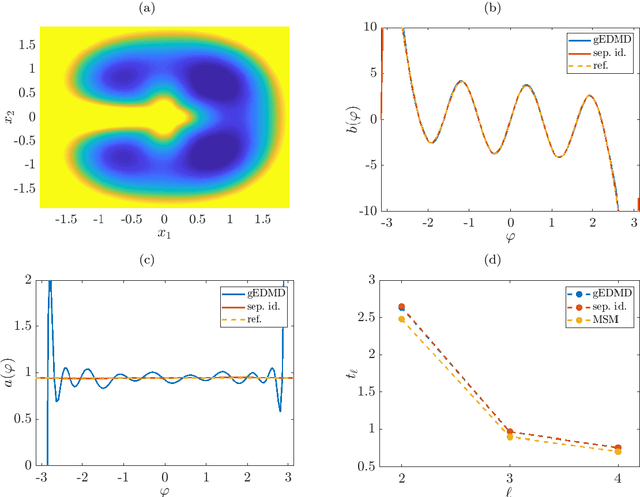

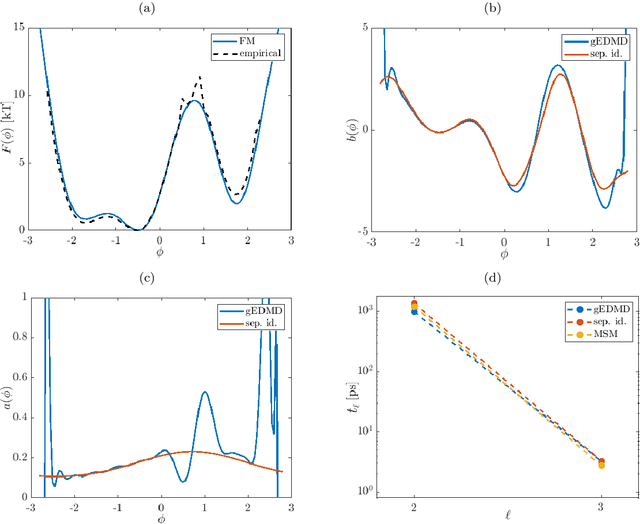

Abstract: We derive a data-driven method for the approximation of the Koopman generator called gEDMD, which can be regarded as a straightforward extension of EDMD (extended dynamic mode decomposition). This approach is applicable to deterministic and stochastic dynamical systems. It can be used for computing eigenvalues, eigenfunctions, and modes of the generator and for system identification. In addition to learning the governing equations of deterministic systems, which then reduces to SINDy (sparse identification of nonlinear dynamics), it is possible to identify the drift and diffusion terms of stochastic differential equations from data. Moreover, we apply gEDMD to derive coarse-grained models of high-dimensional systems, and also to determine efficient model predictive control strategies. We highlight relationships with other methods and demonstrate the efficacy of the proposed methods using several guiding examples and prototypical molecular dynamics problems.
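
As a minimal illustration of the gEDMD idea, consider the one-dimensional Ornstein-Uhlenbeck process dX = -αX dt + sqrt(2/β) dW, whose generator is (Lf)(x) = -αx f'(x) + β^{-1} f''(x) and whose eigenvalues are 0, -α, -2α, and so on. With the monomials 1, x, ..., x^p as dictionary, the generator maps the span of the dictionary into itself, so the estimate should recover the first p + 1 eigenvalues up to rounding. The sketch assumes drift and diffusion are known at the sample points; the function name and parameter values are illustrative, and the matrix convention L = C_0^+ C_1 is one common choice.

```python
import numpy as np

def gedmd_ou_eigenvalues(x, alpha, beta, degree):
    """gEDMD sketch for dX = -alpha X dt + sqrt(2/beta) dW with monomials 1, x, ..., x^degree.

    Drift b(x) = -alpha x and diffusion sigma^2 = 2/beta are assumed known at the
    samples, so (L psi_k)(x) = b(x) psi_k'(x) + (1/2) sigma^2 psi_k''(x) is exact.
    """
    k = np.arange(degree + 1)[:, None]                        # exponents, shape (n, 1)
    psi = x[None, :] ** k                                     # Psi_X, shape (n, m)
    dpsi = k * x[None, :] ** np.maximum(k - 1, 0)             # first derivatives
    ddpsi = k * (k - 1) * x[None, :] ** np.maximum(k - 2, 0)  # second derivatives
    gen_psi = (-alpha * x)[None, :] * dpsi + (1.0 / beta) * ddpsi  # dPsi_X
    m = x.size
    C0 = psi @ psi.T / m
    C1 = psi @ gen_psi.T / m
    L = np.linalg.pinv(C0) @ C1                               # matrix approximation of the generator
    return np.sort(np.linalg.eigvals(L).real)[::-1]

rng = np.random.default_rng(0)
x = rng.uniform(-2.0, 2.0, 500)
ev = gedmd_ou_eigenvalues(x, alpha=1.0, beta=4.0, degree=4)
print(np.round(ev, 6))   # approximately 0, -1, -2, -3, -4, i.e. -alpha * k
```

Replacing the exact drift and diffusion with finite-difference estimates from trajectory data turns the same computation into a purely data-driven method.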

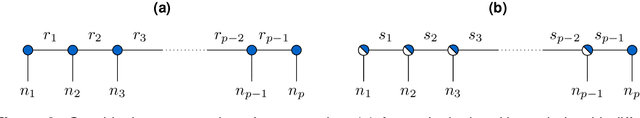

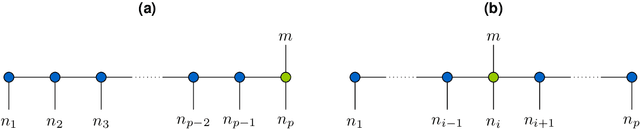

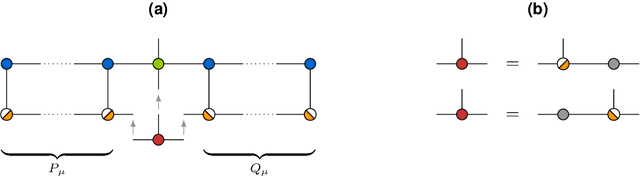

Tensor-based EDMD for the Koopman analysis of high-dimensional systems

Aug 12, 2019

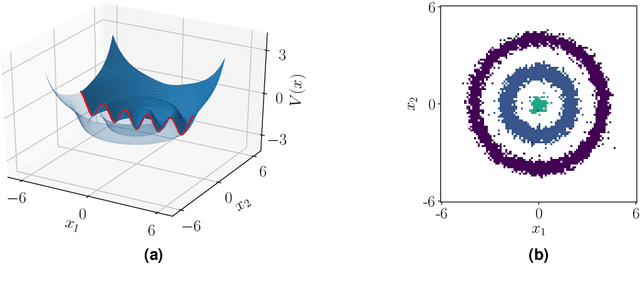

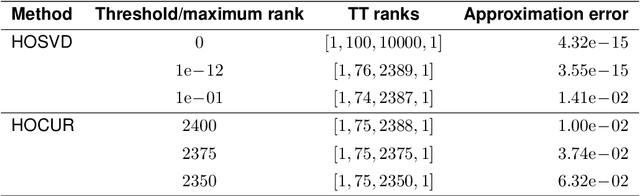

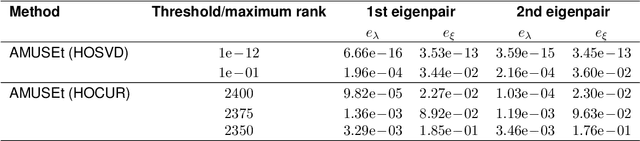

Abstract: Recent years have seen rapid advances in the data-driven analysis of dynamical systems based on Koopman operator theory, with extended dynamic mode decomposition (EDMD) being a cornerstone of the field. On the other hand, low-rank tensor product approximations, in particular the tensor train (TT) format, have become a valuable tool for the solution of large-scale problems in a number of fields. In this work, we combine EDMD and the TT format, enabling the application of EDMD to high-dimensional problems in conjunction with a large set of features. We present the construction of different TT representations of tensor-structured data arrays. Furthermore, we derive efficient algorithms to solve the EDMD eigenvalue problem based on those representations and to project the data into a low-dimensional representation defined by the eigenvectors. We prove that there is a physical interpretation of the procedure and demonstrate its capabilities by applying the method to benchmark data sets of molecular dynamics simulation.
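
The tensor-train representations target the cost of plain EDMD with tensor-product dictionaries, whose size grows exponentially with the dimension. For orientation, a minimal dense-matrix EDMD (no TT compression) on a two-dimensional linear map with the product dictionary {x1^i x2^j} might look as follows. The diagonal map, the sample size, and the helper names are assumptions chosen so that the Koopman eigenvalues λ1^i λ2^j are known in closed form.

```python
import numpy as np
from itertools import product

def product_dictionary(X, degree):
    """Tensor-product monomials x1^i * x2^j, 0 <= i, j <= degree, for samples X of shape (2, m)."""
    return np.array([X[0] ** i * X[1] ** j
                     for i, j in product(range(degree + 1), repeat=2)])

def edmd_eigenvalues(X, Y, degree):
    """Dense EDMD: K = C0^+ C1 with C0 = Psi_X Psi_X^T / m and C1 = Psi_X Psi_Y^T / m."""
    psi_x, psi_y = product_dictionary(X, degree), product_dictionary(Y, degree)
    m = X.shape[1]
    C0 = psi_x @ psi_x.T / m
    C1 = psi_x @ psi_y.T / m
    K = np.linalg.pinv(C0) @ C1
    return np.sort(np.linalg.eigvals(K).real)[::-1]

# Data pairs (x, F(x)) for the linear map F(x) = (0.9 x1, 0.5 x2).
rng = np.random.default_rng(0)
X = rng.uniform(-1.0, 1.0, size=(2, 200))
Y = np.array([[0.9], [0.5]]) * X
ev = edmd_eigenvalues(X, Y, degree=2)
print(np.round(ev, 4))
```

With d variables and p + 1 monomials per variable, the dictionary has (p + 1)^d elements; that exponential growth of C0 and C1 is what the TT format is meant to avoid.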

Kernel Conditional Density Operators

May 27, 2019

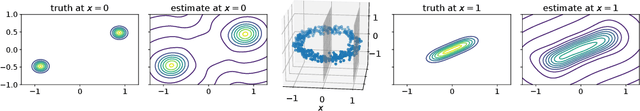

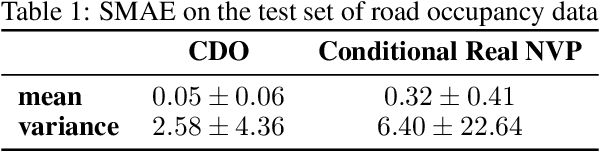

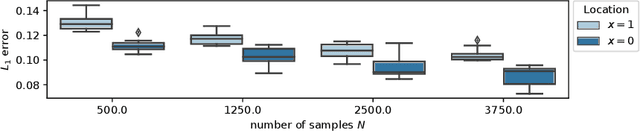

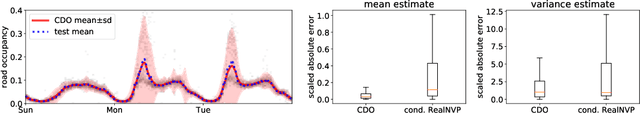

Abstract: We introduce a conditional density estimation model termed the conditional density operator. It naturally captures multivariate, multimodal output densities and is competitive with recent neural conditional density models and Gaussian processes. To derive the model, we propose a novel approach to the reconstruction of probability densities from their kernel mean embeddings by drawing connections to estimation of Radon-Nikodym derivatives in the reproducing kernel Hilbert space (RKHS). We prove finite sample error bounds which are independent of problem dimensionality. Furthermore, the resulting conditional density model is applied to real-world data and we demonstrate its versatility and competitive performance.

A kernel-based method for coarse graining complex dynamical systems

Apr 18, 2019

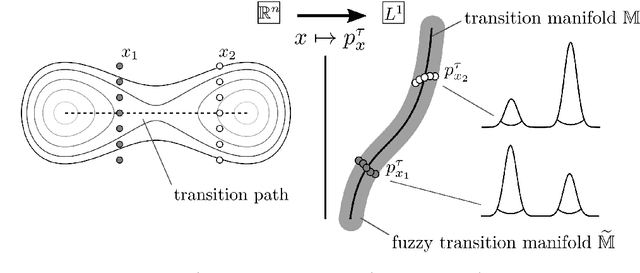

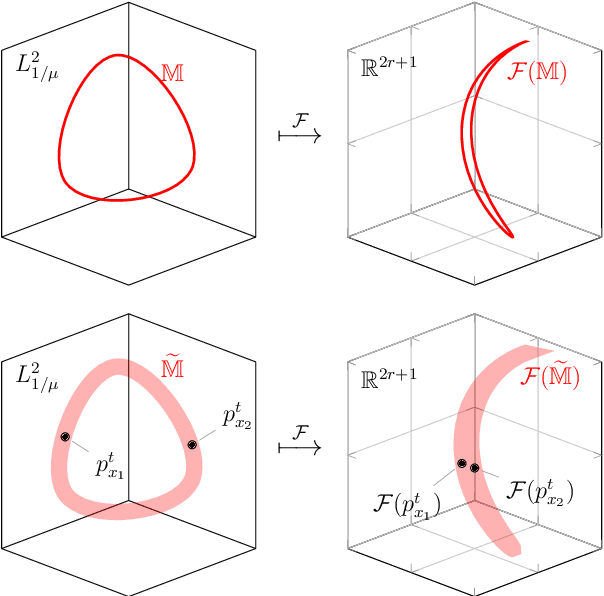

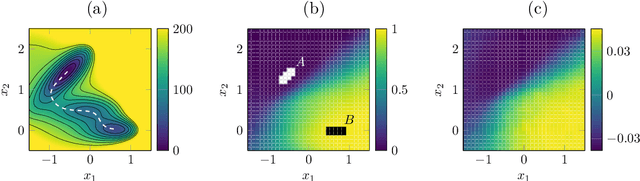

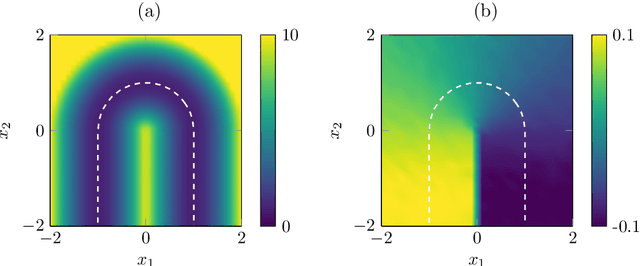

Abstract: We present a novel kernel-based machine learning algorithm for identifying the low-dimensional geometry of the effective dynamics of high-dimensional multiscale stochastic systems. Recently, the authors developed a mathematical framework for the computation of optimal reaction coordinates of such systems that is based on learning a parametrization of a low-dimensional transition manifold in a certain function space. In this article, we enhance this approach by embedding and learning this transition manifold in a reproducing kernel Hilbert space, exploiting the favorable properties of kernel embeddings. Under mild assumptions on the kernel, the manifold structure is shown to be preserved under the embedding, and distortion bounds can be derived. This leads to a more robust and more efficient algorithm compared to previous parametrization approaches.