Siwei Ju

Behavior-Constrained Reinforcement Learning with Receding-Horizon Credit Assignment for High-Performance Control

Apr 03, 2026

Abstract:Learning high-performance control policies that remain consistent with expert behavior is a fundamental challenge in robotics. Reinforcement learning can discover high-performing strategies but often departs from desirable human behavior, whereas imitation learning is limited by demonstration quality and struggles to improve beyond expert data. We propose a behavior-constrained reinforcement learning framework that improves beyond demonstrations while explicitly controlling deviation from expert behavior. Because expert-consistent behavior in dynamic control is inherently trajectory-level, we introduce a receding-horizon predictive mechanism that models short-term future trajectories and provides look-ahead rewards during training. To account for the natural variability of human behavior under disturbances and changing conditions, we further condition the policy on reference trajectories, allowing it to represent a distribution of expert-consistent behaviors rather than a single deterministic target. Empirically, we evaluate the approach in high-fidelity race car simulation using data from professional drivers, a domain characterized by extreme dynamics and narrow performance margins. The learned policies achieve competitive lap times while maintaining close alignment with expert driving behavior, outperforming baseline methods in both performance and imitation quality. Beyond standard benchmarks, we conduct human-grounded evaluation in a driver-in-the-loop simulator and show that the learned policies reproduce setup-dependent driving characteristics consistent with the feedback of top-class professional race drivers. These results demonstrate that our method enables learning high-performance control policies that are both optimal and behavior-consistent, and can serve as reliable surrogates for human decision-making in complex control systems.

An Adaptive Human Driver Model for Realistic Race Car Simulations

Mar 03, 2022

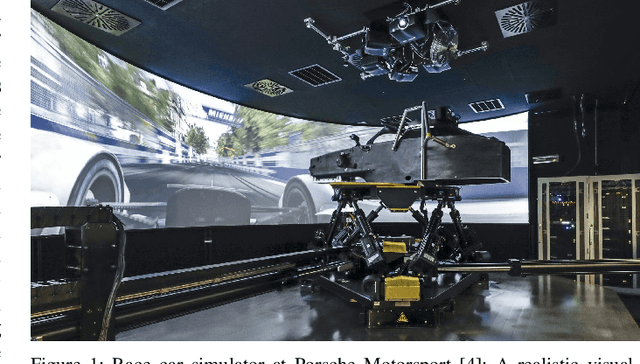

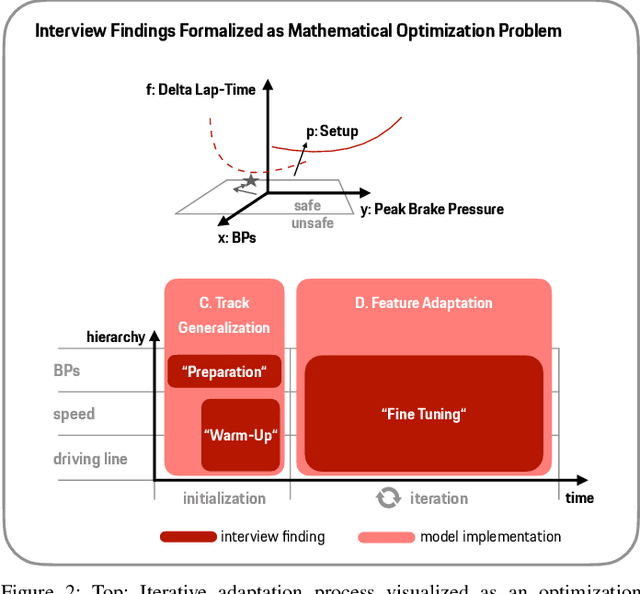

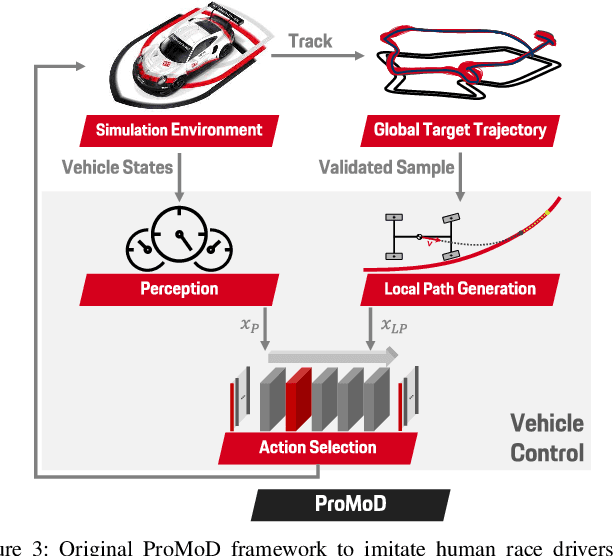

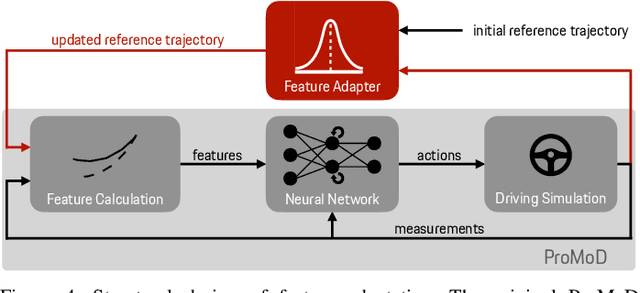

Abstract:Engineering a high-performance race car requires a direct consideration of the human driver using real-world tests or Human-Driver-in-the-Loop simulations. Apart from that, offline simulations with human-like race driver models could make this vehicle development process more effective and efficient but are hard to obtain due to various challenges. With this work, we intend to provide a better understanding of race driver behavior and introduce an adaptive human race driver model based on imitation learning. Using existing findings and an interview with a professional race engineer, we identify fundamental adaptation mechanisms and how drivers learn to optimize lap time on a new track. Subsequently, we use these insights to develop generalization and adaptation techniques for a recently presented probabilistic driver modeling approach and evaluate it using data from professional race drivers and a state-of-the-art race car simulator. We show that our framework can create realistic driving line distributions on unseen race tracks with almost human-like performance. Moreover, our driver model optimizes its driving lap by lap, correcting driving errors from previous laps while achieving faster lap times. This work contributes to a better understanding and modeling of the human driver, aiming to expedite simulation methods in the modern vehicle development process and potentially supporting automated driving and racing technologies.
