Sichen Yang

Johns Hopkins University

Multi-level meta-reinforcement learning with skill-based curriculum

Mar 09, 2026

Abstract: We consider problems in sequential decision making with natural multi-level structure, where sub-tasks are assembled together to accomplish complex goals. Systematically inferring and leveraging hierarchical structure has remained a longstanding challenge; we describe an efficient multi-level procedure for repeatedly compressing Markov decision processes (MDPs), wherein a parametric family of policies at one level is treated as single actions in the compressed MDPs at higher levels, while preserving the semantic meanings and structure of the original MDP, and mimicking the natural logic to address a complex MDP. Higher-level MDPs are themselves independent MDPs with less stochasticity, and may be solved using existing algorithms. As a byproduct, spatial or temporal scales may be coarsened at higher levels, making it more efficient to find long-term optimal policies. The multi-level representation delivered by this procedure decouples sub-tasks from each other and usually greatly reduces unnecessary stochasticity and the policy search space, leading to fewer iterations and computations when solving the MDPs. A second fundamental aspect of this work is that these multi-level decompositions plus the factorization of policies into embeddings (problem-specific) and skills (including higher-order functions) yield new transfer opportunities of skills across different problems and different levels. This whole process is framed within curriculum learning, wherein a teacher organizes the student agent's learning process in a way that gradually increases the difficulty of tasks and promotes transfer across MDPs and levels within and across curricula. The consistency of this framework and its benefits can be guaranteed under mild assumptions. We demonstrate abstraction, transferability, and curriculum learning in examples, including MazeBase+, a more complex variant of the MazeBase example.

Exact Recovery of Community Detection in dependent Gaussian Mixture Models

Sep 23, 2022

Abstract: We study the community detection problem on a Gaussian mixture model, in which (1) vertices are divided into $k\geq 2$ distinct communities that are not necessarily equally-sized; (2) the Gaussian perturbations for different entries in the observation matrix are not necessarily independent or identically distributed. We prove necessary and sufficient conditions for the exact recovery of the maximum likelihood estimation (MLE), and discuss the cases when these necessary and sufficient conditions give a sharp threshold. Applications include community detection on a graph where the Gaussian perturbation of the observation on each edge is the sum of i.i.d. Gaussian random variables on its end vertices, in which we explicitly obtain the threshold for the exact recovery of the MLE.

Nonlinear model reduction for slow-fast stochastic systems near manifolds

Apr 05, 2021

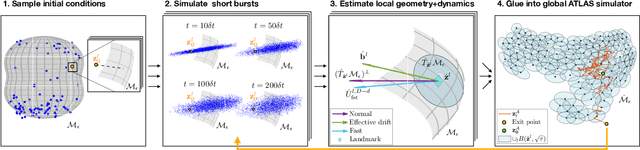

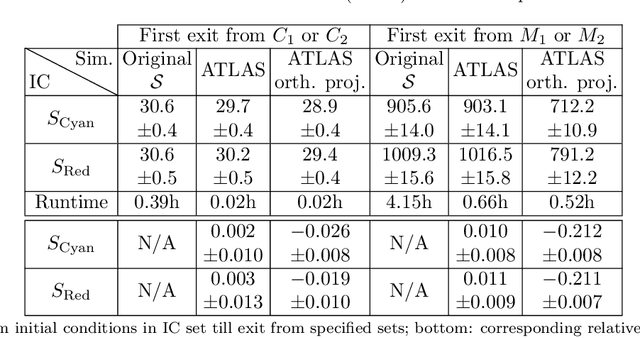

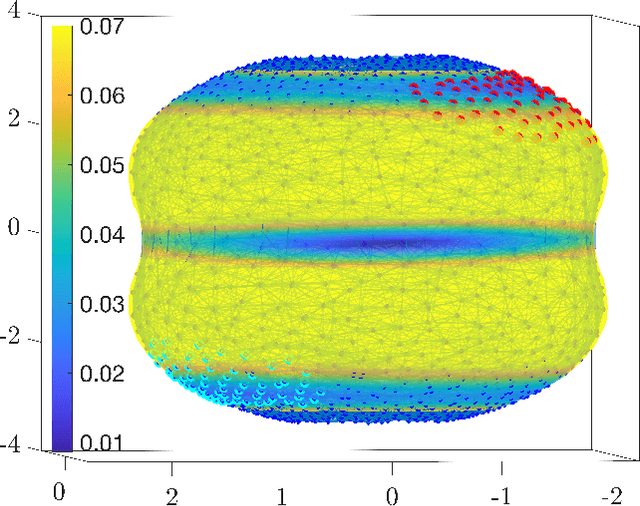

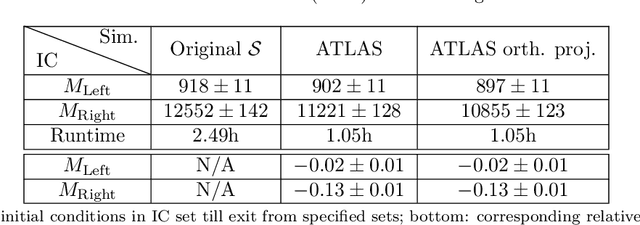

Abstract: We introduce a nonlinear stochastic model reduction technique for high-dimensional stochastic dynamical systems that have a low-dimensional invariant effective manifold with slow dynamics, and high-dimensional, large fast modes. Given only access to a black box simulator from which short bursts of simulation can be obtained, we estimate the invariant manifold, a process of the effective (stochastic) dynamics on it, and construct an efficient simulator thereof. These estimation steps can be performed on-the-fly, leading to efficient exploration of the effective state space, without losing consistency with the underlying dynamics. This construction enables fast and efficient simulation of paths of the effective dynamics, together with estimation of crucial features and observables of such dynamics, including the stationary distribution, identification of metastable states, and residence times and transition rates between them.

Image Super-Resolution Using a Wavelet-based Generative Adversarial Network

Jul 24, 2019

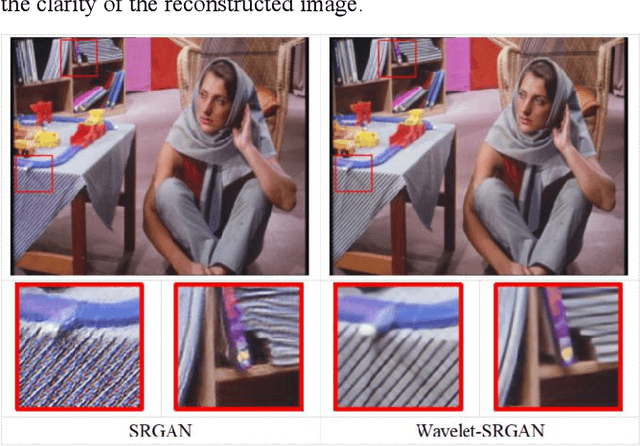

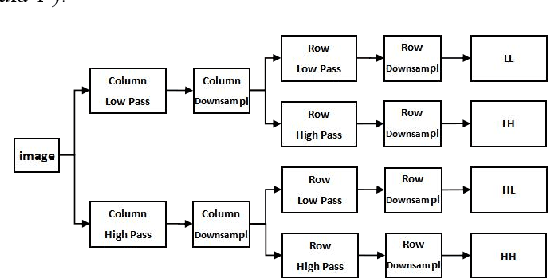

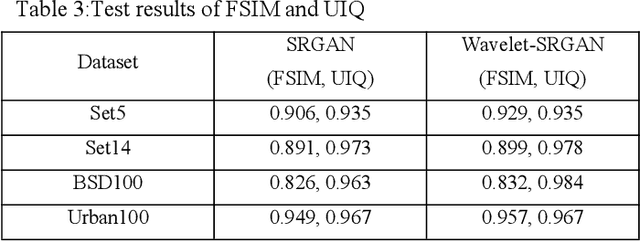

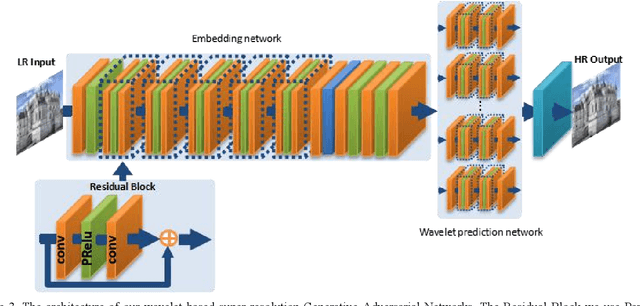

Abstract: In this paper, we consider the problem of super-resolution reconstruction. This is an active topic because super-resolution reconstruction has a wide range of applications in the medical field, remote sensing monitoring, and criminal investigation. Compared with traditional algorithms, current super-resolution reconstruction algorithms based on deep learning greatly improve the clarity of reconstructed pictures. Existing work such as Super-Resolution Using a Generative Adversarial Network (SRGAN) can effectively restore the texture details of the image. However, we verified experimentally that the texture details of the image recovered by SRGAN are not robust. In order to obtain super-resolution reconstructed images with richer high-frequency details, we improve the network structure and propose a super-resolution reconstruction algorithm combining the wavelet transform and a Generative Adversarial Network. The proposed algorithm can efficiently reconstruct high-resolution images with rich global information and local texture details. We trained our model using the PyTorch framework and the VOC2012 dataset, and tested it on the Set5, Set14, BSD100 and Urban100 test datasets.
