Sebastiano Battiato

Department of Mathematics and Computer Science, University of Catania, Italy

Deep Audio Analyzer: a Framework to Industrialize the Research on Audio Forensics

Oct 29, 2023

Abstract: Deep Audio Analyzer is an open source speech framework that aims to simplify the research and development of neural speech processing pipelines, allowing users to conceive, compare and share results in a fast and reproducible way. This paper describes the core architecture designed to support several tasks of common interest in the audio forensics field, and shows how new tasks can be created to customize the framework. With Deep Audio Analyzer, forensic examiners (e.g., from Law Enforcement Agencies) and researchers can visualize audio features, easily evaluate the performance of pretrained models, and create, export and share new audio analysis workflows by combining deep neural network models in a few clicks. One advantage of this tool is that it speeds up research and practical experimentation in audio forensics, while also improving experimental reproducibility through the export and sharing of pipelines. All features are implemented as modules accessible through a Graphical User Interface.

Index Terms: Speech Processing, Deep Learning Audio, Deep Learning Audio Pipeline creation, Audio Forensics.

Improving Video Deepfake Detection: A DCT-Based Approach with Patch-Level Analysis

Oct 17, 2023

Abstract: The term deepfake refers to multimedia content that has been synthetically altered or created from scratch using generative models. This phenomenon has become widespread thanks to increasingly accurate and efficient architectures capable of rendering manipulated content indistinguishable from real content. To fight the illicit use of this powerful technology, it has become necessary to develop algorithms able to distinguish synthetic content from real content. This study presents a new algorithm for detecting deepfakes in digital videos, with the main goal of creating a method that is fast and explainable from a forensic perspective. To this end, the I-frames were extracted, enabling faster computation and analysis than the approaches described in the literature. In addition, to identify the most discriminative regions within individual video frames, the entire frame, background, face, eyes, nose, mouth, and face frame were analyzed separately. The Beta components were extracted from the AC coefficients of the Discrete Cosine Transform (DCT) and used as input to standard classifiers (e.g., k-NN, SVM) in order to identify the frequencies most discriminative for the task. Experimental results on the FaceForensics++ and Celeb-DF (v2) datasets show that the eye and mouth regions are the most discriminative, determining the nature of a video more reliably than analysis of the whole frame. The proposed method is analytical, fast, and requires little computational power.
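
A minimal sketch of the Beta feature extraction may help. It assumes that each Beta is the scale parameter of a zero-mean Laplacian fitted to one AC frequency across all 8×8 blocks of a region (a common model for DCT AC coefficients), whose maximum-likelihood estimate is the mean absolute coefficient. The block size, the Laplacian model, and the names `dct2_block` and `beta_features` are assumptions for illustration, not the authors' code.

```python
import math

N = 8  # assumed block size (JPEG-style 8x8 blocks)


def dct2_block(block):
    """Orthonormal 2-D DCT-II of an N x N block given as a list of rows."""
    def alpha(k):
        return math.sqrt(1.0 / N) if k == 0 else math.sqrt(2.0 / N)

    out = [[0.0] * N for _ in range(N)]
    for u in range(N):
        for v in range(N):
            s = 0.0
            for x in range(N):
                for y in range(N):
                    s += (block[x][y]
                          * math.cos((2 * x + 1) * u * math.pi / (2 * N))
                          * math.cos((2 * y + 1) * v * math.pi / (2 * N)))
            out[u][v] = alpha(u) * alpha(v) * s
    return out


def beta_features(region):
    """Return 63 Beta values, one per AC frequency (u, v) != (0, 0).

    region: 2-D list of grey-level pixels (e.g. a cropped eye or mouth area);
    rows and columns beyond a multiple of N are ignored. Each Beta is the
    maximum-likelihood scale of a zero-mean Laplacian, i.e. mean(|coef|).
    """
    h = len(region) - len(region) % N
    w = len(region[0]) - len(region[0]) % N
    if h == 0 or w == 0:
        raise ValueError("region must be at least 8x8 pixels")
    abs_sums = [[0.0] * N for _ in range(N)]
    n_blocks = 0
    for top in range(0, h, N):
        for left in range(0, w, N):
            block = [row[left:left + N] for row in region[top:top + N]]
            coefs = dct2_block(block)
            for u in range(N):
                for v in range(N):
                    abs_sums[u][v] += abs(coefs[u][v])
            n_blocks += 1
    return [abs_sums[u][v] / n_blocks
            for u in range(N) for v in range(N) if (u, v) != (0, 0)]
```

The resulting 63-dimensional vector per region (eyes, mouth, whole frame, and so on) is the kind of input a k-NN or SVM would consume. The quadruple loop is O(N⁴) per block and is written for readability only; a practical version would use a fast transform such as `scipy.fft.dctn(block, norm="ortho")`.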

Innovative Methods for Non-Destructive Inspection of Handwritten Documents

Oct 17, 2023

Abstract: Handwritten document analysis is an area of forensic science whose goal is to establish the authorship of documents by examining their inherent characteristics. Law enforcement agencies use standard protocols based on the manual processing of handwritten documents. This approach is time-consuming, often subjective, and not replicable. To overcome these limitations, this paper presents a framework that extracts and analyzes intrinsic measures of handwritten documents related to text line heights, spaces between words, and character sizes, using image processing and deep learning techniques. The final feature vector for each document consists of the mean and standard deviation of every type of measure collected. Authorship can then be discerned by computing the Euclidean distance between the feature vectors of the documents being compared. We also propose a new and challenging dataset of 362 handwritten manuscripts written on paper and on digital devices by 124 different people. Our study pioneers the comparison between traditionally handwritten documents and those produced with digital tools (e.g., tablets). Experimental results demonstrate the ability of our method to objectively determine authorship across different writing media, outperforming the state of the art.
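
The comparison step can be sketched as follows. The measure names, the use of the population standard deviation, and nearest-neighbour attribution over a set of reference documents are assumptions for illustration; the abstract does not state whether the features are normalized before the distance is computed.

```python
import math
from statistics import mean, pstdev


def feature_vector(measures):
    """Build [mean, std] pairs for each measure type.

    measures: dict mapping a (hypothetical) measure name such as
    "line_height", "word_gap" or "char_size" to the list of values
    measured on one document. Names are sorted so every document
    yields its features in the same order.
    """
    vec = []
    for name in sorted(measures):
        values = measures[name]
        vec.extend([float(mean(values)), float(pstdev(values))])
    return vec


def distance(doc_a, doc_b):
    """Euclidean distance between two documents' feature vectors."""
    return math.dist(feature_vector(doc_a), feature_vector(doc_b))


def attribute(questioned, references):
    """Return the author whose reference document is closest to the questioned one.

    references: dict mapping author -> measures dict (hypothetical layout).
    """
    return min(references,
               key=lambda author: distance(questioned, references[author]))
```

Because line heights, word gaps and character sizes live on different scales, a real system might standardize each component before computing distances; the sketch leaves them raw.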

Deep Learning Algorithm for Advanced Level-3 Inverse-Modeling of Silicon-Carbide Power MOSFET Devices

Oct 16, 2023

Abstract: Inverse modelling with deep learning algorithms involves training a deep architecture to predict a device's parameters from its static behaviour. Inverse device modelling is suitable for reconstructing the drifted physical parameters of devices degraded over time, or for retrieving their physical configuration. Many variables can influence the performance of an inverse modelling method. In this work, the authors propose a deep learning method trained to retrieve the physical parameters of the Level-3 model of a Silicon-Carbide Power MOSFET (SiC Power MOS). SiC devices are used in applications where classical silicon devices fail because of high temperatures or high switching requirements. The key application of SiC power devices is in the automotive field (in particular, electric vehicles). Due to intrinsic ageing or highly stressful environments, SiC Power MOS devices show a significant drift of their physical parameters, which can be monitored using inverse modelling. The aim of this work is to provide a possible deep learning-based solution for retrieving the physical parameters of the SiC Power MOSFET. Preliminary results on retrieving the channel length of the device are reported. The channel length of a power MOSFET is a key parameter involved in the static and dynamic behaviour of the device. The experimental results reported in this work confirm the effectiveness of a multi-layer perceptron designed to retrieve this parameter.

Not with my name! Inferring artists' names of input strings employed by Diffusion Models

Jul 25, 2023

Abstract: Diffusion Models (DM) are highly effective at generating realistic, high-quality images. However, these models lack creativity and merely compose outputs based on their training data, guided by a textual input provided at creation time. Is it acceptable to generate images reminiscent of an artist by using the artist's name as input? If a DM is able to replicate an artist's work, this implies that it was trained on some or all of that artist's artworks, thus violating copyright. This paper presents a preliminary study to infer the probability that an artist's name was used in the input string of a generated image. To this end, we focused only on images generated by the well-known DALL-E 2 and collected images (both original and generated) of five renowned artists. Finally, a dedicated Siamese Neural Network was employed to obtain a first estimate of this probability. Experimental results demonstrate that our approach is a solid starting point and can be employed as a prior for predicting the complete input string of an investigated image. Dataset and code are available at https://github.com/ictlab-unict/not-with-my-name

Boosting multiple sclerosis lesion segmentation through attention mechanism

Apr 21, 2023

Abstract: Magnetic resonance imaging is a fundamental tool for diagnosing multiple sclerosis and monitoring its progression. Although several attempts have been made to segment multiple sclerosis lesions using artificial intelligence, fully automated analysis is not yet available. State-of-the-art methods rely on slight variations of segmentation architectures (e.g., U-Net). However, recent research has shown that exploiting temporal-aware features and attention mechanisms can significantly boost traditional architectures. This paper proposes a framework based on a U-Net architecture augmented with a convolutional long short-term memory layer and an attention mechanism, which segments and quantifies multiple sclerosis lesions detected in magnetic resonance images. Quantitative and qualitative evaluation on challenging examples showed that the method outperforms previous state-of-the-art approaches, with an overall Dice score of 89%, and demonstrated robustness and generalization ability on previously unseen test samples from a new dedicated dataset currently under construction.
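
For reference, the Dice score measures the overlap between a predicted lesion mask P and the ground-truth mask G as 2|P ∩ G| / (|P| + |G|). A minimal sketch on flattened binary masks follows; returning 1.0 when both masks are empty is a convention assumed here, not one stated in the abstract.

```python
def dice_score(pred, target):
    """Dice coefficient between two flattened binary masks (sequences of 0/1)."""
    if len(pred) != len(target):
        raise ValueError("masks must have the same number of voxels")
    intersection = sum(1 for p, t in zip(pred, target) if p and t)
    total = sum(1 for p in pred if p) + sum(1 for t in target if t)
    if total == 0:
        return 1.0  # both masks empty: treated as perfect agreement (assumption)
    return 2.0 * intersection / total
```

For 3-D MRI volumes the masks would simply be flattened into one sequence of voxels before calling the function.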

Early detection of hip periprosthetic joint infections through CNN on Computed Tomography images

Apr 18, 2023

Abstract: Early detection of an infection prior to prosthesis removal (e.g., hips, knees or other areas) would provide significant benefits to patients. Currently, detection is carried out only retrospectively, with a limited number of methods relying on biometric or other medical data. The automatic detection of periprosthetic joint infections from tomography imaging has never been addressed before. This study introduces a novel method for the early detection of hip prosthesis infections through the analysis of Computed Tomography images. The proposed solution is based on a novel ResNeSt Convolutional Neural Network architecture trained on samples from more than 100 patients. The solution showed excellent performance in detecting infections, achieving high accuracy and F-score in experiments.

UniCT DMI Solution for 3rd COV19D Competition on COVID-19 Detection through attention-based CNN for CT Scan

Mar 22, 2023

Abstract: This paper presents our solution for the first challenge of the 3rd COV19D competition, part of the "AI-enabled Medical Image Analysis Workshop" organized at the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP) 2023. Our proposed solution uses a ResNet as the backbone network, with the addition of attention mechanisms. The ResNet provides an effective feature extractor for the classification task, while the attention mechanisms improve the model's ability to focus on important regions of interest within the images. We conducted extensive experiments on the provided dataset and achieved promising results. Our proposed approach has the potential to assist in the accurate diagnosis of COVID-19 from chest computed tomography images, which can aid in the early detection and management of the disease.

Level Up the Deepfake Detection: a Method to Effectively Discriminate Images Generated by GAN Architectures and Diffusion Models

Mar 01, 2023

Abstract: Image deepfake detection, i.e., the binary classification task of discriminating real images from those generated by Artificial Intelligence (AI) models, has been widely addressed by the scientific community. In this work, the deepfake detection and recognition task was investigated by collecting a dedicated dataset of pristine images and fake ones generated by 9 different Generative Adversarial Network (GAN) architectures and by 4 additional Diffusion Models (DM). A hierarchical multi-level approach was then introduced to solve three different deepfake detection and recognition tasks: (i) Real vs. AI-generated; (ii) GANs vs. DMs; (iii) recognition of the specific AI architecture. Experimental results demonstrated more than 97% classification accuracy in each case, outperforming state-of-the-art methods.
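
The three-level decision can be sketched as a cascade. The classifiers are passed in as plain functions, and their labels ("real", "AI", "GAN", "DM", and the architecture names) are placeholders; using a separate level-(iii) classifier for each family is an assumption, since the abstract does not specify how the architecture-recognition level is organized.

```python
from typing import Callable, Sequence, Tuple

Features = Sequence[float]
Classifier = Callable[[Features], str]


def hierarchical_predict(
    features: Features,
    real_vs_ai: Classifier,   # level (i): returns "real" or "AI"
    gan_vs_dm: Classifier,    # level (ii): returns "GAN" or "DM"
    gan_arch: Classifier,     # level (iii): names a GAN architecture
    dm_arch: Classifier,      # level (iii): names a diffusion model
) -> Tuple[str, ...]:
    """Route one image's features through the levels, stopping early for real images."""
    if real_vs_ai(features) == "real":
        return ("real",)
    family = gan_vs_dm(features)
    arch = gan_arch(features) if family == "GAN" else dm_arch(features)
    return ("AI", family, arch)


# Toy threshold classifiers with placeholder labels, purely for illustration.
result = hierarchical_predict(
    [0.9, 0.2],
    real_vs_ai=lambda f: "AI" if f[0] > 0.5 else "real",
    gan_vs_dm=lambda f: "GAN" if f[1] < 0.5 else "DM",
    gan_arch=lambda f: "gan_arch_1",
    dm_arch=lambda f: "dm_arch_1",
)
# result == ("AI", "GAN", "gan_arch_1")
```

A cascade like this lets each level specialize on a narrower problem, at the cost of errors at level (i) or (ii) propagating to the levels below.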

Transfer Learning via Test-Time Neural Networks Aggregation

Jun 27, 2022

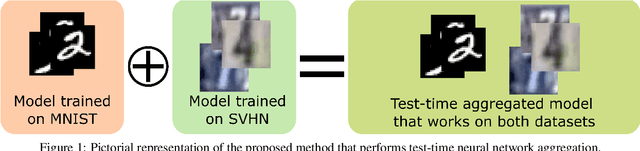

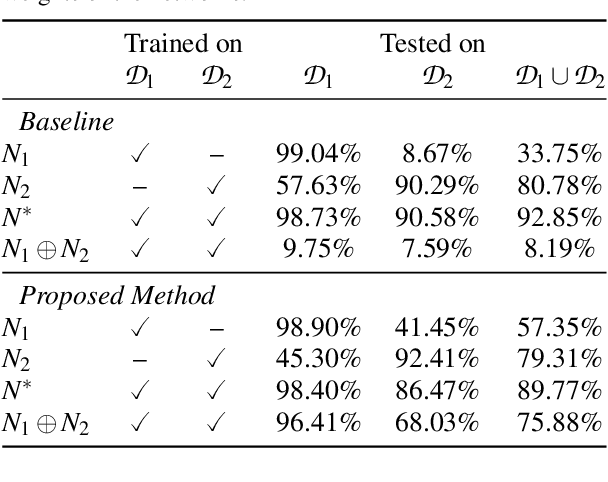

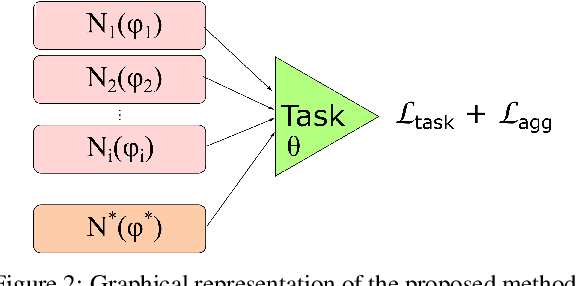

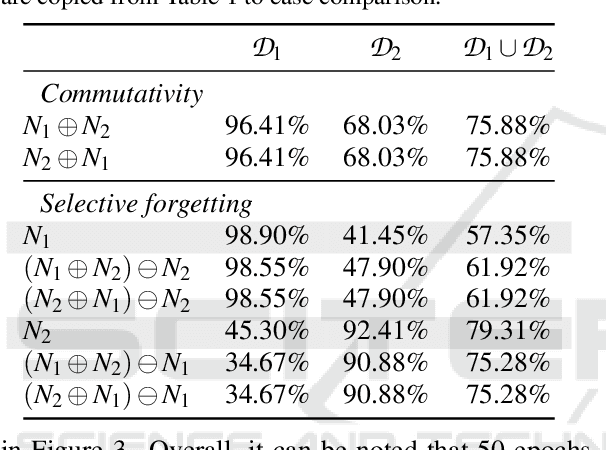

Abstract: It has been demonstrated that deep neural networks outperform traditional machine learning. However, deep networks lack generalisability: they do not perform as well on a new (testing) set drawn from a different distribution, due to domain shift. To tackle this known issue, several transfer learning approaches have been proposed, in which the knowledge of a trained model is transferred into another to improve performance on different data. However, most of these approaches require additional training steps, or they suffer from catastrophic forgetting, which occurs when a trained model overwrites previously learnt knowledge. We address both problems with a novel transfer learning approach that uses network aggregation. We train dataset-specific networks together with an aggregation network in a unified framework. The loss function includes two main components: a task-specific loss (such as cross-entropy) and an aggregation loss. The proposed aggregation loss allows our model to learn how trained deep network parameters can be aggregated with an aggregation operator. We demonstrate that the proposed approach learns model aggregation at test time without any further training step, reducing the burden of transfer learning to a simple arithmetical operation. The proposed approach achieves performance comparable to the baseline. Moreover, we show that if the aggregation operator has an inverse, our model also inherently allows for selective forgetting, i.e., the aggregated model can forget one of the datasets it was trained on while retaining information on the others.

* 8 pages
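
The test-time arithmetic can be illustrated with a minimal sketch. It assumes that the aggregation operator is element-wise addition over flattened parameter vectors, whose inverse is subtraction; the abstract does not name the operator, and the sketch deliberately omits the joint training with the aggregation loss that makes such sums meaningful in the first place. The functions `aggregate` and `forget` are hypothetical.

```python
from typing import Dict, List

Params = List[float]  # a network's parameters, flattened


def aggregate(models: Dict[str, Params]) -> Params:
    """Combine dataset-specific parameter vectors with the (assumed) sum operator."""
    vectors = list(models.values())
    if not vectors:
        raise ValueError("need at least one model")
    size = len(vectors[0])
    if any(len(v) != size for v in vectors):
        raise ValueError("all parameter vectors must have the same length")
    return [sum(values) for values in zip(*vectors)]


def forget(aggregated: Params, removed: Params) -> Params:
    """Selective forgetting: apply the inverse operator (subtraction) to drop one model."""
    if len(aggregated) != len(removed):
        raise ValueError("parameter vectors must have the same length")
    return [a - r for a, r in zip(aggregated, removed)]
```

Forgetting dataset B from the aggregate of A, B and C yields the same vector as aggregating A and C directly, which is the selective-forgetting property described above. With a non-invertible operator such as an element-wise maximum, no such `forget` step would exist.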
