Sangheon Lee

VLM-SubtleBench: How Far Are VLMs from Human-Level Subtle Comparative Reasoning?

Mar 09, 2026

Abstract: The ability to distinguish subtle differences between visually similar images is essential for diverse domains such as industrial anomaly detection, medical imaging, and aerial surveillance. While comparative reasoning benchmarks for vision-language models (VLMs) have recently emerged, they primarily focus on images with large, salient differences and fail to capture the nuanced reasoning required for real-world applications. In this work, we introduce VLM-SubtleBench, a benchmark designed to evaluate VLMs on subtle comparative reasoning. Our benchmark covers ten difference types (Attribute, State, Emotion, Temporal, Spatial, Existence, Quantity, Quality, Viewpoint, and Action) and curates paired question-image sets reflecting these fine-grained variations. Unlike prior benchmarks restricted to natural image datasets, our benchmark spans diverse domains, including industrial, aerial, and medical imagery. Through extensive evaluation of both proprietary and open-source VLMs, we reveal systematic gaps between model and human performance across difference types and domains, and provide controlled analyses highlighting where VLMs' reasoning sharply deteriorates. Together, our benchmark and findings establish a foundation for advancing VLMs toward human-level comparative reasoning.

Masked Deep Q-Recommender for Effective Question Scheduling

Dec 19, 2021

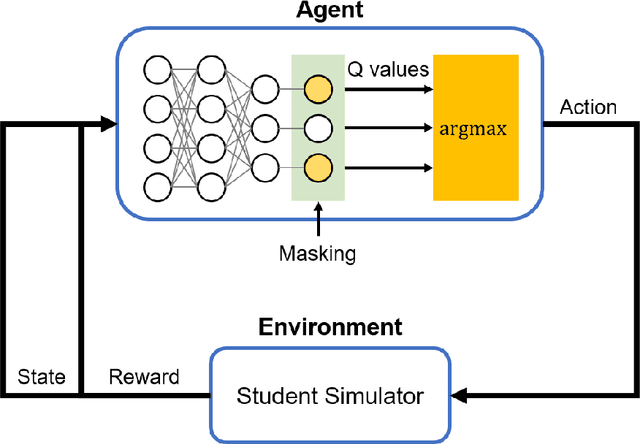

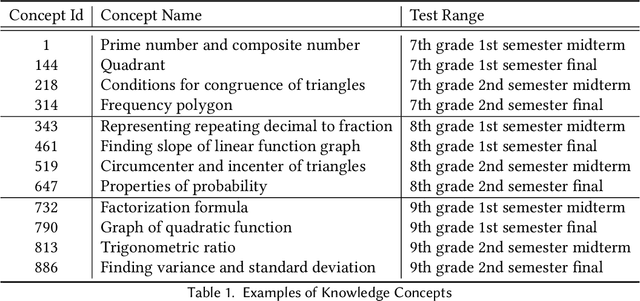

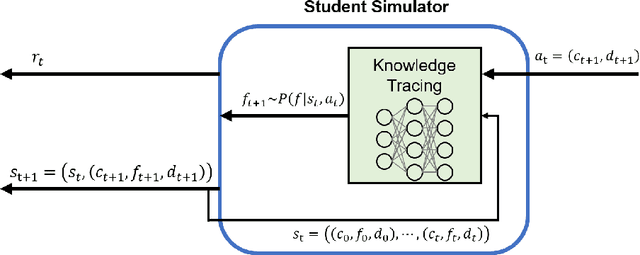

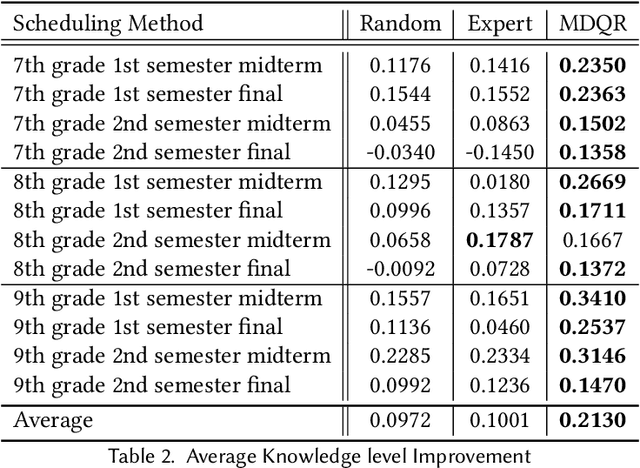

Abstract: Providing appropriate questions according to a student's knowledge level is imperative in personalized learning. However, it requires a lot of manual effort for teachers to understand students' knowledge status and provide optimal questions accordingly. To address this problem, we introduce a question scheduling model that can effectively boost student knowledge level using Reinforcement Learning (RL). Our proposed method first evaluates students' concept-level knowledge using a knowledge tracing (KT) model. Given the predicted student knowledge, an RL-based recommender predicts the benefit of each question. With curriculum range restriction and a duplicate penalty, the recommender selects questions sequentially until it reaches the predefined number of questions. In an experimental setting using a student simulator, which gives 20 questions per day for two weeks, questions recommended by the proposed method increased the average student knowledge level by 21.3%, outperforming an expert-designed schedule baseline, which yielded a 10% increase in student knowledge levels.
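The selection step described in this abstract (score each question, restrict to the curriculum range, penalize duplicates, pick sequentially up to a fixed count) can be illustrated with a minimal sketch. This is not the paper's implementation: the function name `select_questions`, the fixed `q_values` array, the additive form of `duplicate_penalty`, and the greedy argmax are all assumptions made for illustration. In particular, the abstract does not say how the penalty is applied, and an actual recommender would presumably re-estimate question benefits from the updated student state after each pick.

```python
import numpy as np


def select_questions(q_values, in_curriculum, num_questions, duplicate_penalty=1.0):
    """Greedy masked selection (hypothetical sketch, not the authors' method).

    q_values:          assumed per-question benefit estimates from a recommender
    in_curriculum:     boolean mask, True where a question is within the allowed range
    num_questions:     predefined number of questions to schedule
    duplicate_penalty: assumed additive penalty per previous selection of a question
    """
    q = np.asarray(q_values, dtype=float)
    allowed = np.asarray(in_curriculum, dtype=bool)
    if not allowed.any():
        raise ValueError("curriculum mask excludes every question")

    times_chosen = np.zeros(len(q), dtype=int)
    schedule = []
    for _ in range(num_questions):
        # Lower the score of questions that were already scheduled.
        scores = q - duplicate_penalty * times_chosen
        # Mask out questions outside the curriculum range so they are never picked.
        scores = np.where(allowed, scores, -np.inf)
        choice = int(np.argmax(scores))
        schedule.append(choice)
        times_chosen[choice] += 1
    return schedule


# Question 3 has the highest estimate but lies outside the curriculum range.
print(select_questions([0.9, 0.8, 0.5, 0.95], [True, True, True, False], 3,
                       duplicate_penalty=0.5))
```

With the penalty set to 0.5 the sketch schedules questions 0, 1, and 2 in that order. With the penalty set to 0 it would pick question 0 three times, which is the repetition a duplicate penalty is meant to discourage.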
