Sabrina Enriquez

Categorical exploratory data analysis on goodness-of-fit issues

Dec 04, 2020

Abstract: If George Box's aphorism "All models are wrong" continues to hold in data analysis, particularly when analyzing real-world data, then we should annotate this wisdom with visible and explainable data-driven patterns. Such annotations can shed invaluable light on both the validity and the limitations of statistical modeling as a data analysis approach. To avoid holding real data to potentially unattainable or even unrealistic theoretical structures, we propose using the data analysis paradigm called Categorical Exploratory Data Analysis (CEDA). We illustrate the merits of this proposal with two real-world data sets from the perspective of goodness-of-fit. In both data sets, the Normal distribution's bell shape seems, at first glance, to fit rather well. We apply CEDA to bring out where and how each data set fits or deviates from the model shape across several important distributional aspects. We also demonstrate that CEDA affords a version of a tree-based p-value, and we compare it with p-values based on traditional statistical approaches. Throughout the analysis, we invest computational effort in graphic displays that illuminate the advantages of using CEDA as a primary mode of data analysis in Data Science education.
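The abstract contrasts CEDA's tree-based p-value with p-values from traditional approaches. As a minimal sketch of one such traditional baseline (our assumption, not the paper's method), the Python below bins the data, fits a Normal by sample mean and standard deviation, reports per-bin Pearson residuals so one can see where the fit succeeds or fails, and computes a Monte Carlo chi-square p-value via a parametric bootstrap. The helper names, the bin cut points, and the toy exponential data are all hypothetical; CEDA's own tree-based p-value is not reproduced here.

```python
import random
from statistics import NormalDist, fmean, stdev


def bin_counts(data, cuts):
    """Counts in bins (-inf, c0), [c0, c1), ..., [c_last, inf) defined by sorted cut points."""
    counts = [0] * (len(cuts) + 1)
    for x in data:
        counts[sum(1 for c in cuts if x >= c)] += 1
    return counts


def normal_expected(data, cuts):
    """Expected bin counts under a Normal fitted by sample mean and standard deviation."""
    fitted = NormalDist(fmean(data), stdev(data))
    cdf = [0.0] + [fitted.cdf(c) for c in cuts] + [1.0]
    return [len(data) * (hi - lo) for lo, hi in zip(cdf, cdf[1:])]


def pearson_residuals(data, cuts):
    """(observed - expected) / sqrt(expected) per bin: sign and size show where the fit fails."""
    observed = bin_counts(data, cuts)
    expected = normal_expected(data, cuts)
    return [(o - e) / e ** 0.5 for o, e in zip(observed, expected)]


def chi_square_stat(data, cuts):
    """Pearson chi-square statistic: sum of squared residuals."""
    return sum(r * r for r in pearson_residuals(data, cuts))


def monte_carlo_p_value(data, cuts, n_sim=999, seed=0):
    """Parametric-bootstrap p-value: simulate from the fitted Normal, refit, recompute the statistic."""
    rng = random.Random(seed)
    observed = chi_square_stat(data, cuts)
    mu, sd, n = fmean(data), stdev(data), len(data)
    exceed = sum(
        chi_square_stat([rng.gauss(mu, sd) for _ in range(n)], cuts) >= observed
        for _ in range(n_sim)
    )
    return (exceed + 1) / (n_sim + 1)


if __name__ == "__main__":
    rng = random.Random(1)
    sample = [rng.expovariate(1.0) for _ in range(500)]  # skewed toy data, not from the paper
    cuts = [-1.0, 0.0, 1.0, 2.0, 3.0]                      # hypothetical bin cut points
    print([round(r, 2) for r in pearson_residuals(sample, cuts)])
    print(monte_carlo_p_value(sample, cuts, n_sim=199))
```

The cut points should be chosen so every expected count stays positive; the single p-value summarizes the whole fit, whereas the residual vector keeps the bin-by-bin picture that the abstract argues is lost by a single summary number.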

Extreme-K categorical samples problem

Jul 29, 2020

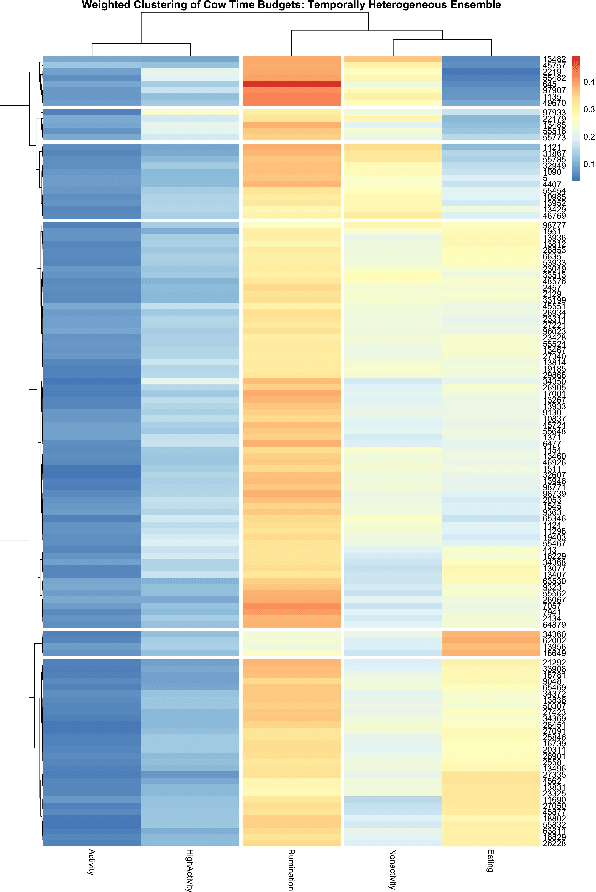

Abstract: With histograms as its foundation, we develop Categorical Exploratory Data Analysis (CEDA) under the extreme-$K$ sample problem and illustrate its universal applicability through four 1D categorical datasets. Given a sizable $K$, CEDA's ultimate goal is to discover the data's information content by carrying out two data-driven computational tasks: 1) establish a tree geometry upon the $K$ populations as a platform for discovering a wide spectrum of patterns among populations; 2) evaluate each geometric pattern's reliability. In CEDA, each population gives rise to a row vector of category proportions. On the data matrix's row axis, we discuss the pros and cons of Euclidean distance versus its weighted version for building a binary clustering tree geometry. The criterion for choosing between them rests on the degree of uniformity within the column blocks framed by this binary clustering tree. Each tree leaf (population) is then encoded with a binary code sequence, as is each tree-based pattern. For evaluating reliability, we adopt row-wise multinomial randomness to generate an ensemble of matrix mimicries, and hence an ensemble of mimicked binary trees. The reliability of any observed pattern is its recurrence rate within the tree ensemble; a high reliability value indicates a deterministic pattern. Our four applications of CEDA illuminate four significant aspects of extreme-$K$ sample problems.
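The two computational tasks described above can be sketched in Python under several stated assumptions: average-linkage agglomerative clustering stands in for whatever tree-building procedure the paper actually uses, the weighted distance uses hypothetical inverse-pooled-proportion weights, and a "pattern" is taken to be a clade (the set of leaves under one internal node). The uniformity criterion for choosing between the two distances is not implemented. All function names and the toy count matrix are illustrative only.

```python
import random
from collections import Counter
from itertools import combinations


def proportions(counts):
    """Turn each population's category counts into a row of category proportions."""
    return [[c / sum(row) for c in row] for row in counts]


def inverse_pooled_weights(counts):
    """Hypothetical weights: inverse of each category's pooled proportion (chi-square style)."""
    totals = [sum(col) for col in zip(*counts)]
    n = sum(totals)
    return [n / t if t else 0.0 for t in totals]


def distance(p, q, weights=None):
    """Euclidean distance between two proportion rows; optional per-category weights."""
    w = weights if weights is not None else [1.0] * len(p)
    return sum(wi * (a - b) ** 2 for wi, a, b in zip(w, p, q)) ** 0.5


def build_tree(rows, weights=None):
    """Average-linkage agglomerative clustering; returns a nested-tuple binary tree of row indices."""
    clusters = {i: (i, [i]) for i in range(len(rows))}  # id -> (subtree, member leaves)

    def linkage(a, b):
        return sum(distance(rows[i], rows[j], weights) for i in a for j in b) / (len(a) * len(b))

    next_id = len(rows)
    while len(clusters) > 1:
        a, b = min(combinations(clusters, 2),
                   key=lambda pair: linkage(clusters[pair[0]][1], clusters[pair[1]][1]))
        (ta, ma), (tb, mb) = clusters.pop(a), clusters.pop(b)
        clusters[next_id] = ((ta, tb), ma + mb)
        next_id += 1
    return next(iter(clusters.values()))[0]


def leaf_codes(tree, prefix=""):
    """Binary code of each leaf: its root-to-leaf path, 0 = left branch, 1 = right branch."""
    if isinstance(tree, int):
        return {tree: prefix}
    left, right = tree
    return {**leaf_codes(left, prefix + "0"), **leaf_codes(right, prefix + "1")}


def clades(tree):
    """Leaf sets under every internal node; here these serve as the tree-based patterns."""
    found = set()

    def walk(t):
        if isinstance(t, int):
            return frozenset([t])
        leaves = walk(t[0]) | walk(t[1])
        found.add(leaves)
        return leaves

    walk(tree)
    return found


def mimic(counts, rng):
    """Row-wise multinomial resampling: same row total, observed proportions as probabilities."""
    mimicked = []
    for row in counts:
        draws = Counter(rng.choices(range(len(row)), weights=row, k=sum(row)))
        mimicked.append([draws[j] for j in range(len(row))])
    return mimicked


def reliability(counts, pattern, n_mimic=200, seed=0, weights=None):
    """Recurrence rate of a clade across trees rebuilt from mimicked count matrices."""
    rng = random.Random(seed)
    hits = sum(pattern in clades(build_tree(proportions(mimic(counts, rng)), weights))
               for _ in range(n_mimic))
    return hits / n_mimic


if __name__ == "__main__":
    # Toy counts (rows = populations, columns = categories); not data from the paper.
    counts = [[50, 30, 20], [45, 35, 20], [10, 20, 70], [12, 18, 70]]
    tree = build_tree(proportions(counts))
    print(tree, leaf_codes(tree))
    print(reliability(counts, frozenset({0, 1})))
```

Because each mimicked row keeps its observed total, rows backed by larger samples fluctuate less across the ensemble, so patterns built from them tend to recur more often.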
