Saba Akhyani

Towards Inclusive HRI: Using Sim2Real to Address Underrepresentation in Emotion Expression Recognition

Aug 15, 2022

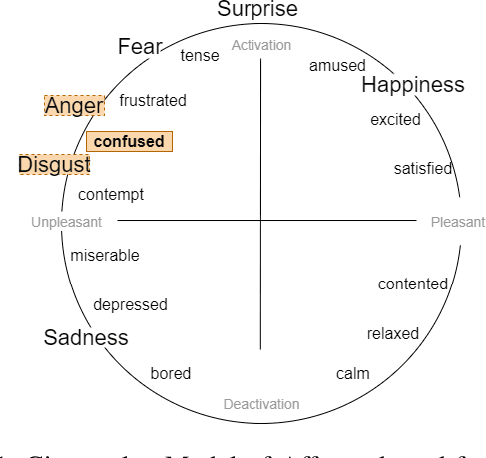

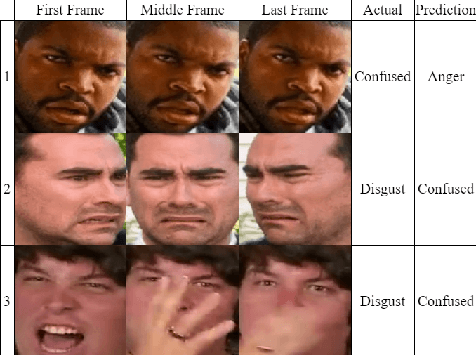

Abstract:Robots and artificial agents that interact with humans should be able to do so without bias and inequity, but facial perception systems have notoriously been found to work more poorly for certain groups of people than others. In our work, we aim to build a system that can perceive humans in a more transparent and inclusive manner. Specifically, we focus on dynamic expressions on the human face, which are difficult to collect for a broad set of people due to privacy concerns and the fact that faces are inherently identifiable. Furthermore, datasets collected from the Internet are not necessarily representative of the general population. We address this problem by offering a Sim2Real approach in which we use a suite of 3D simulated human models that enables us to create an auditable synthetic dataset covering 1) underrepresented facial expressions, outside of the six basic emotions, such as confusion; 2) ethnic or gender minority groups; and 3) a wide range of viewing angles that a robot may encounter a human in the real world. By augmenting a small dynamic emotional expression dataset containing 123 samples with a synthetic dataset containing 4536 samples, we achieved an improvement in accuracy of 15% on our own dataset and 11% on an external benchmark dataset, compared to the performance of the same model architecture without synthetic training data. We also show that this additional step improves accuracy specifically for racial minorities when the architecture's feature extraction weights are trained from scratch.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge