S. Fernando

Interpretability and accessibility of machine learning in selected food processing, agriculture and health applications

Nov 30, 2022

Abstract: Artificial Intelligence (AI) and its data-centric branch, machine learning (ML), have evolved greatly over the last few decades. However, as AI is increasingly used in real-world applications, the interpretability of, and accessibility to, AI systems have become major research areas. The lack of interpretability of ML-based systems is a major hindrance to the widespread adoption of these powerful algorithms. This stems from many factors, including ethical and regulatory concerns, which have resulted in poorer adoption of ML in some areas. The recent past has seen a surge in research on interpretable ML. Generally, designing an ML system requires a good understanding of the domain combined with expert knowledge. New techniques are emerging to improve ML accessibility through automated model design. This paper reviews the work done to improve the interpretability and accessibility of machine learning in the context of global problems that are also relevant to developing countries. We review work at multiple levels of interpretability, including scientific and mathematical interpretation, statistical interpretation and partial semantic interpretation. The review covers applications in three areas, namely food processing, agriculture and health.

* Published in the Journal of the National Science Foundation of Sri Lanka, Volume 50.

Wavelet based edge feature enhancement for convolutional neural networks

Aug 29, 2018

Abstract: Convolutional neural networks are able to perform a hierarchical learning process starting with local features. However, limited attention has been paid to enhancing elementary features such as edges. We propose and evaluate two wavelet-based edge feature enhancement methods to preprocess the input images to convolutional neural networks. The first method develops feature-enhanced representations by decomposing the input images using the wavelet transform and subsequently performing a limited reconstruction. The second method develops such feature-enhanced inputs to the network using the local modulus maxima of the wavelet coefficients. For each method, we have implemented a new preprocessing layer and appended it to the network architecture. Our empirical evaluations demonstrate that the proposed methods outperform the baselines and previously published work, with significant accuracy gains.
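The two preprocessing ideas in this abstract can be illustrated with a minimal NumPy sketch. It assumes a single-level orthonormal Haar wavelet applied to a grayscale image with even height and width; the wavelet family, decomposition depth and exact reconstruction rule used in the paper are not stated here. `edge_enhanced` takes "limited reconstruction" to mean inverting the transform with the approximation band set to zero, and `modulus_maxima_map` uses a simplified 3x3 non-maximum test in place of a search along the gradient direction. All function names are hypothetical, and this is not the authors' implementation.

```python
import numpy as np


def haar_dwt2(img):
    """Single-level orthonormal 2D Haar transform; img needs even height and width."""
    x = np.asarray(img, dtype=float)
    if x.shape[0] % 2 or x.shape[1] % 2:
        raise ValueError("image height and width must be even")
    a, b = x[0::2, 0::2], x[0::2, 1::2]  # top-left, top-right of each 2x2 block
    c, d = x[1::2, 0::2], x[1::2, 1::2]  # bottom-left, bottom-right
    ll = (a + b + c + d) / 2.0  # approximation (local average)
    lh = (a + b - c - d) / 2.0  # top minus bottom: responds to horizontal edges
    hl = (a - b + c - d) / 2.0  # left minus right: responds to vertical edges
    hh = (a - b - c + d) / 2.0  # diagonal detail
    return ll, (lh, hl, hh)


def haar_idwt2(ll, details):
    """Exact inverse of haar_dwt2."""
    lh, hl, hh = details
    out = np.empty((ll.shape[0] * 2, ll.shape[1] * 2))
    out[0::2, 0::2] = (ll + lh + hl + hh) / 2.0
    out[0::2, 1::2] = (ll + lh - hl - hh) / 2.0
    out[1::2, 0::2] = (ll - lh + hl - hh) / 2.0
    out[1::2, 1::2] = (ll - lh - hl + hh) / 2.0
    return out


def edge_enhanced(img):
    """First method (assumed reading): reconstruct from the detail bands only."""
    ll, details = haar_dwt2(img)
    return haar_idwt2(np.zeros_like(ll), details)


def modulus_maxima_map(img):
    """Second method (simplified): boolean map of local maxima of the detail modulus."""
    _, (lh, hl, _) = haar_dwt2(img)
    mod = np.hypot(lh, hl)  # magnitude of the horizontal/vertical detail pair
    h, w = mod.shape
    padded = np.pad(mod, 1, mode="constant", constant_values=-np.inf)
    neighbor_max = np.full_like(mod, -np.inf)
    for di in (-1, 0, 1):
        for dj in (-1, 0, 1):
            if di == 0 and dj == 0:
                continue  # skip the centre pixel itself
            shifted = padded[1 + di:1 + di + h, 1 + dj:1 + dj + w]
            neighbor_max = np.maximum(neighbor_max, shifted)
    return (mod >= neighbor_max) & (mod > 0)  # ignore flat regions


# Usage: a vertical step edge between columns 2 and 3 of an 8x8 image.
img = np.zeros((8, 8))
img[:, 3:] = 1.0
print(edge_enhanced(img)[0])  # non-zero only in columns 2 and 3
print(modulus_maxima_map(img).astype(int))
```

The Haar basis was chosen only because its transform and inverse fit in a few lines; note that it sees an edge only when the edge falls inside a 2x2 block, which is why the example places the step between columns 2 and 3. In a network, either output could be fed as an extra input channel or in place of the raw image.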

On Optimizing Deep Convolutional Neural Networks by Evolutionary Computing

Aug 06, 2018

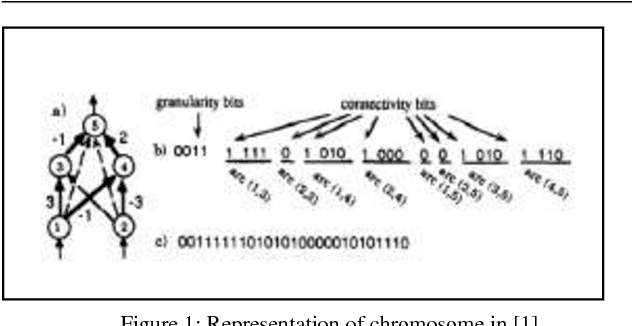

Abstract: Optimization of deep networks is currently a very active area of research. As neural networks become deeper, manually optimizing them becomes harder. Mini-batch normalization, identification of effective receptive fields, momentum updates, the introduction of residual blocks, learning rate adaptation, etc. have been proposed to speed up convergence in the manual training process while maintaining high accuracy. However, finding the optimal topological structure for a given problem is becoming a challenging task that needs to be addressed urgently. Few researchers have attempted to optimize the network structure using evolutionary computing approaches. Among them, few have successfully evolved networks with reinforcement learning and long short-term memory. Very few have applied evolutionary programming to deep convolutional neural networks. These attempts mainly evolved the network structure and subsequently optimized the hyper-parameters of the network. However, a mechanism to evolve the deep network structure using the techniques currently practiced in the manual process is still absent. Incorporating such techniques at the chromosome level of evolutionary computing can certainly lead to better deep topological structures. The paper concludes by identifying the gap between evolutionary-based deep neural networks and deep neural networks. Further, it proposes some insights for optimizing deep neural networks using evolutionary computing techniques.
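To make the idea of encoding manual-design techniques at the chromosome level more concrete, here is a toy genetic algorithm in plain Python. It is a sketch under stated assumptions, not the paper's method: each chromosome is a variable-length list of hypothetical `LayerGene` records (filter count, kernel size, and flags for batch normalization and a residual connection); mutation adds, removes or re-samples a layer; and one-point crossover splices two parents. `proxy_fitness` is an illustrative placeholder that rewards depth and the two flags while penalizing parameter count. In practice the fitness would be the validation accuracy of the trained network, which this sketch does not compute.

```python
import random
from dataclasses import dataclass

FILTER_CHOICES = (16, 32, 64, 128)  # illustrative search space, not from the paper
KERNEL_CHOICES = (1, 3, 5)
MAX_LAYERS = 8


@dataclass(frozen=True)
class LayerGene:
    """One convolutional layer plus flags for techniques used in manual design."""
    filters: int
    kernel: int
    batch_norm: bool
    residual: bool


def random_gene(rng):
    return LayerGene(rng.choice(FILTER_CHOICES), rng.choice(KERNEL_CHOICES),
                     rng.random() < 0.5, rng.random() < 0.5)


def random_chromosome(rng):
    return [random_gene(rng) for _ in range(rng.randint(2, MAX_LAYERS))]


def mutate(chrom, rng):
    """Add, remove, or re-sample one layer; falls back to re-sampling at size limits."""
    chrom = list(chrom)
    op = rng.choice(("add", "remove", "resample"))
    if op == "add" and len(chrom) < MAX_LAYERS:
        chrom.insert(rng.randrange(len(chrom) + 1), random_gene(rng))
    elif op == "remove" and len(chrom) > 1:
        chrom.pop(rng.randrange(len(chrom)))
    else:
        chrom[rng.randrange(len(chrom))] = random_gene(rng)
    return chrom


def crossover(a, b, rng):
    """One-point crossover: non-empty head of parent a + tail of parent b."""
    child = a[:rng.randint(1, len(a))] + b[rng.randint(0, len(b)):]
    return child[:MAX_LAYERS]


def proxy_fitness(chrom, in_channels=3):
    """HYPOTHETICAL placeholder: a real run would train the network and return validation accuracy."""
    params, c = 0, in_channels
    for g in chrom:
        params += c * g.filters * g.kernel ** 2 + g.filters  # conv weights + biases
        params += 2 * g.filters if g.batch_norm else 0  # BN scale and shift
        c = g.filters
    bonus = sum(g.batch_norm + g.residual for g in chrom)
    return len(chrom) + 0.5 * bonus - params / 1e5


def evolve(generations=20, pop_size=12, seed=0):
    rng = random.Random(seed)
    pop = [random_chromosome(rng) for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        pop.sort(key=proxy_fitness, reverse=True)
        history.append(proxy_fitness(pop[0]))
        parents = pop[: pop_size // 2]  # truncation selection with elitism
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        pop = parents + children
    return max(pop, key=proxy_fitness), history


best, history = evolve()
for gene in best:
    print(gene)
```

Truncation selection carries the better half of each generation forward unchanged, so the best fitness recorded in `history` never decreases. Swapping `proxy_fitness` for a train-and-evaluate routine is where essentially all of the real cost of such a search would lie.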
