I. T. S. Piyatilake

Multi-Path Learnable Wavelet Neural Network for Image Classification

Aug 26, 2019

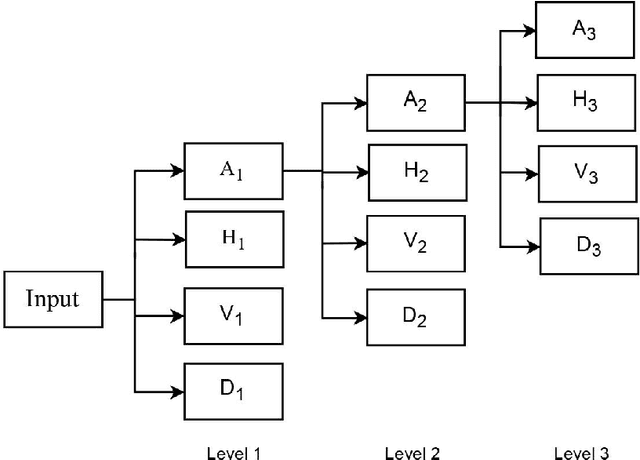

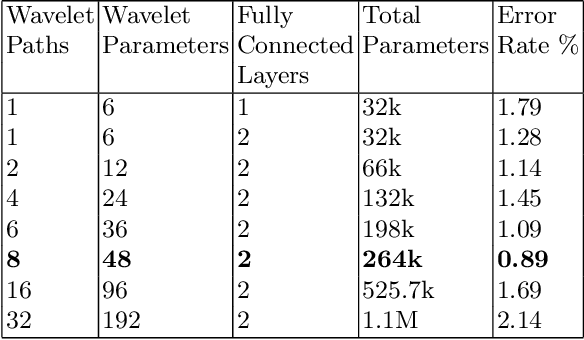

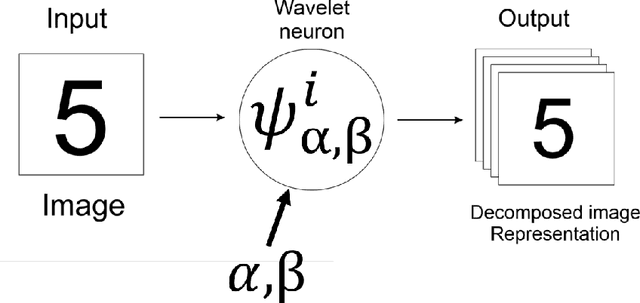

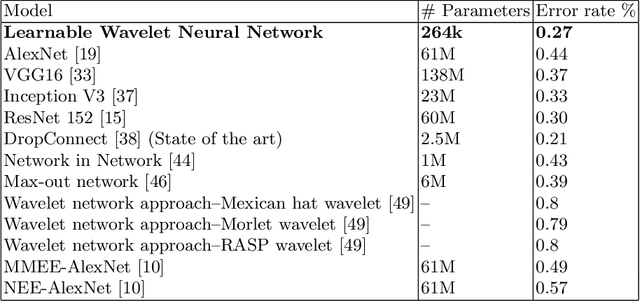

Abstract: Despite the remarkable success of deep learning in pattern recognition, deep network models face the problem of training a large number of parameters. In this paper, we propose and evaluate a novel multi-path wavelet neural network architecture for image classification with far fewer trainable parameters. The architecture consists of a multi-path layout in which several levels of wavelet decomposition are performed in parallel, followed by fully connected layers. The decomposition operations are built from wavelet neurons with learnable parameters, which are updated during training using the back-propagation algorithm. We evaluate the performance of the proposed network on common image datasets, without data augmentation except for SVHN, and compare the results with influential deep learning models. Our findings support the possibility of significantly reducing the number of parameters in deep neural networks without compromising their accuracy.
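As a rough illustration of the multi-path layout described in this abstract, the sketch below runs several wavelet decompositions of different depths in parallel and feeds the concatenated coefficients to one fully connected layer. It is not the authors' implementation: the two-tap orthogonal filter parametrized by an angle `theta`, the function names, and the layer sizes are all assumptions made for illustration, and only the forward pass is shown (training by back-propagation is omitted).

```python
import numpy as np

def analysis_step(x, theta):
    """One 2D decomposition level with a 2-tap orthogonal filter pair.

    theta is a hypothetical learnable parameter; theta = pi/4 gives the Haar wavelet.
    x must have even height and width.
    """
    c, s = np.cos(theta), np.sin(theta)
    # Filter along rows: combine pairs of adjacent columns.
    lo = c * x[:, 0::2] + s * x[:, 1::2]
    hi = -s * x[:, 0::2] + c * x[:, 1::2]
    # Filter along columns: combine pairs of adjacent rows.
    ll = c * lo[0::2, :] + s * lo[1::2, :]
    lh = -s * lo[0::2, :] + c * lo[1::2, :]
    hl = c * hi[0::2, :] + s * hi[1::2, :]
    hh = -s * hi[0::2, :] + c * hi[1::2, :]
    return ll, (lh, hl, hh)

def path_features(image, thetas):
    """One path: len(thetas) decomposition levels, returned as a flat vector."""
    feats = []
    approx = image
    for theta in thetas:
        approx, details = analysis_step(approx, theta)
        feats.extend(d.ravel() for d in details)
    feats.append(approx.ravel())
    return np.concatenate(feats)

def multi_path_forward(image, path_thetas, weights, bias):
    """Run all paths in parallel, concatenate, apply one fully connected layer."""
    features = np.concatenate([path_features(image, t) for t in path_thetas])
    return weights @ features + bias

# Example: three paths with 1, 2 and 3 decomposition levels on an 8x8 image.
rng = np.random.default_rng(0)
image = rng.random((8, 8))
path_thetas = [[np.pi / 4], [np.pi / 4] * 2, [np.pi / 4] * 3]
n_features = len(path_thetas) * image.size  # each path keeps 64 coefficients
weights = rng.normal(size=(10, n_features)) * 0.01
bias = np.zeros(10)
logits = multi_path_forward(image, path_thetas, weights, bias)
```

In this sketch the wavelet stage contributes one trainable angle per decomposition level, so almost all parameters sit in the final dense layer. The parameter count of the actual model depends on design details not given in the abstract.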

Wavelet based edge feature enhancement for convolutional neural networks

Aug 29, 2018

Abstract: Convolutional neural networks are able to perform a hierarchical learning process starting with local features. However, limited attention has been paid to enhancing elementary features such as edges. We propose and evaluate two wavelet-based edge feature enhancement methods that preprocess the input images to convolutional neural networks. The first method develops feature-enhanced representations by decomposing the input images using the wavelet transform and then performing a limited reconstruction. The second method develops such feature-enhanced inputs to the network using the local modulus maxima of the wavelet coefficients. For each proposed method, we have implemented a new preprocessing layer and appended it to the network architecture. Our empirical evaluations demonstrate that the proposed methods outperform the baselines and previously published work with significant accuracy gains.
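The first method (decomposition followed by a limited reconstruction) can be sketched as follows. This is a minimal illustration, not the paper's preprocessing layer: it assumes a single-level Haar transform, interprets "limited reconstruction" as reconstructing from the detail sub-bands only, and adds the result back to the image with a hypothetical `gain` factor.

```python
import numpy as np

def haar_dwt2(x):
    """Single-level 2D Haar transform of an array with even height and width."""
    a, b = x[0::2, 0::2], x[0::2, 1::2]
    c, d = x[1::2, 0::2], x[1::2, 1::2]
    ll = (a + b + c + d) / 2.0  # approximation (local average)
    lh = (a + b - c - d) / 2.0  # difference between adjacent rows
    hl = (a - b + c - d) / 2.0  # difference between adjacent columns
    hh = (a - b - c + d) / 2.0  # diagonal difference
    return ll, lh, hl, hh

def haar_idwt2(ll, lh, hl, hh):
    """Inverse of haar_dwt2."""
    h, w = ll.shape
    x = np.empty((2 * h, 2 * w), dtype=float)
    x[0::2, 0::2] = (ll + lh + hl + hh) / 2.0
    x[0::2, 1::2] = (ll + lh - hl - hh) / 2.0
    x[1::2, 0::2] = (ll - lh + hl - hh) / 2.0
    x[1::2, 1::2] = (ll - lh - hl + hh) / 2.0
    return x

def edge_enhance(image, gain=1.0):
    """Add a detail-only reconstruction back onto the image (assumed scheme)."""
    ll, lh, hl, hh = haar_dwt2(image)
    # Limited reconstruction: zero the approximation band, keep the details.
    details = haar_idwt2(np.zeros_like(ll), lh, hl, hh)
    return image + gain * details

# Example: a 4x4 image whose step edge falls inside the 2x2 Haar blocks.
image = np.zeros((4, 4))
image[:, 1:] = 1.0
enhanced = edge_enhance(image, gain=0.5)
```

Flat regions have zero detail coefficients and pass through unchanged, while pixels next to an intensity change are pushed further apart. A non-decimated transform or a different wavelet would change where and how strongly edges are emphasized.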
