Ripan Kumar Kundu

PILAR: Personalizing Augmented Reality Interactions with LLM-based Human-Centric and Trustworthy Explanations for Daily Use Cases

Dec 19, 2025

Abstract:Artificial intelligence (AI)-driven augmented reality (AR) systems are becoming increasingly integrated into daily life, and with this growth comes a greater need for explainability in real-time user interactions. Traditional explainable AI (XAI) methods, which often rely on feature-based or example-based explanations, struggle to deliver dynamic, context-specific, personalized, and human-centric insights for everyday AR users. These methods typically address separate explainability dimensions (e.g., when, what, how) with different explanation techniques, resulting in unrealistic and fragmented experiences for seamless AR interactions. To address this challenge, we propose PILAR, a novel framework that leverages a pre-trained large language model (LLM) to generate context-aware, personalized explanations, offering a more intuitive and trustworthy experience in real-time AI-powered AR systems. Unlike traditional methods, which rely on multiple techniques for different aspects of explanation, PILAR employs a unified LLM-based approach that dynamically adapts explanations to the user's needs, fostering greater trust and engagement. We implement the PILAR concept in a real-world AR application (e.g., personalized recipe recommendations), an open-source prototype that integrates real-time object detection, recipe recommendation, and LLM-based personalized explanations of the recommended recipes based on users' dietary preferences. We evaluate the effectiveness of PILAR through a user study with 16 participants performing AR-based recipe recommendation tasks, comparing an LLM-based explanation interface to a traditional template-based one. Results show that the LLM-based interface significantly enhances user performance and experience, with participants completing tasks 40% faster and reporting greater satisfaction, ease of use, and perceived transparency.

PrivateXR: Defending Privacy Attacks in Extended Reality Through Explainable AI-Guided Differential Privacy

Dec 18, 2025

Abstract:The convergence of artificial AI and XR technologies (AI XR) promises innovative applications across many domains. However, the sensitive nature of data (e.g., eye-tracking) used in these systems raises significant privacy concerns, as adversaries can exploit these data and models to infer and leak personal information through membership inference attacks (MIA) and re-identification (RDA) with a high success rate. Researchers have proposed various techniques to mitigate such privacy attacks, including differential privacy (DP). However, AI XR datasets often contain numerous features, and applying DP uniformly can introduce unnecessary noise to less relevant features, degrade model accuracy, and increase inference time, limiting real-time XR deployment. Motivated by this, we propose a novel framework combining explainable AI (XAI) and DP-enabled privacy-preserving mechanisms to defend against privacy attacks. Specifically, we leverage post-hoc explanations to identify the most influential features in AI XR models and selectively apply DP to those features during inference. We evaluate our XAI-guided DP approach on three state-of-the-art AI XR models and three datasets: cybersickness, emotion, and activity classification. Our results show that the proposed method reduces MIA and RDA success rates by up to 43% and 39%, respectively, for cybersickness tasks while preserving model utility with up to 97% accuracy using Transformer models. Furthermore, it improves inference time by up to ~2x compared to traditional DP approaches. To demonstrate practicality, we deploy the XAI-guided DP AI XR models on an HTC VIVE Pro headset and develop a user interface (UI), namely PrivateXR, allowing users to adjust privacy levels (e.g., low, medium, high) while receiving real-time task predictions, protecting user privacy during XR gameplay.

Adversarial VR: An Open-Source Testbed for Evaluating Adversarial Robustness of VR Cybersickness Detection and Mitigation

Dec 18, 2025Abstract:Deep learning (DL)-based automated cybersickness detection methods, along with adaptive mitigation techniques, can enhance user comfort and interaction. However, recent studies show that these DL-based systems are susceptible to adversarial attacks; small perturbations to sensor inputs can degrade model performance, trigger incorrect mitigation, and disrupt the user's immersive experience (UIX). Additionally, there is a lack of dedicated open-source testbeds that evaluate the robustness of these systems under adversarial conditions, limiting the ability to assess their real-world effectiveness. To address this gap, this paper introduces Adversarial-VR, a novel real-time VR testbed for evaluating DL-based cybersickness detection and mitigation strategies under adversarial conditions. Developed in Unity, the testbed integrates two state-of-the-art (SOTA) DL models: DeepTCN and Transformer, which are trained on the open-source MazeSick dataset, for real-time cybersickness severity detection and applies a dynamic visual tunneling mechanism that adjusts the field-of-view based on model outputs. To assess robustness, we incorporate three SOTA adversarial attacks: MI-FGSM, PGD, and C&W, which successfully prevent cybersickness mitigation by fooling DL-based cybersickness models' outcomes. We implement these attacks using a testbed with a custom-built VR Maze simulation and an HTC Vive Pro Eye headset, and we open-source our implementation for widespread adoption by VR developers and researchers. Results show that these adversarial attacks are capable of successfully fooling the system. For instance, the C&W attack results in a $5.94x decrease in accuracy for the Transformer-based cybersickness model compared to the accuracy without the attack.

Securing Virtual Reality Experiences: Unveiling and Tackling Cybersickness Attacks with Explainable AI

Mar 17, 2025Abstract:The synergy between virtual reality (VR) and artificial intelligence (AI), specifically deep learning (DL)-based cybersickness detection models, has ushered in unprecedented advancements in immersive experiences by automatically detecting cybersickness severity and adaptively various mitigation techniques, offering a smooth and comfortable VR experience. While this DL-enabled cybersickness detection method provides promising solutions for enhancing user experiences, it also introduces new risks since these models are vulnerable to adversarial attacks; a small perturbation of the input data that is visually undetectable to human observers can fool the cybersickness detection model and trigger unexpected mitigation, thus disrupting user immersive experiences (UIX) and even posing safety risks. In this paper, we present a new type of VR attack, i.e., a cybersickness attack, which successfully stops the triggering of cybersickness mitigation by fooling DL-based cybersickness detection models and dramatically hinders the UIX. Next, we propose a novel explainable artificial intelligence (XAI)-guided cybersickness attack detection framework to detect such attacks in VR to ensure UIX and a comfortable VR experience. We evaluate the proposed attack and the detection framework using two state-of-the-art open-source VR cybersickness datasets: Simulation 2021 and Gameplay dataset. Finally, to verify the effectiveness of our proposed method, we implement the attack and the XAI-based detection using a testbed with a custom-built VR roller coaster simulation with an HTC Vive Pro Eye headset and perform a user study. Our study shows that such an attack can dramatically hinder the UIX. However, our proposed XAI-guided cybersickness attack detection can successfully detect cybersickness attacks and trigger the proper mitigation, effectively reducing VR cybersickness.

Mazed and Confused: A Dataset of Cybersickness, Working Memory, Mental Load, Physical Load, and Attention During a Real Walking Task in VR

Sep 10, 2024

Abstract:Virtual Reality (VR) is quickly establishing itself in various industries, including training, education, medicine, and entertainment, in which users are frequently required to carry out multiple complex cognitive and physical activities. However, the relationship between cognitive activities, physical activities, and familiar feelings of cybersickness is not well understood and thus can be unpredictable for developers. Researchers have previously provided labeled datasets for predicting cybersickness while users are stationary, but there have been few labeled datasets on cybersickness while users are physically walking. Thus, from 39 participants, we collected head orientation, head position, eye tracking, images, physiological readings from external sensors, and the self-reported cybersickness severity, physical load, and mental load in VR. Throughout the data collection, participants navigated mazes via real walking and performed tasks challenging their attention and working memory. To demonstrate the dataset's utility, we conducted a case study of training classifiers in which we achieved 95% accuracy for cybersickness severity classification. The noteworthy performance of the straightforward classifiers makes this dataset ideal for future researchers to develop cybersickness detection and reduction models. To better understand the features that helped with classification, we performed SHAP(SHapley Additive exPlanations) analysis, highlighting the importance of eye tracking and physiological measures for cybersickness prediction while walking. This open dataset can allow future researchers to study the connection between cybersickness and cognitive loads and develop prediction models. This dataset will empower future VR developers to design efficient and effective Virtual Environments by improving cognitive load management and minimizing cybersickness.

LiteVR: Interpretable and Lightweight Cybersickness Detection using Explainable AI

Feb 05, 2023

Abstract:Cybersickness is a common ailment associated with virtual reality (VR) user experiences. Several automated methods exist based on machine learning (ML) and deep learning (DL) to detect cybersickness. However, most of these cybersickness detection methods are perceived as computationally intensive and black-box methods. Thus, those techniques are neither trustworthy nor practical for deploying on standalone energy-constrained VR head-mounted devices (HMDs). In this work, we present an explainable artificial intelligence (XAI)-based framework, LiteVR, for cybersickness detection, explaining the model's outcome and reducing the feature dimensions and overall computational costs. First, we develop three cybersickness DL models based on long-term short-term memory (LSTM), gated recurrent unit (GRU), and multilayer perceptron (MLP). Then, we employed a post-hoc explanation, such as SHapley Additive Explanations (SHAP), to explain the results and extract the most dominant features of cybersickness. Finally, we retrain the DL models with the reduced number of features. Our results show that eye-tracking features are the most dominant for cybersickness detection. Furthermore, based on the XAI-based feature ranking and dimensionality reduction, we significantly reduce the model's size by up to 4.3x, training time by up to 5.6x, and its inference time by up to 3.8x, with higher cybersickness detection accuracy and low regression error (i.e., on Fast Motion Scale (FMS)). Our proposed lite LSTM model obtained an accuracy of 94% in classifying cybersickness and regressing (i.e., FMS 1-10) with a Root Mean Square Error (RMSE) of 0.30, which outperforms the state-of-the-art. Our proposed LiteVR framework can help researchers and practitioners analyze, detect, and deploy their DL-based cybersickness detection models in standalone VR HMDs.

VR-LENS: Super Learning-based Cybersickness Detection and Explainable AI-Guided Deployment in Virtual Reality

Feb 03, 2023Abstract:A plethora of recent research has proposed several automated methods based on machine learning (ML) and deep learning (DL) to detect cybersickness in Virtual reality (VR). However, these detection methods are perceived as computationally intensive and black-box methods. Thus, those techniques are neither trustworthy nor practical for deploying on standalone VR head-mounted displays (HMDs). This work presents an explainable artificial intelligence (XAI)-based framework VR-LENS for developing cybersickness detection ML models, explaining them, reducing their size, and deploying them in a Qualcomm Snapdragon 750G processor-based Samsung A52 device. Specifically, we first develop a novel super learning-based ensemble ML model for cybersickness detection. Next, we employ a post-hoc explanation method, such as SHapley Additive exPlanations (SHAP), Morris Sensitivity Analysis (MSA), Local Interpretable Model-Agnostic Explanations (LIME), and Partial Dependence Plot (PDP) to explain the expected results and identify the most dominant features. The super learner cybersickness model is then retrained using the identified dominant features. Our proposed method identified eye tracking, player position, and galvanic skin/heart rate response as the most dominant features for the integrated sensor, gameplay, and bio-physiological datasets. We also show that the proposed XAI-guided feature reduction significantly reduces the model training and inference time by 1.91X and 2.15X while maintaining baseline accuracy. For instance, using the integrated sensor dataset, our reduced super learner model outperforms the state-of-the-art works by classifying cybersickness into 4 classes (none, low, medium, and high) with an accuracy of 96% and regressing (FMS 1-10) with a Root Mean Square Error (RMSE) of 0.03.

RobustPdM: Designing Robust Predictive Maintenance against Adversarial Attacks

Jan 25, 2023

Abstract:The state-of-the-art predictive maintenance (PdM) techniques have shown great success in reducing maintenance costs and downtime of complicated machines while increasing overall productivity through extensive utilization of Internet-of-Things (IoT) and Deep Learning (DL). Unfortunately, IoT sensors and DL algorithms are both prone to cyber-attacks. For instance, DL algorithms are known for their susceptibility to adversarial examples. Such adversarial attacks are vastly under-explored in the PdM domain. This is because the adversarial attacks in the computer vision domain for classification tasks cannot be directly applied to the PdM domain for multivariate time series (MTS) regression tasks. In this work, we propose an end-to-end methodology to design adversarially robust PdM systems by extensively analyzing the effect of different types of adversarial attacks and proposing a novel adversarial defense technique for DL-enabled PdM models. First, we propose novel MTS Projected Gradient Descent (PGD) and MTS PGD with random restarts (PGD_r) attacks. Then, we evaluate the impact of MTS PGD and PGD_r along with MTS Fast Gradient Sign Method (FGSM) and MTS Basic Iterative Method (BIM) on Long Short-Term Memory (LSTM), Gated Recurrent Unit (GRU), Convolutional Neural Network (CNN), and Bi-directional LSTM based PdM system. Our results using NASA's turbofan engine dataset show that adversarial attacks can cause a severe defect (up to 11X) in the RUL prediction, outperforming the effectiveness of the state-of-the-art PdM attacks by 3X. Furthermore, we present a novel approximate adversarial training method to defend against adversarial attacks. We observe that approximate adversarial training can significantly improve the robustness of PdM models (up to 54X) and outperforms the state-of-the-art PdM defense methods by offering 3X more robustness.

TruVR: Trustworthy Cybersickness Detection using Explainable Machine Learning

Sep 12, 2022

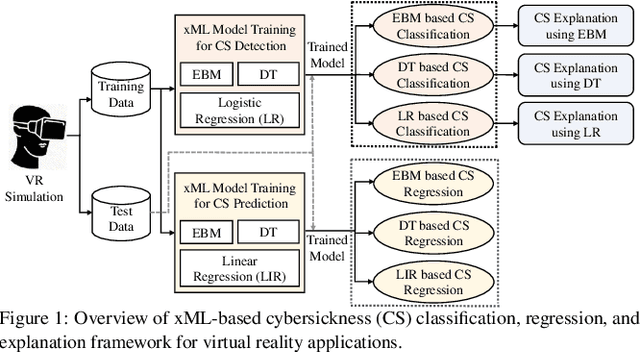

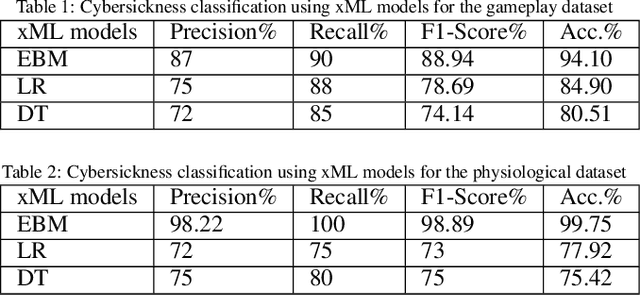

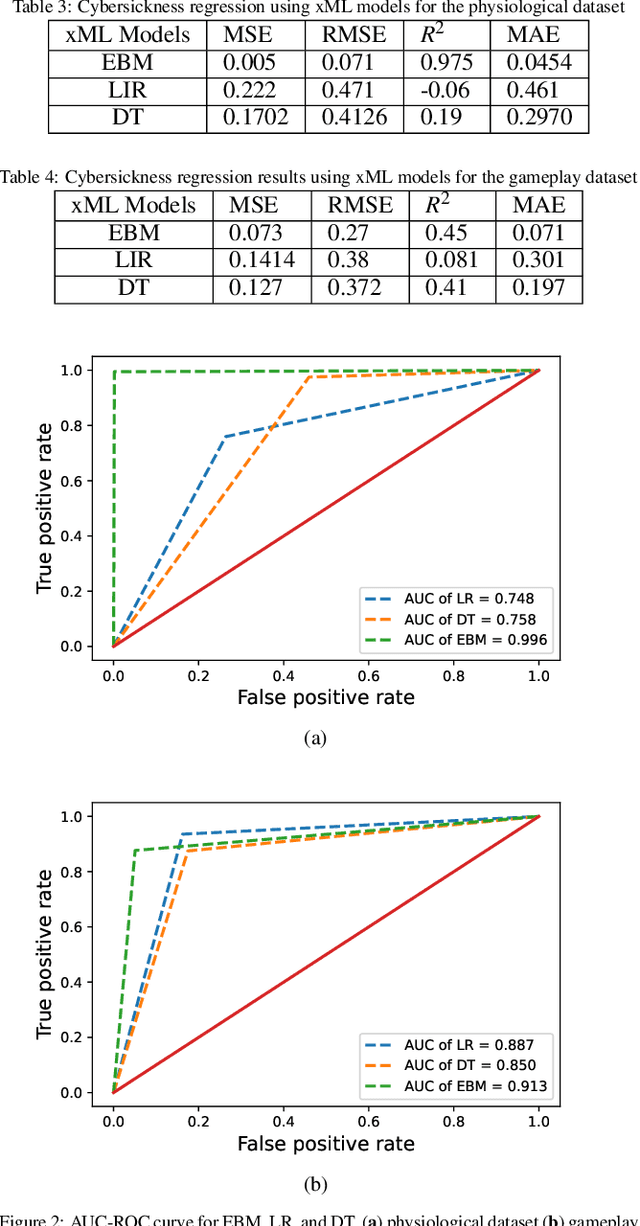

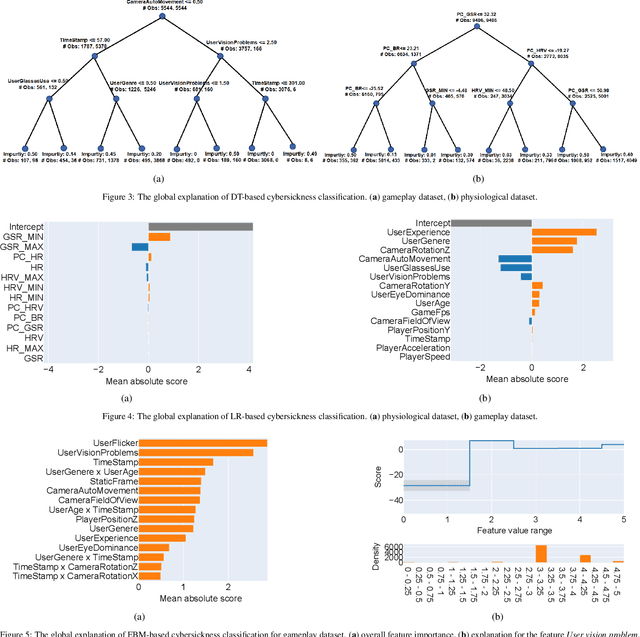

Abstract:Cybersickness can be characterized by nausea, vertigo, headache, eye strain, and other discomforts when using virtual reality (VR) systems. The previously reported machine learning (ML) and deep learning (DL) algorithms for detecting (classification) and predicting (regression) VR cybersickness use black-box models; thus, they lack explainability. Moreover, VR sensors generate a massive amount of data, resulting in complex and large models. Therefore, having inherent explainability in cybersickness detection models can significantly improve the model's trustworthiness and provide insight into why and how the ML/DL model arrived at a specific decision. To address this issue, we present three explainable machine learning (xML) models to detect and predict cybersickness: 1) explainable boosting machine (EBM), 2) decision tree (DT), and 3) logistic regression (LR). We evaluate xML-based models with publicly available physiological and gameplay datasets for cybersickness. The results show that the EBM can detect cybersickness with an accuracy of 99.75% and 94.10% for the physiological and gameplay datasets, respectively. On the other hand, while predicting the cybersickness, EBM resulted in a Root Mean Square Error (RMSE) of 0.071 for the physiological dataset and 0.27 for the gameplay dataset. Furthermore, the EBM-based global explanation reveals exposure length, rotation, and acceleration as key features causing cybersickness in the gameplay dataset. In contrast, galvanic skin responses and heart rate are most significant in the physiological dataset. Our results also suggest that EBM-based local explanation can identify cybersickness-causing factors for individual samples. We believe the proposed xML-based cybersickness detection method can help future researchers understand, analyze, and design simpler cybersickness detection and reduction models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge