Rimantas Butleris

Comparison of Modern Multilingual Text Embedding Techniques for Hate Speech Detection Task

Apr 16, 2026

Abstract: Online hate speech and abusive language pose a growing challenge for content moderation, especially in multilingual settings and for low-resource languages such as Lithuanian. This paper investigates to what extent modern multilingual sentence embedding models can support accurate hate speech detection in Lithuanian, Russian, and English, and how their performance depends on downstream modeling choices and feature dimensionality. We introduce LtHate, a new Lithuanian hate speech corpus derived from news portals and social networks, and benchmark six modern multilingual encoders (potion, gemma, bge, snow, jina, e5) on LtHate, RuToxic, and EnSuperset using a unified Python pipeline. For each embedding, we train both a one-class HBOS anomaly detector and a two-class CatBoost classifier, with and without principal component analysis (PCA) compression to 64-dimensional feature vectors. Across all datasets, two-class supervised models consistently and substantially outperform one-class anomaly detection. The best configurations achieve up to 80.96% accuracy and a ROC AUC of 0.887 in Lithuanian (jina), 92.19% accuracy and a ROC AUC of 0.978 in Russian (e5), and 77.21% accuracy and a ROC AUC of 0.859 in English (e5 with PCA). PCA compression preserves almost all discriminative power in the supervised setting, but has a somewhat negative impact on unsupervised anomaly detection. These results demonstrate that modern multilingual sentence embeddings combined with gradient-boosted decision trees provide robust soft-computing solutions for multilingual hate speech detection applications.
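The one-class baseline above is HBOS (histogram-based outlier score), fitted on embeddings of a single class. The following is a minimal sketch of textbook HBOS scoring, not the paper's pipeline. It assumes equal-width bins per embedding dimension, bin heights normalized so that the tallest bin equals 1, and a small `eps` for empty bins. The function names `fit_hbos` and `hbos_score`, the `n_bins` and `eps` parameters, and the toy 2-dimensional vectors (which stand in for real sentence embeddings) are all hypothetical.

```python
import math
from typing import List, Sequence, Tuple

# One histogram per feature: (minimum, bin width, normalized bin heights).
Histogram = Tuple[float, float, List[float]]


def fit_hbos(train: Sequence[Sequence[float]], n_bins: int = 10) -> List[Histogram]:
    """Fit one equal-width histogram per feature on single-class training data."""
    model: List[Histogram] = []
    for d in range(len(train[0])):
        values = [row[d] for row in train]
        lo, hi = min(values), max(values)
        width = (hi - lo) / n_bins or 1.0  # guard against constant features
        counts = [0] * n_bins
        for v in values:
            counts[min(int((v - lo) / width), n_bins - 1)] += 1
        peak = max(counts)
        # Normalize so the tallest bin has height 1.
        model.append((lo, width, [c / peak for c in counts]))
    return model


def hbos_score(model: List[Histogram], x: Sequence[float], eps: float = 1e-6) -> float:
    """Sum of log inverse bin heights over features; higher means more anomalous."""
    score = 0.0
    for (lo, width, heights), v in zip(model, x):
        pos = (v - lo) / width
        if 0.0 <= pos <= len(heights):
            h = heights[min(int(pos), len(heights) - 1)]
        else:
            h = 0.0  # outside the training range: treat as an empty bin
        score += math.log(1.0 / max(h, eps))
    return score


# Toy usage: "normal" vectors stand in for embeddings of one class.
normal = [[0.1, 0.2], [0.15, 0.25], [0.2, 0.2], [0.12, 0.22], [0.18, 0.24]]
model = fit_hbos(normal, n_bins=5)
typical = hbos_score(model, [0.15, 0.22])
outlier = hbos_score(model, [0.9, -0.5])
print(typical < outlier)  # True
```

Because each feature is scored independently, HBOS is fast but ignores correlations between embedding dimensions. This is one plausible reason why a supervised two-class model on the same vectors can do much better.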

Meta-Learning for Time Series Forecasting Ensemble

Nov 20, 2020

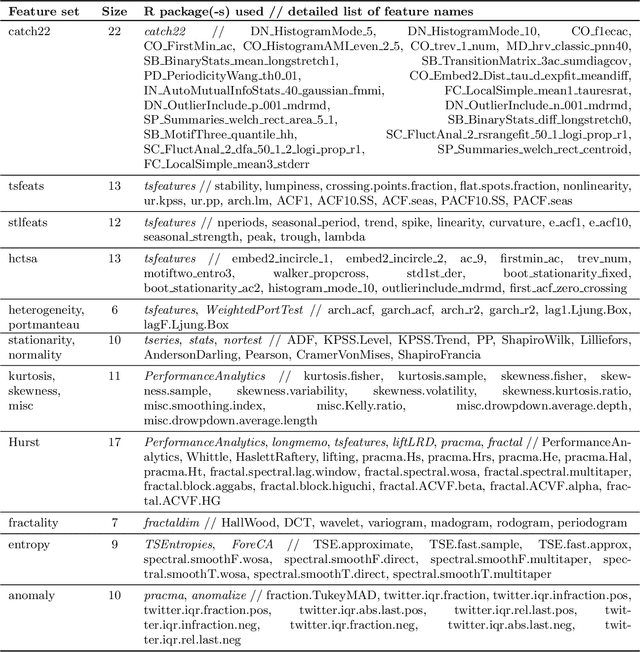

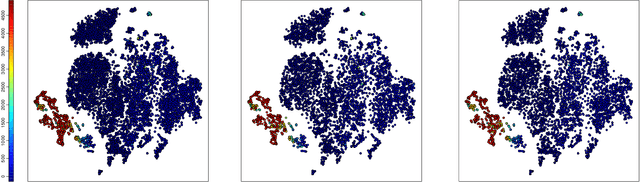

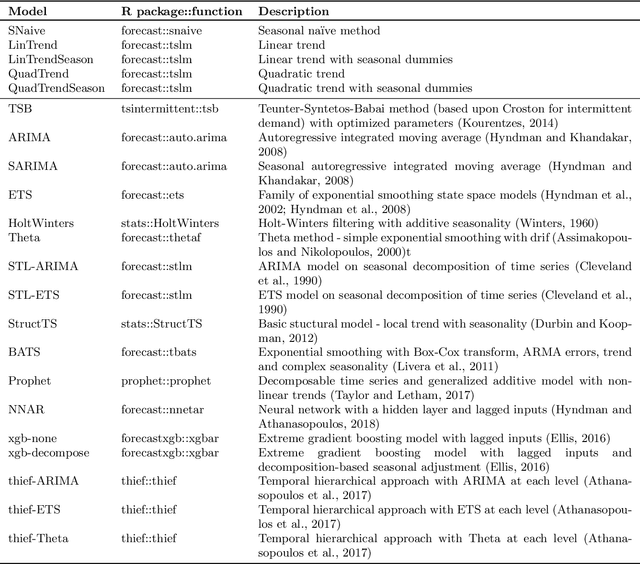

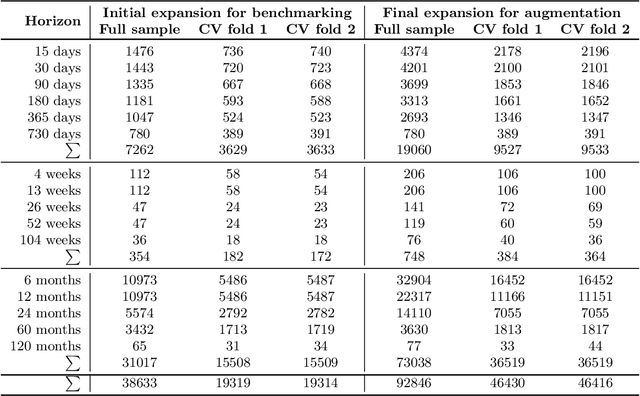

Abstract: As the amount of collected historical data grows, so do the applicability of business intelligence and the demand for automatic time series forecasting. Since no single time series modeling method suits all types of dynamics, forecasting with an ensemble of several methods is often seen as a compromise. Instead of fixing ensemble diversity and size, we propose to predict these aspects adaptively using meta-learning. Here, meta-learning relies on two separate random forest regression models, built on 390 time series features, to rank 22 univariate forecasting methods and to recommend the ensemble size. The forecasting ensemble is then formed from the top-ranked methods, and their forecasts are pooled using either a simple or a weighted average (with weights corresponding to the reciprocal rank). The proposed approach was tested on 12561 micro-economic time series (expanded to 38633 for various forecasting horizons) from the M4 competition, where meta-learning outperformed the Theta and Comb benchmarks in relative forecasting errors for all data types and horizons. The best overall results were achieved by weighted pooling, with a symmetric mean absolute percentage error (sMAPE) of 9.21% versus 11.05% for the Theta method.
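The pooling step described above takes the forecasts of the top-k ranked methods and averages them, either equally or with reciprocal-rank weights. The results are then compared by sMAPE. Below is a minimal sketch under stated assumptions, not the authors' code. The random-forest ranking step is omitted, and the ranking is passed in directly, best method first (rank 1). Rank r gets weight 1/r, normalized to sum to 1. sMAPE uses the common M4-style definition, 200/h times the sum of |y - ŷ| / (|y| + |ŷ|). The function names `pool_forecasts` and `smape` and the toy method names and numbers are hypothetical.

```python
from typing import Dict, List, Sequence


def pool_forecasts(
    forecasts: Dict[str, Sequence[float]],
    ranking: Sequence[str],
    k: int,
    weighted: bool = True,
) -> List[float]:
    """Average the forecasts of the k best-ranked methods.

    ranking[0] is the best method (rank 1). With weighted=True the method of
    rank r gets weight 1/r; otherwise all chosen methods are weighted equally.
    Weights are normalized so that they sum to 1.
    """
    chosen = ranking[:k]
    weights = [1.0 / r if weighted else 1.0 for r in range(1, len(chosen) + 1)]
    total = sum(weights)
    horizon = len(forecasts[chosen[0]])
    return [
        sum(w * forecasts[m][t] for w, m in zip(weights, chosen)) / total
        for t in range(horizon)
    ]


def smape(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Symmetric MAPE in percent (M4-style; undefined if actual and predicted are both 0)."""
    terms = [2.0 * abs(a - p) / (abs(a) + abs(p)) for a, p in zip(actual, predicted)]
    return 100.0 * sum(terms) / len(terms)


# Toy usage with hypothetical method names and a 2-step horizon.
forecasts = {"theta": [10.0, 12.0], "ets": [11.0, 13.0], "naive": [9.0, 9.0]}
ranking = ["ets", "theta", "naive"]  # e.g. as predicted by a meta-learner
weighted = pool_forecasts(forecasts, ranking, k=2)                # weights 2/3, 1/3
simple = pool_forecasts(forecasts, ranking, k=2, weighted=False)  # [10.5, 12.5]
print(smape([11.0, 12.0], weighted), smape([11.0, 12.0], simple))
```

Reciprocal-rank weights let the best-ranked method dominate while still averaging out some of its errors. Here `k` plays the role of the ensemble size that the second meta-model is said to recommend.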