Renato Hermoza Aragonés

Using Neural Networks and Diversifying Differential Evolution for Dynamic Optimisation

Aug 10, 2020

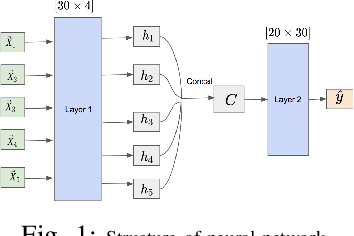

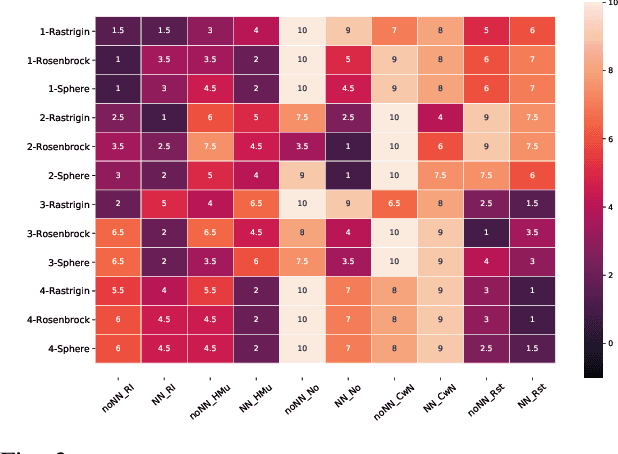

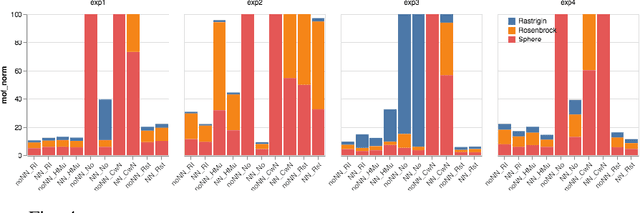

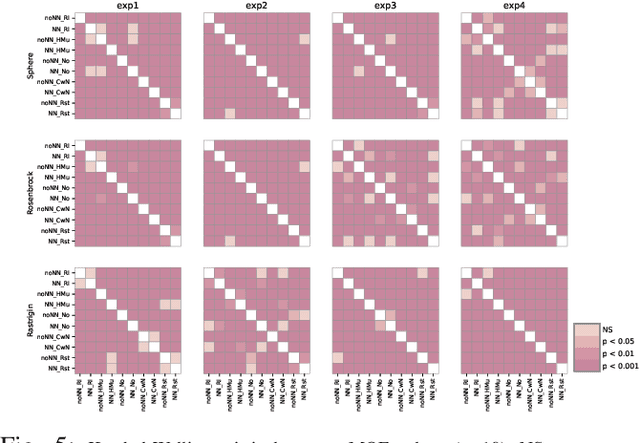

Abstract: Dynamic optimisation occurs in a variety of real-world problems. To tackle these problems, evolutionary algorithms have been used extensively due to their effectiveness and minimal design effort. However, for dynamic problems, extra mechanisms are required on top of standard evolutionary algorithms. Among them, diversity mechanisms have proven to be competitive in handling dynamism, and recently, the use of neural networks has become popular for this purpose. Considering the complexity of using neural networks in the process compared to simple diversity mechanisms, we investigate whether they are competitive and whether integrating the two can improve the results. For a fair comparison, we need to consider the same time budget for each algorithm. Thus, instead of the usual number of fitness evaluations as the measure of the available time between changes, we use wall-clock time. The results show that the significance of the improvement obtained by integrating the neural network and diversity mechanisms depends on the type and the frequency of changes. Moreover, we observe that for differential evolution, having proper diversity in the population when using neural networks plays a key role in the neural network's ability to improve the results.
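To make the wall-clock budget idea concrete, here is a minimal sketch of a differential evolution loop that stops when a time budget runs out rather than after a fixed number of fitness evaluations. It is not the paper's implementation: the objective `sphere`, the DE/rand/1/bin variant, the parameter values, and the names `de_step` and `run_for` are all assumptions chosen for illustration.

```python
import random
import time

def sphere(x, shift):
    """Hypothetical dynamic objective: a sphere whose optimum sits at `shift`."""
    return sum((xi - shift) ** 2 for xi in x)

def de_step(pop, fitness, f=0.5, cr=0.9):
    """One generation of DE/rand/1/bin (minimisation), updating `pop` in place."""
    n, dim = len(pop), len(pop[0])
    scores = [fitness(ind) for ind in pop]
    for i in range(n):
        # Pick three distinct individuals, all different from the target i.
        a, b, c = random.sample([j for j in range(n) if j != i], 3)
        j_rand = random.randrange(dim)  # guarantees at least one mutated gene
        trial = [
            pop[a][k] + f * (pop[b][k] - pop[c][k])
            if (random.random() < cr or k == j_rand) else pop[i][k]
            for k in range(dim)
        ]
        # Greedy selection: keep the trial only if it is no worse.
        if fitness(trial) <= scores[i]:
            pop[i] = trial
    return pop

def run_for(pop, fitness, budget_seconds):
    """Evolve until the wall-clock budget is spent; return generations completed."""
    deadline = time.perf_counter() + budget_seconds
    generations = 0
    while time.perf_counter() < deadline:
        de_step(pop, fitness)
        generations += 1
    return generations
```

Under this kind of budget, any extra mechanism that costs time (for example, training a predictor after a change) directly reduces the number of generations the base algorithm can complete before the next change, which is what makes the comparison time-fair.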

Neural Networks in Evolutionary Dynamic Constrained Optimization: Computational Cost and Benefits

Jan 22, 2020

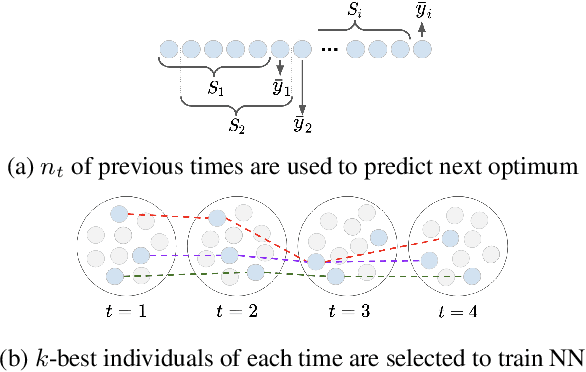

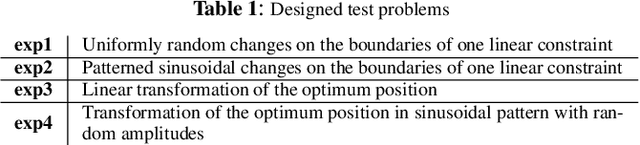

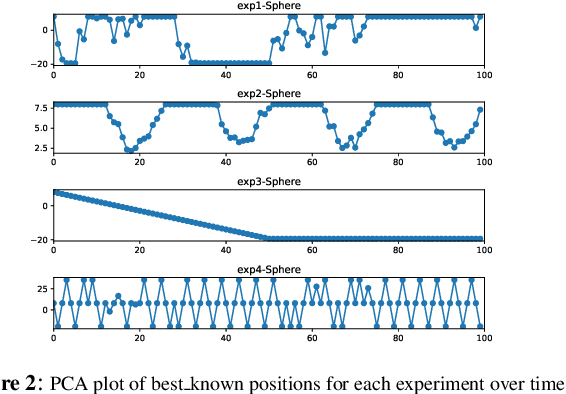

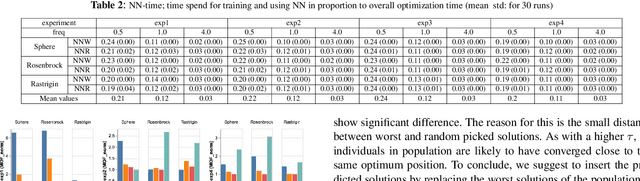

Abstract: Neural networks (NNs) have recently been applied together with evolutionary algorithms (EAs) to solve dynamic optimization problems. The applied NN estimates the position of the next optimum based on the best solutions from previous time steps. After a change is detected, the predicted solution can be used to move the EA's population to a promising region of the solution space in order to accelerate convergence and improve accuracy in tracking the optimum. While previous works show improved results, they neglect the overhead created by the NN. In this work, we include the time spent on training the NN in the optimization time and compare the results with a baseline EA. We explore whether, once the generated overhead is considered, the NN is still able to improve the results, and under which conditions it is able to do so. The main difficulties in training the NN are: 1) getting enough samples to generalize predictions to new data, and 2) obtaining reliable samples. As the NN needs to collect data at each time step, if the time horizon is short, we will not be able to collect enough samples to train it. To alleviate this, we propose to consider more individuals at each change to speed up sample collection in shorter time horizons. In environments with a high frequency of changes, the solutions produced by the EA are likely to be far from the real optimum. Using unreliable training data for the NN will, in consequence, produce unreliable predictions. Also, as the time spent on the NN stays fixed regardless of the frequency, a higher frequency of change means a higher overhead from the NN in proportion to the EA. In general, after considering the generated overhead, we conclude that the NN is not suitable for environments with a high frequency of changes and/or short time horizons. However, it can be promising for a low frequency of changes, and especially for environments in which the changes follow a pattern.
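Two of the points above can be illustrated with simple arithmetic: how few training samples a short time horizon yields, and how a fixed NN cost takes a larger share of each interval as changes become more frequent. The sketch below is an illustration only. The sliding-window sample format, the window size, and the names `make_samples` and `nn_overhead_share` are assumptions, not details taken from the paper.

```python
def make_samples(best_history, window):
    """Pair each run of `window` consecutive best solutions with the one that follows.

    `best_history` holds one best solution per past time step (assumed format).
    """
    return [
        (best_history[i:i + window], best_history[i + window])
        for i in range(len(best_history) - window)
    ]

def nn_overhead_share(nn_seconds, seconds_between_changes):
    """Fraction of each change interval consumed by a fixed NN cost."""
    return nn_seconds / seconds_between_changes

# With one best solution per change, five time steps and a window of three
# give only two training samples.
history = [[0.0], [1.0], [2.0], [3.0], [4.0]]
samples = make_samples(history, window=3)

# The same 0.5 s of NN time is 5% of a 10 s interval but 50% of a 1 s interval.
slow_changes = nn_overhead_share(0.5, 10)
fast_changes = nn_overhead_share(0.5, 1)
```

Collecting several individuals per change instead of one, as the abstract proposes, would multiply the number of entries available per time step and so shorten the wait before the predictor has enough data.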
