Maryam Hasani-Shoreh

Neural Networks in Evolutionary Dynamic Constrained Optimization: Computational Cost and Benefits

Jan 22, 2020

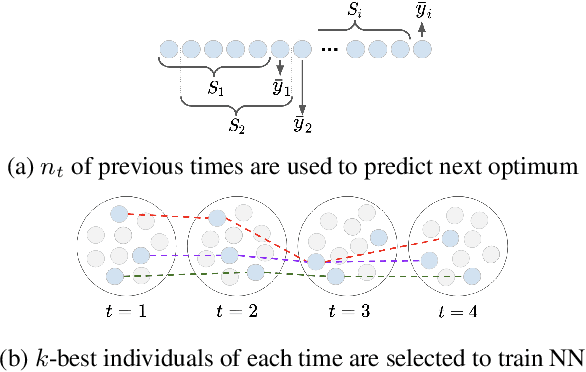

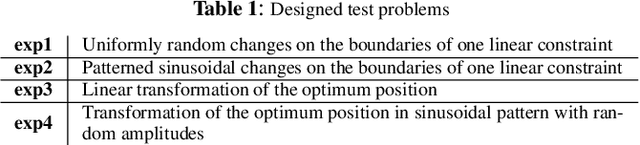

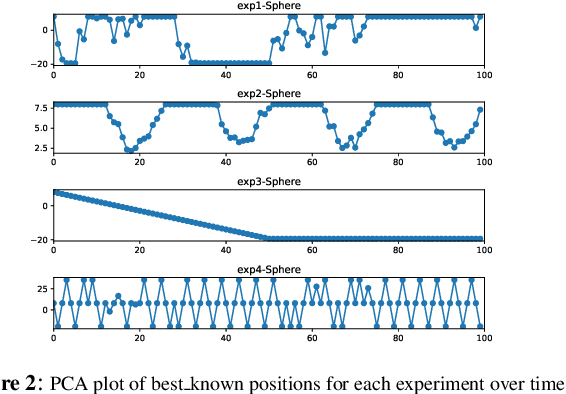

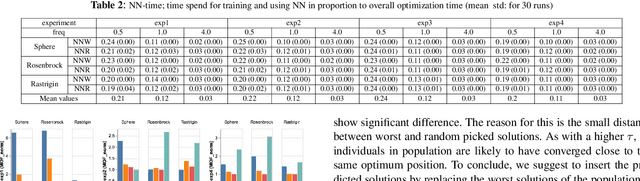

Abstract: Neural networks (NNs) have recently been applied together with evolutionary algorithms (EAs) to solve dynamic optimization problems. The NN estimates the position of the next optimum from the best solutions found at previous time steps. After a change is detected, the predicted solution can be used to move the EA's population to a promising region of the solution space, accelerating convergence and improving accuracy in tracking the optimum. While previous works report improved results, they neglect the overhead created by the NN. In this work, we include the time spent training the NN in the optimization time and compare the results with a baseline EA. We explore whether the NN still improves the results once this overhead is considered, and under which conditions it does so. The main difficulties in training the NN are: 1) obtaining enough samples to generalize predictions to new data, and 2) obtaining reliable samples. Because the NN collects data at each time step, a short time horizon does not provide enough samples to train it. To alleviate this, we propose to consider more individuals at each change to speed up sample collection in fewer time steps. In environments with a high frequency of changes, the solutions produced by the EA are likely to be far from the real optimum. Using unreliable training data for the NN will consequently produce unreliable predictions. Also, as the time spent on the NN stays fixed regardless of the frequency, a higher frequency of change means a higher overhead from the NN in proportion to the EA. Overall, after considering the generated overhead, we conclude that the NN is not suitable for environments with a high frequency of changes and/or short time horizons. However, it can be promising for low frequencies of change, and especially for environments in which the changes follow a pattern.
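The prediction-and-seeding loop described in this abstract can be sketched roughly as follows. This is a minimal illustration, not the paper's implementation: all function names are hypothetical, the predictor is a plain linear extrapolation standing in for the trained NN, and replacing the first individuals of the population is an assumed seeding policy. The last helper shows why a fixed predictor cost weighs more when changes are frequent.

```python
import random


def make_training_samples(best_history, window):
    """Build (inputs, target) pairs from the best solution of each past change.

    Each input is `window` consecutive best solutions; the target is the
    best solution that followed them.
    """
    samples = []
    for i in range(len(best_history) - window):
        samples.append((best_history[i:i + window], best_history[i + window]))
    return samples


def extrapolate_next(best_history):
    """Stand-in predictor (NOT a neural network): linear extrapolation
    from the last two best solutions."""
    prev, last = best_history[-2], best_history[-1]
    return [2 * l - p for p, l in zip(prev, last)]


def seed_population(population, predicted, n_seeded, sigma, rng):
    """Replace the first `n_seeded` individuals (assumed policy) with
    Gaussian samples centred on the predicted optimum."""
    seeded = [[x + rng.gauss(0.0, sigma) for x in predicted]
              for _ in range(n_seeded)]
    return seeded + population[n_seeded:]


def predictor_overhead_fraction(predictor_time, time_per_eval, evals_per_change):
    """Share of the time between two changes that is spent on the predictor.

    `predictor_time` is fixed, so fewer evaluations per change (a higher
    change frequency) gives a larger fraction.
    """
    ea_time = time_per_eval * evals_per_change
    return predictor_time / (predictor_time + ea_time)


if __name__ == "__main__":
    rng = random.Random(0)
    history = [[0.0, 0.0], [1.0, 2.0], [2.0, 4.0]]
    predicted = extrapolate_next(history)
    population = [[rng.uniform(-5, 5) for _ in range(2)] for _ in range(10)]
    population = seed_population(population, predicted, 3, 0.1, rng)
    print(predicted, len(population))
    print(predictor_overhead_fraction(2.0, 0.5, 4))
    print(predictor_overhead_fraction(2.0, 0.5, 12))
```

With only three recorded optima and a window of two, `make_training_samples` yields a single training pair, which illustrates the sample shortage the abstract attributes to short time horizons.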

On the Use of Diversity Mechanisms in Dynamic Constrained Continuous Optimization

Oct 02, 2019

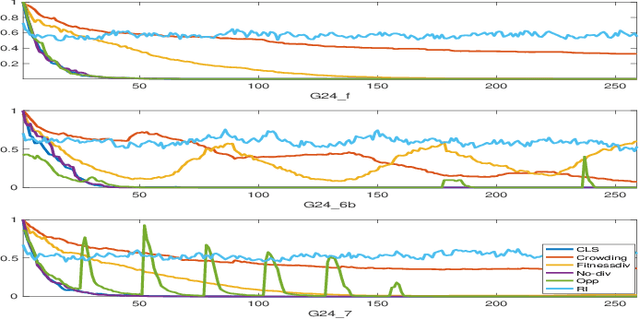

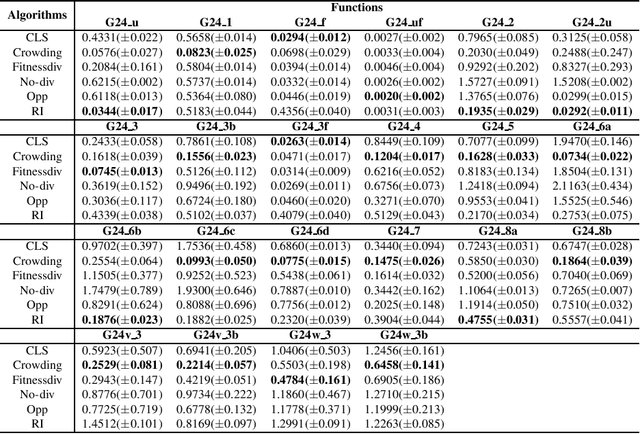

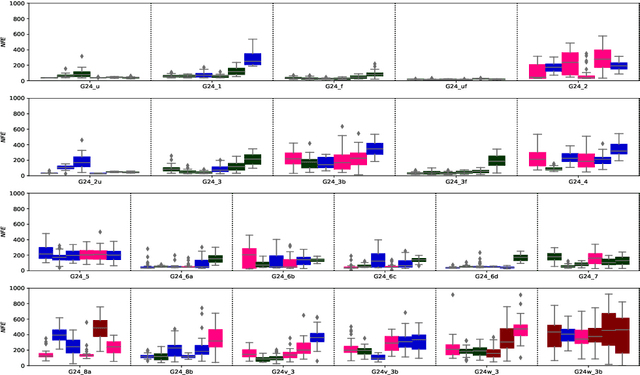

Abstract: Population diversity plays a key role in evolutionary algorithms by enabling global exploration and avoiding premature convergence. This is especially crucial in dynamic optimization, where diversity helps the population keep track of the global optimum by adapting to the changing environment. Dynamic constrained optimization problems (DCOPs) have been the focus of many researchers in recent years because they cover many current real-world problems. Despite the importance of diversity in dynamic optimization, there has so far been no extensive study of the effects of diversity promotion techniques in DCOPs. To address this gap, this paper investigates how different diversity mechanisms influence the behavior of algorithms in DCOPs. To this end, we apply and adapt the most common diversity promotion mechanisms for dynamic environments, using differential evolution (DE) as our base algorithm. The results show that, in most test cases, applying diversity techniques to DCOPs leads to a significant improvement over the baseline algorithm in terms of modified offline error values.
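As a rough illustration of what a diversity promotion mechanism does, the sketch below uses random immigrants, a widely used scheme in dynamic optimization. The abstract does not name the mechanisms studied, so this choice is an assumption. The function names, the replacement rate, and the centroid-distance diversity measure are all hypothetical.

```python
import random


def random_immigrants(population, bounds, replace_rate, rng):
    """Replace a random subset of individuals with uniform samples in `bounds`.

    `bounds` is a list of (low, high) pairs, one per dimension.
    """
    n_replace = int(replace_rate * len(population))
    new_population = [list(ind) for ind in population]
    for idx in rng.sample(range(len(population)), n_replace):
        new_population[idx] = [rng.uniform(lo, hi) for lo, hi in bounds]
    return new_population


def mean_distance_to_centroid(population):
    """Simple diversity measure: mean Euclidean distance to the centroid."""
    size, dim = len(population), len(population[0])
    centroid = [sum(ind[d] for ind in population) / size for d in range(dim)]
    distances = [
        sum((ind[d] - centroid[d]) ** 2 for d in range(dim)) ** 0.5
        for ind in population
    ]
    return sum(distances) / size


if __name__ == "__main__":
    rng = random.Random(1)
    # A fully converged population has zero diversity.
    converged = [[0.0, 0.0] for _ in range(10)]
    refreshed = random_immigrants(converged, [(-5.0, 5.0), (-5.0, 5.0)], 0.3, rng)
    print(mean_distance_to_centroid(converged))
    print(mean_distance_to_centroid(refreshed))
```

In a DE loop, a step like this would typically run after a change is detected, or every generation, so that part of the population keeps exploring regions the converged individuals have left.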

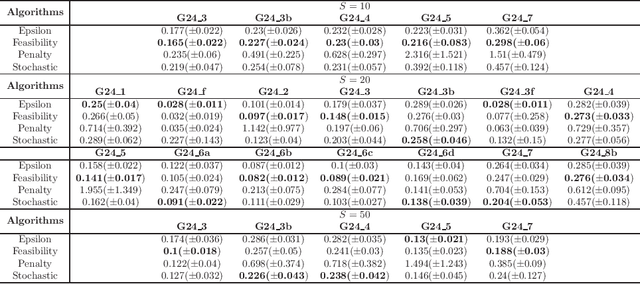

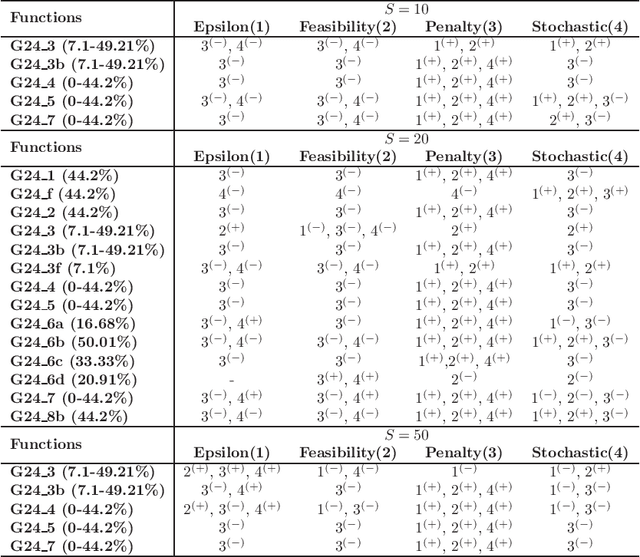

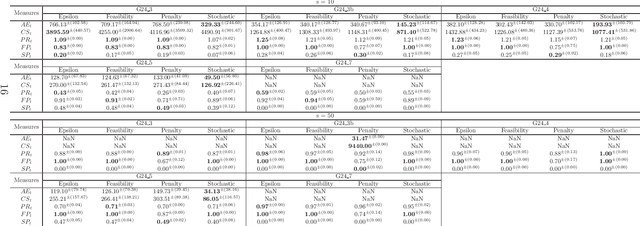

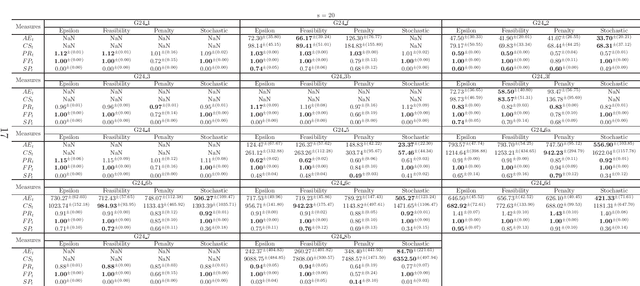

A Comparison of Constraint Handling Techniques for Dynamic Constrained Optimization Problems

Feb 16, 2018

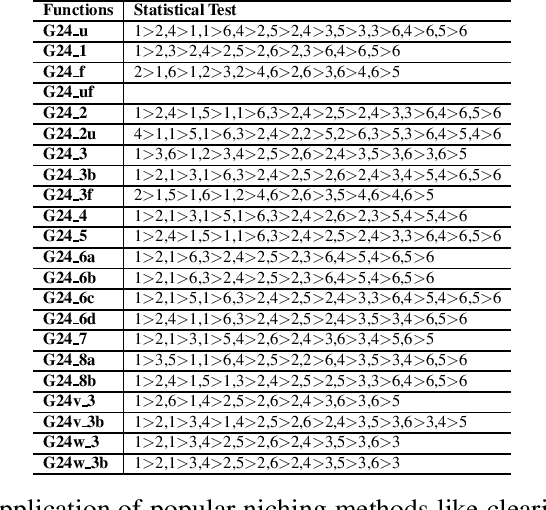

Abstract: Dynamic constrained optimization problems (DCOPs) have gained researchers' attention in recent years because a vast majority of real-world problems change over time. There are studies of the effect of constraint handling techniques in static optimization problems. However, there is no substantial study of the behavior of the most popular constraint handling techniques when dealing with DCOPs. In this paper, we study the four most popular constraint handling techniques and apply a simple differential evolution (DE) algorithm coupled with a change detection mechanism to observe their behavior. These behaviors were analyzed on a common benchmark to determine which techniques are suitable for the most prevalent types of DCOPs. For the analysis, common measures from static environments were adapted to suit dynamic environments. While no overall superior technique could be determined, certain techniques outperformed others in specific aspects such as rate of optimization or reliability of solutions.
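To make the idea of a constraint handling technique concrete, the sketch below shows two well-known options for minimization with inequality constraints of the form g_i(x) <= 0: feasibility rules and a static penalty. The abstract does not list the four techniques compared, so whether these two are among them is an assumption, and all names and the penalty weight are hypothetical.

```python
def total_violation(g_values):
    """Sum of violations for inequality constraints g_i(x) <= 0."""
    return sum(max(0.0, g) for g in g_values)


def preferred_by_feasibility_rules(f_a, viol_a, f_b, viol_b):
    """Return True if candidate a is preferred over candidate b (minimization).

    1. Two feasible candidates: the lower objective value wins.
    2. One feasible, one infeasible: the feasible one wins.
    3. Two infeasible candidates: the lower total violation wins.
    """
    if viol_a == 0 and viol_b == 0:
        return f_a <= f_b
    if viol_a == 0 or viol_b == 0:
        return viol_a == 0
    return viol_a <= viol_b


def static_penalty(f, viol, weight):
    """Penalized objective: the raw objective plus a weighted violation."""
    return f + weight * viol


if __name__ == "__main__":
    # The trial vector has a better objective value but violates one constraint.
    target_f, target_viol = 3.0, total_violation([-1.0, -0.5])
    trial_f, trial_viol = 1.0, total_violation([0.4, -0.2])
    print(preferred_by_feasibility_rules(trial_f, trial_viol, target_f, target_viol))
    print(static_penalty(trial_f, trial_viol, 10.0) < static_penalty(target_f, target_viol, 10.0))
```

In DE, a comparison like `preferred_by_feasibility_rules` would replace the plain objective comparison in the selection step between the trial vector and its target vector.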
