Recep Yusuf Bekci

Probabilistic Models for Manufacturing Lead Times

Apr 28, 2022

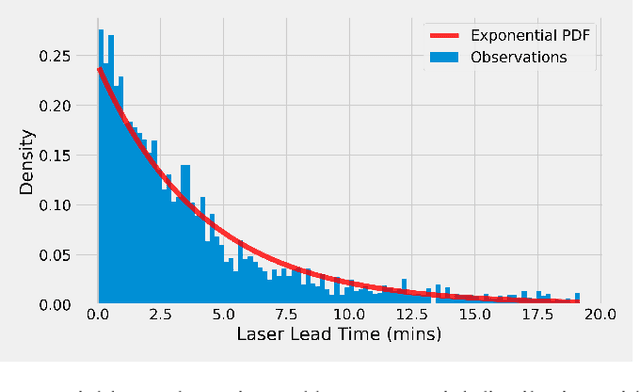

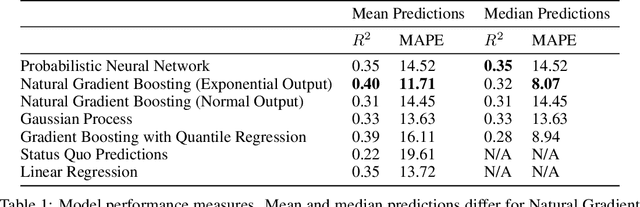

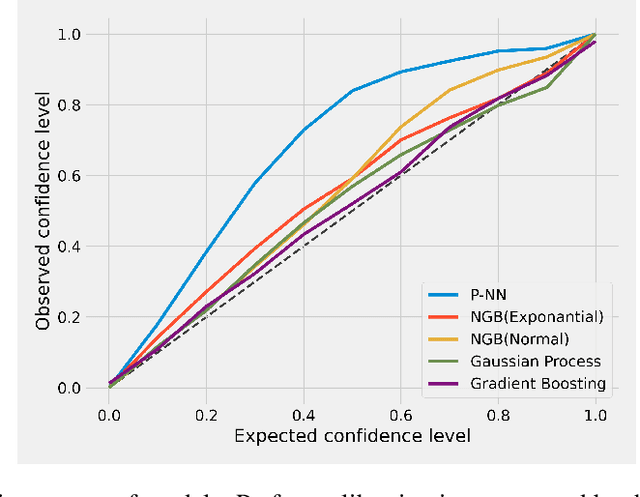

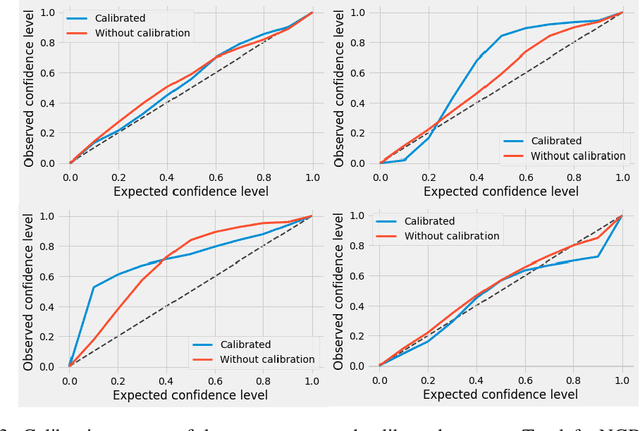

Abstract: In this study, we use Gaussian processes, probabilistic neural networks, natural gradient boosting, and quantile regression augmented gradient boosting to model lead times of laser manufacturing processes. We introduce probabilistic modelling to this domain and compare the models across several capabilities. Besides comparing the models on real-life data, our work has many use cases and substantial business value. Our results indicate that all of the models outperform the company's estimation benchmark, which relies on domain experience, and are well calibrated against the empirical frequencies.
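As a rough illustration of what "calibration with the empirical frequencies" can mean for a quantile forecast, the sketch below computes the pinball (quantile) loss and the empirical coverage of a predicted quantile. It is not the paper's code: the function names, the synthetic gamma-distributed lead times, and the constant predictor are all assumptions made for the example.

```python
import numpy as np

def pinball_loss(y_true, y_pred, q):
    """Average pinball (quantile) loss for a quantile level q in (0, 1)."""
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    # Under-predictions are weighted by q, over-predictions by (1 - q).
    return float(np.mean(np.maximum(q * diff, (q - 1.0) * diff)))

def empirical_coverage(y_true, y_pred_quantile):
    """Fraction of observations at or below the predicted quantile."""
    return float(np.mean(np.asarray(y_true) <= np.asarray(y_pred_quantile)))

# Synthetic lead times (hypothetical data, not taken from the paper).
rng = np.random.default_rng(0)
lead_times = rng.gamma(shape=2.0, scale=3.0, size=5000)

# A trivial "model" that always predicts the sample 0.9-quantile.
q = 0.9
constant_pred = np.full_like(lead_times, np.quantile(lead_times, q))

# For a well-calibrated 0.9-quantile forecast, coverage should be near 0.9.
coverage = empirical_coverage(lead_times, constant_pred)
loss = pinball_loss(lead_times, constant_pred, q)
```

A real evaluation would apply the same two functions to held-out data and to the per-order quantiles produced by each model, at several quantile levels.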

Visualizing the Loss Landscape of Actor Critic Methods with Applications in Inventory Optimization

Sep 04, 2020

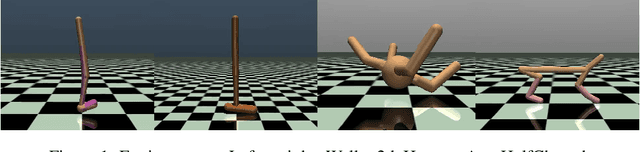

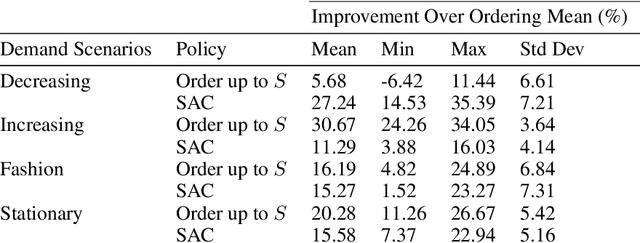

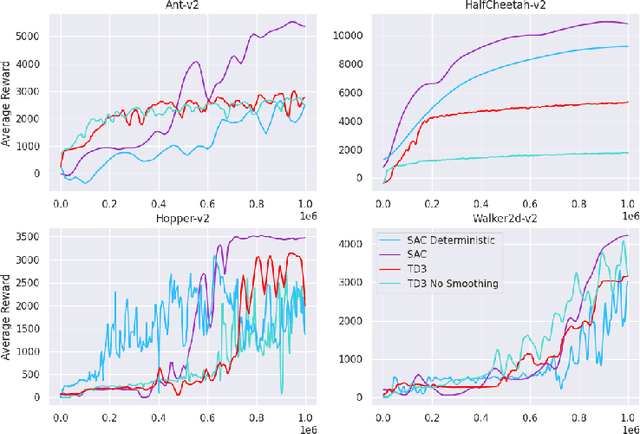

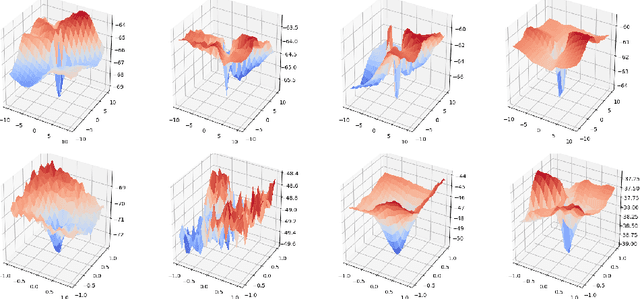

Abstract: Continuous control is a widely applicable area of reinforcement learning. The dominant approaches in this area are actor-critic methods, which commonly use policy gradients of neural approximators. The focus of our study is to show the characteristics of the actor loss function, which is the essential part of the optimization. We exploit low-dimensional visualizations of the loss function and compare the loss landscapes of various algorithms. Furthermore, we apply our approach to multi-store dynamic inventory control, a notoriously difficult problem in supply chain operations, and explore the shape of the loss function associated with the optimal policy. We model and solve the problem using reinforcement learning and obtain a loss landscape that favors optimality.
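One common way to obtain a low-dimensional view of a loss function is to evaluate it along a single direction through a chosen parameter vector. The sketch below shows that idea on a toy quadratic loss. It is only an assumption about the general technique, not the visualization procedure used in the paper; `loss_slice`, `toy_loss`, and the 10-dimensional parameter vector are hypothetical.

```python
import numpy as np

def loss_slice(loss_fn, theta, direction, alphas):
    """Evaluate loss_fn along theta + alpha * d, with d the unit-norm direction."""
    direction = direction / np.linalg.norm(direction)
    return np.array([loss_fn(theta + a * direction) for a in alphas])

def toy_loss(theta):
    """Stand-in for an actor loss: a quadratic bowl with its minimum at zero."""
    return float(np.sum(theta ** 2))

rng = np.random.default_rng(0)
theta_star = np.zeros(10)            # hypothetical trained parameters
direction = rng.normal(size=10)      # random direction in parameter space
alphas = np.linspace(-1.0, 1.0, 21)  # step sizes along the direction

# One-dimensional slice of the loss surface through theta_star.
values = loss_slice(toy_loss, theta_star, direction, alphas)
```

Plotting `values` against `alphas` gives a 1D profile; repeating the evaluation over a grid spanned by two directions gives a 2D surface.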
