Rashmi Bakshi

Feature Extraction and Prediction for Hand Hygiene Gestures with KNN Algorithm

Dec 30, 2021

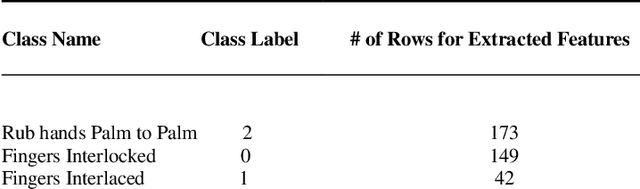

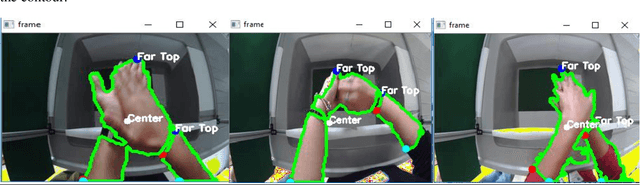

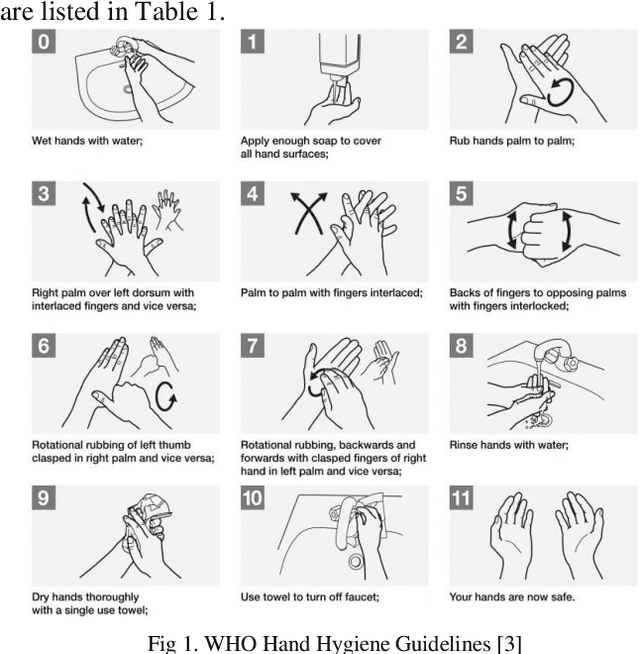

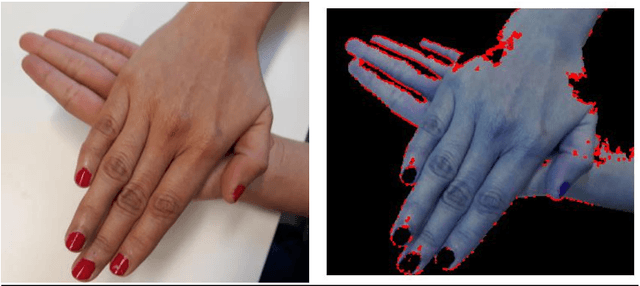

Abstract: This work analyses the hand gestures involved in hand washing. The World Health Organisation hand hygiene guidelines define six standard hand hygiene gestures. In this paper, hand features such as the contours of the hands, the centroid of the hands, and the extreme hand points along the largest contour are extracted with the computer vision library OpenCV. These features are extracted for each frame of a hand hygiene video. A robust hand hygiene dataset of video recordings was created in the project, and a subset of this dataset is used in this work. The extracted hand features are grouped into classes with the KNN algorithm, using k-fold cross-validation, for the classification and prediction of unlabelled data. A mean accuracy score of >95% is achieved, indicating that the KNN algorithm with an appropriate input value of K=5 is effective for this classification task. A complete dataset with six distinct hand hygiene classes will be used with the KNN classifier in future work.
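The classification step described above can be illustrated with a minimal sketch of K-nearest-neighbours (K=5) scored by k-fold cross-validation. This is not the paper's code: the feature vectors here are synthetic 2-D "centroid" points, and the class names, fold count, and helper names (`knn_predict`, `cross_val_accuracy`, `make_toy_features`) are assumptions made for illustration only.

```python
import random
from collections import Counter
from math import dist


def knn_predict(train_X, train_y, x, k=5):
    """Label x by majority vote among its k nearest training points."""
    nearest = sorted(zip(train_X, train_y), key=lambda p: dist(p[0], x))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def cross_val_accuracy(X, y, k=5, folds=5, seed=0):
    """Mean accuracy over `folds` folds of a shuffled split."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    scores = []
    for f in range(folds):
        test_idx = idx[f::folds]          # every folds-th sample is held out
        held_out = set(test_idx)
        train_idx = [i for i in idx if i not in held_out]
        train_X = [X[i] for i in train_idx]
        train_y = [y[i] for i in train_idx]
        correct = sum(
            knn_predict(train_X, train_y, X[i], k) == y[i] for i in test_idx
        )
        scores.append(correct / len(test_idx))
    return sum(scores) / folds


def make_toy_features(n_per_class=30, seed=1):
    """Synthetic stand-in for per-frame hand features (hypothetical values)."""
    rng = random.Random(seed)
    centres = {
        "palm_to_palm": (100.0, 120.0),
        "fingers_interlaced": (200.0, 80.0),
    }
    X, y = [], []
    for label, (cx, cy) in centres.items():
        for _ in range(n_per_class):
            X.append((cx + rng.gauss(0, 5), cy + rng.gauss(0, 5)))
            y.append(label)
    return X, y


X, y = make_toy_features()
mean_accuracy = cross_val_accuracy(X, y, k=5, folds=5)
print(f"mean cross-validated accuracy: {mean_accuracy:.2f}")
```

With real data, each row of `X` would hold the features extracted for one video frame, and the score would depend on how well those features separate the gesture classes.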

A Comparison of Deep Learning Models for the Prediction of Hand Hygiene Videos

Nov 03, 2021

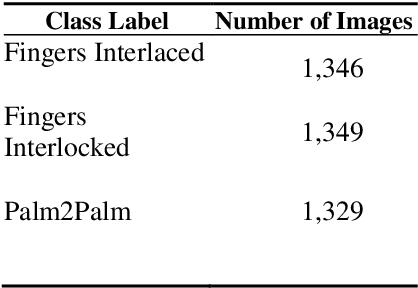

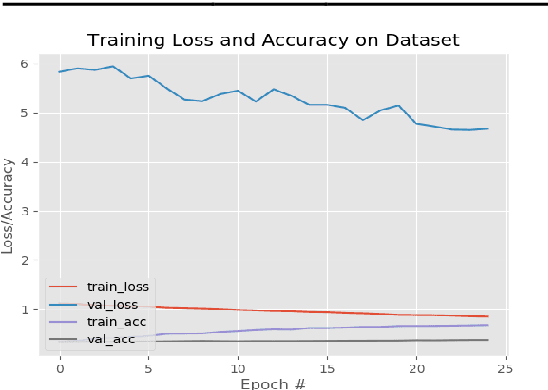

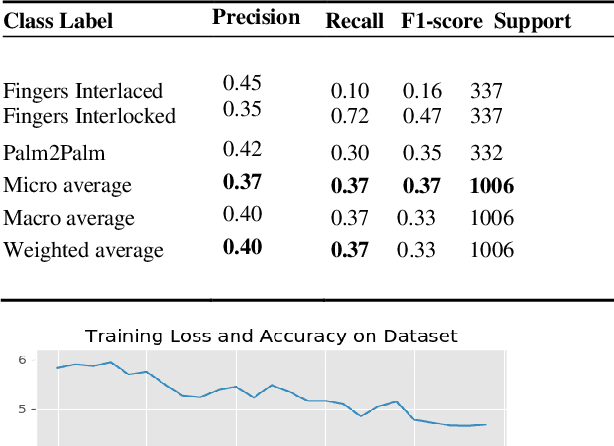

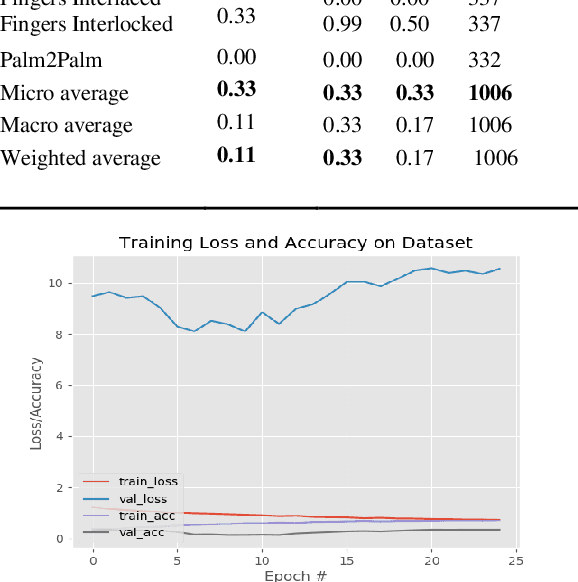

Abstract: This paper compares deep learning models, namely Xception, ResNet-50, and Inception V3, for the classification and prediction of hand hygiene gestures, which were recorded in accordance with the World Health Organization (WHO) guidelines. The dataset consists of six hand hygiene movements in video format, gathered from 30 participants. The network consists of pre-trained models with ImageNet weights and a modified model head. Accuracies of 37% (Xception), 33% (Inception V3), and 72% (ResNet-50) are reported in the classification report after training the models for 25 epochs. ResNet-50 clearly outperforms the other models in correct class predictions. The major speed limitation can be overcome with the use of a fast GPU in future work. A complete hand hygiene dataset, along with other generic gestures such as one-hand movements (linear hand motion; circular hand rotation), will be tested with the ResNet-50 architecture and its variants for health care workers.

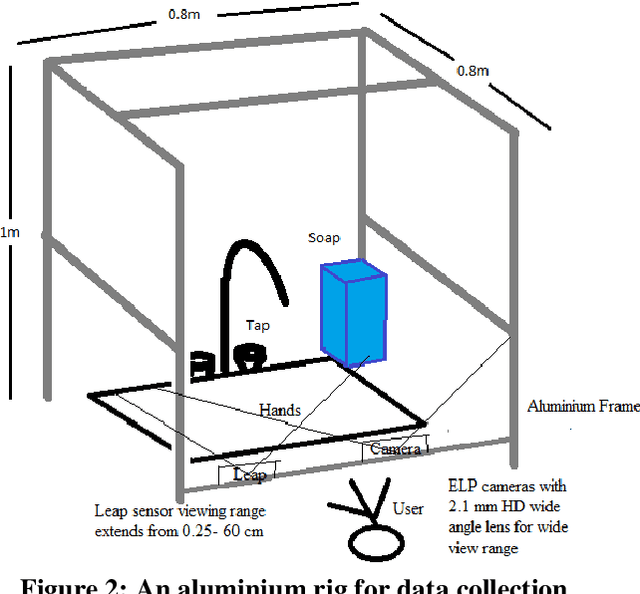

WHO-Hand Hygiene Gesture Classification System

Oct 06, 2021

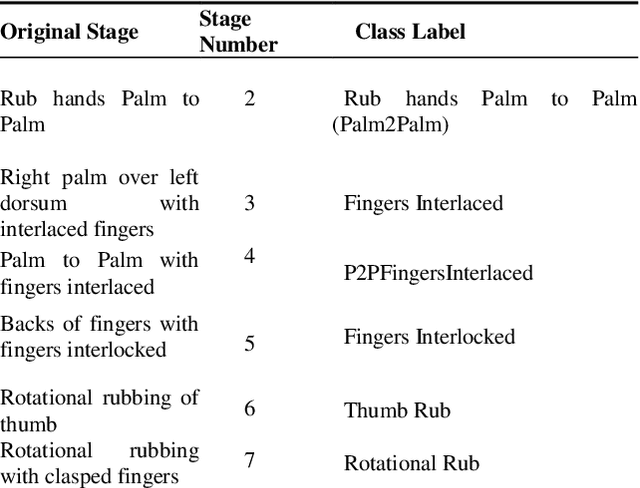

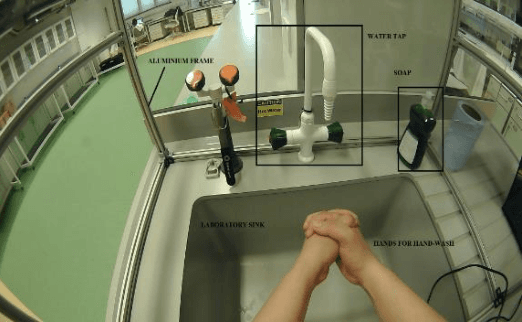

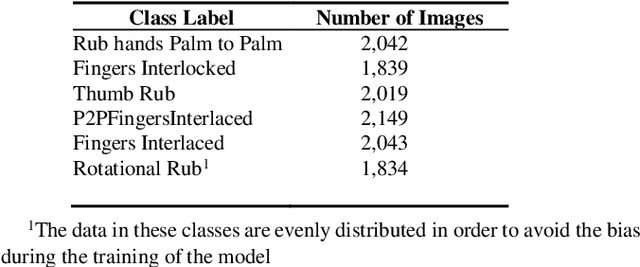

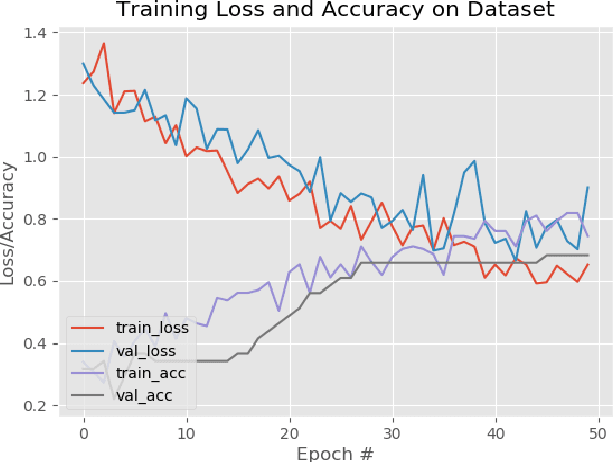

Abstract: The ongoing coronavirus pandemic highlights the importance of hand hygiene in our daily lives, with governments and health authorities worldwide promoting good hand hygiene practices. More than one million cases of hospital-acquired infections occur in Europe annually. Hand hygiene compliance may reduce the risk of transmission, lowering both the number of infections and healthcare expenditures. In this paper, the World Health Organization hand hygiene gestures are recorded and analyzed with the help of an aluminum frame constructed and placed at the laboratory sink. The gestures are recorded for thirty participants after a training session demonstrating the hand hygiene gestures. The video recordings are converted into image files and organized into six different hand hygiene classes. The ResNet-50 framework is selected for the multiclass classification of hand hygiene stages. The model is trained on the first set of classes (Fingers Interlaced, P2PFingers Interlaced, and Rotational Rub) for 25 epochs. An accuracy of 44 percent is achieved for the first set of experiments, with a loss score greater than 1.5 on the validation set. The second set of classes (Rub hands palm to palm, Fingers Interlocked, Thumb Rub) is trained for 50 epochs. An accuracy of 72 percent is achieved for the second set, with a loss score of less than 0.8 on the validation set. This work presents a preliminary analysis of a robust hand hygiene dataset with transfer learning. The future aim is to deploy a real-time hand hygiene prediction system for healthcare workers.

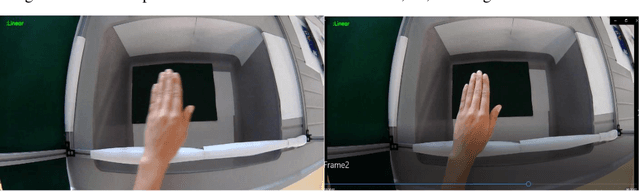

Hand Hygiene Video Classification Based on Deep Learning

Aug 18, 2021

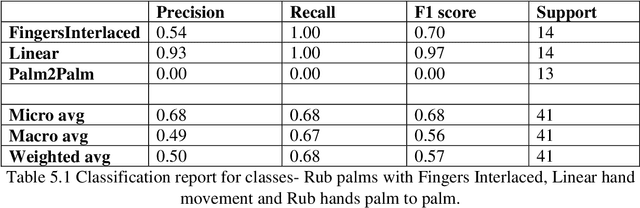

Abstract: In this work, an extensive review of the literature in the field of gesture recognition is carried out, along with the implementation of a simple deep learning classification system for hand hygiene stages. A subset of a robust dataset is used, consisting of two-handed handwashing gestures as well as one-hand gestures such as linear hand movement. A pre-trained neural network model, ResNet-50, with ImageNet weights is used to classify three categories: linear hand movement, rub hands palm to palm, and rub hands with fingers interlaced. Correct predictions are made for the first two classes with > 60% accuracy. A complete dataset, along with an increased number of classes and training steps, will be explored in future work.

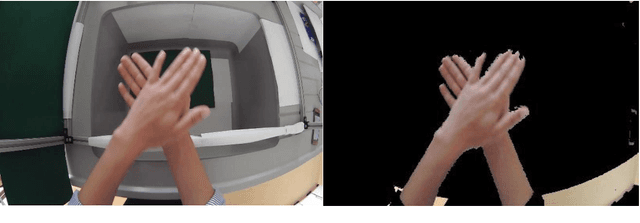

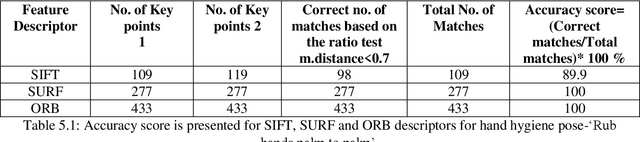

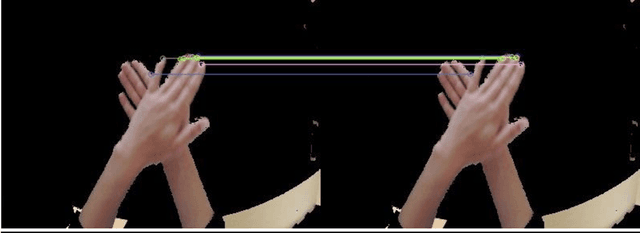

Feature Identification and Matching for Hand Hygiene Pose

Aug 14, 2021

Abstract: Three popular computer vision feature descriptors, SIFT, SURF, and ORB, are compared and evaluated. Features are extracted and matched between the original image of the hand hygiene pose Rub hands palm to palm and a rotated copy of that image, and the number of correct matches is counted. An accuracy score is calculated from the total number of matches and the number of correct matches. The experiment demonstrates that the ORB algorithm outperforms the others, giving a high number of correct matches in less time. In future work, the ORB feature detection technique will be applied to handwashing video recordings for feature extraction and hand hygiene pose classification. OpenCV is used to apply the algorithms within Python scripts.
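The accuracy score mentioned above (correct matches divided by total matches) can be sketched as follows. ORB produces binary descriptors that are conventionally compared with the Hamming distance, so this illustration uses brute-force Hamming matching on toy 8-bit descriptors. The descriptor values, the distance threshold, and the ground-truth mapping are all hypothetical; real ORB descriptors are 256 bits long and would come from a library such as OpenCV.

```python
def hamming(a: int, b: int) -> int:
    """Number of differing bits between two binary descriptors."""
    return bin(a ^ b).count("1")


def match_descriptors(query, train, max_distance=4):
    """Brute-force matching: pair each query descriptor with its nearest
    train descriptor, keeping the pair only if it is close enough."""
    matches = []
    for qi, q in enumerate(query):
        ti, d = min(
            ((ti, hamming(q, t)) for ti, t in enumerate(train)),
            key=lambda m: m[1],
        )
        if d <= max_distance:
            matches.append((qi, ti, d))
    return matches


def match_accuracy(matches, ground_truth):
    """Fraction of produced matches that agree with the known pairing."""
    if not matches:
        return 0.0
    correct = sum(1 for qi, ti, _ in matches if ground_truth.get(qi) == ti)
    return correct / len(matches)


# Toy descriptors for an "original" and a "rotated" image (hypothetical).
original = [0b11110000, 0b00001111, 0b10101010, 0b11001100]
rotated = [0b00001110, 0b11110001, 0b11001100, 0b01010101]

# Known true correspondences; query 2 has no true counterpart.
ground_truth = {0: 1, 1: 0, 3: 2}

matches = match_descriptors(original, rotated)
accuracy = match_accuracy(matches, ground_truth)
print(f"{len(matches)} matches, accuracy {accuracy:.2f}")
```

Here three of the four matches agree with the ground truth, so the score is 0.75. Lowering `max_distance` would reject the false match at the cost of possibly discarding true ones.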

Tracking Hand Hygiene Gestures with Leap Motion Controller

Aug 11, 2021

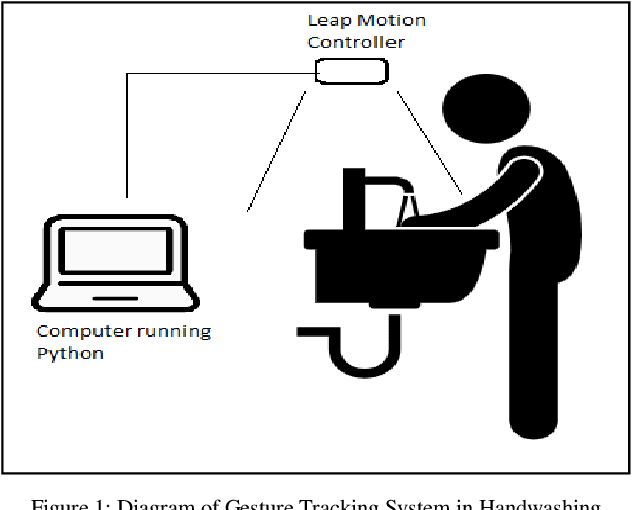

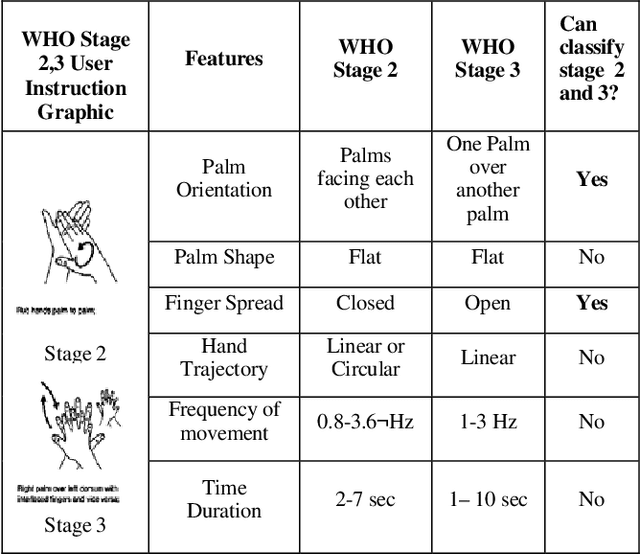

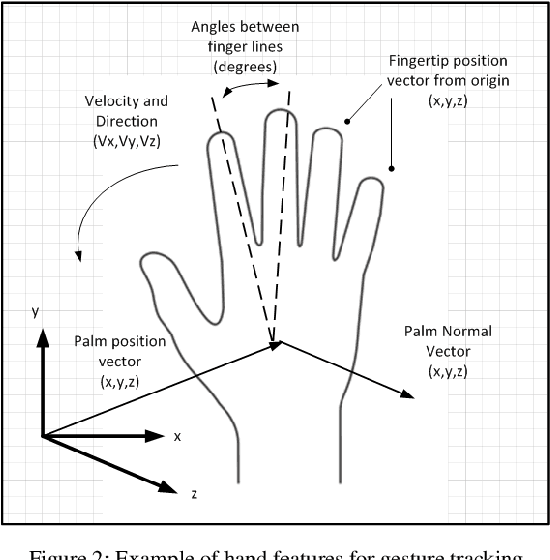

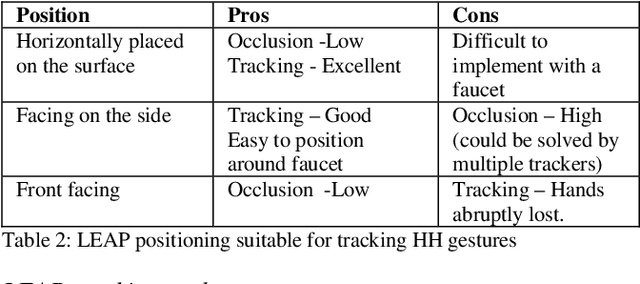

Abstract: The process of hand washing, according to the WHO, is divided into stages with clearly defined two-handed dynamic gestures. In this paper, videos of hand washing experts are segmented and analyzed with the goal of extracting their corresponding features. These features can be further processed in software to classify particular hand movements, determine whether the stages have been successfully completed by the user, and assess the quality of washing. Having identified the important features, a 3D gesture tracker, the Leap Motion Controller (LEAP), was used to track and detect the hand features associated with these stages. With the help of sequential programming and threshold values, the hand features were combined to detect the initiation and completion of a sample WHO Stage 2 (Rub hands Palm to Palm). The LEAP provides accurate raw positional data for tracking single-hand gestures and two separated hands, but suffers from occlusion when the hands are in contact. Beyond hand hygiene, the approaches shown here can be applied in other biomedical applications requiring close hand gesture analysis.
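The idea of combining sequential logic with threshold values to detect the start and end of a stage can be sketched as a small state machine. This is an illustration only: the single feature used (distance between the two palms, in millimetres), the 40 mm contact threshold, and the minimum contact duration are hypothetical choices, not values taken from the paper or from the Leap Motion API.

```python
def detect_stage(palm_distances_mm, contact_mm=40.0, min_contact_frames=5):
    """Scan per-frame palm distances and return (start_frame, end_frame)
    of the first sufficiently long contact period, or None if none occurs.

    The stage is considered initiated when the palms come closer than
    `contact_mm`, and completed when they separate again after at least
    `min_contact_frames` frames of contact.
    """
    state = "waiting"
    start = None
    for i, d in enumerate(palm_distances_mm):
        if state == "waiting" and d < contact_mm:
            state, start = "in_progress", i
        elif state == "in_progress" and d >= contact_mm:
            if i - start >= min_contact_frames:
                return start, i
            # Contact was too brief to count as the stage; start over.
            state, start = "waiting", None
    return None


# Hypothetical recordings: one with a sustained rub, one with a brief touch.
sustained = [120, 90, 35, 30, 32, 31, 29, 33, 80, 130]
brief = [120, 30, 31, 100]

print(detect_stage(sustained))
print(detect_stage(brief))
```

A tracker that loses the hands during contact, as the abstract notes for the LEAP, would need extra handling for missing frames, which this sketch omits.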

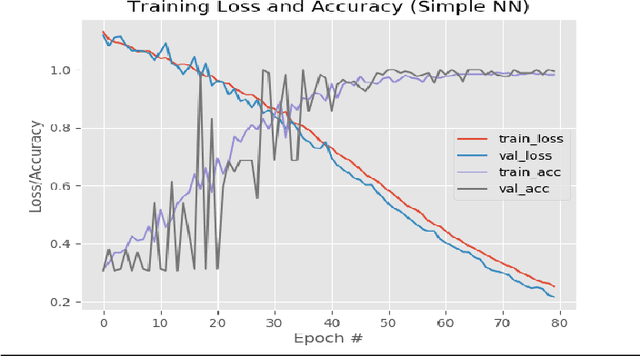

Hand Pose Classification Based on Neural Networks

Aug 10, 2021

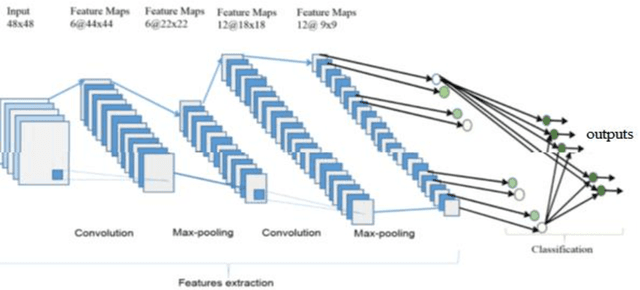

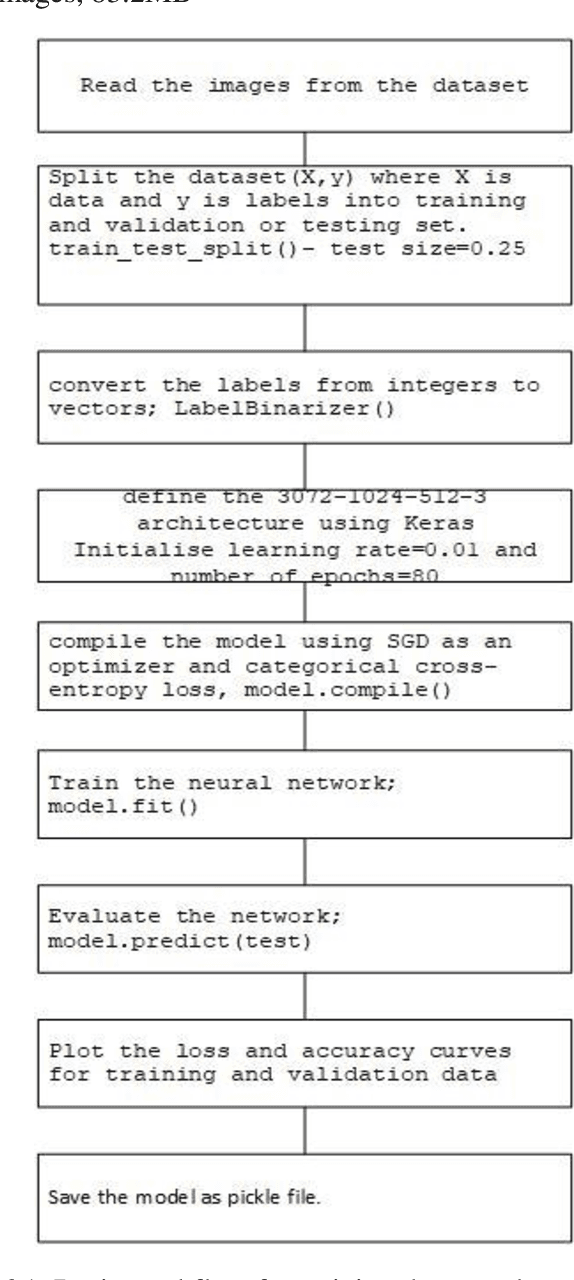

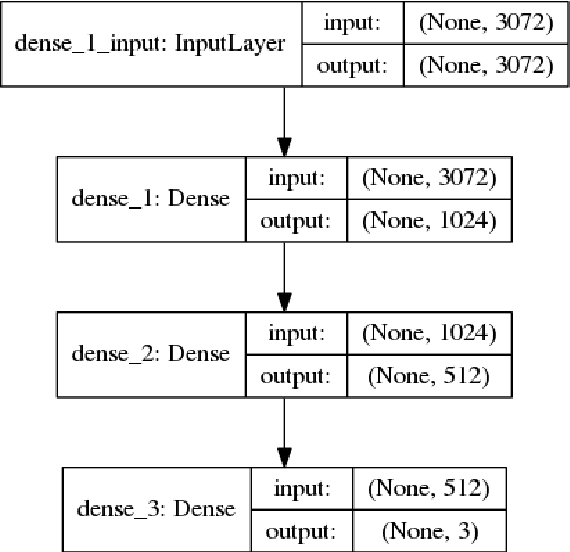

Abstract: In this work, deep learning models are applied to a segment of a robust hand-washing dataset that was created with the help of 30 volunteers. This work demonstrates the classification of the presence of one hand, two hands, or no hand in the scene based on transfer learning. A pre-trained model, the simplest NN from the Keras library, is used to train the network with 704 images of hand gestures, and predictions are carried out for an input image. Because the dataset is controlled and restricted, 100% accuracy is achieved during training, with correct predictions for the input image. In future work, the complete handwashing dataset will be used with dense models such as AlexNet for video classification of hand hygiene stages.

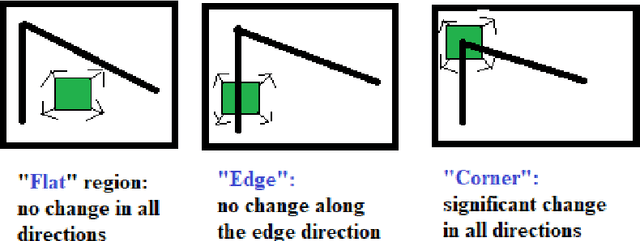

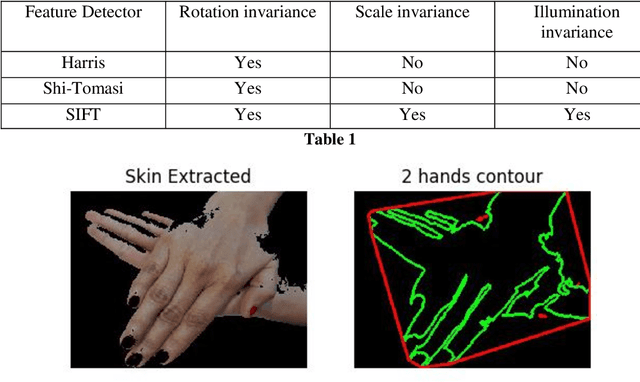

Feature Detection for Hand Hygiene Stages

Aug 06, 2021

Abstract: The process of hand washing involves complex hand movements. There are six principal sequential steps for washing hands as per the World Health Organisation (WHO) guidelines. In this work, the construction of an aluminium rig for creating a robust hand-washing dataset is described in detail. Preliminary results are demonstrated for hand pose extraction and feature detection using image processing and computer vision algorithms such as the Harris detector, Shi-Tomasi, and SIFT. The hand hygiene pose Rub hands palm to palm was captured as the input image for all the experiments. Future work will focus on processing the captured video recordings of hand movements and applying deep learning solutions for the classification of hand hygiene stages.
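The Harris and Shi-Tomasi detectors named above both score a pixel from the same local structure tensor and differ only in the scoring formula. The following is a minimal pure-Python sketch of those two scores on a synthetic image, assuming a 3x3 unweighted window, central-difference gradients, and the commonly used Harris constant k = 0.04. It is not the paper's implementation, and the function name is made up for this example.

```python
from math import sqrt


def structure_tensor_scores(img, y, x, k=0.04):
    """Return (harris_response, shi_tomasi_score) at pixel (y, x)."""
    h, w = len(img), len(img[0])

    def grad(yy, xx):
        # Central differences; gradients on or outside the border are zero.
        if not (0 < yy < h - 1 and 0 < xx < w - 1):
            return 0.0, 0.0
        ix = (img[yy][xx + 1] - img[yy][xx - 1]) / 2.0
        iy = (img[yy + 1][xx] - img[yy - 1][xx]) / 2.0
        return ix, iy

    # Sum gradient products over a 3x3 window to form the structure tensor.
    sxx = syy = sxy = 0.0
    for yy in range(y - 1, y + 2):
        for xx in range(x - 1, x + 2):
            ix, iy = grad(yy, xx)
            sxx += ix * ix
            syy += iy * iy
            sxy += ix * iy

    det = sxx * syy - sxy * sxy
    trace = sxx + syy
    harris = det - k * trace * trace
    # Shi-Tomasi uses the smaller eigenvalue of the structure tensor.
    shi_tomasi = (trace - sqrt((sxx - syy) ** 2 + 4.0 * sxy * sxy)) / 2.0
    return harris, shi_tomasi


# Synthetic 12x12 image: a bright square fills the lower-right quadrant,
# so (4, 4) is a corner, (8, 4) lies on a vertical edge, (1, 1) is flat.
img = [[1.0 if r >= 4 and c >= 4 else 0.0 for c in range(12)] for r in range(12)]

corner = structure_tensor_scores(img, 4, 4)
edge = structure_tensor_scores(img, 8, 4)
flat = structure_tensor_scores(img, 1, 1)
print("corner:", corner, "edge:", edge, "flat:", flat)
```

The corner pixel scores high under both measures, the edge pixel gets a negative Harris response and a zero Shi-Tomasi score, and the flat region scores zero, which is the behaviour both detectors rely on to pick out corner-like features.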
