Graham Gavin

Tracking Hand Hygiene Gestures with Leap Motion Controller

Aug 11, 2021

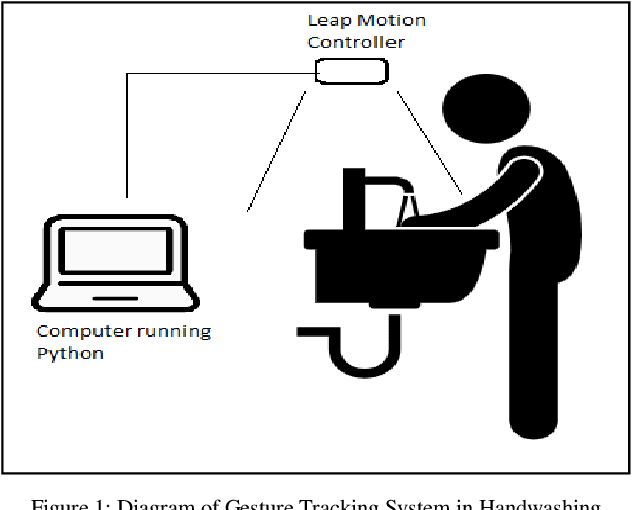

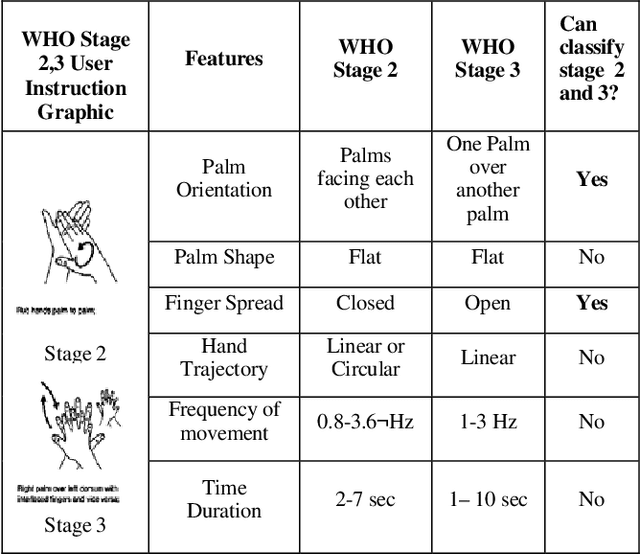

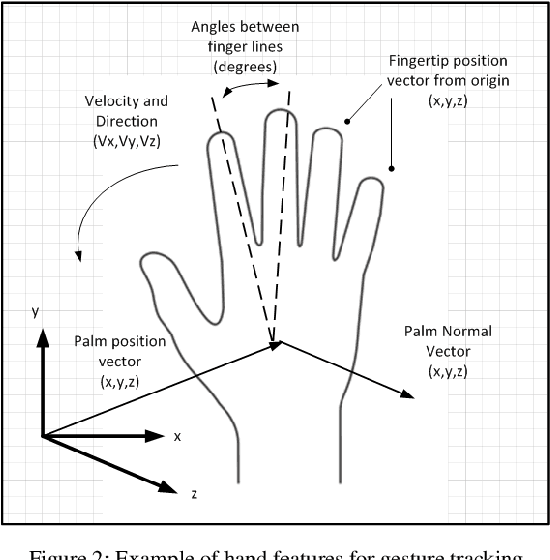

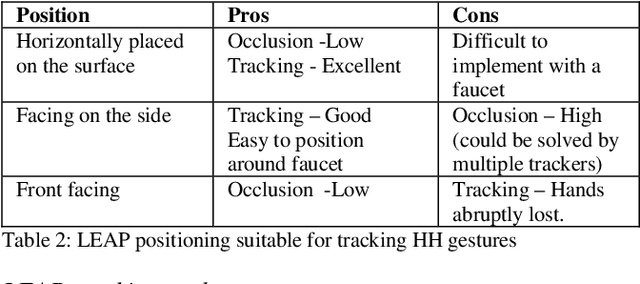

Abstract: The process of hand washing, according to the WHO, is divided into stages with clearly defined two-handed dynamic gestures. In this paper, videos of hand washing experts are segmented and analyzed to extract the features of each stage. These features can be further processed in software to classify particular hand movements, determine whether the user has successfully completed each stage, and assess the quality of washing. Having identified the important features, a 3D gesture tracker, the Leap Motion Controller (LEAP), was used to track and detect the hand features associated with these stages. Using sequential programming and threshold values, the hand features were combined to detect the initiation and completion of a sample stage, WHO Stage 2 (Rub hands palm to palm). The LEAP provides accurate raw positional data for tracking single-hand gestures and two separated hands, but suffers from occlusion when the hands are in contact. Beyond hand hygiene, the approaches shown here can be applied in other biomedical applications requiring close hand gesture analysis.
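As a rough illustration of what combining hand features with sequential logic and thresholds might look like, the sketch below steps through tracked frames and reports whether a palm-to-palm stage was started and then held long enough to count as completed. It is not the paper's implementation and does not use the Leap Motion SDK: the `Frame` type, the `detect_palm_to_palm` function, the palm-distance feature, and both threshold values (`contact_mm`, `min_duration_s`) are hypothetical choices made only for this example.

```python
from dataclasses import dataclass
from enum import Enum
from math import dist


class Stage(Enum):
    IDLE = "idle"            # hands not yet in the palm-to-palm pose
    RUBBING = "rubbing"      # stage initiated, still being timed
    COMPLETED = "completed"  # pose held for the required duration


@dataclass
class Frame:
    """One tracked frame (hypothetical layout, not the LEAP SDK's)."""
    t: float           # timestamp in seconds
    left_palm: tuple   # (x, y, z) palm centre in millimetres
    right_palm: tuple  # (x, y, z) palm centre in millimetres


def detect_palm_to_palm(frames, contact_mm=40.0, min_duration_s=3.0):
    """Return the final Stage after scanning frames in order.

    Both thresholds are assumed values for illustration only.
    """
    state = Stage.IDLE
    started_at = None
    for frame in frames:
        # Feature: distance between palm centres, compared to a threshold.
        close = dist(frame.left_palm, frame.right_palm) <= contact_mm
        if state is Stage.IDLE:
            if close:
                state, started_at = Stage.RUBBING, frame.t  # initiation
        elif state is Stage.RUBBING:
            if not close:
                state, started_at = Stage.IDLE, None  # pose broken, reset
            elif frame.t - started_at >= min_duration_s:
                state = Stage.COMPLETED  # completion
                break
    return state
```

A real detector would probably need more than palm distance, since the abstract itself notes that tracking degrades through occlusion once the hands touch.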

Feature Detection for Hand Hygiene Stages

Aug 06, 2021

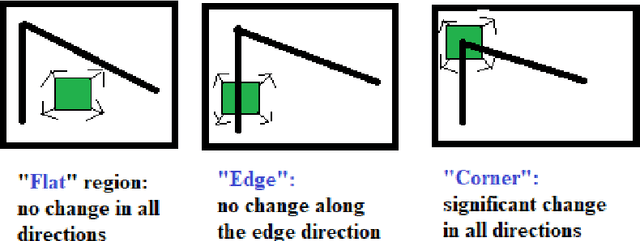

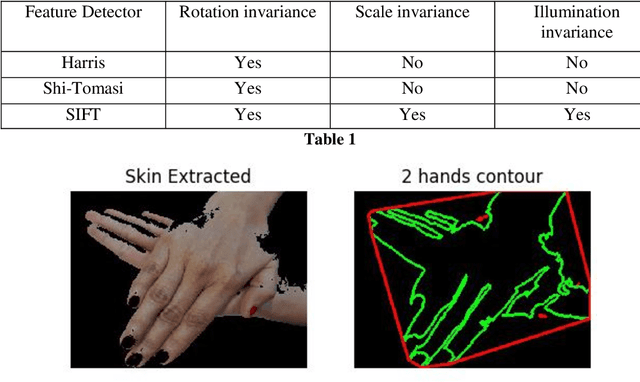

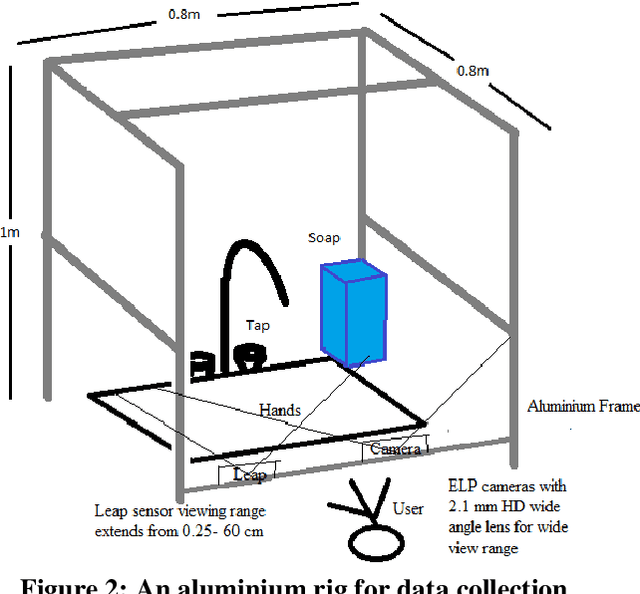

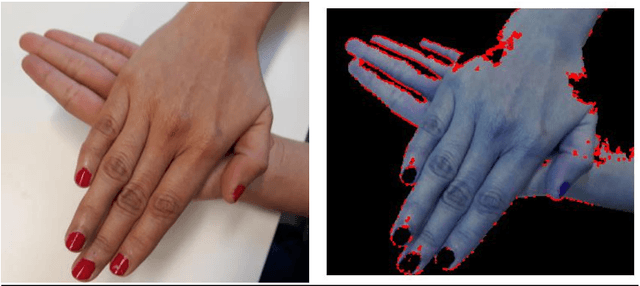

Abstract: The process of hand washing involves complex hand movements. According to World Health Organisation (WHO) guidelines, there are six principal sequential steps for washing hands. This work describes in detail the construction of an aluminium rig for creating a robust hand-washing dataset. Preliminary results are demonstrated for hand pose extraction and feature detection using image processing and computer vision algorithms such as the Harris detector, Shi-Tomasi and SIFT. The hand hygiene pose "Rub hands palm to palm" was captured as the input image for all the experiments. Future work will focus on processing the captured video recordings of hand movements and applying deep-learning solutions to classify hand-hygiene stages.
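To make the corner-detection idea concrete, here is a minimal, dependency-free sketch of the Shi-Tomasi score: the smaller eigenvalue of the local gradient structure tensor, which is large only where intensity changes in two directions. It is an illustration under stated assumptions, not the authors' pipeline: the function name `shi_tomasi_scores`, the central-difference gradients, and the unweighted square window are choices made for this example. In practice a library routine such as OpenCV's `cv2.goodFeaturesToTrack` would normally be used on real images.

```python
from math import sqrt


def shi_tomasi_scores(img, window=1):
    """Per-pixel Shi-Tomasi corner score for a 2D list of intensities.

    Assumed simplifications: central-difference gradients and an
    unweighted (2*window+1)^2 summation window.
    """
    h, w = len(img), len(img[0])
    ix = [[0.0] * w for _ in range(h)]
    iy = [[0.0] * w for _ in range(h)]
    # Image gradients; border pixels are left at zero.
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            ix[y][x] = (img[y][x + 1] - img[y][x - 1]) / 2.0
            iy[y][x] = (img[y + 1][x] - img[y - 1][x]) / 2.0

    scores = [[0.0] * w for _ in range(h)]
    for y in range(window, h - window):
        for x in range(window, w - window):
            a = b = c = 0.0  # structure tensor [[a, b], [b, c]]
            for dy in range(-window, window + 1):
                for dx in range(-window, window + 1):
                    gx, gy = ix[y + dy][x + dx], iy[y + dy][x + dx]
                    a += gx * gx
                    b += gx * gy
                    c += gy * gy
            # Smaller eigenvalue of the 2x2 symmetric tensor.
            scores[y][x] = (a + c) / 2.0 - sqrt(((a - c) / 2.0) ** 2 + b * b)
    return scores


# Synthetic test image: a bright 8x8 square on a dark 16x16 background.
image = [[1.0 if 4 <= y <= 11 and 4 <= x <= 11 else 0.0 for x in range(16)]
         for y in range(16)]
scores = shi_tomasi_scores(image)
```

On this synthetic square, the score at a corner such as `scores[4][4]` is positive, while a point in the middle of an edge (`scores[4][8]`) or in a flat region (`scores[8][8]`) scores zero. The Harris detector uses the same structure tensor but ranks pixels by `det - k * trace**2` instead of the smaller eigenvalue.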
