Raphael T. Haftka

Testing Surrogate-Based Optimization with the Fortified Branin-Hoo Extended to Four Dimensions

Jul 16, 2021

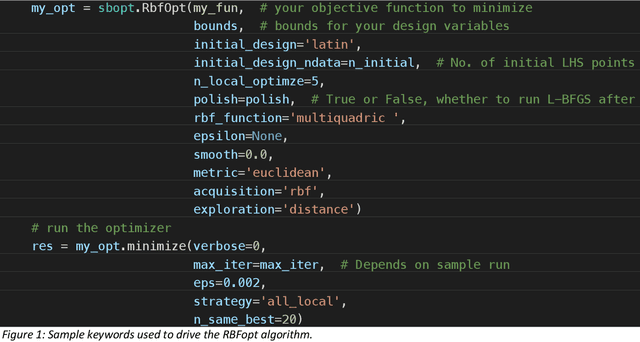

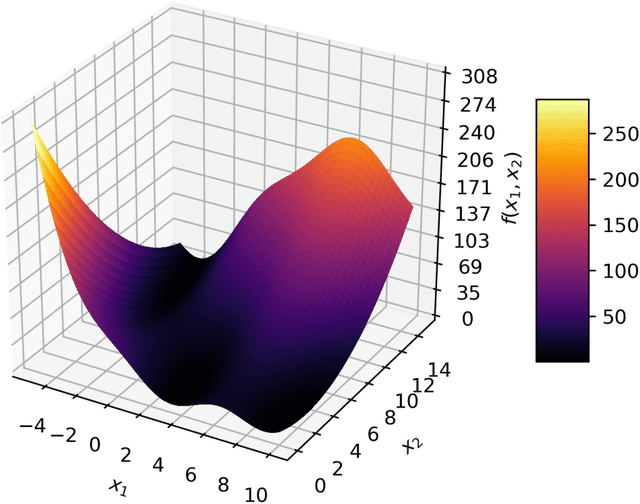

Abstract: Some popular functions used to test global optimization algorithms have multiple local optima, all with the same value, making them all global optima. It is easy to make them more challenging by fortifying them, that is, by adding a localized bump at the location of one of the optima. In previous work the authors illustrated this for the Branin-Hoo function and the popular differential evolution algorithm, showing that the fortified Branin-Hoo required an order of magnitude more function evaluations. This paper examines the effect of fortifying the Branin-Hoo function on surrogate-based optimization, which usually proceeds by adaptive sampling. Two algorithms are considered: the EGO algorithm, which is based on a Gaussian process (GP), and an algorithm based on radial basis functions (RBF). EGO is found to be more frugal in terms of the number of function evaluations required to identify the correct basin, but it is expensive to run on a desktop, which limited the number of times the runs could be repeated to establish sound statistics on the number of required function evaluations. The RBF algorithm was cheaper to run, providing sounder statistics on performance. A four-dimensional version of the Branin-Hoo function was introduced in order to assess the effect of dimensionality. The difference between the ordinary function and the fortified one was found to be much more pronounced for the four-dimensional function than for the two-dimensional one.
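The fortification idea can be illustrated with a minimal sketch. The two-dimensional Branin-Hoo function below uses its standard constants and has three minima of equal value. The bump is an assumption made for illustration: the abstract does not give its shape or size, so a Gaussian is subtracted at one optimum, and the names `fortified_branin_hoo`, `depth`, and `width` are hypothetical. The four-dimensional extension is not defined in the abstract and is not attempted here.

```python
import math

# Standard Branin-Hoo constants.
A = 1.0
B = 5.1 / (4.0 * math.pi ** 2)
C = 5.0 / math.pi
R = 6.0
S = 10.0
T = 1.0 / (8.0 * math.pi)

# The three minima of the ordinary function, all with the same value.
OPTIMA = [(-math.pi, 12.275), (math.pi, 2.275), (3.0 * math.pi, 2.475)]


def branin_hoo(x1, x2):
    """Ordinary two-dimensional Branin-Hoo function."""
    return A * (x2 - B * x1 ** 2 + C * x1 - R) ** 2 + S * (1.0 - T) * math.cos(x1) + S


def fortified_branin_hoo(x1, x2, center=OPTIMA[0], depth=1.0, width=1.0):
    """Branin-Hoo with a localized bump at one optimum.

    Assumption: the bump is a Gaussian of hypothetical depth and width,
    subtracted so that the chosen optimum becomes the single global minimum.
    """
    d2 = (x1 - center[0]) ** 2 + (x2 - center[1]) ** 2
    return branin_hoo(x1, x2) - depth * math.exp(-d2 / (2.0 * width ** 2))


if __name__ == "__main__":
    for point in OPTIMA:
        print(point, branin_hoo(*point), fortified_branin_hoo(*point))
```

With these settings the ordinary function takes the same value at all three points, while the fortified one is lower only at the chosen center, so an optimizer has to find that particular basin rather than any of the three.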

Classifying Online Dating Profiles on Tinder using FaceNet Facial Embeddings

Mar 12, 2018

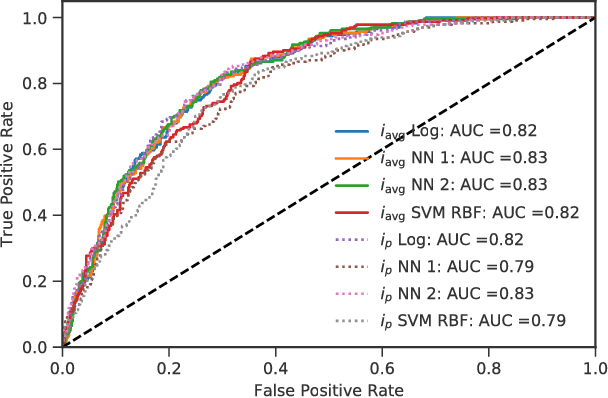

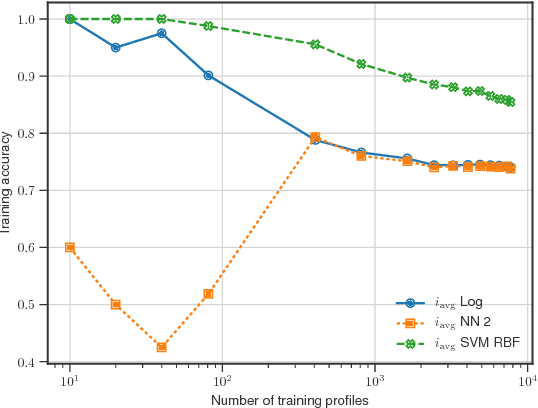

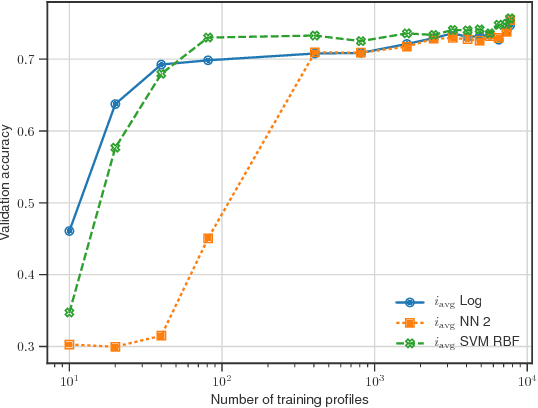

Abstract: A method to produce personalized classification models to automatically review online dating profiles on Tinder is proposed, based on the user's historical preference. The method takes advantage of a FaceNet facial classification model to extract features which may be related to facial attractiveness. The embeddings from a FaceNet model were used as the features to describe an individual's face. A user reviewed 8,545 online dating profiles. For each reviewed online dating profile, a feature set was constructed from the profile images which contained just one face. Two approaches are presented to go from the set of features for each face to a set of profile features. A simple logistic regression trained on the embeddings from just 20 profiles could obtain a 65% validation accuracy. A point of diminishing marginal returns was identified at around 80 profiles, where the model accuracy was 73%; beyond that point, accuracy improved only marginally even after a significant number of additional profiles were reviewed.
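The pipeline described above (per-face features combined into a profile feature, then logistic regression) can be sketched as follows. This is a minimal sketch under stated assumptions: the abstract does not say what the two aggregation approaches are, so averaging the face embeddings is used here as one plausible choice, the embeddings are toy low-dimensional lists rather than real FaceNet outputs, and all function names are hypothetical.

```python
import math


def mean_embedding(face_embeddings):
    """Assumed aggregation: average the per-face vectors of one profile."""
    n = len(face_embeddings)
    dim = len(face_embeddings[0])
    return [sum(e[i] for e in face_embeddings) / n for i in range(dim)]


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def train_logistic(features, labels, lr=0.5, epochs=200):
    """Fit logistic regression weights by batch gradient descent."""
    dim = len(features[0])
    w = [0.0] * dim
    b = 0.0
    n = len(features)
    for _ in range(epochs):
        grad_w = [0.0] * dim
        grad_b = 0.0
        for x, y in zip(features, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for i in range(dim):
                grad_w[i] += err * x[i]
            grad_b += err
        w = [wi - lr * gi / n for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x):
    """Return 1 (like) or 0 (dislike) for one profile feature vector."""
    return 1 if sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) >= 0.5 else 0


if __name__ == "__main__":
    # Toy data: each profile is a list of made-up 2-D face embeddings.
    profiles = [
        [[-1.0, 0.2], [-1.2, 0.0]],
        [[-0.8, -0.1]],
        [[1.1, 0.1], [0.9, -0.2]],
        [[1.0, 0.0]],
    ]
    labels = [0, 0, 1, 1]
    features = [mean_embedding(p) for p in profiles]
    w, b = train_logistic(features, labels)
    print([predict(w, b, f) for f in features])
```

Averaging keeps the profile feature the same length as a single face embedding regardless of how many usable images a profile has, which is why it is a convenient stand-in here.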
