R. Miikkulainen

Characterizing the Effect of Sentence Context on Word Meanings: Mapping Brain to Behavior

Aug 06, 2020

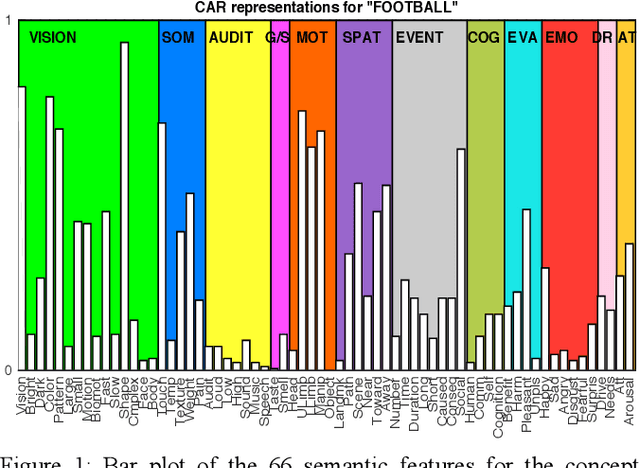

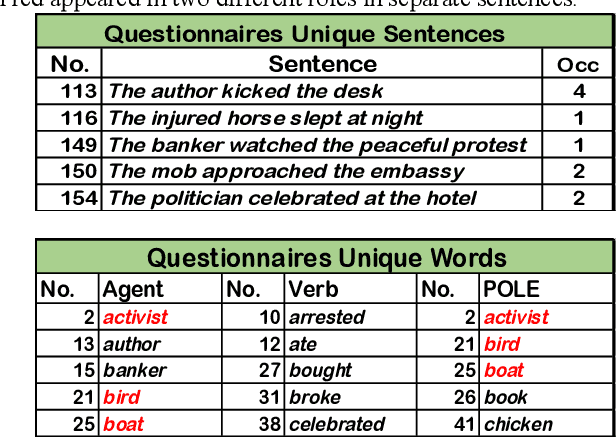

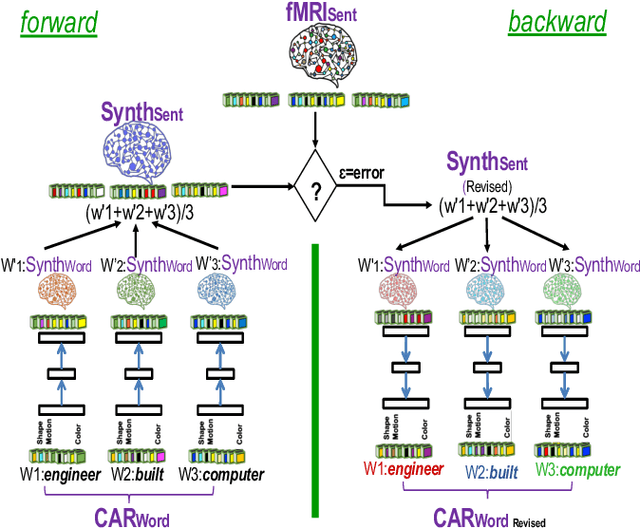

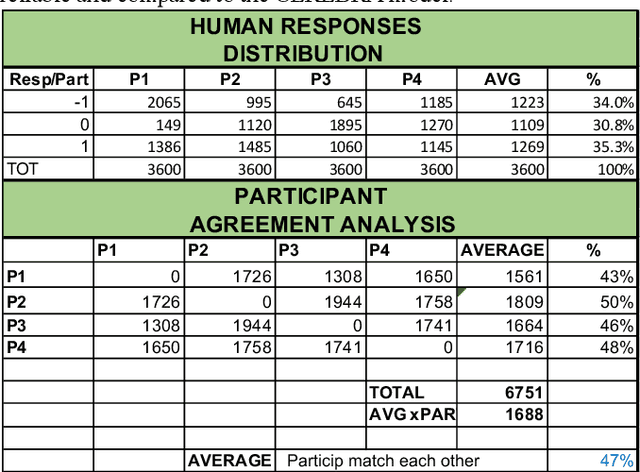

Abstract: Semantic feature models have become a popular tool for prediction and interpretation of fMRI data. In particular, prior work has shown that differences in the fMRI patterns in sentence reading can be explained by context-dependent changes in the semantic feature representations of the words. However, whether the subjects are aware of such changes and agree with them has been an open question. This paper aims to answer this question through a human-subject study. Subjects were asked to judge how the words change from their generic meaning when the words are used in specific sentences. The judgements were consistent with the model predictions well above chance. Thus, the results support the hypothesis that word meanings change systematically depending on sentence context.

Using context to make gas classifiers robust to sensor drift

Mar 16, 2020

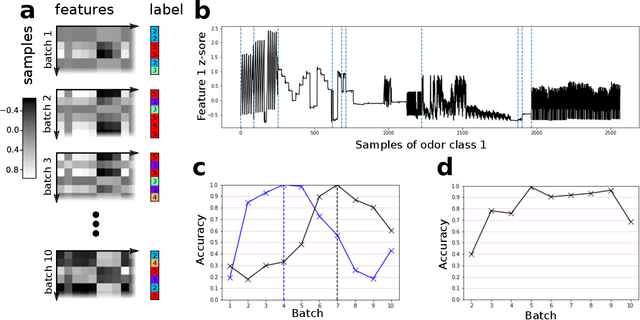

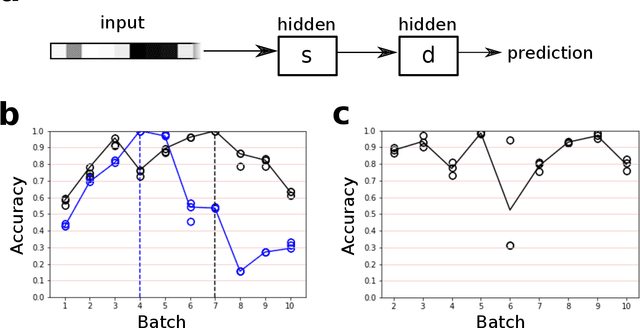

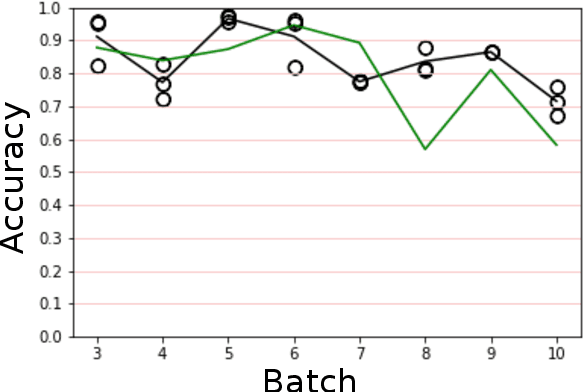

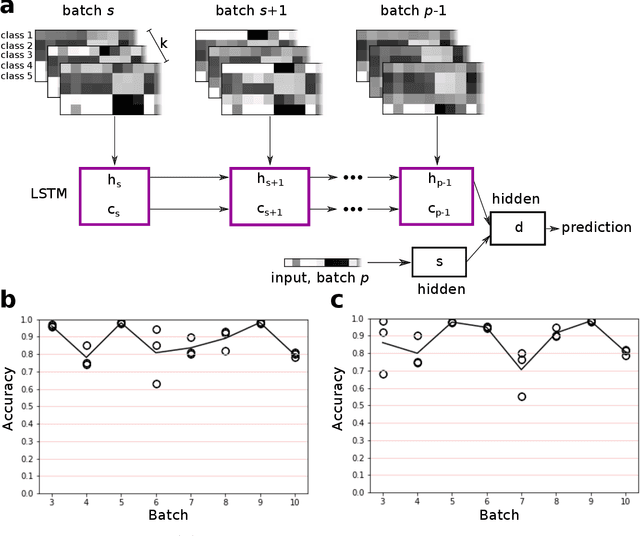

Abstract: The interaction of a gas particle with a metal-oxide based gas sensor changes the sensor irreversibly. The compounded changes, referred to as sensor drift, are unstable, but adaptive algorithms can sustain the accuracy of odor sensor systems. Here we focus on extending the lifetime of sensor systems without additional data acquisition by transferring knowledge from one time window to a subsequent one after drift has occurred. To support generalization across sensor states, we introduce a context-based neural network model which forms a latent representation of sensor state. We tested our models on classifying samples taken from unseen subsequent time windows and found favorable accuracy compared to drift-naive and ensemble methods on a gas sensor array drift dataset. By reducing the effect that sensor drift has on classification accuracy, context-based models may extend the effective lifetime of gas identification systems in practical settings.

Competitive Coevolution through Evolutionary Complexification

Jun 30, 2011

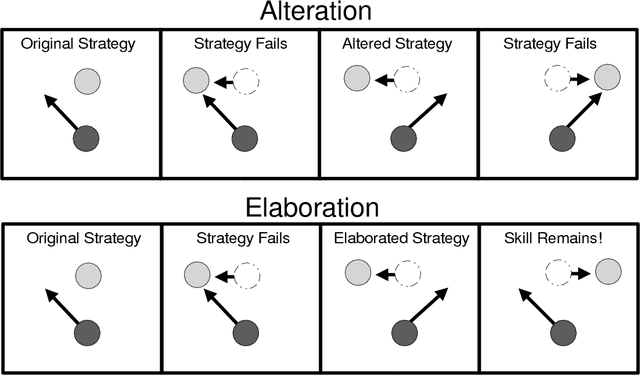

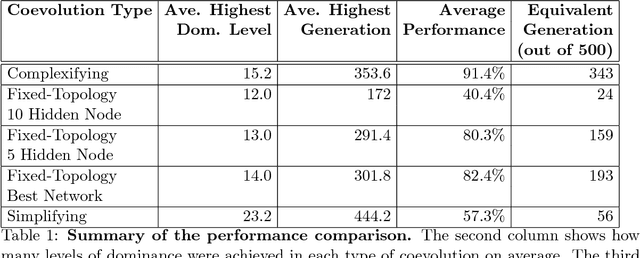

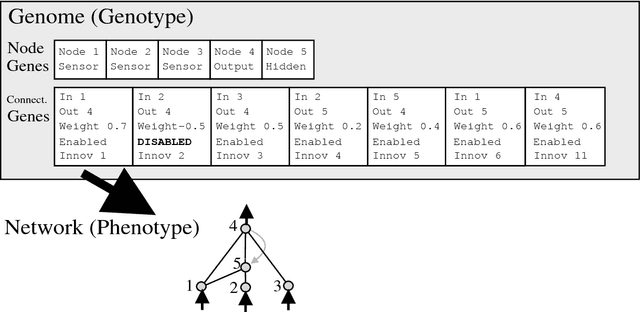

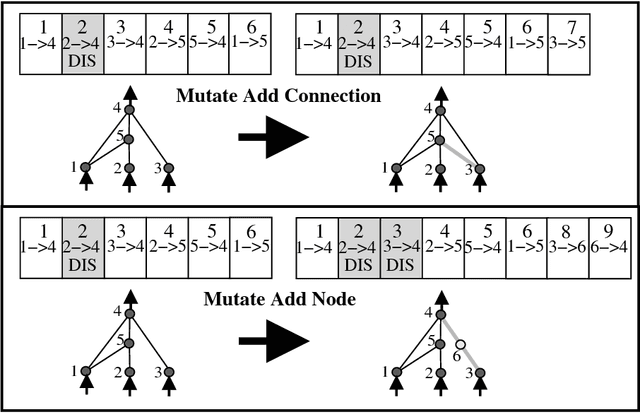

Abstract: Two major goals in machine learning are the discovery and improvement of solutions to complex problems. In this paper, we argue that complexification, i.e., the incremental elaboration of solutions through adding new structure, achieves both these goals. We demonstrate the power of complexification through the NeuroEvolution of Augmenting Topologies (NEAT) method, which evolves increasingly complex neural network architectures. NEAT is applied to an open-ended coevolutionary robot duel domain where robot controllers compete head to head. Because the robot duel domain supports a wide range of strategies, and because coevolution benefits from an escalating arms race, it serves as a suitable testbed for studying complexification. When compared to the evolution of networks with fixed structure, complexifying evolution discovers significantly more sophisticated strategies. The results suggest that in order to discover and improve complex solutions, evolution, and search in general, should be allowed to complexify as well as optimize.
