R. K. Agrawal

IterMiUnet: A lightweight architecture for automatic blood vessel segmentation

Aug 02, 2022

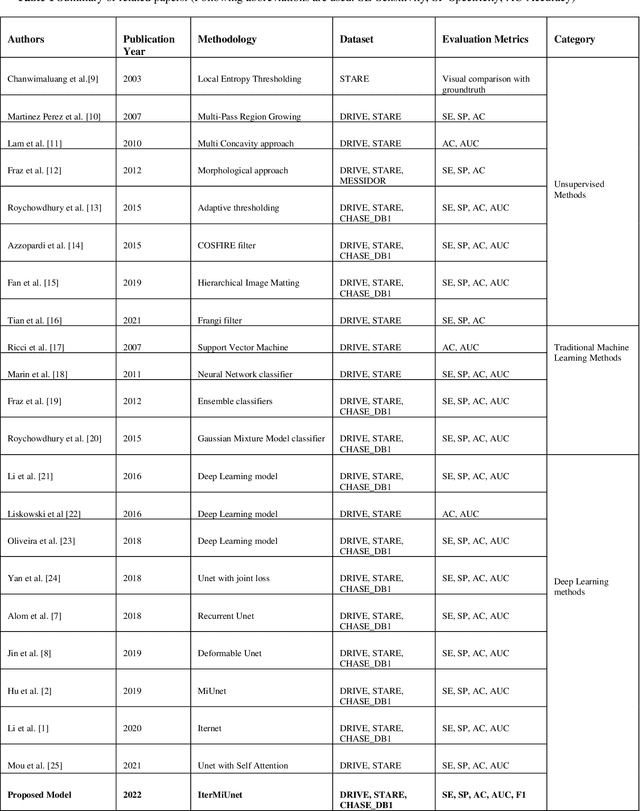

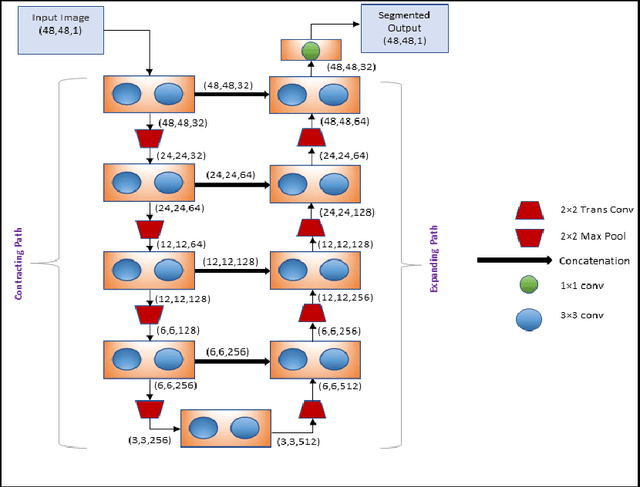

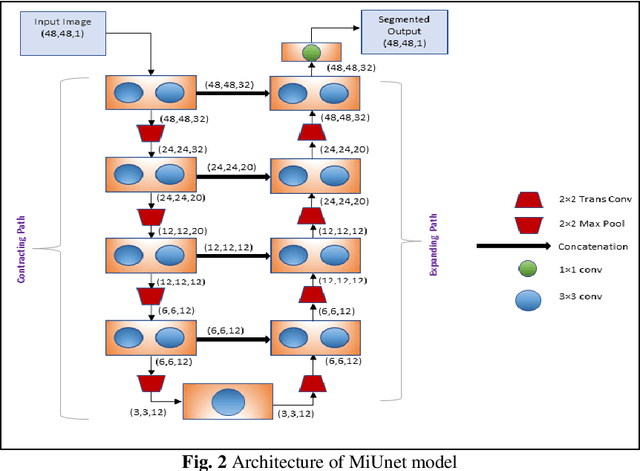

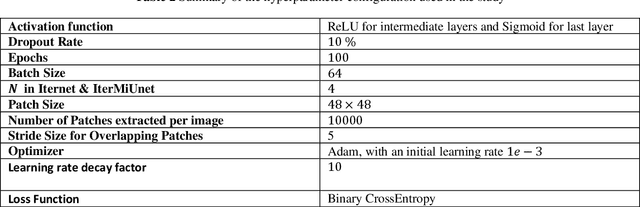

Abstract: The automatic segmentation of blood vessels in fundus images can help analyze the condition of the retinal vasculature, which is crucial for identifying systemic diseases such as hypertension and diabetes. Despite the success of deep learning-based models in this segmentation task, most of them are heavily parametrized and thus have limited use in practical applications. This paper proposes IterMiUnet, a new lightweight convolution-based segmentation model that requires significantly fewer parameters yet delivers performance similar to existing models. The model exploits the strong segmentation capabilities of the Iternet architecture but overcomes its heavily parametrized nature by incorporating the encoder-decoder structure of the MiUnet model within it. The new model thus reduces parameters without compromising the network's depth, which is necessary to learn abstract hierarchical concepts in deep models. This lightweight segmentation model speeds up training and inference and is potentially helpful in the medical domain, where data is scarce and heavily parametrized models therefore tend to overfit. The proposed model was evaluated on three publicly available datasets: DRIVE, STARE, and CHASE-DB1. Cross-training and inter-rater variability evaluations were also performed. The proposed model has considerable potential as a tool for the early diagnosis of many diseases.

PSO based Neural Networks vs. Traditional Statistical Models for Seasonal Time Series Forecasting

Feb 26, 2013

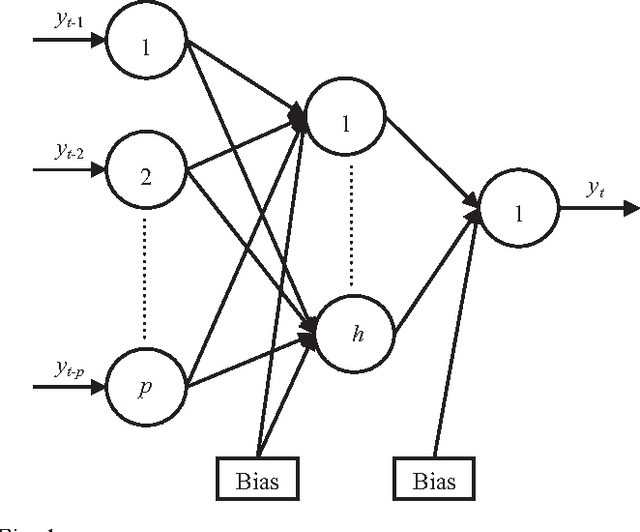

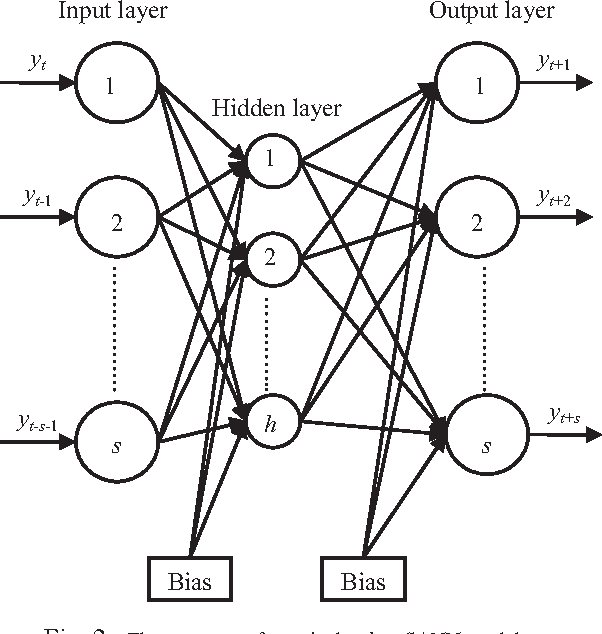

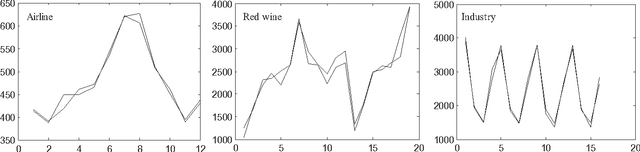

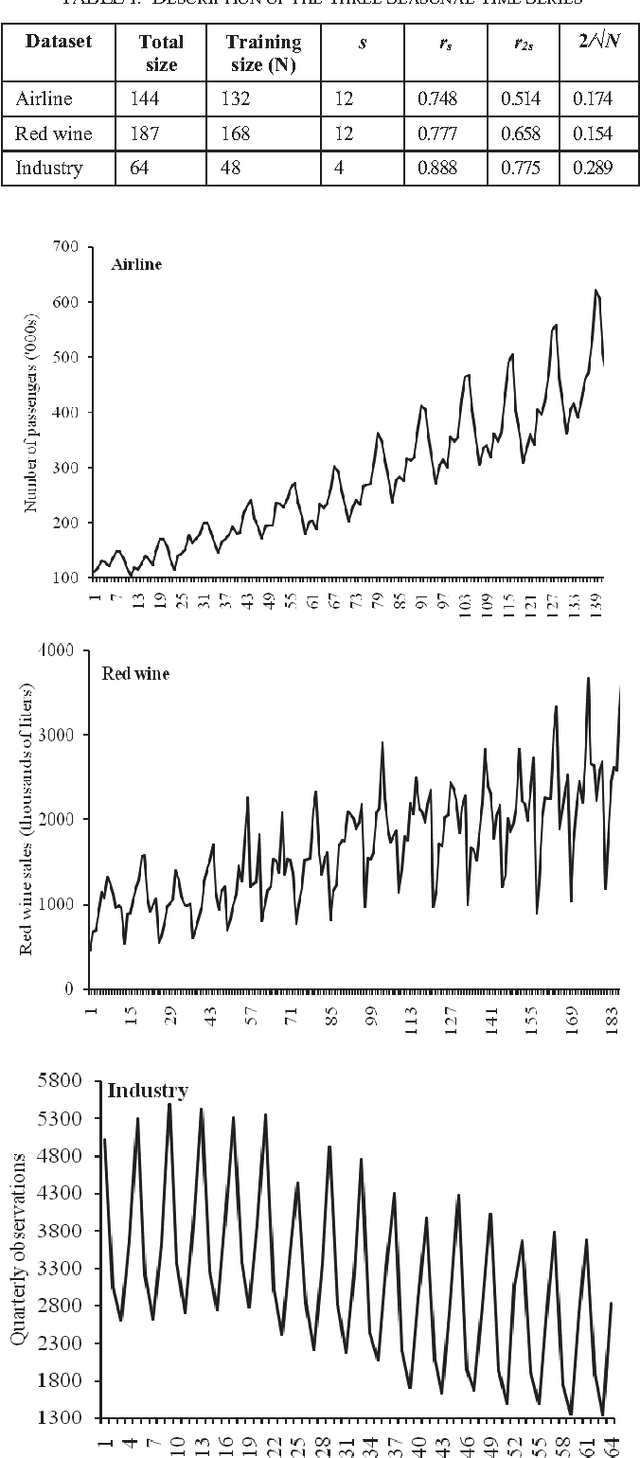

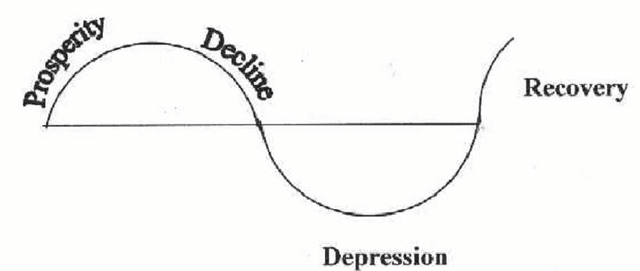

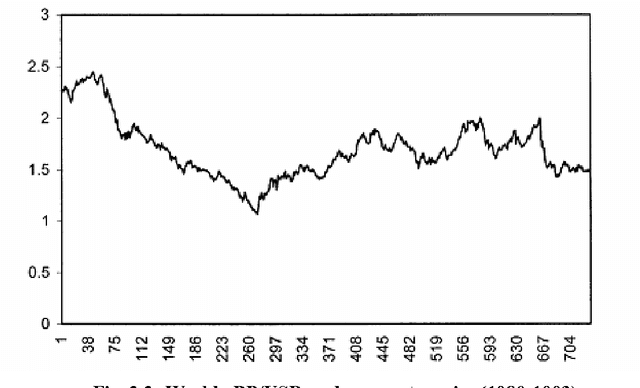

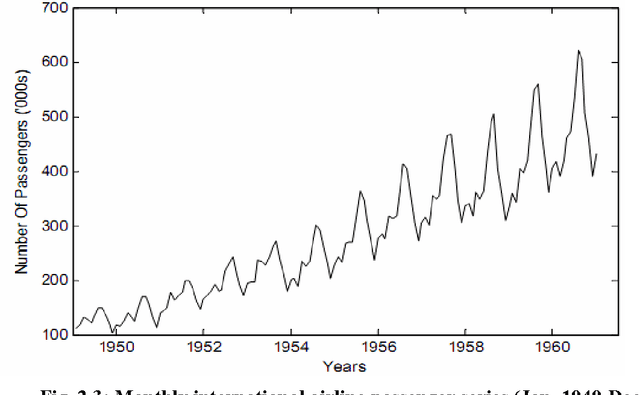

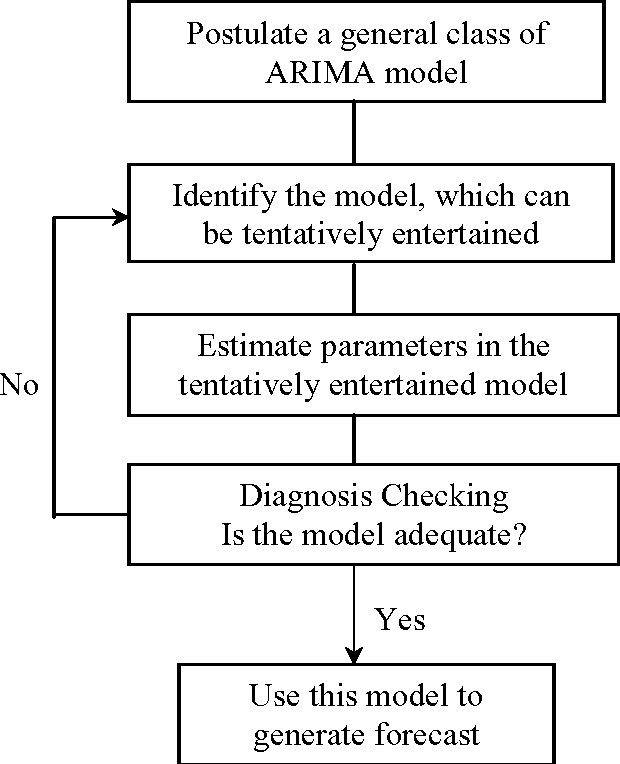

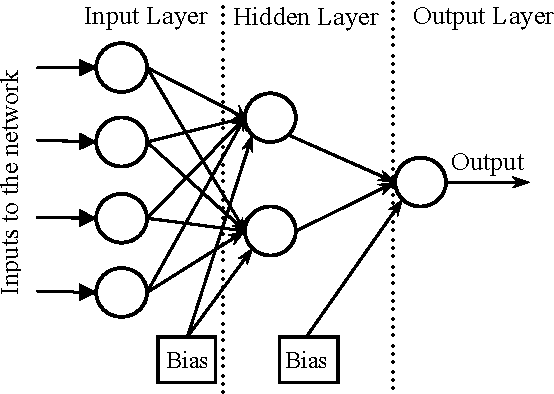

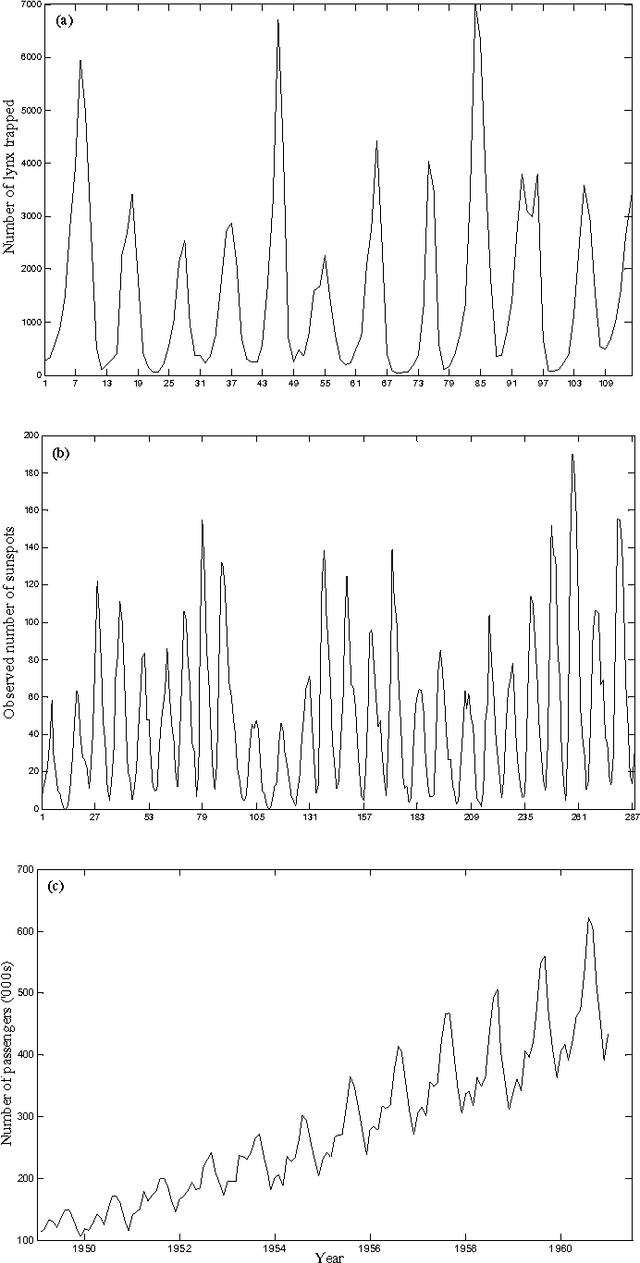

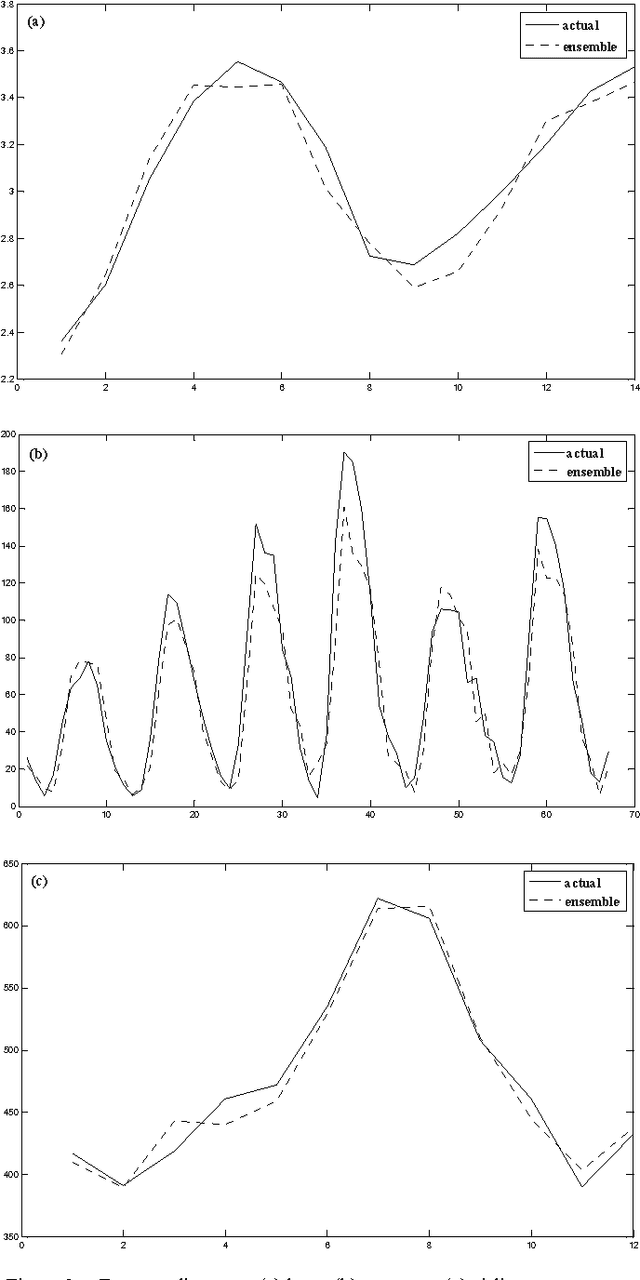

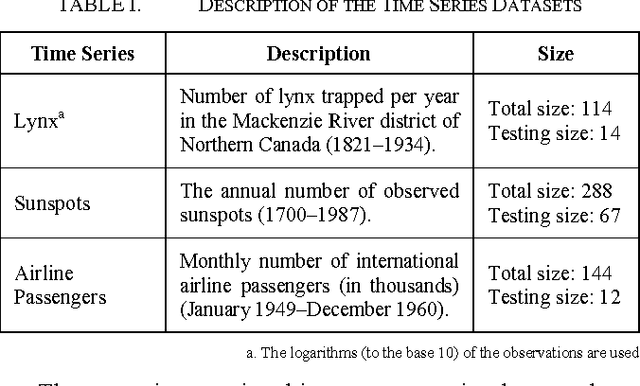

Abstract: Seasonality is a distinctive characteristic that is often observed in practical time series. Artificial Neural Networks (ANNs) are a promising class of models for efficiently recognizing and forecasting seasonal patterns. In this paper, the Particle Swarm Optimization (PSO) approach is used to enhance the forecasting strengths of feedforward ANN (FANN) as well as Elman ANN (EANN) models for seasonal data. Three popular versions of the basic PSO algorithm, viz. Trelea-I, Trelea-II and Clerc-Type1, are considered here. The empirical analysis is conducted on three real-world seasonal time series. Results clearly show that each version of the PSO algorithm achieves notably better forecasting accuracy than the standard Backpropagation (BP) training method for both FANN and EANN models. The neural network forecasting results are also compared with those from three traditional statistical models, viz. Seasonal Autoregressive Integrated Moving Average (SARIMA), Holt-Winters (HW) and Support Vector Machine (SVM). The comparison demonstrates that both PSO and BP based neural networks outperform the SARIMA, HW and SVM models on all three time series datasets. The forecasting performance of the ANNs is further improved by combining the outputs from the three PSO based models.

* 4 figures, 4 tables, 31 references, conference proceedings
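
The abstract above uses PSO in place of backpropagation to search for network weights. As a rough illustration of the underlying idea only, here is a minimal sketch of a generic inertia-weight PSO that minimizes an arbitrary objective. The function name `pso_minimize` and the parameter values (`w`, `c1`, `c2`, swarm size, bounds) are assumptions chosen for illustration; they are not the Trelea-I, Trelea-II or Clerc-Type1 settings from the paper.

```python
import random

def pso_minimize(objective, dim, n_particles=20, n_iters=200,
                 w=0.7, c1=1.5, c2=1.5, bounds=(-5.0, 5.0), seed=0):
    """Generic inertia-weight PSO (illustrative parameters, not the paper's)."""
    rng = random.Random(seed)
    lo, hi = bounds
    # Random initial positions, zero initial velocities.
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    # Each particle remembers its own best position; the swarm shares a global best.
    pbest = [p[:] for p in pos]
    pbest_val = [objective(p) for p in pos]
    g = min(range(n_particles), key=pbest_val.__getitem__)
    gbest, gbest_val = pbest[g][:], pbest_val[g]

    for _ in range(n_iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Velocity = inertia + pull toward personal best + pull toward global best.
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                pos[i][d] += vel[i][d]
            val = objective(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val

# Toy usage: minimize the sphere function in two dimensions.
best_position, best_value = pso_minimize(lambda x: sum(v * v for v in x), dim=2)
```

To train a network in this style, the objective would presumably map a flat weight vector to the forecast error on the training set; that wiring is not shown here.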

An Introductory Study on Time Series Modeling and Forecasting

Feb 26, 2013

Abstract: Time series modeling and forecasting is of fundamental importance to various practical domains, and the subject has therefore seen a great deal of active research over several years. Many important models have been proposed in the literature for improving the accuracy and effectiveness of time series forecasting. The aim of this dissertation is to present a concise description of some popular time series forecasting models used in practice, together with their salient features. We describe three important classes of time series models, viz. stochastic, neural network and SVM based models, along with their inherent forecasting strengths and weaknesses. We also discuss basic issues related to time series modeling, such as stationarity, parsimony and overfitting. The discussion of the different models is supported by experimental forecast results on six real time series datasets. While fitting a model to a dataset, special care is taken to select the most parsimonious one. To evaluate forecast accuracy and to compare the different models fitted to a time series, we use five performance measures, viz. MSE, MAD, RMSE, MAPE and Theil's U-statistic. For each of the six datasets, we show the forecast diagram, which graphically depicts the closeness between the original and forecasted observations. To support the authenticity and clarity of the discussion, we draw on published research from reputed journals and on some standard books.

* 67 pages, 29 figures, 33 references, book
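
The five performance measures named in the abstract above can be sketched as follows. This is a minimal illustration, not the dissertation's code: the function name `forecast_errors` is hypothetical, MAD is taken to be the mean absolute deviation of the forecast errors, and Theil's U is computed in its U1 form (RMSE divided by the sum of the root mean squares of the actual and forecasted series). The abstract does not say which Theil variant is used, so that choice is an assumption.

```python
import math

def forecast_errors(actual, forecast):
    """Return MSE, MAD, RMSE, MAPE (%) and Theil's U (U1 form, assumed)."""
    if len(actual) != len(forecast) or not actual:
        raise ValueError("series must be non-empty and of equal length")
    n = len(actual)
    errors = [a - f for a, f in zip(actual, forecast)]

    mse = sum(e * e for e in errors) / n
    mad = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(mse)
    # MAPE is undefined when an actual value is zero; this sketch assumes none are.
    mape = 100.0 * sum(abs(e / a) for e, a in zip(errors, actual)) / n

    rms_actual = math.sqrt(sum(a * a for a in actual) / n)
    rms_forecast = math.sqrt(sum(f * f for f in forecast) / n)
    theil_u = rmse / (rms_actual + rms_forecast)

    return {"MSE": mse, "MAD": mad, "RMSE": rmse, "MAPE": mape, "TheilU": theil_u}

# Toy usage with made-up numbers (not data from the dissertation).
scores = forecast_errors([100.0, 200.0, 300.0, 400.0],
                         [110.0, 190.0, 310.0, 390.0])
```

MSE, MAD and RMSE depend on the scale of the series, while MAPE and Theil's U are scale-free, which is one reason to report several measures side by side.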

Combining Multiple Time Series Models Through A Robust Weighted Mechanism

Feb 26, 2013

Abstract: Improving time series forecasting accuracy by combining multiple models is an important and dynamic area of research. As a result, various forecast combination methods have been developed in the literature. However, most of them are based on simple linear ensemble strategies and hence ignore the possible relationships between two or more participating models. In this paper, we propose a robust weighted nonlinear ensemble technique which considers the individual forecasts from different models as well as the correlations among them while combining. The proposed ensemble is constructed using three well-known forecasting models and is tested on three real-world time series. The proposed scheme is compared with three other widely used linear combination methods in terms of the obtained forecast errors. This comparison shows that our ensemble scheme provides significantly lower forecast errors than each individual model as well as each of the four linear combination methods.

* 6 pages, 3 figures, 2 tables, conference

A Homogeneous Ensemble of Artificial Neural Networks for Time Series Forecasting

Feb 25, 2013

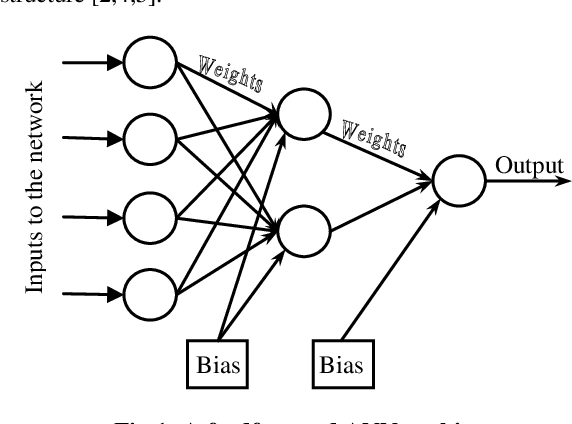

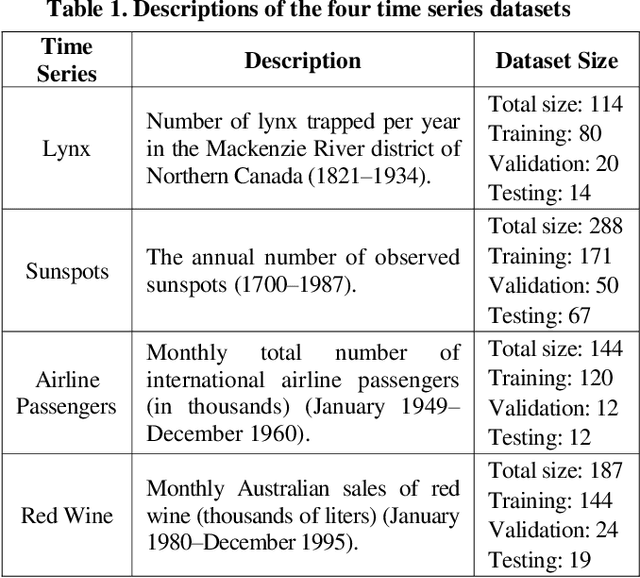

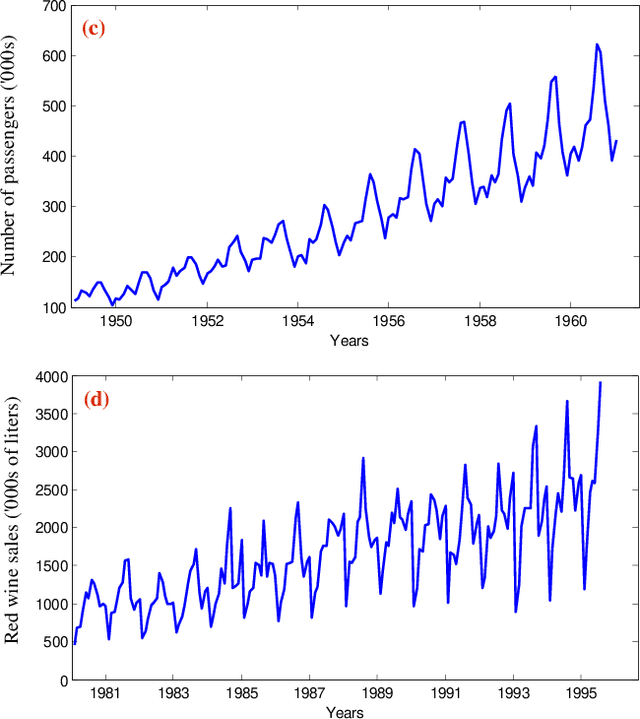

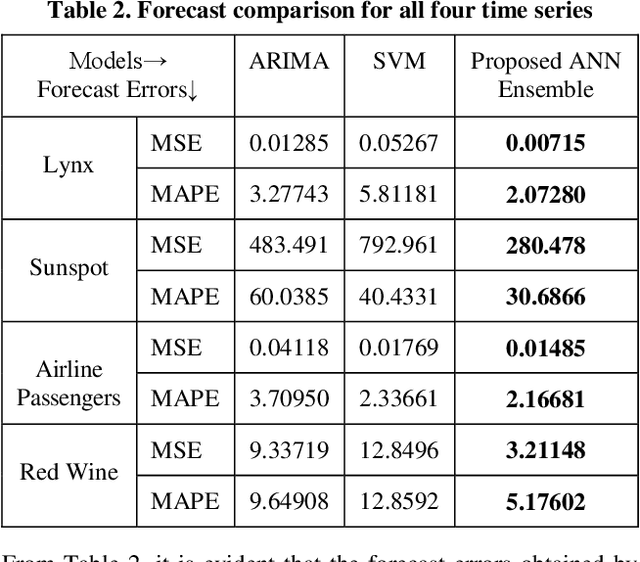

Abstract: Enhancing the robustness and accuracy of time series forecasting models is an active area of research. Recently, Artificial Neural Networks (ANNs) have found extensive applications in many practical forecasting problems. However, the standard backpropagation ANN training algorithm has some critical issues: a slow convergence rate, frequent convergence to a local minimum, complex error surfaces, and the lack of proper methods for selecting training parameters. To overcome these drawbacks, various improved training methods have been developed in the literature, but none of them can be guaranteed to be the best for all problems. In this paper, we propose a novel weighted ensemble scheme which intelligently combines multiple training algorithms to increase ANN forecast accuracy. The weight for each training algorithm is determined from the performance of the corresponding ANN model on the validation dataset. Experimental results on four important time series show that our proposed technique reduces the mentioned shortcomings of individual ANN training algorithms to a great extent. It also achieves significantly better forecast accuracy than two other popular statistical models.

* 8 pages, 4 figures, 2 tables, 26 references, international journal
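
The abstract above states only that each training algorithm's weight comes from its model's performance on the validation set. One simple way to realize that idea is sketched below, assuming weights inversely proportional to validation error; this weighting rule and the names `inverse_error_weights` and `combine_forecasts` are illustrative assumptions, not the scheme from the paper.

```python
def inverse_error_weights(validation_errors):
    """Weights proportional to 1/error, normalized to sum to 1 (assumed rule)."""
    if not validation_errors or any(e <= 0 for e in validation_errors):
        raise ValueError("validation errors must be positive")
    inverse = [1.0 / e for e in validation_errors]
    total = sum(inverse)
    return [v / total for v in inverse]

def combine_forecasts(forecasts, weights):
    """Weighted sum of per-model forecasts, one value per forecast step."""
    if len(forecasts) != len(weights) or not forecasts:
        raise ValueError("need one weight per model")
    horizon = len(forecasts[0])
    if any(len(f) != horizon for f in forecasts):
        raise ValueError("all forecasts must cover the same horizon")
    return [sum(w * f[t] for w, f in zip(weights, forecasts))
            for t in range(horizon)]

# Toy usage: three models with made-up validation errors and forecasts.
weights = inverse_error_weights([1.0, 2.0, 4.0])
combined = combine_forecasts([[7.0, 14.0], [14.0, 7.0], [7.0, 7.0]], weights)
```

With this rule, a model with half the validation error of another receives twice its weight, so weaker training runs still contribute but cannot dominate the combined forecast.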
