R. Arora

The Physical Systems Behind Optimization Algorithms

Oct 25, 2018

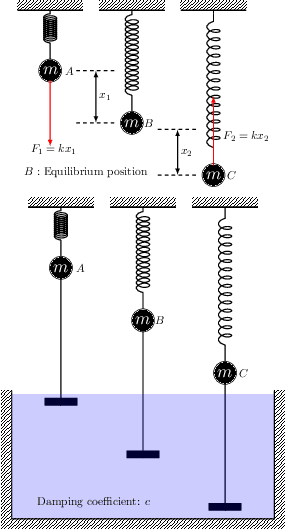

Abstract: We use differential-equation-based approaches to provide some physics insights into analyzing the dynamics of popular optimization algorithms in machine learning. In particular, we study gradient descent, proximal gradient descent, coordinate gradient descent, proximal coordinate gradient, and Newton's methods as well as their Nesterov's accelerated variants in a unified framework motivated by a natural connection of optimization algorithms to physical systems. Our analysis is applicable to more general algorithms and optimization problems beyond convexity and strong convexity, e.g., Polyak-Łojasiewicz and error bound conditions (possibly nonconvex).

Identifying Nominals with No Head Match Co-references Using Deep Learning

Oct 02, 2017

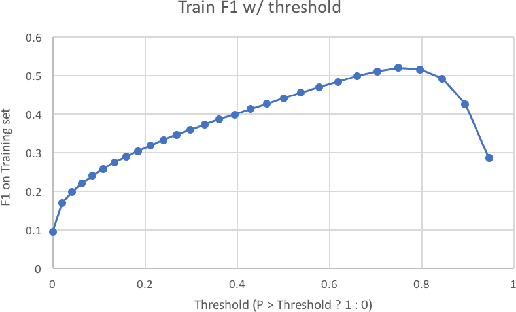

Abstract: Identifying nominals with no head match is a long-standing challenge in coreference resolution, with current systems performing significantly worse than humans. In this paper we present a new neural network architecture which outperforms the current state-of-the-art system on the English portion of the CoNLL 2012 Shared Task. This is done by using a logistic regression on features produced by two submodels, one of which has the architecture proposed in [CM16a] while the other combines domain-specific embeddings of the antecedent and the mention. We also propose some simple additional features which seem to improve performance for all models substantially, increasing F1 by almost 4% on basic logistic regression and other complex models.
