Pranav M. Pawar

Multi-model approach for autonomous driving: A comprehensive study on traffic sign-, vehicle- and lane detection and behavioral cloning

Mar 10, 2026

Abstract: Deep learning and computer vision techniques have become increasingly important in the development of self-driving cars. These techniques play a crucial role in enabling self-driving cars to perceive and understand their surroundings, allowing them to navigate safely and make decisions in real time. Using neural networks, self-driving cars can accurately identify and classify objects such as pedestrians, other vehicles, and traffic signals. By applying deep learning to data from sensors such as cameras and radar, self-driving cars can predict the likely movement of other objects and plan their own actions accordingly. This study presents a novel approach to enhancing the performance of self-driving cars by using pre-trained and custom-made neural networks for key tasks, including traffic sign classification, vehicle detection, lane detection, and behavioral cloning. The methodology integrates several techniques, such as geometric and color transformations for data augmentation, image normalization, and transfer learning for feature extraction. To evaluate model efficacy, these techniques are applied to diverse datasets, including the German Traffic Sign Recognition Benchmark (GTSRB), road and lane segmentation datasets, vehicle detection datasets, and data collected using the Udacity self-driving car simulator. The primary objective of the work is to review the state of the art in deep learning and computer vision for self-driving cars. The findings indicate that the approach is effective in addressing several challenges related to self-driving cars, such as traffic sign classification, lane prediction, vehicle detection, and behavioral cloning. They also provide insights into improving the robustness and reliability of autonomous systems, paving the way for future research and the deployment of safer and more efficient self-driving technologies.
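To make the preprocessing steps named in the abstract concrete, the following is a minimal sketch of image normalization plus one geometric and one color transformation. The abstract does not specify which transformations or parameters the authors used, so the function names (`normalize`, `augment`), the shift range, and the brightness range are all illustrative assumptions, not the paper's method.

```python
import numpy as np


def normalize(image: np.ndarray) -> np.ndarray:
    """Scale a uint8 H x W x C image to [0, 1], then standardize each channel."""
    x = image.astype(np.float32) / 255.0
    mean = x.mean(axis=(0, 1), keepdims=True)
    std = x.std(axis=(0, 1), keepdims=True)
    # Small epsilon avoids division by zero on constant channels.
    return (x - mean) / (std + 1e-7)


def augment(image: np.ndarray, rng: np.random.Generator,
            max_shift: int = 2,
            brightness_range: tuple = (0.8, 1.2)) -> np.ndarray:
    """Apply a random translation (geometric) and brightness change (color).

    Assumed parameters: max_shift and brightness_range are hypothetical
    defaults chosen for illustration only.
    """
    dy = int(rng.integers(-max_shift, max_shift + 1))
    dx = int(rng.integers(-max_shift, max_shift + 1))
    # np.roll wraps pixels around the border; a real pipeline would
    # more likely pad or crop instead.
    shifted = np.roll(image, shift=(dy, dx), axis=(0, 1))
    factor = rng.uniform(*brightness_range)
    scaled = shifted.astype(np.float32) * factor
    return np.clip(scaled, 0, 255).astype(np.uint8)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Random 32x32 RGB image standing in for a traffic sign crop.
    sign = rng.integers(0, 256, size=(32, 32, 3), dtype=np.uint8)
    batch_item = normalize(augment(sign, rng))
    print(batch_item.shape, batch_item.dtype)
```

Translation is used here rather than a horizontal flip because mirroring can change the meaning of some traffic signs (for example, directional arrows).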

Distributed Deep Reinforcement Learning for Collaborative Spectrum Sharing

Apr 06, 2021

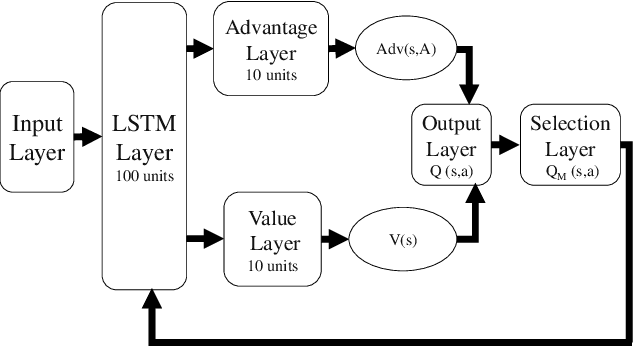

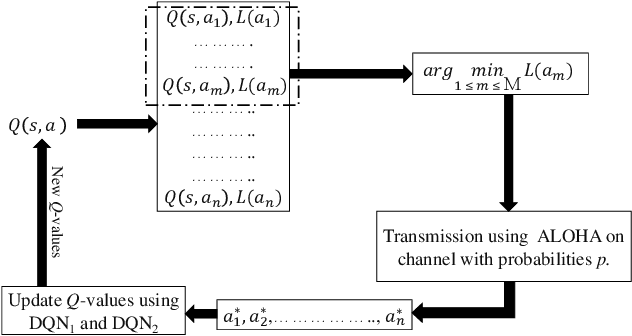

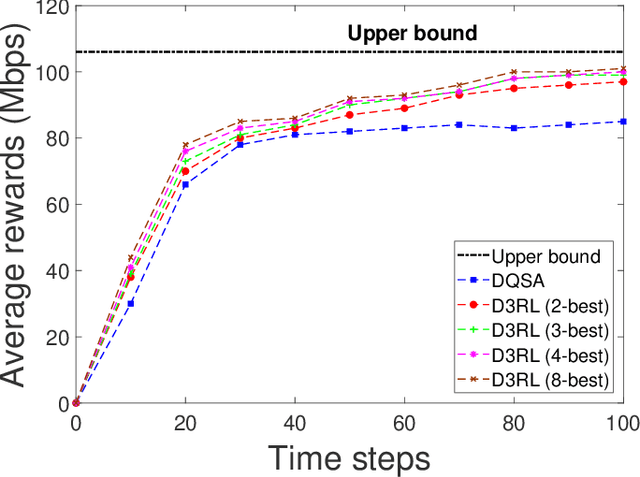

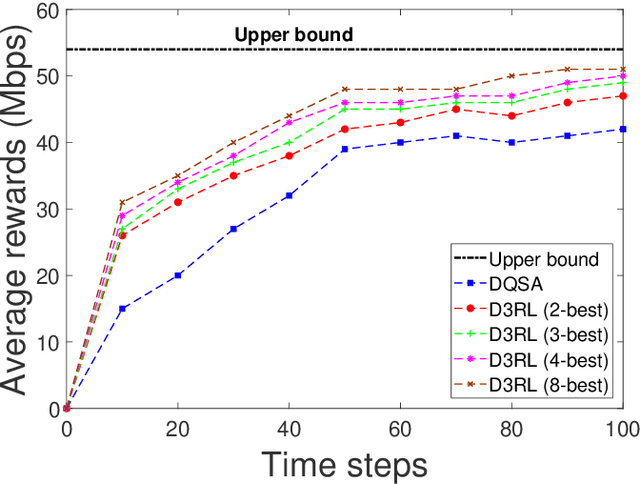

Abstract: Spectrum sharing among users is a fundamental problem in the management of any wireless network. In this paper, we discuss the problem of distributed spectrum collaboration without central management under general unknown channels. Since the cost of communication, coordination, and control increases rapidly with the number of devices and the bandwidth used, there is an obvious need to develop distributed techniques for spectrum collaboration in which no explicit signaling is used. We combine game-theoretic insights with deep Q-learning to provide a novel, asymptotically optimal solution to the spectrum collaboration problem. We propose a deterministic distributed deep reinforcement learning (D3RL) mechanism using a deep Q-network (DQN). It chooses channels using the Q-values and the channel loads: it limits the options available to the user to the few channels with the highest Q-values and, among those, selects the least loaded channel. Using insights from both game theory and combinatorial optimization, we show that this technique is asymptotically optimal for large overloaded networks. The selected channel and the outcome of the transmission are fed back into the training of the deep Q-network so that they are incorporated into the learned Q-values. We also analyzed performance to understand the behavior of D3RL in differ
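The channel selection rule described in the abstract (restrict to the few channels with the highest Q-values, then take the least loaded among them) can be sketched as follows. This is only an illustration under stated assumptions: the function name `select_channel`, the parameter `k`, the tie-breaking rule, and the representation of loads as a plain array are all hypothetical, and the Q-values are passed in directly rather than produced by a DQN.

```python
import numpy as np


def select_channel(q_values, channel_loads, k=3):
    """Pick the least loaded channel among the k channels with highest Q-values.

    q_values and channel_loads are 1-D sequences of equal length, one entry
    per channel. k is an assumed shortlist size; the abstract only says
    "a few channels".
    """
    q = np.asarray(q_values, dtype=float)
    loads = np.asarray(channel_loads, dtype=float)
    if q.ndim != 1 or q.shape != loads.shape:
        raise ValueError("q_values and channel_loads must be 1-D and equal length")
    k = max(1, min(k, q.size))

    # Shortlist: indices of the k highest Q-values, in descending Q order.
    # A stable sort keeps lower channel indices first when Q-values tie.
    shortlist = np.argsort(-q, kind="stable")[:k]

    # Among the shortlist, take the minimum load. np.argmin returns the first
    # minimum, so load ties go to the channel with the higher Q-value
    # (an assumed tie-break, not stated in the abstract).
    return int(shortlist[np.argmin(loads[shortlist])])


if __name__ == "__main__":
    q = [0.1, 0.9, 0.8, 0.7, 0.2]
    load = [0, 5, 3, 1, 0]
    print(select_channel(q, load, k=3))
```

In the usage example, channels 1, 2, and 3 form the shortlist, and channel 3 carries the smallest load among them. Channels 0 and 4 are idle but are excluded because their Q-values are low, which is the point of restricting the choice before comparing loads.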
