Phuong D. Dao

Department of Agricultural Biology, Colorado State University, USA, Graduate Degree Program in Ecology, Colorado State University, USA, School of Global Environmental Sustainability, Colorado State University, USA

LeafNet: A Large-Scale Dataset and Comprehensive Benchmark for Foundational Vision-Language Understanding of Plant Diseases

Feb 17, 2026Abstract:Foundation models and vision-language pre-training have significantly advanced Vision-Language Models (VLMs), enabling multimodal processing of visual and linguistic data. However, their application in domain-specific agricultural tasks, such as plant pathology, remains limited due to the lack of large-scale, comprehensive multimodal image--text datasets and benchmarks. To address this gap, we introduce LeafNet, a comprehensive multimodal dataset, and LeafBench, a visual question-answering benchmark developed to systematically evaluate the capabilities of VLMs in understanding plant diseases. The dataset comprises 186,000 leaf digital images spanning 97 disease classes, paired with metadata, generating 13,950 question-answer pairs spanning six critical agricultural tasks. The questions assess various aspects of plant pathology understanding, including visual symptom recognition, taxonomic relationships, and diagnostic reasoning. Benchmarking 12 state-of-the-art VLMs on our LeafBench dataset, we reveal substantial disparity in their disease understanding capabilities. Our study shows performance varies markedly across tasks: binary healthy--diseased classification exceeds 90\% accuracy, while fine-grained pathogen and species identification remains below 65\%. Direct comparison between vision-only models and VLMs demonstrates the critical advantage of multimodal architectures: fine-tuned VLMs outperform traditional vision models, confirming that integrating linguistic representations significantly enhances diagnostic precision. These findings highlight critical gaps in current VLMs for plant pathology applications and underscore the need for LeafBench as a rigorous framework for methodological advancement and progress evaluation toward reliable AI-assisted plant disease diagnosis. Code is available at https://github.com/EnalisUs/LeafBench.

GreenHyperSpectra: A multi-source hyperspectral dataset for global vegetation trait prediction

Jul 09, 2025Abstract:Plant traits such as leaf carbon content and leaf mass are essential variables in the study of biodiversity and climate change. However, conventional field sampling cannot feasibly cover trait variation at ecologically meaningful spatial scales. Machine learning represents a valuable solution for plant trait prediction across ecosystems, leveraging hyperspectral data from remote sensing. Nevertheless, trait prediction from hyperspectral data is challenged by label scarcity and substantial domain shifts (\eg across sensors, ecological distributions), requiring robust cross-domain methods. Here, we present GreenHyperSpectra, a pretraining dataset encompassing real-world cross-sensor and cross-ecosystem samples designed to benchmark trait prediction with semi- and self-supervised methods. We adopt an evaluation framework encompassing in-distribution and out-of-distribution scenarios. We successfully leverage GreenHyperSpectra to pretrain label-efficient multi-output regression models that outperform the state-of-the-art supervised baseline. Our empirical analyses demonstrate substantial improvements in learning spectral representations for trait prediction, establishing a comprehensive methodological framework to catalyze research at the intersection of representation learning and plant functional traits assessment. All code and data are available at: https://github.com/echerif18/HyspectraSSL.

MOB-GCN: A Novel Multiscale Object-Based Graph Neural Network for Hyperspectral Image Classification

Feb 22, 2025Abstract:This paper introduces a novel multiscale object-based graph neural network called MOB-GCN for hyperspectral image (HSI) classification. The central aim of this study is to enhance feature extraction and classification performance by utilizing multiscale object-based image analysis (OBIA). Traditional pixel-based methods often suffer from low accuracy and speckle noise, while single-scale OBIA approaches may overlook crucial information of image objects at different levels of detail. MOB-GCN overcomes these challenges by extracting and integrating features from multiple segmentation scales, leveraging the Multiresolution Graph Network (MGN) architecture to capture both fine-grained and global spatial patterns. MOB-GCN addresses this issue by extracting and integrating features from multiple segmentation scales to improve classification results using the Multiresolution Graph Network (MGN) architecture that can model fine-grained and global spatial patterns. By constructing a dynamic multiscale graph hierarchy, MOB-GCN offers a more comprehensive understanding of the intricate details and global context of HSIs. Experimental results demonstrate that MOB-GCN consistently outperforms single-scale graph convolutional networks (GCNs) in terms of classification accuracy, computational efficiency, and noise reduction, particularly when labeled data is limited. The implementation of MOB-GCN is publicly available at https://github.com/HySonLab/MultiscaleHSI

A study on the effects of compression on hyperspectral image classification

Apr 01, 2021

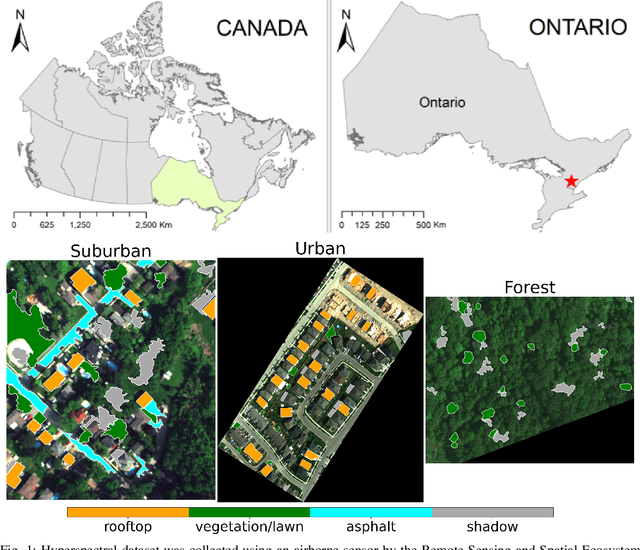

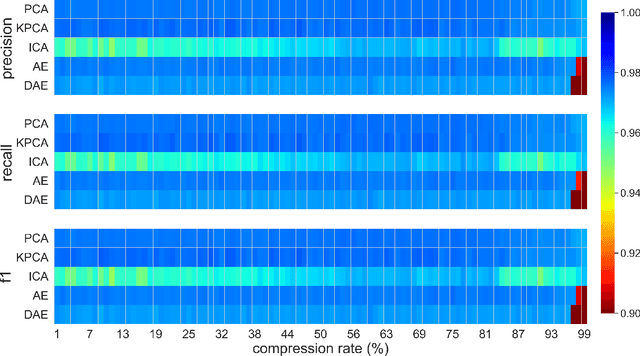

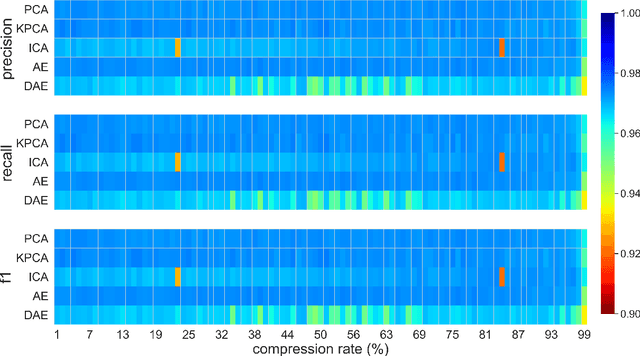

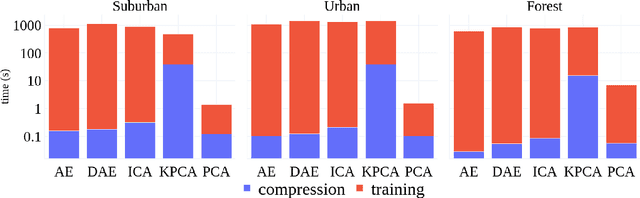

Abstract:This paper presents a systematic study the effects of compression on hyperspectral pixel classification task. We use five dimensionality reduction methods -- PCA, KPCA, ICA, AE, and DAE -- to compress 301-dimensional hyperspectral pixels. Compressed pixels are subsequently used to perform pixel-based classifications. Pixel classification accuracies together with compression method, compression rates, and reconstruction errors provide a new lens to study the suitability of a compression method for the task of pixel-based classification. We use three high-resolution hyperspectral image datasets, representing three common landscape units (i.e. urban, transitional suburban, and forests) collected by the Remote Sensing and Spatial Ecosystem Modeling laboratory of the University of Toronto. We found that PCA, KPCA, and ICA post greater signal reconstruction capability; however, when compression rate is more than 90\% those methods showed lower classification scores. AE and DAE methods post better classification accuracy at 95\% compression rate, however decreasing again at 97\%, suggesting a sweet-spot at the 95\% mark. Our results demonstrate that the choice of a compression method with the compression rate are important considerations when designing a hyperspectral image classification pipeline.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge