Peder A. Olsen

Learning to See More: UAS-Guided Super-Resolution of Satellite Imagery for Precision Agriculture

May 27, 2025

Abstract: Unmanned Aircraft Systems (UAS) and satellites are key data sources for precision agriculture, yet each presents trade-offs. Satellite data offer broad spatial, temporal, and spectral coverage but lack the resolution needed for many precision farming applications, while UAS provide high spatial detail but are limited by coverage and cost, especially for hyperspectral data. This study presents a novel framework that fuses satellite and UAS imagery using super-resolution methods. By integrating data across spatial, spectral, and temporal domains, we leverage the strengths of both platforms cost-effectively. We use estimation of cover crop biomass and nitrogen (N) as a case study to evaluate our approach. By spectrally extending UAS RGB data to the vegetation red edge and near-infrared regions, we generate high-resolution Sentinel-2 imagery and improve biomass and N estimation accuracy by 18% and 31%, respectively. Our results show that UAS data need only be collected from a subset of fields and time points. Farmers can then 1) enhance the spectral detail of UAS RGB imagery; 2) increase the spatial resolution by using satellite data; and 3) extend these enhancements spatially and across the growing season at the frequency of the satellite flights. Our SRCNN-based spectral extension model shows considerable promise for model transferability over other cropping systems in the Upper and Lower Chesapeake Bay regions. Additionally, it remains effective even when cloud-free satellite data are unavailable, relying solely on the UAS RGB input. The spatial extension model produces better biomass and N predictions than models built on raw UAS RGB images. Once trained with targeted UAS RGB data, the spatial extension model allows farmers to stop repeated UAS flights. While we introduce super-resolution advances, the core contribution is a lightweight and scalable system for affordable on-farm use.

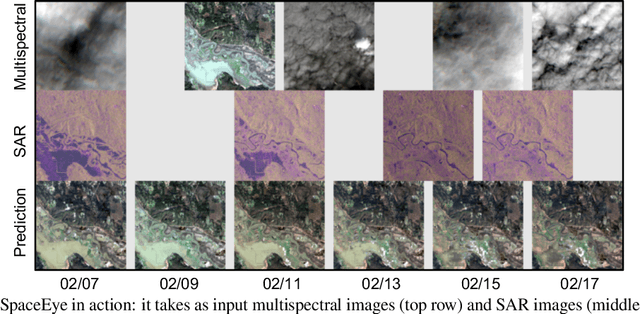

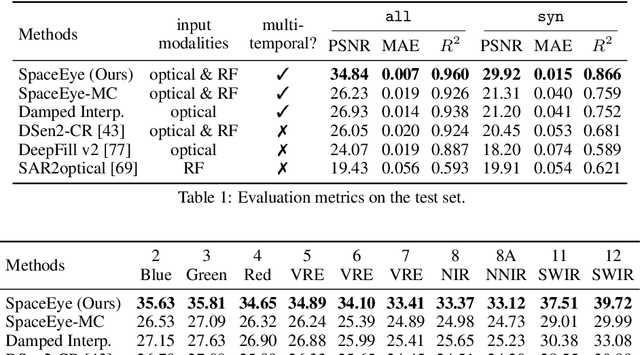

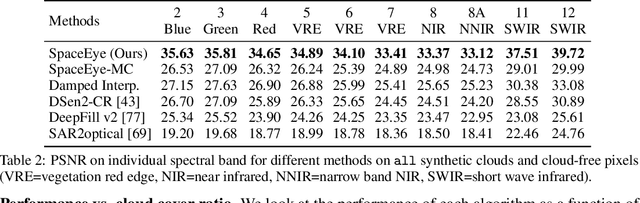

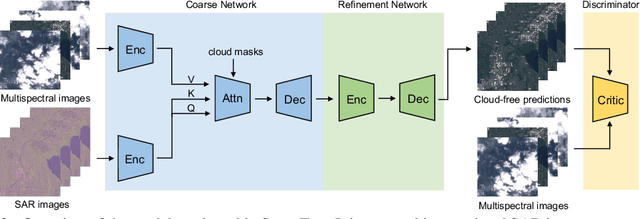

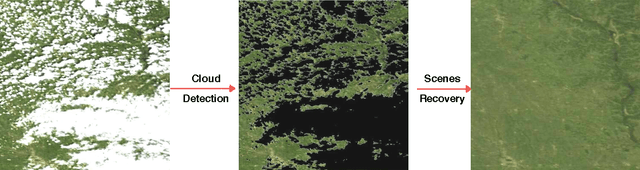

Seeing Through Clouds in Satellite Images

Jun 15, 2021

Abstract: This paper presents a neural-network-based solution to recover pixels occluded by clouds in satellite images. We leverage radio frequency (RF) signals in the ultra/super-high frequency band that penetrate clouds to help reconstruct the occluded regions in multispectral images. We introduce the first multi-modal multi-temporal cloud removal model. Our model uses publicly available satellite observations and produces daily cloud-free images. Experimental results show that our system significantly outperforms baselines by 8 dB in PSNR. We also demonstrate use cases of our system in digital agriculture, flood monitoring, and wildfire detection. We will release the processed dataset to facilitate future research.
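To put the 8 dB figure in context, the following is a minimal sketch of the standard PSNR definition, 10·log10(MAX² / MSE). The function names and the `max_value` parameter are hypothetical and are not taken from the paper; the abstract does not say how pixel values were scaled, so a peak value of 1.0 is assumed here.

```python
import numpy as np


def psnr(reference, estimate, max_value=1.0):
    """Peak signal-to-noise ratio in dB (max_value=1.0 is an assumed scaling)."""
    reference = np.asarray(reference, dtype=np.float64)
    estimate = np.asarray(estimate, dtype=np.float64)
    mse = np.mean((reference - estimate) ** 2)
    if mse == 0.0:
        return float("inf")  # identical images: PSNR is unbounded
    return 10.0 * np.log10(max_value ** 2 / mse)


def mse_ratio_for_gain(gain_db):
    """Factor by which MSE shrinks for a given PSNR gain at a fixed peak value."""
    return 10.0 ** (gain_db / 10.0)
```

Under this definition, and with the peak value held fixed, a gain of 8 dB corresponds to the mean squared error shrinking by a factor of about 6.3 (`mse_ratio_for_gain(8.0)`).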

Crowd Counting with Decomposed Uncertainty

Mar 15, 2019

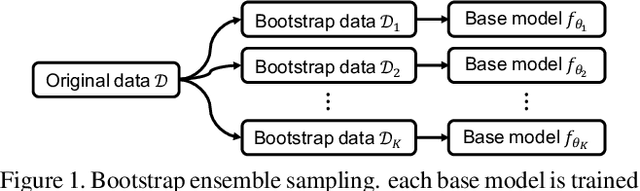

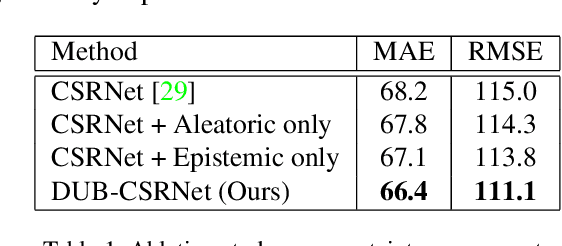

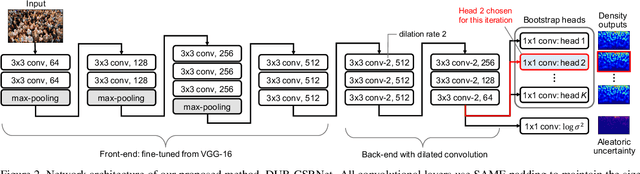

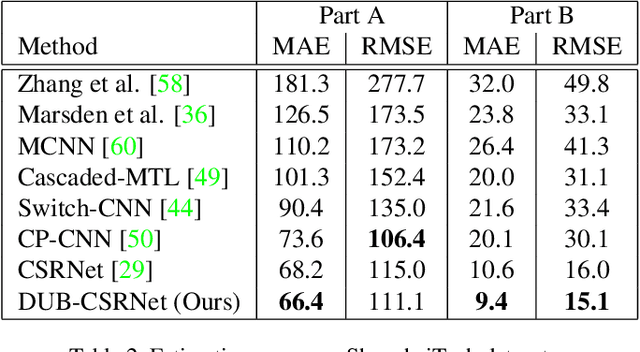

Abstract: Research in neural networks in the field of computer vision has achieved remarkable accuracy for point estimation. However, the uncertainty in the estimation is rarely addressed. Uncertainty quantification accompanied by point estimation can lead to a more informed decision, and even improve the prediction quality. In this work, we focus on uncertainty estimation in the domain of crowd counting. We propose a scalable neural network framework with quantification of decomposed uncertainty using a bootstrap ensemble. We demonstrate that the proposed uncertainty quantification method provides additional insight to the crowd counting problem and is simple to implement. We also show that our proposed method outperforms the current state-of-the-art method on many benchmark datasets. To the best of our knowledge, we have the best system for ShanghaiTech part A and B, UCF CC 50, UCSD, and UCF-QNRF datasets.
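As an illustration of what a decomposed uncertainty from a bootstrap ensemble can look like, here is a minimal sketch. It assumes each ensemble member outputs a predicted count and a predicted variance, and it splits the total variance with the law of total variance. The abstract does not specify the paper's estimator, so the function names and this particular decomposition are assumptions rather than the authors' method.

```python
import numpy as np


def bootstrap_indices(n_samples, n_members, seed=0):
    """Draw one resampled-with-replacement index set per ensemble member."""
    rng = np.random.default_rng(seed)
    return rng.integers(0, n_samples, size=(n_members, n_samples))


def decompose_uncertainty(member_means, member_variances):
    """Split predictive variance into epistemic and aleatoric parts.

    member_means, member_variances: arrays of shape (n_members, ...),
    one predicted count and one predicted variance per ensemble member.
    """
    means = np.asarray(member_means, dtype=np.float64)
    variances = np.asarray(member_variances, dtype=np.float64)
    prediction = means.mean(axis=0)     # ensemble point estimate
    epistemic = means.var(axis=0)       # disagreement between members
    aleatoric = variances.mean(axis=0)  # average predicted data noise
    return prediction, epistemic, aleatoric, epistemic + aleatoric
```

In this sketch, a large epistemic term means the members disagree, which more training data could plausibly reduce, while a large aleatoric term reflects noise that the members themselves predict for the input.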

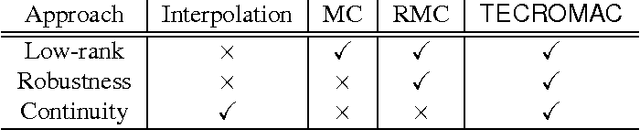

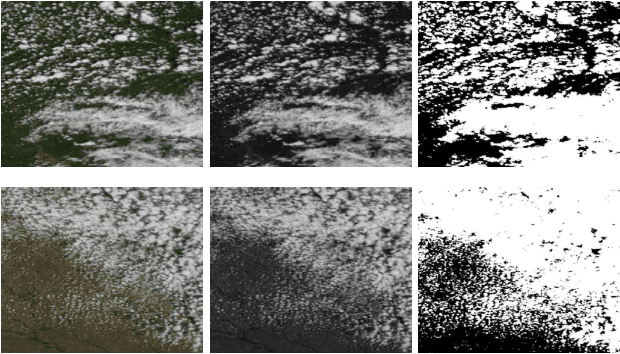

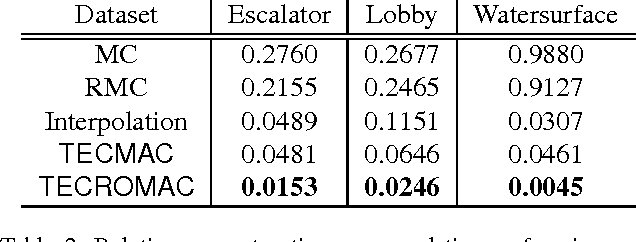

Removing Clouds and Recovering Ground Observations in Satellite Image Sequences via Temporally Contiguous Robust Matrix Completion

Apr 13, 2016

Abstract: We consider the problem of removing and replacing clouds in satellite image sequences, which has a wide range of applications in remote sensing. Our approach first detects and removes the cloud-contaminated part of the image sequences. It then recovers the missing scenes from the clean parts using the proposed "TECROMAC" (TEmporally Contiguous RObust MAtrix Completion) objective. The objective function balances temporal smoothness with a low-rank solution while staying close to the original observations. The matrix whose rows are pixels and whose columns are the days on which the images were taken has low rank because the pixels reflect land types such as vegetation, roads, and lakes, and there are relatively few variations as a result. We provide efficient optimization algorithms for TECROMAC, so we can exploit images containing millions of pixels. Empirical results on real satellite image sequences, as well as simulated data, demonstrate that our approach is able to recover underlying images from heavily cloud-contaminated observations.
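The three ingredients named in the abstract (closeness to the observations, low rank, and temporal smoothness) can be sketched as a single objective over a pixels-by-days matrix. The version below is only an illustration under stated assumptions: it uses a squared error on cloud-free entries, the nuclear norm as a convex stand-in for rank, and squared day-to-day differences for smoothness. The exact TECROMAC objective, including its robust loss, is not given in the abstract, and the function name and weights `lam_rank` and `lam_time` are hypothetical.

```python
import numpy as np


def completion_objective(X, Y, observed, lam_rank=1.0, lam_time=1.0):
    """Evaluate an illustrative matrix-completion objective.

    X: candidate reconstruction, shape (n_pixels, n_days)
    Y: observed image sequence, same shape
    observed: boolean mask, True where a pixel is cloud-free
    """
    X = np.asarray(X, dtype=np.float64)
    Y = np.asarray(Y, dtype=np.float64)
    observed = np.asarray(observed, dtype=bool)
    # Stay close to the original observations on cloud-free entries only.
    fidelity = 0.5 * np.sum((observed * (X - Y)) ** 2)
    # Nuclear norm (sum of singular values) as a surrogate for low rank.
    low_rank = np.linalg.norm(X, ord="nuc")
    # Penalize changes between consecutive days (columns).
    smoothness = 0.5 * np.sum(np.diff(X, axis=1) ** 2)
    return fidelity + lam_rank * low_rank + lam_time * smoothness
```

Entries masked out as cloudy contribute nothing to the fidelity term, so their values in the reconstruction are determined entirely by the low-rank and smoothness terms.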
