Paul Rodriguez

Optimal Hyperparameter $ε$ for Adaptive Stochastic Optimizers through Gradient Histograms

Nov 20, 2023

Abstract: Optimizers are essential components for successfully training deep neural network models. To achieve the best performance from such models, designers need to choose the optimizer hyperparameters carefully. However, this can be a computationally expensive and time-consuming process. Although it is known that all optimizer hyperparameters must be tuned for maximum performance, there is still a lack of clarity regarding the individual influence of lower-priority hyperparameters, including the safeguard factor $\epsilon$ and the momentum factor $\beta$, in leading adaptive optimizers (specifically, those based on the Adam optimizer). In this manuscript, we introduce a new framework based on gradient histograms to analyze and justify important attributes of adaptive optimizers, such as their optimal performance and the relationships and dependencies among hyperparameters. Furthermore, we propose a novel gradient histogram-based algorithm that automatically estimates a reduced and accurate search space for the safeguard hyperparameter $\epsilon$, within which the optimal value can be easily found.
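To make the role of $\epsilon$ concrete, the following is a minimal sketch, not the algorithm proposed in the paper. It uses the standard Adam update, in which $\epsilon$ is added to the square root of the second-moment estimate, and builds a histogram of gradient magnitudes on a log scale. The synthetic gradients and the helper names `adam_update` and `log_magnitude_histogram` are assumptions made purely for illustration.

```python
import numpy as np

def adam_update(m_hat, v_hat, lr=1e-3, eps=1e-8):
    """Standard Adam parameter update from bias-corrected moment estimates."""
    return -lr * m_hat / (np.sqrt(v_hat) + eps)

def log_magnitude_histogram(grads, bins=20):
    """Histogram of log10|g| over the nonzero gradient entries."""
    mags = np.abs(grads[grads != 0])
    counts, edges = np.histogram(np.log10(mags), bins=bins)
    return counts, edges

rng = np.random.default_rng(0)
# Synthetic gradients spanning several orders of magnitude (illustrative assumption).
grads = rng.normal(size=10_000) * 10.0 ** rng.uniform(-10, -2, size=10_000)
counts, edges = log_magnitude_histogram(grads)

# Where |g| is well below eps, eps dominates the denominator and the update
# behaves like a scaled plain gradient step rather than an adaptive one.
for eps in (1e-8, 1e-4):
    frac = np.mean(np.abs(grads) < eps)
    print(f"eps={eps:g}: {frac:.2%} of entries have |g| < eps")
```

Comparing a candidate $\epsilon$ against such a histogram gives a rough sense of how many coordinates it would affect, which is the kind of relationship a histogram-based analysis can expose.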

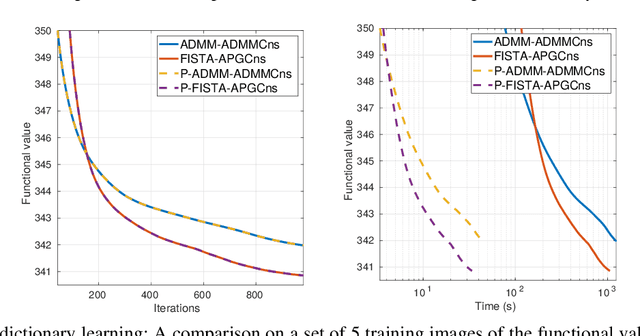

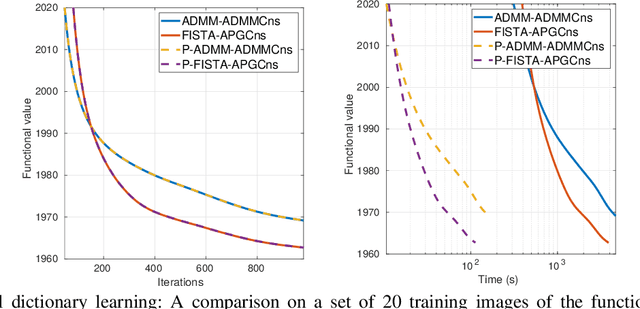

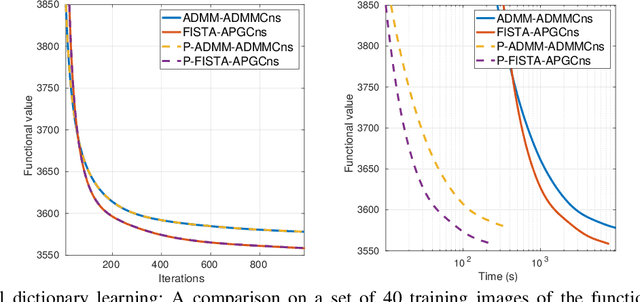

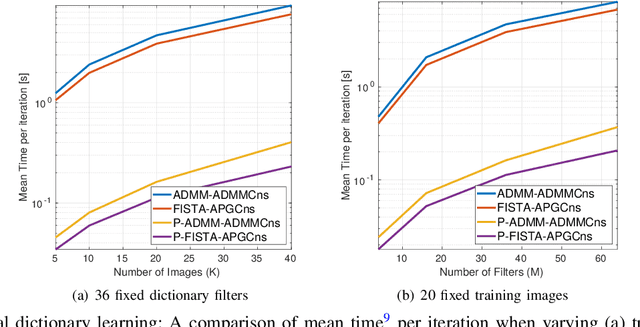

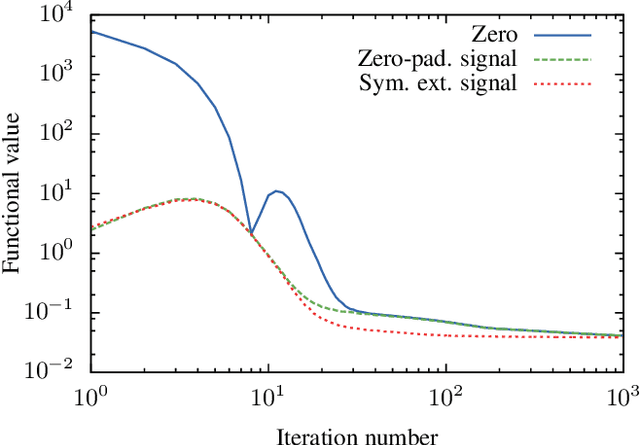

Efficient Consensus Model based on Proximal Gradient Method applied to Convolutional Sparse Problems

Nov 19, 2020

Abstract: Convolutional sparse representation (CSR), a shift-invariant model for inverse problems, has gained much attention in the fields of signal/image processing, machine learning, and computer vision. The most challenging problems in CSR involve the minimization of a composite function of the form $\min_x \sum_i f_i(x) + g(x)$, for which a direct and low-cost solution can be difficult to achieve. However, it has been reported that semi-distributed formulations such as ADMM consensus can provide important computational benefits. In the present work, we derive and present a thorough theoretical analysis of an efficient consensus algorithm based on the proximal gradient (PG) approach. The effectiveness of the proposed algorithm with respect to its ADMM counterpart is primarily assessed on the classic convolutional dictionary learning problem. Furthermore, our consensus method, which is generically structured, can be used to solve other optimization problems in which a sum of convex functions with a regularization term shares a single global variable. As an example, the proposed algorithm is also applied to another convolutional problem, arising in the anomaly detection task.
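As a rough illustration of the composite form $\min_x \sum_i f_i(x) + g(x)$, the sketch below runs a plain proximal gradient iteration. It is not the consensus algorithm analyzed in the paper. It assumes least-squares terms $f_i(x) = \tfrac{1}{2}\|A_i x - b_i\|_2^2$ and an $\ell_1$ regularizer $g(x) = \lambda \|x\|_1$; the random data and all function names are hypothetical. Each $f_i$ contributes its gradient independently, which is the structure that semi-distributed schemes exploit.

```python
import numpy as np

def soft_threshold(x, t):
    """Proximal operator of t * ||x||_1."""
    return np.sign(x) * np.maximum(np.abs(x) - t, 0.0)

def objective(As, bs, lam, x):
    """sum_i 0.5 * ||A_i x - b_i||^2 + lam * ||x||_1"""
    data = sum(0.5 * np.sum((A @ x - b) ** 2) for A, b in zip(As, bs))
    return data + lam * np.sum(np.abs(x))

def proximal_gradient(As, bs, lam, n_iter=500):
    """Proximal gradient (ISTA) on the shared variable x."""
    x = np.zeros(As[0].shape[1])
    # Step size 1/L, with L the Lipschitz constant of the summed gradient.
    L = np.linalg.norm(sum(A.T @ A for A in As), 2)
    for _ in range(n_iter):
        # Each term's gradient can be computed independently, then summed.
        grad = sum(A.T @ (A @ x - b) for A, b in zip(As, bs))
        x = soft_threshold(x - grad / L, lam / L)
    return x

rng = np.random.default_rng(0)
x_true = np.zeros(10)
x_true[[1, 4, 7]] = [1.5, -2.0, 0.8]           # sparse ground truth (illustrative)
As = [rng.normal(size=(20, 10)) for _ in range(3)]
bs = [A @ x_true for A in As]

lam = 0.1
x_hat = proximal_gradient(As, bs, lam)
print("objective at zero:  ", objective(As, bs, lam, np.zeros(10)))
print("objective at x_hat: ", objective(As, bs, lam, x_hat))
```

With step size $1/L$, this iteration does not increase the objective from one step to the next, so comparing the objective at the starting point and at the final iterate is a simple sanity check.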

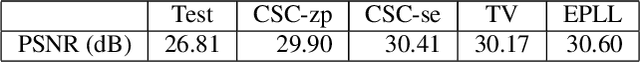

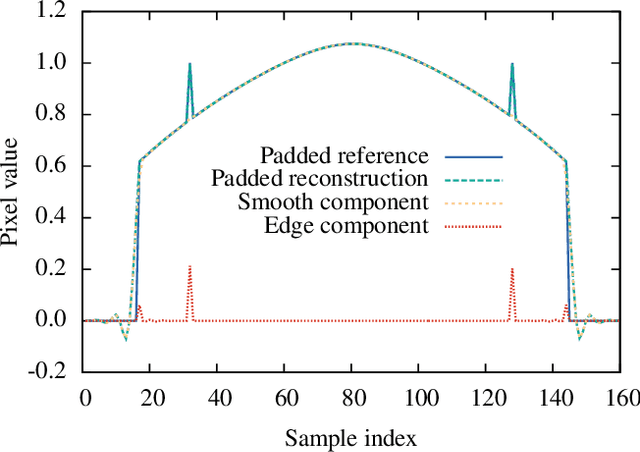

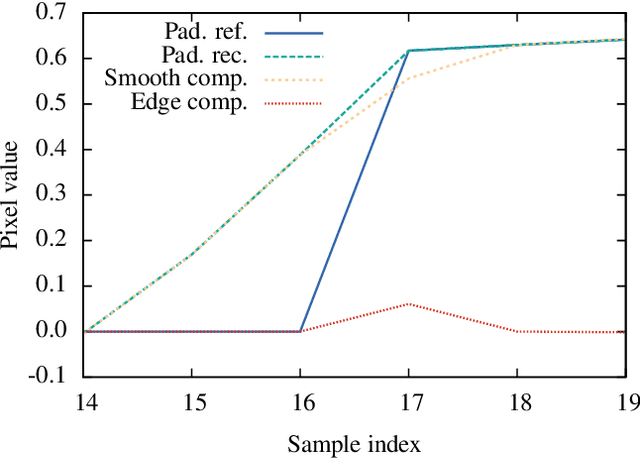

Convolutional Sparse Coding: Boundary Handling Revisited

Jul 20, 2017

Abstract: Two different approaches have recently been proposed for boundary handling in convolutional sparse representations. Both introduce a spatial mask into the convolutional sparse coding problem to avoid the boundary artifacts that can arise from the circular boundary conditions implied by frequency-domain solution methods. In the present paper, we show that, under certain circumstances, these methods fail in their design goal of avoiding boundary artifacts. The reasons for this failure are discussed, a solution is proposed, and the practical implications are illustrated in an image deblurring problem.
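The circular boundary conditions mentioned above can be seen in a small one-dimensional example. This is only a sketch of the general effect and does not reproduce either of the masking methods or the failure case discussed in the paper. The signal `x`, the filter `h`, and the helper `circular_convolve` are made up for illustration. Multiplying DFTs yields a circular convolution, so the first `len(h) - 1` output samples mix in values wrapped around from the end of the signal; zero-padding before the transform removes the wraparound.

```python
import numpy as np

def circular_convolve(x, h):
    """Circular convolution of x with h via the DFT (h is zero-padded to len(x))."""
    n = len(x)
    return np.real(np.fft.ifft(np.fft.fft(x) * np.fft.fft(h, n)))

x = np.arange(1.0, 9.0)            # illustrative signal of length 8
h = np.array([1.0, -1.0, 0.5])     # illustrative filter of length 3

linear = np.convolve(x, h)[:len(x)]   # linear convolution, truncated to len(x)
circ = circular_convolve(x, h)

# Only the first len(h) - 1 samples are affected by wraparound.
print("differs at indices:", np.flatnonzero(~np.isclose(circ, linear)))

# Zero-padding by len(h) - 1 samples makes the circular result match the
# full linear convolution.
padded = circular_convolve(np.concatenate([x, np.zeros(len(h) - 1)]), h)
print("padded matches linear:", np.allclose(padded, np.convolve(x, h)))
```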
