Ou Wei

Accurate and Consistent Graph Model Generation from Text with Large Language Models

Aug 01, 2025

Abstract: Graph model generation from natural language descriptions is an important task with many applications in software engineering. With the rise of large language models (LLMs), there is growing interest in using LLMs for graph model generation. Nevertheless, LLM-based graph model generation typically produces partially correct models that suffer from three main issues: (1) syntax violations: the generated model may not adhere to the syntax defined by its metamodel, (2) constraint inconsistencies: the structure of the model might not conform to some domain-specific constraints, and (3) inaccuracy: due to the inherent uncertainty in LLMs, the models can include inaccurate, hallucinated elements. While the first issue is often addressed through techniques such as constrained decoding or filtering, the latter two remain largely unaddressed. Motivated by recent self-consistency approaches in LLMs, we propose a novel abstraction-concretization framework that enhances the consistency and quality of generated graph models by considering multiple outputs from an LLM. Our approach first constructs a probabilistic partial model that aggregates all candidate outputs and then refines this partial model into the most appropriate concrete model that satisfies all constraints. We evaluate our framework on several popular open-source and closed-source LLMs using diverse datasets for model generation tasks. The results demonstrate that our approach significantly improves both the consistency and quality of the generated graph models.
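The abstraction-concretization idea described in the abstract can be illustrated with a minimal sketch. It is not the paper's algorithm: the edge-set representation, the frequency-based probabilities, the greedy selection, the `threshold` parameter, and the `single_parent` constraint are all assumptions made for illustration.

```python
from collections import Counter


def abstract(candidates):
    """Aggregate candidate edge sets into a map: edge -> empirical frequency."""
    counts = Counter(edge for cand in candidates for edge in set(cand))
    n = len(candidates)
    return {edge: c / n for edge, c in counts.items()}


def concretize(partial, is_consistent, threshold=0.5):
    """Greedily keep likely edges, skipping any that would break the constraint."""
    model = set()
    # Most probable edges first; ties are broken by edge name for determinism.
    for edge, p in sorted(partial.items(), key=lambda kv: (-kv[1], kv[0])):
        if p >= threshold and is_consistent(model | {edge}):
            model.add(edge)
    return model


def single_parent(edges):
    """Hypothetical domain constraint: each node has at most one incoming edge."""
    targets = [dst for _, dst in edges]
    return len(targets) == len(set(targets))


# Three hypothetical LLM outputs for the same description, as (source, target) edges.
candidates = [
    {("Library", "Book"), ("Book", "Author")},
    {("Library", "Book"), ("Member", "Book")},
    {("Library", "Book"), ("Book", "Author"), ("Member", "Book")},
]

partial = abstract(candidates)
model = concretize(partial, single_parent)
print(sorted(model))
```

Here the edge `("Member", "Book")` appears in two of three candidates but is dropped, because keeping it would give `Book` a second incoming edge. A greedy pass like this does not guarantee the best consistent model; it only shows how aggregation and constraint-aware refinement fit together.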

CoAPI: An Efficient Two-Phase Algorithm Using Core-Guided Over-Approximate Cover for Prime Compilation of Non-Clausal Formulae

Jun 07, 2019

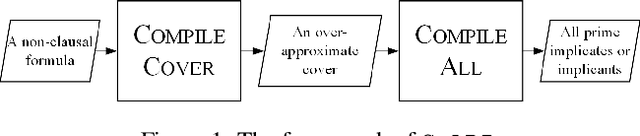

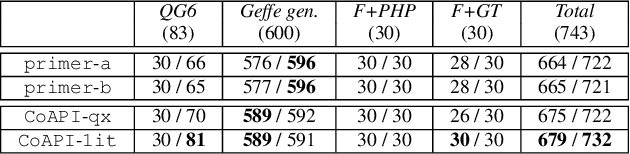

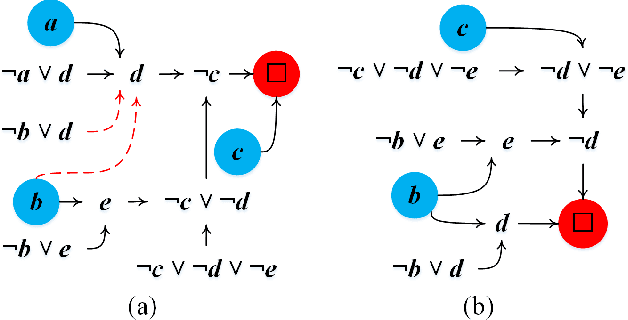

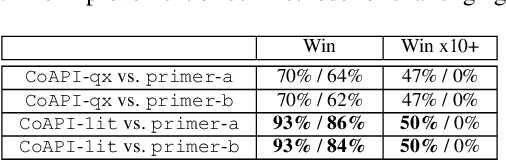

Abstract: Prime compilation, i.e., the generation of all prime implicates or implicants (primes for short) of formulae, is a prominent fundamental issue for AI. Recently, prime compilation for non-clausal formulae has received great attention. The state-of-the-art approaches generate all primes along with a prime cover constructed from prime implicates using dual rail encoding. However, the dual rail encoding potentially expands the search space. In addition, constructing a prime cover, which is necessary for their methods, is time-consuming. To address these issues, we propose a novel two-phase method, CoAPI. The two phases are the key to constructing a cover without using dual rail encoding. Specifically, given a non-clausal formula, in the first phase we propose a core-guided method to rewrite the formula into a cover constructed from over-approximate implicates. Then, in the second phase, we generate all the primes based on the cover. To reduce the size of the cover considerably, we provide a multi-order based shrinking method that offers a good tradeoff between cover size and efficiency. The experimental results show that CoAPI outperforms state-of-the-art approaches. In particular, for generating all prime implicates, CoAPI consumes about one order of magnitude less time.
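To make the notion of a prime implicant concrete, the following brute-force sketch enumerates the prime implicants of a small non-clausal formula. It is not CoAPI: it uses neither a cover nor a core-guided phase, and it scales exponentially in the number of variables. The function names and the example formula are assumptions chosen for illustration.

```python
from itertools import product


def is_implicant(formula, variables, term):
    """A term (partial assignment) is an implicant if every completion satisfies the formula."""
    free = [v for v in variables if v not in term]
    return all(
        formula({**term, **dict(zip(free, values))})
        for values in product([False, True], repeat=len(free))
    )


def prime_implicants(formula, variables):
    """Enumerate all terms; keep implicants from which no single literal can be dropped."""
    primes = []
    for choice in product([None, False, True], repeat=len(variables)):
        term = {v: b for v, b in zip(variables, choice) if b is not None}
        if not is_implicant(formula, variables, term):
            continue
        # Checking single-literal removals suffices: any term lying between an
        # implicant and one of its extensions is itself an implicant.
        if all(
            not is_implicant(formula, variables, {k: b for k, b in term.items() if k != v})
            for v in term
        ):
            primes.append(term)
    return primes


def formula(a):
    """Non-clausal example: (x AND y) OR (NOT x AND z)."""
    return (a["x"] and a["y"]) or (not a["x"] and a["z"])


primes = prime_implicants(formula, ["x", "y", "z"])
for term in primes:
    print(term)
```

For this formula the primes are `x ∧ y`, `¬x ∧ z`, and the consensus term `y ∧ z`. Prime implicates can be obtained dually, as the negations of the prime implicants of the negated formula.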
