Oscar De Silva

Improving the Region of Attraction of a Multi-rotor UAV by Estimating Unknown Disturbances

Aug 30, 2024

Abstract: This study presents a machine learning-aided approach to accurately estimate the region of attraction (ROA) of a multi-rotor unmanned aerial vehicle (UAV) controlled using a linear quadratic regulator (LQR). Conventional ROA estimation approaches rely on a nominal dynamic model for ROA calculation, leading to inaccurate estimates due to unknown dynamics and disturbances associated with the physical system. To address this issue, our study utilizes a neural network to predict these unknown disturbances of a planar quadrotor. The nominal model integrated with the learned disturbances is then employed to calculate the ROA of the planar quadrotor using a graphical technique. The estimated ROA is then compared with the ROA calculated using Lyapunov analysis and with the ROA from the graphical approach without the learned disturbances. The results illustrate that the proposed method provides a more accurate estimation of the ROA, while the conventional Lyapunov-based estimation tends to be more conservative.
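To make the idea of an ROA and of a conservative estimate concrete, here is a minimal sketch on a toy problem. It is not the paper's method: the scalar plant `x' = a*x + u`, the saturated linear feedback `u = -sat(k*x)`, the gains, and all function names are assumptions chosen for illustration, and the ROA is estimated by brute-force simulation over a grid of initial conditions rather than by the graphical technique or a learned disturbance model.

```python
def saturate(u, u_max):
    """Clamp the control input to the actuator limit [-u_max, u_max]."""
    return max(-u_max, min(u_max, u))


def converges(x0, a=1.0, k=2.0, u_max=1.0, dt=0.01, steps=2000, tol=1e-3):
    """Simulate x' = a*x + u with u = -sat(k*x) using forward Euler.

    Returns True if the final state lies within tol of the origin.
    All parameter values are illustrative assumptions.
    """
    x = x0
    for _ in range(steps):
        u = -saturate(k * x, u_max)
        x += dt * (a * x + u)
        if abs(x) > 1e6:  # clearly diverged, stop early
            return False
    return abs(x) < tol


def estimate_roa(grid):
    """Return the initial conditions in grid whose trajectories converge."""
    return [x0 for x0 in grid if converges(x0)]


if __name__ == "__main__":
    grid = [i / 20 for i in range(-30, 31)]  # -1.5 ... 1.5 in steps of 0.05
    roa = estimate_roa(grid)
    print("sampled ROA spans", min(roa), "to", max(roa))
```

For this toy system the exact ROA is `|x| < u_max / a`, whereas an argument that only trusts the unsaturated closed loop is valid for `|x| <= u_max / k`, which is a smaller set when `k > a`. That gap is a simple analogue of the conservativeness the abstract attributes to Lyapunov-based estimates.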

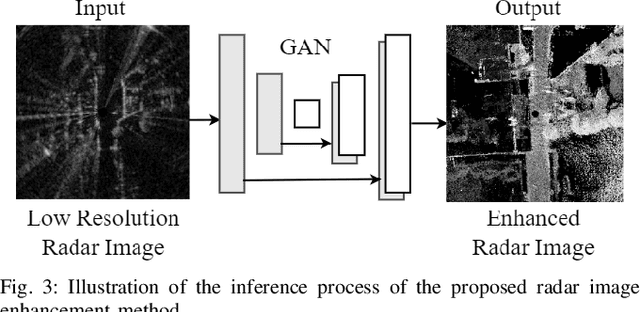

A Generative Adversarial Network-based Method for LiDAR-Assisted Radar Image Enhancement

Aug 30, 2024

Abstract: This paper presents a generative adversarial network (GAN) based approach for radar image enhancement. Although radar sensors remain robust for operations under adverse weather conditions, their application in autonomous vehicles (AVs) is commonly limited by the low-resolution data they produce. The primary goal of this study is to enhance the radar images to better depict the details and features of the environment, thereby facilitating more accurate object identification in AVs. The proposed method utilizes high-resolution, two-dimensional (2D) projected light detection and ranging (LiDAR) point clouds as ground truth images and low-resolution radar images as inputs to train the GAN. The ground truth images were obtained through two main steps. First, a LiDAR point cloud map was generated by accumulating raw LiDAR scans. Then, a customized LiDAR point cloud cropping and projection method was employed to obtain 2D projected LiDAR point clouds. The inference process of the proposed method relies solely on radar images to generate an enhanced version of them. The effectiveness of the proposed method is demonstrated through both qualitative and quantitative results. These results show that the proposed method can generate enhanced images with clearer object representation compared to the input radar images, even under adverse weather conditions.
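The cropping and projection step can be pictured with a generic top-down rasterization. The sketch below is an assumption-laden illustration, not the paper's customized method: the function name `project_to_image`, the crop box, the cell size, and the binary occupancy encoding are all hypothetical choices.

```python
def project_to_image(points, x_range=(0.0, 10.0), y_range=(-5.0, 5.0),
                     z_range=(-1.0, 2.0), resolution=0.5):
    """Crop 3D points to a box and rasterize them into a top-down binary grid.

    points: iterable of (x, y, z) tuples in metres (frame and ranges are
    hypothetical). Returns a list of rows; image[row][col] is 1 if at least
    one point falls inside that cell, else 0.
    """
    cols = int(round((x_range[1] - x_range[0]) / resolution))
    rows = int(round((y_range[1] - y_range[0]) / resolution))
    image = [[0] * cols for _ in range(rows)]
    for x, y, z in points:
        inside = (x_range[0] <= x < x_range[1]
                  and y_range[0] <= y < y_range[1]
                  and z_range[0] <= z < z_range[1])
        if not inside:
            continue  # point lies outside the crop box
        # Map metric coordinates to cell indices; clamp against float round-off.
        col = min(int((x - x_range[0]) / resolution), cols - 1)
        row = min(int((y - y_range[0]) / resolution), rows - 1)
        image[row][col] = 1
    return image


if __name__ == "__main__":
    cloud = [(1.2, 0.3, 0.0), (1.3, 0.4, 0.5), (20.0, 0.0, 0.0), (2.0, 0.0, 5.0)]
    img = project_to_image(cloud)
    print(sum(map(sum, img)), "occupied cell(s) in a",
          len(img), "x", len(img[0]), "image")
```

A real pipeline would more likely store intensity or height per cell and align the grid with the radar image geometry; the binary grid here only shows the crop-then-project structure.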

MUN-FRL: A Visual Inertial LiDAR Dataset for Aerial Autonomous Navigation and Mapping

Oct 12, 2023

Abstract: This paper presents a unique outdoor aerial visual-inertial-LiDAR dataset captured using a multi-sensor payload to promote global navigation satellite system (GNSS)-denied navigation research. The dataset features flight distances ranging from 300 m to 5 km, collected using a DJI M600 hexacopter drone and the National Research Council (NRC) Bell 412 Advanced Systems Research Aircraft (ASRA). The dataset consists of hardware-synchronized monocular images, IMU measurements, 3D LiDAR point clouds, and high-precision real-time kinematic (RTK)-GNSS based ground truth. Ten datasets were collected as ROS bags, comprising over 100 minutes of outdoor footage covering urban areas, highways, hillsides, prairies, and waterfronts. The datasets were collected to facilitate the development of visual-inertial-LiDAR odometry and mapping algorithms, visual-inertial navigation algorithms, and object detection, segmentation, and landing zone detection algorithms based on real-world drone and full-scale helicopter data. All the datasets contain raw sensor measurements, hardware timestamps, and spatio-temporally aligned ground truth. The intrinsic and extrinsic calibrations of the sensors are also provided along with raw calibration datasets. A performance summary of state-of-the-art methods applied to the datasets is also provided.

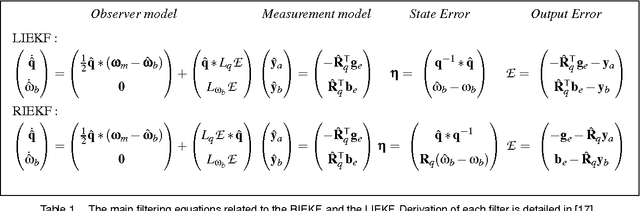

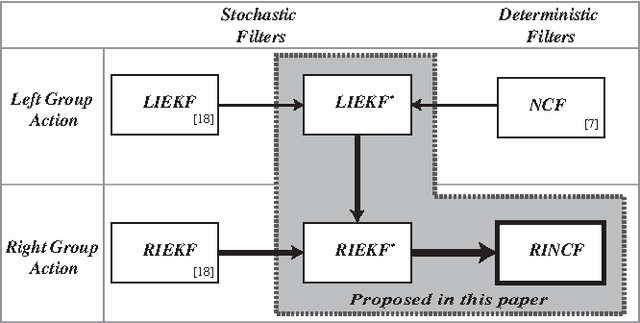

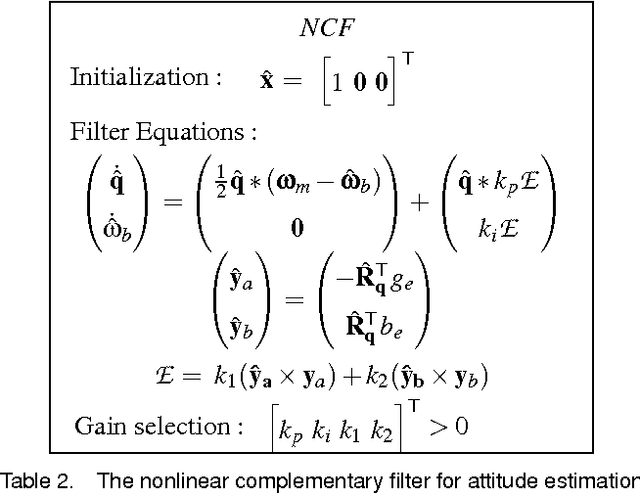

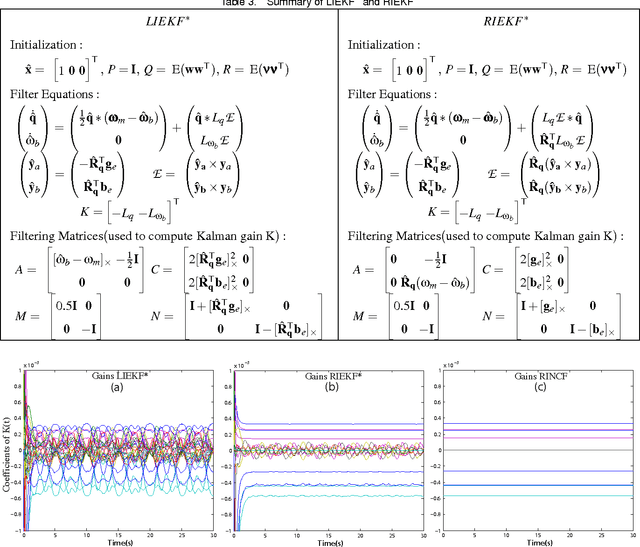

The Right Invariant Nonlinear Complementary Filter for Low Cost Attitude and Heading Estimation of Platforms

Dec 01, 2016

Abstract: This paper presents a novel filter with low computational demand to address the problem of orientation estimation of a robotic platform. This is conventionally addressed by extended Kalman filtering of measurements from a sensor suite which mainly includes accelerometers, gyroscopes, and a digital compass. Low-cost robotic platforms demand simpler and computationally more efficient methods to address this filtering problem. Hence nonlinear observers with constant gains have emerged to assume this role. The nonlinear complementary filter is a popular choice in this domain, as it does not require covariance matrix propagation and the associated computational overhead in its filtering algorithm. However, the gain tuning procedure of the complementary filter is not optimal: the gains are often hand-picked by trial and error. This process runs counter to the system-noise-based tuning capability offered by a stochastic filter like the Kalman filter. This paper proposes the right invariant formulation of the complementary filter, which preserves Kalman-like system-noise-based gain tuning capability for the filter. The resulting filter exhibits efficient operation on elementary embedded hardware, intuitive system-noise-based gain tuning capability, and accurate attitude estimation. The performance of the filter is validated using numerical simulations and by experimentally implementing the filter on an ARDrone 2.0 micro aerial vehicle platform.
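For readers unfamiliar with the baseline being improved, the following is a minimal sketch of a classic single-axis, fixed-gain complementary filter. It is not the right invariant formulation proposed in the paper; the function name, the blending gain `alpha`, and the simulated signals are assumptions for illustration. The hand-chosen `alpha` is exactly the kind of trial-and-error gain the abstract refers to.

```python
def complementary_filter(gyro_rates, accel_angles, dt, alpha=0.98, angle0=0.0):
    """Fuse gyro rates (rad/s) and accelerometer-derived angles (rad).

    Each step integrates the gyro (smooth but drifting) and pulls the result
    towards the accelerometer angle (noisy but drift-free) with weight
    1 - alpha. No covariance matrix is propagated.
    """
    angle = angle0
    estimates = []
    for rate, acc_angle in zip(gyro_rates, accel_angles):
        predicted = angle + rate * dt  # gyro integration
        angle = alpha * predicted + (1.0 - alpha) * acc_angle  # blend
        estimates.append(angle)
    return estimates


if __name__ == "__main__":
    n, dt = 1000, 0.01
    true_angle = 0.1             # platform held still at 0.1 rad (assumed)
    gyro = [0.01] * n            # stationary gyro with a constant bias (assumed)
    accel = [true_angle] * n     # idealized noise-free accelerometer angle
    est = complementary_filter(gyro, accel, dt)
    print("final estimate (rad):", est[-1])
```

With a constant gyro bias the estimate settles close to the accelerometer angle instead of drifting without bound, at the cost of a small steady-state offset that depends on `alpha`.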
